designing-real-world-ai-agents-workshop

Health Pass

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 22 GitHub stars

Code Pass

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions Pass

- Permissions — No dangerous permissions requested

This project is an educational workshop that teaches developers how to build multi-agent AI systems. It provides two MCP servers: one for conducting deep web research using Gemini, and another for generating and reviewing LinkedIn posts.

Security Assessment

Overall Risk: Low. The codebase was scanned for dangerous patterns, hardcoded secrets, and risky permissions, with none found. As an AI agent workflow, the tool inherently makes network requests to external APIs (such as Google Gemini and YouTube) to gather data and generate content. However, it does not appear to execute arbitrary shell commands or access local sensitive data without explicit user direction. Users should still be mindful of configuring their own API keys securely via environment variables rather than hardcoding them.

Quality Assessment

The project is in excellent health. It is licensed under the standard and permissive MIT license, making it fully open source and legally safe to use. The repository is highly active, with its latest changes pushed just today. While the community is relatively small (22 GitHub stars), this is expected for a specialized, hands-on workshop. The included documentation and README are exceptionally clear, detailing the exact architecture, workflows, and agentic patterns covered.

Verdict

Safe to use — a well-documented educational resource with no detected security risks.

Hands-on workshop: Build a multi-agent AI system from scratch — Deep Research Agent + Writing Workflow served as MCP servers. Includes code, slides, and video (coming soon)

Designing Real-World AI Agents Workshop

A hands-on workshop building a multi-agent AI system with two MCP servers: a Deep Research Agent and a LinkedIn Writing Workflow. Both connect to a harness such as Claude Code or Cursor.

Built as a lightweight companion to the Agentic AI Engineering Course, which covers 34 lessons and three end-to-end portfolio projects. This workshop distills the core agentic patterns into a ~2-hour hands-on build.

Link to the slides here.

What You'll Build

Deep Research Agent — An MCP server that runs deep research using Gemini with Google Search grounding and native YouTube video analysis:

user topic → [deep_research] × N → analyze_youtube_video (if URLs) → [deep_research gap-fill] → compile_research → research.md

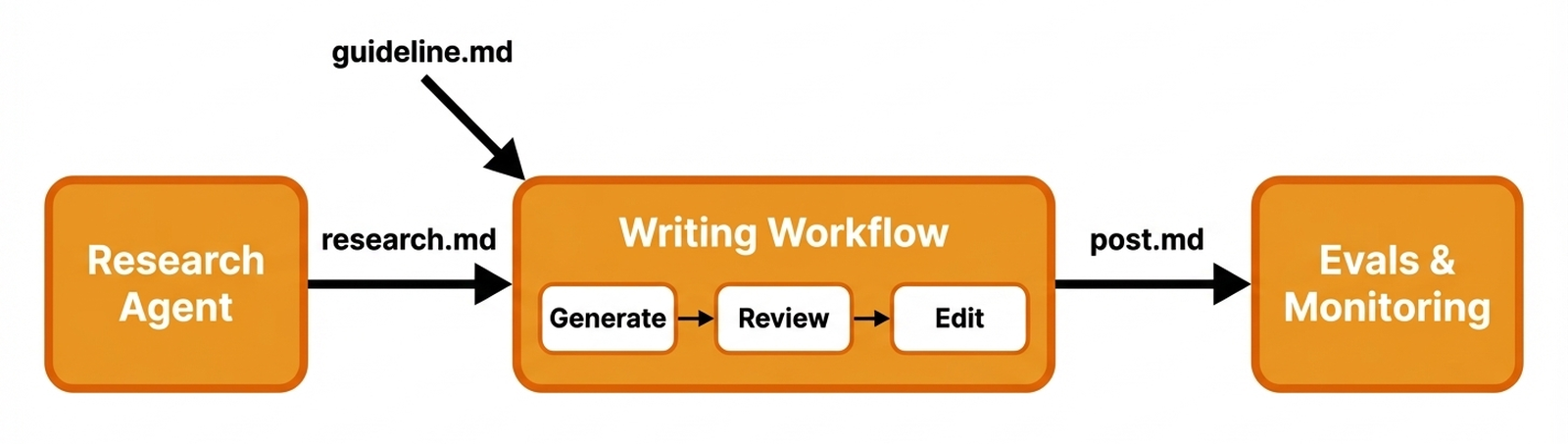
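The flow above can be sketched as plain Python. This is a minimal illustration under stated assumptions, not the workshop's implementation: in the real system the harness LLM decides which tool to call next, whereas here the order is hard-coded. The tool callables are passed in as stubs, and `find_gaps`, `run_research`, and the local `compile_research` helper are hypothetical names introduced only for this example.

```python
import re
from typing import Callable

# Assumption: video references are detected by a simple URL pattern.
YOUTUBE_URL = re.compile(
    r"https?://(?:www\.)?(?:youtube\.com/watch\?v=|youtu\.be/)[\w-]+"
)


def compile_research(topic: str, notes: list[str]) -> str:
    """Join all collected notes into a single markdown document."""
    sections = "\n\n".join(
        f"## Source {i}\n\n{note}" for i, note in enumerate(notes, 1)
    )
    return f"# Research: {topic}\n\n{sections}\n"


def run_research(
    topic: str,
    queries: list[str],
    deep_research: Callable[[str], str],
    analyze_youtube_video: Callable[[str], str],
    find_gaps: Callable[[list[str]], list[str]],
) -> str:
    """Search, analyze linked videos, gap-fill, then compile."""
    # 1. One grounded search per query (deep_research x N).
    notes = [deep_research(query) for query in queries]
    # 2. Video analysis runs only when the topic contains YouTube URLs.
    for url in YOUTUBE_URL.findall(topic):
        notes.append(analyze_youtube_video(url))
    # 3. Gap-fill: follow-up searches for whatever is still missing.
    for follow_up in find_gaps(notes):
        notes.append(deep_research(follow_up))
    # 4. Compile everything into the final research document.
    return compile_research(topic, notes)
```

Passing the tools in as callables keeps the sketch testable without network access; swapping in real Gemini-backed functions would not change the control flow.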

LinkedIn Writing Workflow — An MCP server that generates LinkedIn posts with an evaluator-optimizer loop:

research.md + guideline → generate post → [review → edit] × N → post.md → generate image
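The evaluator-optimizer loop in this flow can be sketched as follows. It is a minimal illustration with stubbed model calls, not the workshop's code: the `Review` shape, the `write_post` name, and the early exit on approval are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Review:
    approved: bool   # assumption: the reviewer can signal "good enough"
    feedback: str    # instructions passed to the editor


def write_post(
    research: str,
    guideline: str,
    generate: Callable[[str, str], str],
    review: Callable[[str, str], Review],
    edit: Callable[[str, str], str],
    max_rounds: int = 3,
) -> list[str]:
    """Return every draft version: v0 first, final version last."""
    versions = [generate(research, guideline)]
    for _ in range(max_rounds):
        verdict = review(versions[-1], guideline)
        if verdict.approved:
            break  # stop early once the reviewer is satisfied
        versions.append(edit(versions[-1], verdict.feedback))
    return versions
```

Keeping every version rather than only the last one makes it easy to compare v0 against the final draft, which is how the example later in this README is presented.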

Both servers expose tools, resources, and prompts via the Model Context Protocol, letting any MCP-compatible harness orchestrate the workflow.

Patterns and concepts you'll learn:

- Tool-use agents — letting the LLM decide which tools to call and when

- Evaluator-optimizer loop — generate, review, edit in cycles

- Grounded search — Gemini with Google Search grounding for factual research

- Structured LLM output — Pydantic schemas for type-safe model responses

- MCP server design — registering tools, resources, and prompts with FastMCP

- LLM-as-judge evaluation — automated quality scoring with Opik
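As an illustration of the structured-output pattern in the list above, the sketch below validates a JSON model response against a typed schema. The workshop uses Pydantic for this; the example deliberately swaps in standard-library dataclasses so it runs without dependencies. The `ReviewItem` fields and the 1 to 5 score scale are hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewItem:
    criterion: str
    score: int      # assumption: 1 (poor) to 5 (excellent)
    comment: str

    def __post_init__(self) -> None:
        # Reject values the schema does not allow instead of passing them on.
        if not isinstance(self.score, int) or not 1 <= self.score <= 5:
            raise ValueError(f"score must be an integer in 1..5, got {self.score!r}")


def parse_review(raw_json: str) -> list[ReviewItem]:
    """Parse a model response; raise if it does not match the schema."""
    payload = json.loads(raw_json)
    # Missing or unexpected keys raise TypeError from the dataclass constructor.
    return [ReviewItem(**item) for item in payload["items"]]
```

Failing loudly at the parsing boundary means downstream code can rely on the types instead of re-checking every field.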

Example: End-to-End Workflow

Here's a real run through the full pipeline, from a topic seed to a publish-ready LinkedIn post with an AI-generated image.

Final output

Phil Tobaloo

AI Engineer | I ship AI products and teach you about the process.

We planned 12 AI agents and shipped 1. It worked better.

A client built an AI marketing chatbot. Their initial design had dozens of agents: orchestrator, validators, spam prevention. It failed.

A single agent with tools won. Tasks were tightly coupled. One brain maintained context. Tools were still specialized.

This is the core mistake. People jump to complex multi-agent setups too fast.

Think AI system design as a spectrum:

...

A single agent works for most cases. But it has limits.

So, when do you actually need multi-agent?

...

The simplest system that reliably solves the problem is always the best system.

What's the most complex agent architecture you've simplified? Tell me below.

1. Start with a seed

A short research brief with 2-3 questions and reference links:

# Research Topic: AI Agent Architecture — When Less Is More

## Key Questions

1. Why do single-agent architectures with smart tools outperform multi-agent systems?

2. What are the only legitimate reasons to adopt a multi-agent architecture?

## References

- Stop Overengineering: Workflows vs AI Agents Explained (YouTube)

- From 12 Agents to 1 (DecodingAI article)

2. Deep Research Agent produces research.md

The agent runs multiple Gemini-grounded search queries and analyzes YouTube videos, then compiles everything into a structured research brief with sources.

The full research.md for this example is ~20k tokens across 2 queries and 1 video transcript.

3. Write a guideline

A short brief describing the post angle, audience, and key points:

# LinkedIn Post Guideline

## Topic

Why most AI teams should use 1 agent instead of 12.

## Angle

Open with the counterintuitive "12 agents → 1" hook. Introduce the complexity

spectrum. End with a clear mental model.

## Target Audience

AI engineers and technical leads building LLM-powered applications.

## Key Points

- A team planned 12 agents but shipped 1 — it worked better.

- The spectrum: workflows → single agent + tools → multi-agent. Stay left.

- "Context rot": past ~10-20 tools, LLMs degrade at tool selection.

- Only 4 valid reasons for multi-agent.

## Tone

Direct, opinionated, engineer-to-engineer. No fluff.

4. Writing Workflow refines the post

The evaluator-optimizer loop generates a draft, then runs 3 rounds of review + edit:

| v0: Initial draft | v3: After 3 review/edit cycles |
|---|---|
| Verbose, redundant phrasing, weak hook | Tighter, punchier, stronger structure |

Harness engineering isn't just a new term for prompt engineering. It's where AI is heading.

Agents got useful enough for code and tools, but they weren't reliable. They'd repeat mistakes. The bottleneck shifted from code generation to consistent, reliable behavior in real systems.

Think of it this way: prompt engineering is what to ask. Context engineering is what to send the model. Harness engineering is how the whole thing operates. It's the environment around the model, beyond just tokens.

Car analogy: the model is the engine. Context is the fuel. The harness is the rest of the car: steering, brakes, lane boundaries. It prevents crashes.

A harness includes tools, permissions, state, tests, logs, retries, checkpoints, guardrails, and evals.

Stop hoping the model improves. Engineer its environment. The burden shifts to us, the builders, to prevent repeat mistakes.

I use self-reflection in my Claude Code setup. The agent learns what I liked, saving tokens and time.

Real companies are already doing this. Anthropic's long-running agents externalize memory into artifacts. OpenAI built a 1M-line product with zero manual code using structured docs and agent-to-agent reviews. Stripe agents merge 1K+ PRs weekly within isolated environments. LangChain moved a coding agent from outside the top 30 to top 5 on Terminal Bench 2.0 by changing only the harness. Same model, better system.

This isn't just for coding agents. This is the new way software gets built.

The programmer's job is shifting: less writing code, more designing habitats for agents to work without issues. Think machine-readable docs, evals, sandboxes, permission boundaries, and structural tests.

Reliability is the real work. Not just prompting.

LLMs are heading into systems, workflows, harnesses. Value comes from orchestration, constraints, feedback loops—not just a single prompt. The future isn't one genius model. It's models in well-engineered environments.

That's why harness engineering matters. It's what happens when you stop demoing intelligence and start shipping it.

Want to learn more? I explain it all in my latest video: https://youtu.be/zYerCzIexCg What's your biggest challenge building reliable agent systems right now?

Forget your latest AI model. There's a new system breaking the internet: Angine de Poitrine.

This masked duo from Quebec, deploys a high-resolution audio architecture. It makes everything else sound low-res.

Khn's custom double-necked microtonal guitar features 2x resolution: 24 notes per octave, not 12. Fine-grained frequency modulation.

Klek backs him up on drums, driving rhythms that feel like O(n^2) time signatures. Pure algorithmic complexity.

Their sound: "Dada Pythagorean-Cubist mantra-rock." Eastern traditions meet Frank Zappa.

Their 27-minute inference run for KEXP in Feb 2026 hit 7M+ views and broke the internet. Sold-out shows in NYC, London, Rennes followed.

Their anonymity adds another layer. Polka-dot costumes and papier-mâché masks are anonymous inference endpoints.

They communicate in an invented language. This ensures pure signal: raw output, no artist biases. A decoupled identity art experiment.

Khn's loop pedals are recursive pipelines. They stack complex guitar and bass in real-time, building dense soundscapes.

Just dropped: their new album, Vol. II, on April 3, 2026.

AI music is common. Angine de Poitrine proves human artistry is the ultimate non-deterministic function. Raw, complex, and deeply human.

You need to hear this.

What's the most complex system you've encountered recently?

Browse more full examples (seed, research, post drafts, reviews, final post + image) in the examples/ directory.

Tech Stack

| Component | Tool |

|---|---|

| LLM API | Google Gemini (via google-genai SDK) |

| MCP Framework | FastMCP |

| Data Validation | Pydantic |

| Settings | Pydantic Settings |

| Observability | Opik |

| Image Generation | Gemini Flash Image |

| QA | Ruff |

| Package Manager | uv |

Getting Started

Assumes working Python knowledge and basic familiarity with LLMs.

| Section | Estimated Time |

|---|---|

| Setup | ~15 min |

| Deep Research Agent | ~40 min |

| LinkedIn Writing Workflow | ~40 min |

| Evaluation & Polish | ~25 min |

Prerequisites

| Requirement | Check | Install |

|---|---|---|

| Python 3.12+ | python --version | uv python install 3.12 or python.org |

| uv 0.7+ | uv --version | curl -LsSf https://astral.sh/uv/install.sh \| sh (docs) |

| GNU Make | make --version | Pre-installed on macOS/Linux. Windows: choco install make |

| Google API Key | — | aistudio.google.com/apikey (required — all LLM calls use Gemini) |

| Opik account | — | comet.com/site/products/opik (optional, for observability and evals) |

Installation

Clone and configure:

git clone https://github.com/iusztinpaul/designing-real-world-ai-agents-workshop.git

cd designing-real-world-ai-agents-workshop

cp .env.example .env # add your GOOGLE_API_KEY (+ optional OPIK_API_KEY, OPIK_WORKSPACE)

Install dependencies:

uv sync

Note: If you don't have Python 3.12+, uv can install it for you: uv python install 3.12, then re-run uv sync.

Verify the setup:

make test-end-to-end # runs research + writing pipeline end-to-end

If it completes without errors, you're good to go.
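Before running the verification step, it can help to confirm that .env defines the required key. The sketch below is a hypothetical helper, not part of the repository. It assumes .env uses plain KEY=VALUE lines; the variable names come from the installation step above.

```python
REQUIRED = ("GOOGLE_API_KEY",)
OPTIONAL = ("OPIK_API_KEY", "OPIK_WORKSPACE")


def load_dotenv_text(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blanks and # comments."""
    values: dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip("'\"")
    return values


def check_env(env: dict[str, str]) -> tuple[list[str], list[str]]:
    """Return (missing required names, missing optional names)."""
    missing_required = [k for k in REQUIRED if not env.get(k, "").strip()]
    missing_optional = [k for k in OPTIONAL if not env.get(k, "").strip()]
    return missing_required, missing_optional
```

A typical use would be `check_env(load_dotenv_text(Path(".env").read_text()))` with `pathlib.Path`: a non-empty first list means the Gemini calls cannot work, while a non-empty second list only disables Opik observability and evals.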

Running the Code

There are three ways to run the workflows:

| Mode | Best for |

|---|---|

| MCP Servers (recommended) | Interactive use with AI harness |

| Skills | Guided slash-command workflows |

| Scripts | Verify setup, smoke tests |

MCP Servers (recommended)

Connect the servers to an MCP-compatible harness (Claude Code, Cursor) for interactive use. This is the primary way to use the workshop.

Setup: The .mcp.json file is pre-configured. Both servers start automatically when you open the project in Claude Code or Cursor.

| Server | Tools | Prompt |
|---|---|---|
| deep-research | deep_research, analyze_youtube_video, compile_research | research_workflow |
| linkedin-writer | generate_post, edit_post, generate_image | linkedin_post_workflow |

Usage:

- Open the project in Claude Code or Cursor

- Invoke an MCP prompt (e.g., research_workflow) to get guided through the full workflow

- Or call individual tools directly for fine-grained control

Manual server start (advanced):

make run-research-server # stdio transport

make run-writing-server # stdio transport
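With the stdio transport, a harness talks to the server by writing newline-delimited JSON-RPC 2.0 messages to its standard input. The sketch below builds a tools/call request of that shape. It is illustrative only: a real client must complete the MCP initialize handshake first, and the `query` argument name is a hypothetical placeholder, since the actual tool parameters are defined by the server.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def tool_call_message(tool: str, arguments: dict) -> str:
    """Serialize one MCP tools/call request as a single line of JSON."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    # json.dumps escapes newlines inside strings, so the message stays on
    # one line, as the stdio framing requires.
    return json.dumps(request) + "\n"


if __name__ == "__main__":
    print(tool_call_message("deep_research", {"query": "single vs multi-agent"}), end="")
```

In practice the harness (Claude Code, Cursor) handles this framing for you; the sketch is mainly useful for understanding what travels over the pipe when debugging a server.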

Skills

Pre-built slash commands that orchestrate the MCP tools with sensible defaults. All output goes to outputs/{topic-slug}/.

| Command | What it does |
|---|---|
| /research | Deep research on a topic → research.md |
| /write-post | Generate LinkedIn post from existing research → post.md + post_image.png |
| /research-and-write | Full pipeline: research a topic, then write a post from it |

Example:

/research-and-write

The skill will ask you for a topic and guideline, then run the full pipeline end-to-end. Check examples/ to see what each step produces.
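The outputs/{topic-slug}/ convention mentioned for the skills can be illustrated with a small slug helper. This is a sketch of one plausible slugging rule, not the rule the skills actually apply; `topic_slug` and `output_dir` are hypothetical names.

```python
import re
from pathlib import Path


def topic_slug(topic: str) -> str:
    """Lowercase the topic and collapse non-alphanumeric runs into hyphens."""
    slug = re.sub(r"[^a-z0-9]+", "-", topic.lower()).strip("-")
    return slug or "untitled"  # fallback for topics with no usable characters


def output_dir(topic: str, root: str = "outputs") -> Path:
    """Directory where a run for this topic would store its files."""
    return Path(root) / topic_slug(topic)


if __name__ == "__main__":
    print(output_dir("AI Agent Architecture: When Less Is More"))
```

Under this rule the topic "AI Agent Architecture: When Less Is More" maps to the slug ai-agent-architecture-when-less-is-more.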

Scripts

Run workflows directly from the terminal via make. Useful for verifying that your setup works and for running quick smoke tests. See [examples/](examples/) for full end-to-end output samples.

Test workflows:

make test-research-workflow # Research on a sample topic → test_logic/research.md

make test-writing-workflow # Generate post from research → test_logic/post.md

make test-end-to-end # Both steps sequentially

Note: test-writing-workflow requires test_logic/research.md to exist. Run test-research-workflow first, or use test-end-to-end.

Full dataset run:

The [datasets/](datasets/) directory contains a pre-built LinkedIn posts dataset with seeds, guidelines, research documents, ground truth posts, and generated outputs — used for both batch runs and evaluation.

make run-dataset-writing # Research + write for all dataset posts (with images)

make run-dataset-writing-no-image # Same, skip image generation (faster)

Evaluation (requires Opik)

The workshop includes an LLM-as-judge evaluation pipeline. Instead of manually reviewing each generated post, an LLM scores them against quality criteria (structure, tone, accuracy). Opik tracks these scores across runs so you can measure whether prompt or pipeline changes actually improve output quality.

make eval-dev # LLM judge on dev split

make eval-test # LLM judge on test split

make eval-online # Generate + judge posts on the fly

Each command automatically uploads the dataset to Opik before running. To upload without evaluating (e.g., to browse in the Opik UI), use make upload-eval-dataset.
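The LLM-as-judge idea described in this section can be sketched without any external service. The example below is a simplified stand-in, not the workshop's Opik-based pipeline: the judge is an injected callable that would wrap an LLM call in practice, and the 0 to 1 score range and the unweighted mean are assumptions. The criteria names come from the description above.

```python
from statistics import mean
from typing import Callable

CRITERIA = ("structure", "tone", "accuracy")

# A judge takes (post, criterion) and returns a score in [0, 1].
Judge = Callable[[str, str], float]


def judge_post(post: str, judge: Judge) -> dict[str, float]:
    """Score one post on each criterion and add an overall mean."""
    scores = {criterion: judge(post, criterion) for criterion in CRITERIA}
    for criterion, score in scores.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{criterion} score out of range: {score}")
    scores["overall"] = mean(scores.values())
    return scores


def evaluate(posts: list[str], judge: Judge) -> float:
    """Average overall score across a dataset split."""
    return mean(judge_post(post, judge)["overall"] for post in posts)
```

Tracking the value returned by `evaluate` across runs is the part an observability tool takes over: it lets you see whether a prompt or pipeline change moved the average up or down.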

Project Structure

├── src/
│   ├── research/          # Deep Research Agent MCP server
│   │   ├── server.py      # FastMCP entry point
│   │   ├── config/        # Settings, constants, prompt templates
│   │   ├── models/        # Pydantic schemas for structured LLM output
│   │   ├── app/           # Business logic handlers
│   │   ├── tools/         # MCP tool implementations
│   │   ├── routers/       # MCP tool, resource, and prompt registration
│   │   └── utils/         # Gemini client, file I/O, Opik, markdown helpers
│   └── writing/           # LinkedIn Writer MCP server
│       ├── server.py      # FastMCP entry point
│       ├── profiles/      # Shipped markdown profiles (structure, terminology, character, branding)
│       ├── config/        # Settings, constants, prompt templates
│       ├── models/        # Pydantic schemas (Post, Review, Profiles)
│       ├── app/           # Business logic handlers
│       ├── evals/         # LLM judge metric, dataset upload, evaluation harness
│       ├── tools/         # MCP tool implementations
│       ├── routers/       # MCP tool, resource, and prompt registration
│       └── utils/         # Gemini client, Imagen, Opik helpers
├── datasets/              # LinkedIn posts dataset with labels and splits
├── examples/              # Full end-to-end output samples (seed → research → posts → image)
├── scripts/               # Entrypoints and test scripts
├── .mcp.json              # MCP server configuration for harnesses
├── Makefile               # Command center
└── .env.example           # Environment variable template

Next Steps

| Resource | Description |

|---|---|

| Agentic AI Engineering Course | Our full course. 34 lessons. Three end-to-end portfolio projects. A certificate. And a Discord community. |

| Agentic AI Engineering Guide | Free 6-day email course on the mistakes that silently break AI agents in production. |

| AI Engineering Cheatsheets | Quick-reference sheets for agents, RAG, fine-tuning, and more. Ready to be plugged into Claude Code as context. |

Contributors

| Louis-François Bouchard, AI Engineer | Paul Iusztin, AI Engineer | Samridhi Vaid, AI Engineer |

License

MIT License. See LICENSE for details.

Copyright (c) 2026 Paul Iusztin, Towards AI Inc
