starling

Health Pass

- License — License: Apache-2.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 11 GitHub stars

Code Fail

- eval() — Dynamic code execution via eval() in inspect/ui/static/htmx.min.js

- new Function() — Dynamic code execution via Function constructor in inspect/ui/static/htmx.min.js

- exec() — Shell command execution in inspect/ui/static/htmx.min.js

- network request — Outbound network request in inspect/ui/static/replay.js

- rm -rf — Recursive force deletion command in scripts/smoke.sh

Permissions Pass

- Permissions — No dangerous permissions requested


event-sourced adk for go

Event-sourced agent runtime for Go.

Replayable runs · Tamper-evident logs · Provider-neutral tools · Production debugging

Every run is an event log.

That's the whole pitch. Starling treats the agent loop as a stream of

typed, append-only events - every prompt, model chunk, tool call,

budget decision, and terminal state - committed to a BLAKE3 hash chain

with a Merkle root over the whole run. The log is the source of truth; RunResult is just a convenience derived from it.

That single decision is what gives you everything else:

- Replay is reading the log back through the same agent wiring and
  byte-comparing each re-emitted event. Divergence is a structured
  error pointing at the first event that didn't reproduce.
- Resume is appending to a chain that didn't reach a terminal
  event. The hash chain enforces "nothing was lost in the gap."
- Audit is the Merkle root on the terminal event committing to
  every leaf - tampering with any earlier event invalidates the
  commitment.
- Cost control, observability, the inspector, replay tests -
  all of them are projections of the same event stream.
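The hash-chain idea behind these properties can be sketched in a few lines. This is only an illustration, not Starling's implementation: the `Event` struct, `appendEvent`, and `verifyChain` are hypothetical names, and SHA-256 stands in for BLAKE3 because the Go standard library does not ship BLAKE3.

```go
package main

import (
	"crypto/sha256"
	"encoding/binary"
	"fmt"
)

// Event is a hypothetical, simplified record. Real Starling events are typed.
type Event struct {
	Seq      uint64
	PrevHash [32]byte
	Payload  []byte
}

// hashEvent commits to the sequence number, the previous hash, and the payload.
// SHA-256 is an assumption here; it stands in for BLAKE3.
func hashEvent(e Event) [32]byte {
	var seq [8]byte
	binary.BigEndian.PutUint64(seq[:], e.Seq)
	h := sha256.New()
	h.Write(seq[:])
	h.Write(e.PrevHash[:])
	h.Write(e.Payload)
	var out [32]byte
	copy(out[:], h.Sum(nil))
	return out
}

// appendEvent links a new payload to the current tail of the chain.
func appendEvent(chain []Event, payload []byte) []Event {
	e := Event{Seq: uint64(len(chain)), Payload: payload}
	if n := len(chain); n > 0 {
		e.PrevHash = hashEvent(chain[n-1])
	}
	return append(chain, e)
}

// verifyChain returns the index of the first event whose link to its
// predecessor is broken, or -1 when every link checks out.
func verifyChain(chain []Event) int {
	var prev [32]byte
	for i, e := range chain {
		if e.Seq != uint64(i) || e.PrevHash != prev {
			return i
		}
		prev = hashEvent(e)
	}
	return -1
}

func main() {
	var chain []Event
	for _, p := range []string{"run_started", "tool_call", "run_completed"} {
		chain = appendEvent(chain, []byte(p))
	}
	fmt.Println(verifyChain(chain)) // -1: chain is intact

	chain[1].Payload = []byte("tampered")
	fmt.Println(verifyChain(chain)) // 2: event 2 no longer links to event 1
}
```

Note that a chain alone cannot detect an edit to the very last event, since nothing links to it yet. That is one reason a terminal commitment over the whole run is useful.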

If you've worked with event sourcing before, this should sound

familiar. If you've shipped LLM agents before, you know what it costs

to not have this.

What's included

- Event-sourced execution: every meaningful runtime action is an event.
- Deterministic replay: recorded runs can be replayed without calling the
  model or re-running recorded side effects.
- Durable event logs: in-memory, SQLite, and Postgres backends with schema
  migration and validation helpers.
- Provider adapters: OpenAI-compatible APIs, Anthropic, Gemini, Amazon
  Bedrock, and OpenRouter.
- MCP tools: stdio subprocess and streamable HTTP clients backed by the
  official Go MCP SDK.
- Tool safety: retries, transient error classification, typed tool errors,
  max MCP output caps, and replay-safe side effects.
- Hermetic tests: starlingtest ships a scripted provider and replay
  assertions so agent tests run without an LLM.
- Inspector: dependency-free browser UI for exploring runs and replay
  divergence.
- HTTP daemon helper: starlingd lets your own agent binary accept runs
  over HTTP, stream SSE updates, expose metrics, and mount the inspector.
- Observability: metrics wrappers, OpenTelemetry-friendly examples, and
  opt-in structured slog output (silent by default; pass
  Config.Logger = slog.New(...) to enable).

Install

go get github.com/jerkeyray/starling@<tag>

Starling is a single Go module. The provider sub-packages

(provider/anthropic, provider/openai, provider/gemini, provider/bedrock, provider/openrouter) come along with this go get;

no separate install is needed.

Pin a tag rather than tracking main - Starling is in beta and breaking

changes are permitted between beta cuts. See Release policy

and CHANGELOG.md.

Documentation

- docs/getting-started.md - install, your first agent, tools, durable
  storage, replay.
- docs/mental-model.md - what a Run is, when it terminates, when to use
  one Run versus many, what replay actually checks.
- docs/faq.md - quick answers to recurring questions.
- Cookbook: branching, manual writes, multi-turn.
- Reference: events, step primitives, cost model, tools, replay,
  contracts, metrics, starlingd, save file, MCP server.

docs/README.md is the full index.

Quickstart

Single-turn, no tools — the most common shape:

package main

import (
	"context"
	"fmt"
	"os"

	starling "github.com/jerkeyray/starling"
	"github.com/jerkeyray/starling/eventlog"
	"github.com/jerkeyray/starling/provider/openai"
)

func main() {
	prov, err := openai.New(openai.WithAPIKey(os.Getenv("OPENAI_API_KEY")))
	if err != nil {
		panic(err)
	}

	log := eventlog.NewInMemory()
	a := &starling.Agent{
		Provider: prov,
		Log:      log,
		Config:   starling.Config{Model: "gpt-4o-mini"},
	}

	text, err := a.RunOnce(context.Background(), "Give me a three bullet incident summary.")
	if err != nil {
		panic(err)
	}
	fmt.Println(text)
}

RunOnce ignores any tools on the agent and caps the loop at one turn —

ideal for prompt-in/text-out use cases. For tool-using or multi-turn

flows, call Agent.Run (see examples/incident_triage).

Note that MaxTurns counts every model call, so a forced single-tool

flow needs MaxTurns >= 2 (turn 1 emits the tool_use, turn 2 lets the

model respond to the tool result).
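The turn-counting rule can be illustrated with a toy loop. This is a sketch under stated assumptions, not Starling's agent loop: `reply`, `runLoop`, and `forcedTool` are hypothetical names, and tool execution is replaced by a string stand-in.

```go
package main

import "fmt"

// reply is a hypothetical model response: either a tool request or final text.
type reply struct {
	ToolUse string
	Text    string
}

// runLoop calls model at most maxTurns times. Every model call counts as a
// turn, including a call that only emits a tool request.
func runLoop(model func(toolResult string) reply, maxTurns int) (string, bool) {
	toolResult := ""
	for turn := 1; turn <= maxTurns; turn++ {
		r := model(toolResult)
		if r.ToolUse == "" {
			return r.Text, true
		}
		toolResult = "result of " + r.ToolUse // stand-in for real tool execution
	}
	return "", false // turn cap reached before the model produced a final answer
}

// forcedTool emulates a model that must call one tool before answering.
func forcedTool(toolResult string) reply {
	if toolResult == "" {
		return reply{ToolUse: "fetch_ticket"}
	}
	return reply{Text: "summary based on " + toolResult}
}

func main() {
	_, ok := runLoop(forcedTool, 1)
	fmt.Println(ok) // false: the only turn was spent on the tool request

	text, ok := runLoop(forcedTool, 2)
	fmt.Println(text, ok) // summary based on result of fetch_ticket true
}
```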

Core Model

Agent.Run

-> provider.Stream

-> tool execution

-> budget checks

-> append-only event log

-> replay / inspect / resume

Starling treats the event log as the source of truth. The runtime records model

requests, streaming chunks, tool calls, usage, budget decisions, terminal states,

and replay metadata as structured events. Backends validate event ordering,

schema versions, and hash continuity.

Durable Logs

Use SQLite or Postgres when runs must survive process restarts or be inspected

later.

log, err := eventlog.NewSQLite("starling.db")

if err != nil {

panic(err)

}

defer log.Close()

Durable backends support schema preflight checks, migrations, validation, and

read-only inspection workflows.

Replay And Resume

Replay a recorded run against the same agent wiring:

if err := starling.Replay(ctx, log, runID, a); err != nil {
	if errors.Is(err, starling.ErrNonDeterminism) {
		// Inspect the log for the first diverging event.
	}
	panic(err)
}
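To make the byte-comparison idea concrete, here is a minimal standalone sketch. It does not use Starling's API: `errNonDeterminism`, `DivergenceError`, and `compareReplay` are hypothetical names chosen for illustration, and events are reduced to raw byte slices.

```go
package main

import (
	"bytes"
	"errors"
	"fmt"
)

// errNonDeterminism is a hypothetical sentinel playing the role that
// starling.ErrNonDeterminism plays in the snippet above.
var errNonDeterminism = errors.New("replay: non-determinism detected")

// DivergenceError is a hypothetical structured error that points at the
// first event that did not reproduce.
type DivergenceError struct {
	Seq      int
	Recorded []byte
	Replayed []byte
}

func (e *DivergenceError) Error() string {
	return fmt.Sprintf("event %d diverged: recorded %q, replayed %q", e.Seq, e.Recorded, e.Replayed)
}

// Unwrap lets errors.Is(err, errNonDeterminism) succeed.
func (e *DivergenceError) Unwrap() error { return errNonDeterminism }

// compareReplay byte-compares re-emitted events against the recorded ones
// and reports the first mismatch, including missing or extra events.
func compareReplay(recorded, replayed [][]byte) error {
	for i, want := range recorded {
		if i >= len(replayed) {
			return &DivergenceError{Seq: i, Recorded: want}
		}
		if !bytes.Equal(want, replayed[i]) {
			return &DivergenceError{Seq: i, Recorded: want, Replayed: replayed[i]}
		}
	}
	if len(replayed) > len(recorded) {
		i := len(recorded)
		return &DivergenceError{Seq: i, Replayed: replayed[i]}
	}
	return nil
}

func main() {
	recorded := [][]byte{[]byte("prompt"), []byte("tool:fetch"), []byte("done")}
	replayed := [][]byte{[]byte("prompt"), []byte("tool:search"), []byte("done")}

	err := compareReplay(recorded, replayed)
	var div *DivergenceError
	if errors.Is(err, errNonDeterminism) && errors.As(err, &div) {
		fmt.Println("first diverging event:", div.Seq) // first diverging event: 1
	}
}
```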

Resume continues from a persisted run while preserving call correlation and

budget accounting.

next, err := a.Resume(ctx, runID, "Continue with remediation steps.")

The starlingtest package wires the same machinery into Go tests

without touching a real model:

p := &starlingtest.ScriptedProvider{Scripts: scripts}
a := &starling.Agent{Provider: p, Log: eventlog.NewInMemory(), Config: cfg}
res, err := a.Run(ctx, "...")
if err != nil {
	t.Fatal(err)
}
p.Reset()
starlingtest.AssertReplayMatches(t, a.Log, res.RunID, a)

Providers

| Provider | Package | Notes |
|---|---|---|
| OpenAI-compatible | provider/openai | OpenAI, Groq, Together, Ollama, vLLM, LM Studio, Azure OpenAI, and compatible APIs via custom BaseURL. |
| Anthropic | provider/anthropic | Messages API support, tool use, thinking/signatures, and prompt caching metadata. |
| Gemini | provider/gemini | Native Gemini adapter for Google models. |
| Amazon Bedrock | provider/bedrock | Native Bedrock ConverseStream adapter with AWS SDK auth, tool use, reasoning, and cache-aware usage. |
| OpenRouter | provider/openrouter | OpenRouter-specific convenience wrapper over the OpenAI-compatible path. |

Provider behavior is covered by a conformance suite so adapters share the same

streaming, usage, tool-call, and error contracts.

MCP Tools

Starling can expose remote MCP tools as regular tool.Tool values.

client, err := toolmcp.NewCommand(ctx,
	exec.Command("uvx", "mcp-server-filesystem", "/tmp"),
	toolmcp.WithIncludeTools("read_file", "list_directory"),
	toolmcp.WithMaxOutputBytes(64<<10),
)
if err != nil {
	panic(err)
}
defer client.Close()

tools, err := client.Tools(ctx)
if err != nil {
	panic(err)
}

a := &starling.Agent{
	Provider: prov,
	Log:      log,
	Tools:    tools,
	Config:   starling.Config{Model: "gpt-4o-mini", MaxTurns: 8},
}

Supported transports:

- toolmcp.NewCommand(ctx, cmd, opts...) for stdio subprocess servers.
- toolmcp.NewHTTP(ctx, endpoint, httpClient, opts...) for streamable HTTP servers.
- toolmcp.New(ctx, transport, opts...) for custom transports.

MCP tool calls are wrapped in step.SideEffect, so replay uses the recorded

result instead of contacting the remote MCP server again. Starling currently

supports MCP tools; resources, prompts, and sampling are intentionally deferred.
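The record-then-replay behavior of a side effect can be sketched as follows. This is an illustration of the general pattern only, not the step.SideEffect implementation: `recorder` and `sideEffect` are hypothetical names, and a map stands in for the event log.

```go
package main

import (
	"errors"
	"fmt"
)

// recorder is a hypothetical stand-in for the event log: it keeps one
// recorded result per call ID.
type recorder struct {
	replaying bool
	results   map[string]string
}

// sideEffect runs fn live and records its result. During replay it returns
// the recorded result and never invokes fn.
func (r *recorder) sideEffect(callID string, fn func() (string, error)) (string, error) {
	if r.replaying {
		out, ok := r.results[callID]
		if !ok {
			return "", errors.New("replay: no recorded result for " + callID)
		}
		return out, nil
	}
	out, err := fn()
	if err != nil {
		return "", err
	}
	r.results[callID] = out
	return out, nil
}

func main() {
	calls := 0
	remote := func() (string, error) { // pretend this contacts a remote MCP server
		calls++
		return "contents of /tmp/notes.txt", nil
	}

	r := &recorder{results: map[string]string{}}
	live, _ := r.sideEffect("read-1", remote)

	r.replaying = true
	replayed, _ := r.sideEffect("read-1", remote)

	fmt.Println(live == replayed, calls) // true 1
}
```

The remote function is called once in total: the replayed call is served entirely from the recorded result.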

Budgets And Retries

Budgets can cap input tokens, output tokens, USD cost, and wall-clock runtime.

a := &starling.Agent{
	Provider: prov,
	Log:      log,
	Budget: &starling.Budget{
		MaxInputTokens:  20_000,
		MaxOutputTokens: 4_000,
		MaxUSD:          0.50,
		MaxWallClock:    30 * time.Second,
	},
	Config: starling.Config{Model: "gpt-4o-mini", MaxTurns: 8},
}
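As a rough mental model of how such caps might be enforced, the sketch below accumulates usage per turn and stops at the first cap that is exceeded. The `limits`, `usage`, and `check` names are hypothetical, the "zero means no cap" rule is an assumption, and Starling's real budget accounting may check at different points.

```go
package main

import (
	"errors"
	"fmt"
	"time"
)

var errBudgetExceeded = errors.New("budget exceeded")

// limits mirrors the four caps shown above. Treating zero as "no cap" is an
// assumption made for this sketch.
type limits struct {
	MaxInputTokens  int
	MaxOutputTokens int
	MaxUSD          float64
	MaxWallClock    time.Duration
}

// usage is the running total for one run.
type usage struct {
	InputTokens  int
	OutputTokens int
	USD          float64
	Elapsed      time.Duration
}

// check reports the first cap that the running total has exceeded.
func check(l limits, u usage) error {
	switch {
	case l.MaxInputTokens > 0 && u.InputTokens > l.MaxInputTokens:
		return fmt.Errorf("%w: input tokens %d > %d", errBudgetExceeded, u.InputTokens, l.MaxInputTokens)
	case l.MaxOutputTokens > 0 && u.OutputTokens > l.MaxOutputTokens:
		return fmt.Errorf("%w: output tokens %d > %d", errBudgetExceeded, u.OutputTokens, l.MaxOutputTokens)
	case l.MaxUSD > 0 && u.USD > l.MaxUSD:
		return fmt.Errorf("%w: cost $%.2f > $%.2f", errBudgetExceeded, u.USD, l.MaxUSD)
	case l.MaxWallClock > 0 && u.Elapsed > l.MaxWallClock:
		return fmt.Errorf("%w: elapsed %s > %s", errBudgetExceeded, u.Elapsed, l.MaxWallClock)
	}
	return nil
}

func main() {
	l := limits{MaxInputTokens: 20_000, MaxOutputTokens: 4_000, MaxUSD: 0.50, MaxWallClock: 30 * time.Second}
	perTurn := usage{InputTokens: 9_000, OutputTokens: 500, USD: 0.02, Elapsed: 2 * time.Second}

	var total usage
	for turn := 1; turn <= 8; turn++ {
		total.InputTokens += perTurn.InputTokens
		total.OutputTokens += perTurn.OutputTokens
		total.USD += perTurn.USD
		total.Elapsed += perTurn.Elapsed
		if err := check(l, total); err != nil {
			fmt.Printf("stopped after turn %d: %v\n", turn, err)
			return
		}
	}
	fmt.Println("finished within budget")
}
```

With these numbers the input-token cap trips on turn 3 (27,000 > 20,000), well before the MaxTurns limit would.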

Tool retries are explicit and replay-aware:

out, err := step.CallTool(ctx, step.ToolCall{
	CallID:      "fetch-ticket",
	TurnID:      turnID,
	Name:        "fetch_ticket",
	Args:        args,
	Idempotent:  true,
	MaxAttempts: 3,
})
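One plausible reading of how Idempotent and MaxAttempts interact is sketched below: a call is retried only when it is marked idempotent and the failure is classified as transient. This is an assumption about the policy, not Starling's code; `errTransient` and `callWithRetry` are hypothetical, and backoff between attempts is omitted for brevity.

```go
package main

import (
	"errors"
	"fmt"
)

// errTransient marks failures worth retrying (hypothetical classification).
var errTransient = errors.New("transient failure")

// callWithRetry invokes fn up to maxAttempts times. It retries only when the
// call is idempotent and the error wraps errTransient. It returns the output,
// the number of attempts made, and the final error.
func callWithRetry(idempotent bool, maxAttempts int, fn func(attempt int) (string, error)) (string, int, error) {
	if maxAttempts < 1 {
		maxAttempts = 1
	}
	var lastErr error
	for attempt := 1; attempt <= maxAttempts; attempt++ {
		out, err := fn(attempt)
		if err == nil {
			return out, attempt, nil
		}
		lastErr = err
		if !idempotent || !errors.Is(err, errTransient) {
			return "", attempt, err
		}
	}
	return "", maxAttempts, lastErr
}

func main() {
	// flaky fails twice with a transient error, then succeeds.
	flaky := func(attempt int) (string, error) {
		if attempt < 3 {
			return "", fmt.Errorf("attempt %d: %w", attempt, errTransient)
		}
		return "ticket-42", nil
	}

	out, attempts, err := callWithRetry(true, 3, flaky)
	fmt.Println(out, attempts, err) // ticket-42 3 <nil>

	_, attempts, err = callWithRetry(false, 3, flaky)
	fmt.Println(attempts, err) // 1 attempt 1: transient failure
}
```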

MCP server

starling-mcp exposes the recorded event log to AI assistants

(Claude Desktop, Cursor, Claude Code) over stdio. Read-only by

construction. Once wired into your MCP client, you ask normal

questions about your agent's runs and the model calls the

appropriate tool: list_runs, summarize_run, get_event, diff_runs, search_runs, etc.

go install github.com/jerkeyray/starling/cmd/starling-mcp@latest

Add to your client config (Claude Desktop shape; Cursor / Claude

Code follow the same pattern):

{
  "mcpServers": {
    "starling": {
      "command": "starling-mcp",
      "args": ["/path/to/runs.db"]
    }
  }
}

Full reference: docs/reference/mcp-server.md.

HTTP Daemon

Use package starlingd when you want your own agent wiring exposed as

a private HTTP service. It provides a bounded in-process queue, async POST /api/v1/runs, SSE progress streams, run/event read APIs, /metrics, bearer auth, and an optional inspector mount.

if err := starlingd.Command(buildAgent).Run(os.Args[1:]); err != nil {

panic(err)

}
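For intuition about what a bounded in-process queue behind an async POST endpoint looks like, here is a standard-library sketch. It is not starlingd's implementation: `runQueue`, `submit`, and `handleRuns` are hypothetical names, and answering 503 when the queue is full is an assumption about one reasonable policy.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"strings"
)

// runQueue is a hypothetical bounded queue of pending run prompts.
type runQueue struct{ jobs chan string }

func newRunQueue(capacity int) *runQueue {
	return &runQueue{jobs: make(chan string, capacity)}
}

// submit enqueues without blocking and reports whether there was room.
func (q *runQueue) submit(prompt string) bool {
	select {
	case q.jobs <- prompt:
		return true
	default:
		return false
	}
}

// handleRuns accepts a run asynchronously: 202 when queued, 503 when full.
func (q *runQueue) handleRuns(w http.ResponseWriter, r *http.Request) {
	if r.Method != http.MethodPost {
		http.Error(w, "method not allowed", http.StatusMethodNotAllowed)
		return
	}
	body, err := io.ReadAll(io.LimitReader(r.Body, 1<<20))
	if err != nil {
		http.Error(w, "bad request", http.StatusBadRequest)
		return
	}
	if !q.submit(string(body)) {
		http.Error(w, "queue full", http.StatusServiceUnavailable)
		return
	}
	w.WriteHeader(http.StatusAccepted)
}

func main() {
	q := newRunQueue(2) // no worker drains the queue in this sketch
	for i := 0; i < 3; i++ {
		req := httptest.NewRequest(http.MethodPost, "/api/v1/runs", strings.NewReader("summarize incident"))
		rec := httptest.NewRecorder()
		q.handleRuns(rec, req)
		fmt.Println(rec.Code) // 202, 202, then 503
	}
}
```

In a real service a worker goroutine would drain the channel and run the agent; the bounded channel is what turns overload into an immediate, visible rejection instead of unbounded memory growth.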

Full reference: docs/reference/starlingd.md.

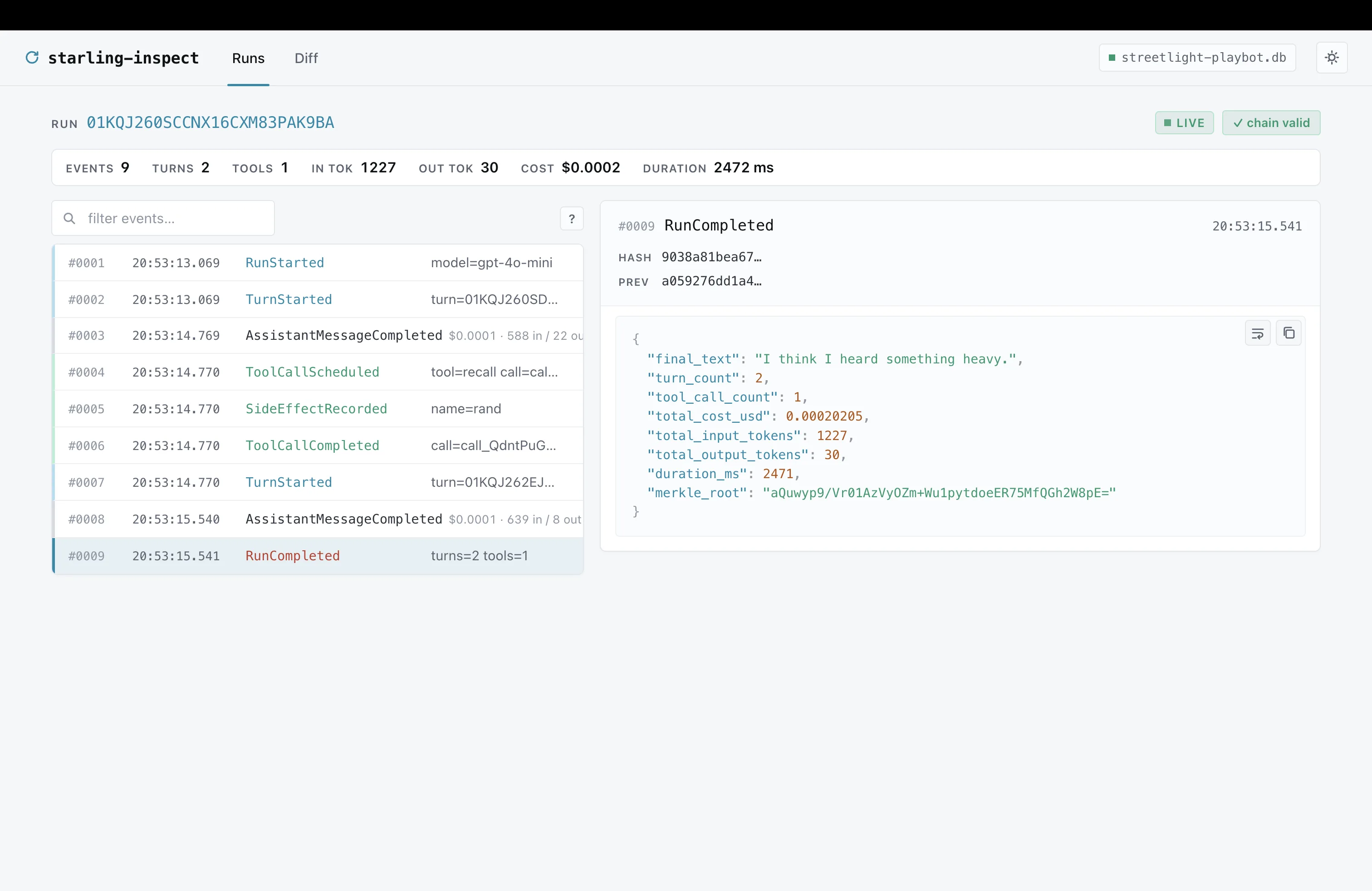

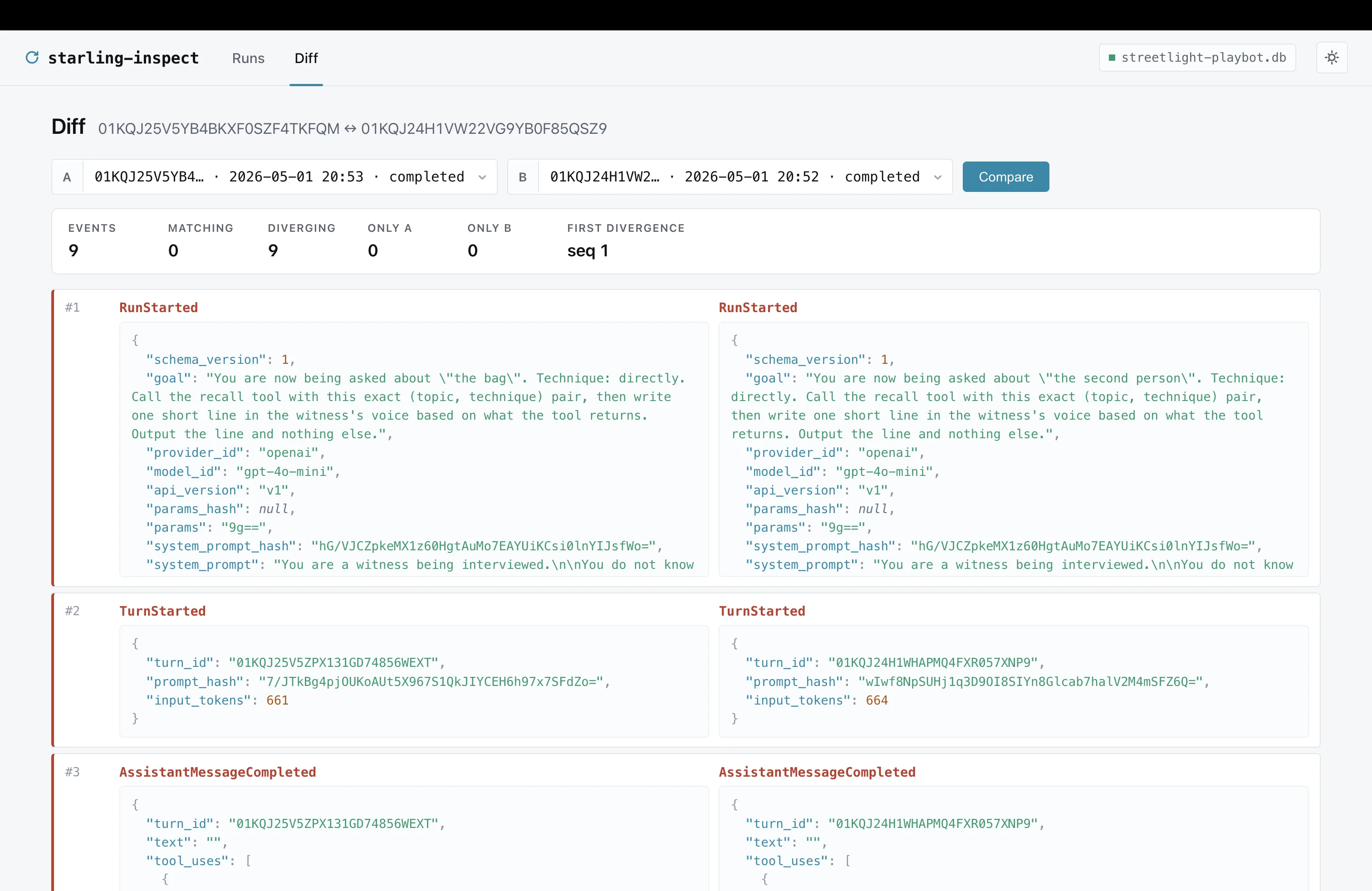

Inspector

go run ./cmd/starling-inspect starling.db

Loopback web UI: runs list with per-row totals, per-event timeline

with a syntax-highlighted JSON detail pane, a /sessions page that

groups runs by Config.SessionID, and a /diff page aligning any

two runs side-by-side by sequence number. Dark by default, theme

toggle in the topbar, hashes and run ids are click-to-copy, no CDN

or JS build step. Runs read-only - Append is impossible on the

inspector's DB handle.

CLI

go install github.com/jerkeyray/starling/cmd/starling@latest for the

stock binary, or build a dual-mode binary around starling.InspectCommand / starling.ReplayCommand to wire your

own agent factory.

| Subcommand | What it does |
|---|---|
| validate <db> [<runID>] | Hash-chain + Merkle check, one run or every run. |
| export <db> <runID> | Dump events as NDJSON (pipe into jq). |
| prune [flags] <db> | Delete old whole runs after an explicit dry-run. |
| inspect [flags] <db> | Read-only web inspector. |
| replay <db> <runID> | Headless replay. Dual-mode binaries only. |
| contracts <db> <file> <runID...> | Validate explicit runs against a YAML contract file. |
| migrate <db> | Apply pending schema migrations. |
| schema-version <db> | Print the on-disk schema version. |
| doctor [<db>] | Health check: env vars, schema, chain validation. |
| --version | Print the linked starling module version. |
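The Merkle half of a validate-style check can be sketched as recomputing a root over a run's events and comparing it with the committed one. The construction below is a generic binary Merkle tree, not necessarily the one Starling's merkle package uses: SHA-256 stands in for BLAKE3, and the leaf/node prefixes and odd-node promotion rule are assumptions.

```go
package main

import (
	"crypto/sha256"
	"fmt"
)

// leafHash and nodeHash use distinct prefixes so a leaf can never be
// confused with an interior node (a common convention, assumed here).
func leafHash(data []byte) [32]byte {
	return sha256.Sum256(append([]byte{0x00}, data...))
}

func nodeHash(l, r [32]byte) [32]byte {
	buf := make([]byte, 0, 65)
	buf = append(buf, 0x01)
	buf = append(buf, l[:]...)
	buf = append(buf, r[:]...)
	return sha256.Sum256(buf)
}

// merkleRoot folds leaf hashes pairwise until a single root remains.
func merkleRoot(events [][]byte) [32]byte {
	if len(events) == 0 {
		return sha256.Sum256(nil)
	}
	level := make([][32]byte, len(events))
	for i, e := range events {
		level[i] = leafHash(e)
	}
	for len(level) > 1 {
		next := make([][32]byte, 0, (len(level)+1)/2)
		for i := 0; i < len(level); i += 2 {
			if i+1 < len(level) {
				next = append(next, nodeHash(level[i], level[i+1]))
			} else {
				next = append(next, level[i]) // odd node is promoted unchanged
			}
		}
		level = next
	}
	return level[0]
}

func main() {
	events := [][]byte{[]byte("run_started"), []byte("tool_call"), []byte("run_completed")}
	committed := merkleRoot(events) // what a terminal event would commit to

	events[1] = []byte("tool_call (edited)")
	fmt.Println(merkleRoot(events) == committed) // false: the edit is detected
}
```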

Production Checklist

- Run make check before release: format, vet, build, race tests, lint, and
  vulnerability scan.
- Pick a durable log backend for production runs: SQLite for single-node use,
  Postgres for shared infrastructure.
- Run eventlog preflight and migrations during deploys.
- Protect inspector access behind your normal internal auth boundary.
- Put starlingd behind TLS, rate limiting, and your normal service auth;
  its built-in bearer token is a private-service guard, not a full auth system.
- Set explicit budgets for tokens, cost, and wall-clock runtime.
- Use idempotent retries and per-call timeouts for tools that touch external
  systems.
- Use replay regression tests for critical agent workflows.
- Store raw provider responses only when your privacy and retention policy
  allows it.
- Export runs you must keep, then run
  starling prune --older-than <duration> --confirm <db> as a scheduled
  retention job. Without --confirm, prune is a dry-run report.

Examples

| Example | What it shows |

|---|---|

| examples/hello | The smallest end-to-end agent (~50 lines). Start here. |

| examples/m1_hello | Dual-mode pattern: run / inspect / replay / reset / show. |

| examples/multi_turn | Chat-style workflow: one Run per user message. |

| examples/branching | eventlog.ForkSQLite to split a recorded run into a counterfactual branch. |

| examples/manual_writes | Writing events without Agent.Run, including the Merkle root. |

| examples/incident_triage | End-to-end production-style workflow with budgets, replay, resume, metrics, OTel, and durable logs. |

| examples/mcp_tools | MCP server tools adapted into Starling tools. |

| examples/m4_inspector_demo | Local run data for the inspector. |

Code layout

package starling lives at the module root - that's a Go convention,

not a layout choice. The interesting parts are under sub-packages.

.

├── agent.go, config.go, errors.go, result.go, Core API: Agent, Config,

│ stream.go, runstream.go, resume.go, RunResult, Resume, replay

│ replay_api.go, metrics.go, version.go, wrappers, sentinel errors,

│ *_command.go, *_test.go CLI command helpers, tests.

│

├── bench/ benchmarks

├── budget/ pricing tables, USD/token caps

├── cmd/

│ ├── starling/ stock CLI (validate / export / inspect / replay / migrate / doctor)

│ └── starling-inspect/ standalone inspector binary

├── docs/ prose docs (getting-started, mental-model, cookbook, reference)

├── event/ Event / Kind types, per-kind payload schemas

├── eventlog/ append-only log: in-memory / SQLite / Postgres

├── examples/ runnable agents (start with examples/hello)

├── inspect/ inspector server + UI templates + static assets

├── internal/ unexported helpers (cborenc, obs)

├── merkle/ public BLAKE3 Merkle helpers

├── provider/ OpenAI / Anthropic / Gemini / Bedrock / OpenRouter adapters

├── replay/ replay re-execution + Stream

├── starlingtest/ test helpers (ScriptedProvider, AssertReplayMatches)

├── starlingd/ HTTP daemon package + command helper

├── step/ step.Now / step.Random / step.SideEffect, CallTool

└── tool/ Tool interface, Typed[In,Out], Wrap middleware, MCP client

Development

make check

Useful targets:

make test # race-enabled Go test suite

make lint # golangci-lint

make vuln # govulncheck

make inspect # run the inspector locally

make smoke # quick end-to-end smoke run

Release policy

Starling is in beta. Versions are tagged v0.x.y-beta.N and
distributed through the Go module proxy.

- Pin a tag. Don't track main; the working branch may carry
  breaking changes between beta cuts.
- Breaking changes are permitted between beta tags. Each tag's
  delta is recorded in CHANGELOG.md. Until GA there
  is no API or wire-format compatibility promise.
- Within a tag, breakage is a bug. A pinned beta is reproducible.
- Schema versioning for the event log is documented under
  Event log schema below - this is the one
  surface that has its own forward/back-compat contract.
- GA (v1.0.0) will land when the public API surface, event
  schema, and replay contract are stable enough to commit to. No
  date promised.

Event log schema

event.SchemaVersion is the format version of the events written

into the log. Resume and replay both read this field and refuse runs

written by an unknown schema (ErrSchemaVersionMismatch).

- When the constant bumps. Whenever the wire-format of an event
  payload, the set of event.Kind values, or the canonical-encoding
  rules change in a way that affects the BLAKE3 hash chain.
- What consumers must do. Re-pin to the matching beta tag, then
  run starling migrate <db> (also exposed in-process as
  starling.MigrateCommand) to bring on-disk logs forward. The
  starling schema-version <db> command prints the current version.
- Compatibility within a major-schema family. Minor bumps must
  remain resume-compatible: an older agent binary should be able to
  resume a run written by a newer one whenever the new schema is a
  superset. Breaking format changes bump the major part and require
  the explicit migrate step.
- Migrations live in migrate_command.go. Each new on-disk
  format ships its forward migration alongside the schema bump in
  the same beta.
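The compatibility rule above can be pictured as a small gate that runs before resume or replay. The sketch below is hypothetical throughout: it models the schema version as a major.minor pair, which the text implies but does not spell out, and `errSchemaVersionMismatch` merely plays the role of ErrSchemaVersionMismatch.

```go
package main

import (
	"errors"
	"fmt"
)

// errSchemaVersionMismatch is a hypothetical sentinel for this sketch.
var errSchemaVersionMismatch = errors.New("schema version mismatch")

// version is an assumed major.minor representation of the schema version.
type version struct{ Major, Minor int }

// checkSchema accepts any log in the same major family, whatever its minor
// version, and refuses logs from a different major until they are migrated.
func checkSchema(binary, onDisk version) error {
	if binary.Major != onDisk.Major {
		return fmt.Errorf("%w: binary speaks %d.%d, log is %d.%d; migrate first",
			errSchemaVersionMismatch, binary.Major, binary.Minor, onDisk.Major, onDisk.Minor)
	}
	return nil
}

func main() {
	older := version{Major: 1, Minor: 0}

	// A newer minor in the same family stays resume-compatible.
	fmt.Println(checkSchema(older, version{Major: 1, Minor: 2})) // <nil>

	// A different major is refused.
	err := checkSchema(older, version{Major: 2, Minor: 0})
	fmt.Println(errors.Is(err, errSchemaVersionMismatch)) // true
}
```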

License

Apache 2.0. See LICENSE.
