olb

Health Pass

- License — License: NOASSERTION

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 16 GitHub stars

Code Pass

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions Pass

- Permissions — No dangerous permissions requested

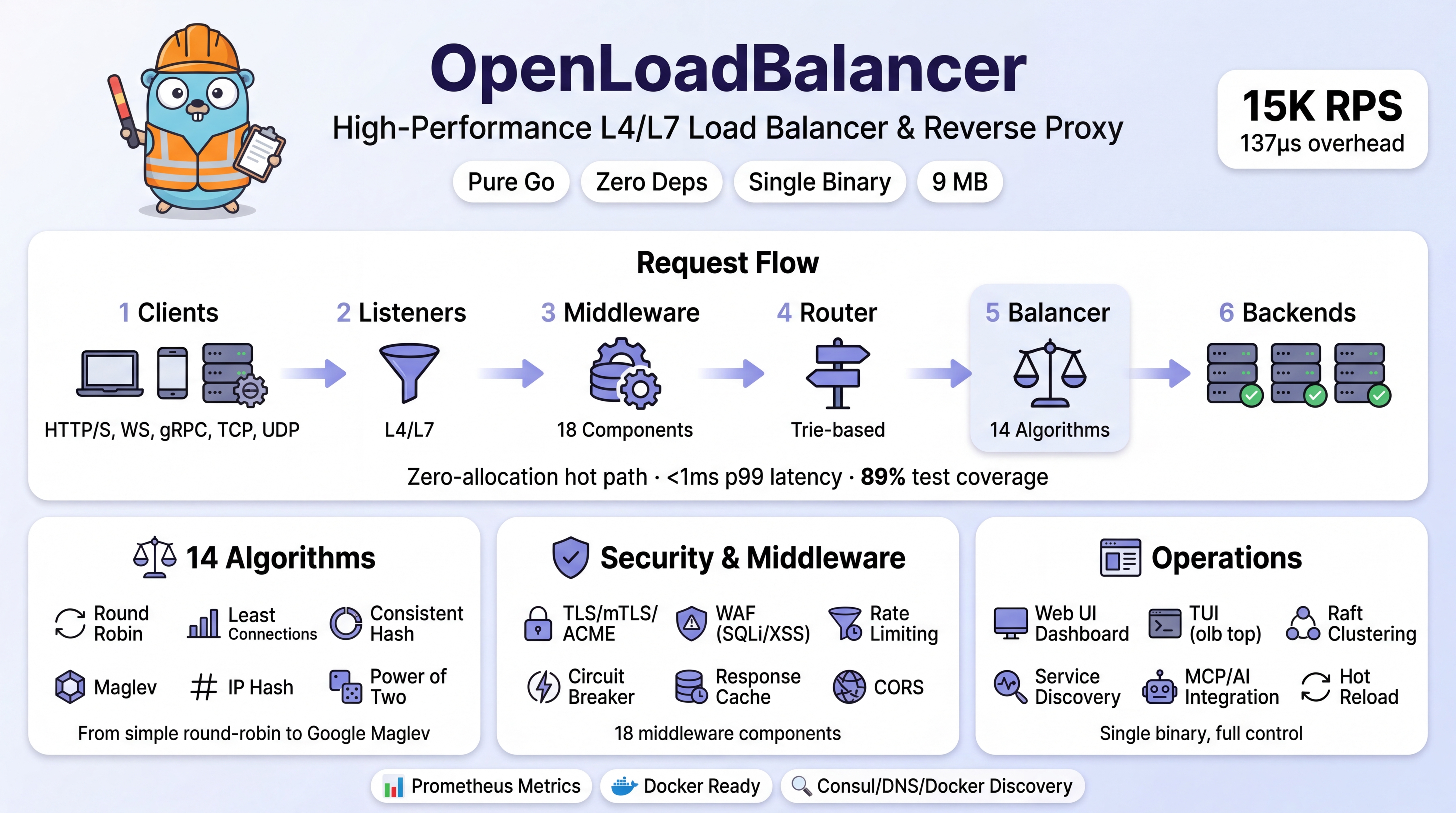

This tool is a high-performance Layer 4 and Layer 7 load balancer written in Go. It ships as a single binary with a Web UI, clustering, and advanced routing, and it also runs an MCP server for AI integration.

Security Assessment

By design, a load balancer makes network requests to route traffic and handles sensitive data, including TLS certificates and potentially WAF-logged payloads. The suggested installation method pipes a remote script into the shell (`curl | sh`), which warrants caution; building from source is a safer alternative. The automated code scan found no dangerous patterns, hardcoded secrets, or requests for overly broad permissions. However, the GitHub repository's license is reported as "NOASSERTION" even though the README claims Apache 2.0, and the README requires Go 1.25+, so older toolchains cannot build it. Overall risk: Low.

Quality Assessment

The project is actively maintained, with its most recent push today. It shows a strong commitment to testing, reporting 105 combined tests and 90% code coverage. Community trust is still low (only 16 GitHub stars), but the codebase is clean and recently maintained.

Verdict

Safe to use, but verify the actual licensing terms in the repository and prefer building from source over piping web scripts to your shell.

High-performance zero-dependency L4/L7 load balancer written in Go. Single binary with Web UI, clustering, MCP/AI integration. 8.5K RPS, 39 E2E tests.

OpenLoadBalancer

Zero-dependency L4/L7 load balancer for any backend. One binary. Written in pure Go.

Works with Node.js, Python, Java, Go, Rust, .NET, PHP — anything that speaks HTTP or TCP.

Quick Start

curl -sSL https://openloadbalancer.dev/install.sh | sh

Create olb.yaml:

admin:
  address: "127.0.0.1:8081"

listeners:
  - name: http
    address: ":80"
    routes:
      - path: /
        pool: web

pools:
  - name: web
    algorithm: round_robin
    backends:
      - address: "10.0.1.10:8080"
      - address: "10.0.1.11:8080"
    health_check:
      type: http
      path: /health
      interval: 10s

olb start --config olb.yaml

That's it. HTTP proxy on :80, admin API on :8081, health checks every 10s, round-robin across two backends.
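The `health_check` block above polls each backend over HTTP every 10s. The following minimal Go sketch shows what a single probe involves, using only the standard library. It assumes a backend counts as healthy when `GET <path>` returns a 2xx status within a timeout. OLB's actual rules (thresholds, accepted status codes) may differ, and `probeHTTP` and `runDemo` are hypothetical names.

```go
package main

import (
	"context"
	"fmt"
	"net/http"
	"net/http/httptest"
	"time"
)

// probeHTTP performs one health probe: GET http://<addr><path>.
// A 2xx response within timeout counts as healthy; any other status,
// a connection error, or a timeout counts as unhealthy.
func probeHTTP(client *http.Client, addr, path string, timeout time.Duration) bool {
	ctx, cancel := context.WithTimeout(context.Background(), timeout)
	defer cancel()

	req, err := http.NewRequestWithContext(ctx, http.MethodGet, "http://"+addr+path, nil)
	if err != nil {
		return false
	}
	resp, err := client.Do(req)
	if err != nil {
		return false
	}
	defer resp.Body.Close()
	return resp.StatusCode >= 200 && resp.StatusCode < 300
}

// runDemo starts a stand-in backend whose /health returns 200 and whose
// other paths return 503, then probes /health and /broken.
func runDemo() (healthOK, brokenOK bool) {
	backend := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if r.URL.Path == "/health" {
			w.WriteHeader(http.StatusOK)
			return
		}
		w.WriteHeader(http.StatusServiceUnavailable)
	}))
	defer backend.Close()

	addr := backend.Listener.Addr().String()
	healthOK = probeHTTP(http.DefaultClient, addr, "/health", 2*time.Second)
	brokenOK = probeHTTP(http.DefaultClient, addr, "/broken", 2*time.Second)
	return healthOK, brokenOK
}

func main() {
	healthOK, brokenOK := runDemo()
	fmt.Println("/health:", healthOK, "/broken:", brokenOK) // /health: true /broken: false
}
```

A full checker would typically call a probe like this from a `time.Ticker` set to the configured `interval`. It would also change a backend's state only after several consecutive results, so that a single slow response does not eject the backend.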

Install

# Binary

curl -sSL https://openloadbalancer.dev/install.sh | sh

# Docker

docker pull ghcr.io/openloadbalancer/olb:latest

docker run -d -p 80:80 -p 8081:8081 -v ./olb.yaml:/etc/olb/configs/olb.yaml ghcr.io/openloadbalancer/olb:latest

# Homebrew

brew tap openloadbalancer/olb && brew install olb

# Build from source

git clone https://github.com/openloadbalancer/olb.git && cd olb && make build

Requires Go 1.25+. No other dependencies.

Features

Proxy: HTTP/HTTPS, WebSocket, gRPC, SSE, TCP (L4), UDP (L4), SNI routing, PROXY protocol v1/v2

Load Balancing: 14 algorithms — Round Robin, Weighted RR, Least Connections, Weighted Least Connections, Least Response Time, Weighted Least Response Time, IP Hash, Consistent Hash (Ketama), Maglev, Ring Hash, Power of Two, Random, Weighted Random, Sticky Sessions

Security: TLS termination + SNI, ACME/Let's Encrypt, mTLS, OCSP stapling, 6-layer WAF (IP ACL, rate limiting, request sanitizer, detection engine with SQLi/XSS/path traversal/CMDi/XXE/SSRF, bot detection with JA3 fingerprinting, response protection with security headers + data masking), circuit breaker

Middleware: 16 components — Recovery, body limit, WAF (6-layer pipeline), IP filter, real IP, request ID, timeout, rate limit, circuit breaker, CORS, headers, compression (gzip), retry, cache, metrics, access log

Observability: Web UI dashboard (8 pages), TUI (olb top), Prometheus metrics, structured JSON logging, admin REST API (15+ endpoints)

Operations: Hot config reload (SIGHUP or API), Raft clustering + SWIM gossip, service discovery (Static/DNS/Consul/Docker/File), MCP server for AI integration, plugin system, 30+ CLI commands
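Much of the middleware listed above (recovery, request ID, CORS, and so on) fits Go's usual pattern of wrapping an `http.Handler`. This sketch shows that pattern with two of those components. It is not OLB's code: the `Middleware` and `Chain` names and the `X-Request-ID` header are assumptions for illustration.

```go
package main

import (
	"crypto/rand"
	"encoding/hex"
	"fmt"
	"net/http"
	"net/http/httptest"
)

// Middleware wraps an http.Handler with extra behaviour.
type Middleware func(http.Handler) http.Handler

// Chain applies mws so that mws[0] is the outermost wrapper:
// it sees the request first and the response last.
func Chain(h http.Handler, mws ...Middleware) http.Handler {
	for i := len(mws) - 1; i >= 0; i-- {
		h = mws[i](h)
	}
	return h
}

// recoverer turns a panic in any inner handler into a 500 response.
func recoverer(next http.Handler) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		defer func() {
			if rec := recover(); rec != nil {
				http.Error(w, "internal server error", http.StatusInternalServerError)
			}
		}()
		next.ServeHTTP(w, r)
	})
}

// requestID generates a random X-Request-ID when the client sent none
// and echoes the ID on the response.
func requestID(next http.Handler) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		id := r.Header.Get("X-Request-ID")
		if id == "" {
			buf := make([]byte, 8)
			if _, err := rand.Read(buf); err == nil {
				id = hex.EncodeToString(buf)
			}
			r.Header.Set("X-Request-ID", id)
		}
		w.Header().Set("X-Request-ID", id)
		next.ServeHTTP(w, r)
	})
}

// serve runs one request through recoverer -> requestID -> handler and
// returns the status code and the X-Request-ID response header.
func serve(path, incomingID string) (int, string) {
	final := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if r.URL.Path == "/panic" {
			panic("boom")
		}
		w.WriteHeader(http.StatusOK)
	})
	h := Chain(final, recoverer, requestID)

	req := httptest.NewRequest(http.MethodGet, path, nil)
	if incomingID != "" {
		req.Header.Set("X-Request-ID", incomingID)
	}
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, req)
	return rec.Code, rec.Header().Get("X-Request-ID")
}

func main() {
	fmt.Println(serve("/", "abc"))      // 200 abc
	fmt.Println(serve("/panic", "xyz")) // 500 xyz
}
```

`Chain` makes the first middleware the outermost wrapper. Listing recovery first therefore lets it catch panics raised anywhere behind it.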

MCP Integration (AI-Powered Management)

OpenLoadBalancer includes a built-in Model Context Protocol (MCP) server that enables AI agents (Claude, GPT, Copilot) to monitor, diagnose, and manage the load balancer.

Transport

- SSE (Server-Sent Events): `GET /sse` for streaming and `POST /message` for commands (MCP spec compliant)
- HTTP POST: `POST /mcp` for simple request/response (backwards compatible)
- Stdio: line-delimited JSON-RPC over stdin/stdout for local CLI tools
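With the stdio transport, each line on stdin carries one JSON-RPC 2.0 message, and each reply goes out as one line on stdout. The minimal Go sketch below shows that framing. It handles only a `ping` method. Unparseable lines get the standard JSON-RPC "Parse error" (-32700), and unknown methods get "Method not found" (-32601). The function names are hypothetical and not taken from OLB.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"io"
	"strings"
)

// request is the JSON-RPC 2.0 request envelope (params unused here).
type request struct {
	JSONRPC string          `json:"jsonrpc"`
	ID      json.RawMessage `json:"id"`
	Method  string          `json:"method"`
	Params  json.RawMessage `json:"params"`
}

type rpcError struct {
	Code    int    `json:"code"`
	Message string `json:"message"`
}

type response struct {
	JSONRPC string          `json:"jsonrpc"`
	ID      json.RawMessage `json:"id"`
	Result  any             `json:"result,omitempty"`
	Error   *rpcError       `json:"error,omitempty"`
}

// serveLines reads one message per line from in and writes one reply per
// line to out. Messages without an id are notifications and get no reply.
func serveLines(in io.Reader, out io.Writer) error {
	sc := bufio.NewScanner(in)
	sc.Buffer(make([]byte, 0, 64*1024), 1<<20) // allow lines up to 1 MiB
	enc := json.NewEncoder(out)                 // Encode appends '\n'
	for sc.Scan() {
		line := strings.TrimSpace(sc.Text())
		if line == "" {
			continue
		}
		var req request
		if err := json.Unmarshal([]byte(line), &req); err != nil {
			parseErr := response{JSONRPC: "2.0", ID: json.RawMessage("null"),
				Error: &rpcError{Code: -32700, Message: "Parse error"}}
			if err := enc.Encode(parseErr); err != nil {
				return err
			}
			continue
		}
		if len(req.ID) == 0 {
			continue // notification: no reply
		}
		resp := response{JSONRPC: "2.0", ID: req.ID}
		switch req.Method {
		case "ping":
			resp.Result = struct{}{}
		default:
			resp.Error = &rpcError{Code: -32601, Message: "Method not found"}
		}
		if err := enc.Encode(resp); err != nil {
			return err
		}
	}
	return sc.Err()
}

// handle runs serveLines over an in-memory transcript.
func handle(input string) string {
	var out strings.Builder
	if err := serveLines(strings.NewReader(input), &out); err != nil {
		return "error: " + err.Error()
	}
	return out.String()
}

func main() {
	// A real stdio server would call serveLines(os.Stdin, os.Stdout).
	in := `{"jsonrpc":"2.0","id":1,"method":"ping"}` + "\n" +
		`{"jsonrpc":"2.0","method":"notifications/initialized"}` + "\n" +
		`{"jsonrpc":"2.0","id":2,"method":"nope"}` + "\n"
	fmt.Print(handle(in))
}
```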

Authentication

admin:
  mcp_address: ":8082"
  mcp_token: "your-secret-token"  # Bearer token auth
  mcp_audit: true                 # Log all tool calls
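Setting `mcp_token` turns on bearer-token authentication. One plausible way to enforce it in Go, sketched here under that assumption, is a wrapper that compares the `Authorization` header against the configured token in constant time. `requireBearer` and `statusFor` are hypothetical names, not OLB's API.

```go
package main

import (
	"crypto/subtle"
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// requireBearer lets a request through only if its Authorization header is
// "Bearer <token>". subtle.ConstantTimeCompare keeps the comparison time
// independent of which bytes match (the token length can still leak).
// An empty configured token rejects every request (fail closed).
func requireBearer(token string, next http.Handler) http.Handler {
	want := []byte(token)
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		got, ok := strings.CutPrefix(r.Header.Get("Authorization"), "Bearer ")
		if len(want) == 0 || !ok || subtle.ConstantTimeCompare([]byte(got), want) != 1 {
			w.Header().Set("WWW-Authenticate", "Bearer")
			http.Error(w, "unauthorized", http.StatusUnauthorized)
			return
		}
		next.ServeHTTP(w, r)
	})
}

// statusFor sends one request with the given Authorization header through a
// handler guarded by the example token and returns the status code.
func statusFor(authorization string) int {
	inner := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		w.WriteHeader(http.StatusOK)
	})
	h := requireBearer("your-secret-token", inner)

	req := httptest.NewRequest(http.MethodPost, "/mcp", nil)
	if authorization != "" {
		req.Header.Set("Authorization", authorization)
	}
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, req)
	return rec.Code
}

func main() {
	fmt.Println(statusFor("Bearer your-secret-token")) // 200
	fmt.Println(statusFor("Bearer wrong-token"))       // 401
	fmt.Println(statusFor(""))                         // 401
}
```

For brevity, the sketch matches the scheme name `Bearer` case-sensitively. RFC 7235 treats authentication scheme names as case-insensitive, so a production check would normalize the scheme before comparing.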

17 MCP Tools

| Category | Tools |
|---|---|
| Metrics | olb_query_metrics: RPS, latency, error rates, connections |
| Backends | olb_list_backends, olb_modify_backend: add, remove, drain, enable/disable |
| Routes | olb_modify_route: add, update, remove routes with traffic splitting |
| Diagnostics | olb_diagnose: automated error/latency/capacity/health analysis |
| Config | olb_get_config, olb_get_logs, olb_cluster_status |
| WAF | waf_status, waf_add_whitelist, waf_add_blacklist, waf_remove_whitelist, waf_remove_blacklist, waf_list_rules, waf_get_stats, waf_get_top_blocked_ips, waf_get_attack_timeline |
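MCP clients invoke these tools with a JSON-RPC `tools/call` request, sent after the usual MCP `initialize` handshake. Over the `POST /mcp` transport, for example, the bearer token travels in the `Authorization` header. The sketch below only builds the request body. This README does not document the arguments each OLB tool accepts, so the example sends an empty `arguments` object. Check real argument names against the input schema each tool advertises via `tools/list`.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// toolCall is the JSON-RPC 2.0 envelope for an MCP "tools/call" request.
type toolCall struct {
	JSONRPC string         `json:"jsonrpc"`
	ID      int            `json:"id"`
	Method  string         `json:"method"`
	Params  toolCallParams `json:"params"`
}

type toolCallParams struct {
	Name      string         `json:"name"`
	Arguments map[string]any `json:"arguments"`
}

// newToolCall builds a tools/call body for the named tool. Argument names
// are caller-supplied; none are assumed for OLB's tools here.
func newToolCall(id int, tool string, args map[string]any) ([]byte, error) {
	if args == nil {
		args = map[string]any{} // send {} rather than null
	}
	return json.Marshal(toolCall{
		JSONRPC: "2.0",
		ID:      id,
		Method:  "tools/call",
		Params:  toolCallParams{Name: tool, Arguments: args},
	})
}

func main() {
	body, err := newToolCall(1, "olb_list_backends", nil)
	if err != nil {
		panic(err)
	}
	fmt.Println(string(body))
	// {"jsonrpc":"2.0","id":1,"method":"tools/call","params":{"name":"olb_list_backends","arguments":{}}}
}
```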

Connect from Claude Desktop

{
  "mcpServers": {
    "olb": {
      "url": "http://localhost:8082/sse",
      "headers": {
        "Authorization": "Bearer your-secret-token"
      }
    }
  }
}

Performance

Benchmarked on AMD Ryzen 9 9950X3D:

| Metric | Result |
|---|---|
| Peak RPS | 15,480 (10 concurrent, round_robin) |
| Proxy overhead | 137µs (direct: 87µs → proxied: 223µs) |
| RoundRobin.Next | 3.5 ns/op, 0 allocs |
| Middleware overhead | < 3% (full stack vs none) |
| WAF overhead (6-layer) | ~35µs per request, < 3% at proxy scale |
| Binary size | 9 MB |
| P99 latency (50 concurrent) | 22ms |
| Success rate | 100% across all tests |
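The `RoundRobin.Next` row reports zero allocations per call. A counter-based round robin can achieve that because choosing the next backend needs only an atomic increment and a modulo. The sketch below is hypothetical and illustrates the idea, not OLB's implementation.

```go
package main

import (
	"fmt"
	"sync/atomic"
)

// RoundRobin cycles through a fixed backend list. Next needs no lock and
// allocates nothing: it is one atomic add plus a slice index.
type RoundRobin struct {
	backends []string
	next     atomic.Uint64
}

func NewRoundRobin(backends []string) *RoundRobin {
	return &RoundRobin{backends: backends}
}

// Next returns the following backend in order, or "" for an empty pool.
func (rr *RoundRobin) Next() string {
	n := uint64(len(rr.backends))
	if n == 0 {
		return ""
	}
	i := rr.next.Add(1) - 1 // the first call yields index 0
	return rr.backends[i%n]
}

func main() {
	rr := NewRoundRobin([]string{"10.0.1.10:8080", "10.0.1.11:8080"})
	for i := 0; i < 4; i++ {
		fmt.Println(rr.Next()) // alternates between the two backends
	}
}
```

To check an allocation claim for a sketch like this, write a `BenchmarkNext(b *testing.B)` function in a `_test.go` file and run `go test -bench . -benchmem`, which reports `allocs/op`.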

| Algorithm | RPS | Avg Latency | Distribution (3 backends) |
|---|---|---|---|
| random | 12,913 | 3.5ms | 32/34/34% |
| maglev | 11,597 | 3.8ms | 68/2/30% |
| ip_hash | 11,062 | 4.0ms | 75/12/13% |
| power_of_two | 10,708 | 4.0ms | 34/33/33% |
| least_connections | 10,119 | 4.4ms | 33/33/34% |
| consistent_hash | 8,897 | 4.6ms | 0/0/100% |
| weighted_rr | 8,042 | 5.6ms | 33/33/34% |
| round_robin | 7,320 | 6.3ms | 35/33/32% |

See docs/benchmark-report.md for the complete report including concurrency scaling, backend latency impact, and middleware overhead measurements.
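The `power_of_two` algorithm is commonly implemented as "power of two choices": sample two backends at random and send the request to the less loaded one. The sketch below assumes that load means active connections, although OLB may use a different signal. `pickP2C` and `busiestPicked` are hypothetical names.

```go
package main

import (
	"fmt"
	"math/rand"
)

// pickP2C samples two distinct backends uniformly at random and returns the
// index of the one with fewer active connections (the first sample wins
// ties). It returns -1 for an empty pool and 0 for a single backend.
func pickP2C(active []int, rng *rand.Rand) int {
	switch len(active) {
	case 0:
		return -1
	case 1:
		return 0
	}
	i := rng.Intn(len(active))
	j := rng.Intn(len(active) - 1)
	if j >= i {
		j++ // skip i so the second sample is a different backend
	}
	if active[j] < active[i] {
		return j
	}
	return i
}

// busiestPicked reports whether pickP2C ever chose the uniquely most-loaded
// backend over `draws` attempts. It never should: whenever that backend is
// sampled, the other sample has fewer connections and wins.
func busiestPicked(active []int, draws int, seed int64) bool {
	rng := rand.New(rand.NewSource(seed))
	busiest := 0
	for i, c := range active {
		if c > active[busiest] {
			busiest = i
		}
	}
	for k := 0; k < draws; k++ {
		if pickP2C(active, rng) == busiest {
			return true
		}
	}
	return false
}

func main() {
	// Toy run: connections never close, so each pick adds one to a backend.
	rng := rand.New(rand.NewSource(42))
	active := make([]int, 3)
	for n := 0; n < 9000; n++ {
		active[pickP2C(active, rng)]++
	}
	fmt.Println(active) // three nearly equal counts
}
```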

E2E Verified

56 end-to-end tests exercise these features in real proxy scenarios:

| Category | Verified |
|---|---|
| Proxy | HTTP, HTTPS/TLS, WebSocket, SSE, TCP, UDP |
| Algorithms | RR, WRR, LC, IPHash, CH, Maglev, P2C, Random, RingHash |
| Middleware | Rate limit (429), CORS, gzip (98% reduction), WAF 6-layer (SQLi/XSS/CMDi/path traversal → 403, rate limit → 429, monitor mode, security headers, bot detection, IP ACL, data masking), IP filter, circuit breaker, cache (HIT/MISS), headers, retry |
| Operations | Health check (down/recovery), config reload, weighted distribution, session affinity, graceful failover (0 downtime) |
| Infra | Admin API, Web UI, Prometheus, MCP server, multiple listeners |
| Performance | 15K RPS, 137µs proxy overhead, 100% success rate |
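The rate-limit check in the table above answers with HTTP 429 once a client exceeds its budget. A token bucket is a common way to enforce a per-second limit, such as the `requests_per_second` setting in the configuration example, while tolerating short bursts. The sketch below takes the clock as an argument so it can be tested. It reflects an assumption about how such a limit could work, not OLB's implementation, and every name in it is hypothetical.

```go
package main

import (
	"fmt"
	"math"
	"sync"
	"time"
)

// TokenBucket permits bursts of up to capacity requests and refills at
// rate tokens per second. A request that finds no token would get HTTP 429.
type TokenBucket struct {
	mu       sync.Mutex
	rate     float64   // tokens added per second
	capacity float64   // maximum stored tokens (burst size)
	tokens   float64   // tokens currently available
	last     time.Time // time of the previous refill
}

// NewTokenBucket starts full, so an initial burst of capacity is allowed.
func NewTokenBucket(rate, capacity float64, now time.Time) *TokenBucket {
	return &TokenBucket{rate: rate, capacity: capacity, tokens: capacity, last: now}
}

// Allow refills for the time elapsed since the last refill, then spends one
// token if one is available.
func (b *TokenBucket) Allow(now time.Time) bool {
	b.mu.Lock()
	defer b.mu.Unlock()
	if elapsed := now.Sub(b.last).Seconds(); elapsed > 0 {
		b.tokens = math.Min(b.capacity, b.tokens+elapsed*b.rate)
		b.last = now
	}
	if b.tokens >= 1 {
		b.tokens--
		return true
	}
	return false
}

// simulate fires n requests at the same instant, then one more waitMs
// milliseconds later. It reports how many of the first n were allowed and
// whether the late request was allowed.
func simulate(rate, capacity float64, n int, waitMs int64) (allowed int, lateAllowed bool) {
	t0 := time.Unix(0, 0)
	b := NewTokenBucket(rate, capacity, t0)
	for i := 0; i < n; i++ {
		if b.Allow(t0) {
			allowed++
		}
	}
	lateAllowed = b.Allow(t0.Add(time.Duration(waitMs) * time.Millisecond))
	return allowed, lateAllowed
}

func main() {
	// 10 req/s with a burst of 5: of 8 simultaneous requests, 5 pass and 3 would get 429.
	allowed, late := simulate(10, 5, 8, 200)
	fmt.Println(allowed, late) // 5 true
}
```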

Algorithms

| Algorithm | Config Name | Use Case |
|---|---|---|
| Round Robin | round_robin | Default, equal backends |
| Weighted Round Robin | weighted_round_robin | Unequal backend capacity |
| Least Connections | least_connections | Long-lived connections |
| Least Response Time | least_response_time | Latency-sensitive |
| IP Hash | ip_hash | Session affinity by IP |
| Consistent Hash | consistent_hash | Cache locality |
| Maglev | maglev | Google-style hashing |
| Ring Hash | ring_hash | Consistent hashing with virtual nodes |
| Power of Two | power_of_two | Balanced random |
| Random | random | Simple, no state |
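`consistent_hash` and `ring_hash` both place backends on a hash ring, so adding or removing a backend remaps only the keys that backend owned. A minimal ring with virtual nodes looks roughly like this sketch. It uses CRC-32 for brevity, whereas Ketama-style rings usually use MD5, and every name in it is hypothetical.

```go
package main

import (
	"fmt"
	"hash/crc32"
	"sort"
	"strconv"
)

// Ring is a consistent-hash ring with virtual nodes: each backend is placed
// on the ring `replicas` times so that keys spread more evenly.
type Ring struct {
	hashes []uint32          // sorted virtual-node positions
	owner  map[uint32]string // position -> backend
}

func NewRing(backends []string, replicas int) *Ring {
	r := &Ring{owner: make(map[uint32]string)}
	for _, b := range backends {
		for i := 0; i < replicas; i++ {
			h := crc32.ChecksumIEEE([]byte(b + "#" + strconv.Itoa(i)))
			if _, taken := r.owner[h]; taken {
				continue // rare collision: keep the first owner
			}
			r.owner[h] = b
			r.hashes = append(r.hashes, h)
		}
	}
	sort.Slice(r.hashes, func(i, j int) bool { return r.hashes[i] < r.hashes[j] })
	return r
}

// Get returns the owner of key: the first virtual node at or after the
// key's hash, wrapping around to the start of the ring.
func (r *Ring) Get(key string) string {
	if len(r.hashes) == 0 {
		return ""
	}
	h := crc32.ChecksumIEEE([]byte(key))
	i := sort.Search(len(r.hashes), func(i int) bool { return r.hashes[i] >= h })
	if i == len(r.hashes) {
		i = 0
	}
	return r.owner[r.hashes[i]]
}

// movedKeys removes one backend and counts the keys whose owner changed,
// plus how many of those were not owned by the removed backend (should be 0).
func movedKeys(backends []string, removed string, n int) (moved, collateral int) {
	var rest []string
	for _, b := range backends {
		if b != removed {
			rest = append(rest, b)
		}
	}
	before, after := NewRing(backends, 100), NewRing(rest, 100)
	for i := 0; i < n; i++ {
		key := "client-" + strconv.Itoa(i)
		was, now := before.Get(key), after.Get(key)
		if was != now {
			moved++
			if was != removed {
				collateral++
			}
		}
	}
	return moved, collateral
}

func main() {
	pool := []string{"10.0.2.10:8080", "10.0.2.11:8080", "10.0.2.12:8080"}
	moved, collateral := movedKeys(pool, "10.0.2.11:8080", 1000)
	fmt.Println("moved:", moved, "collateral:", collateral) // collateral is 0
}
```

`movedKeys` checks the property that makes the technique useful: when one backend leaves, only the keys it owned change owner.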

Configuration

Supports YAML, JSON, TOML, and HCL with ${ENV_VAR} substitution.

admin:
  address: "127.0.0.1:8081"

middleware:
  rate_limit:
    enabled: true
    requests_per_second: 1000
  cors:
    enabled: true
    allowed_origins: ["*"]
  compression:
    enabled: true
  waf:
    enabled: true
    mode: enforce
    detection:
      enabled: true
      threshold: {block: 50, log: 25}
    bot_detection: {enabled: true, mode: monitor}
    response:
      security_headers: {enabled: true}

listeners:
  - name: http
    address: ":8080"
    routes:
      - path: /api
        pool: api-pool
      - path: /
        pool: web-pool

pools:
  - name: web-pool
    algorithm: round_robin
    backends:
      - address: "10.0.1.10:8080"
      - address: "10.0.1.11:8080"
    health_check:
      type: http
      path: /health
      interval: 5s
  - name: api-pool
    algorithm: least_connections
    backends:
      - address: "10.0.2.10:8080"
        weight: 3
      - address: "10.0.2.11:8080"
        weight: 2

See docs/configuration.md for all options.
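The `${ENV_VAR}` substitution mentioned above lets secrets such as `mcp_token` stay out of the config file. Below is a small Go sketch of that preprocessing step. It assumes that only the braced `${NAME}` form is expanded and that an unset variable is an error rather than an empty string. OLB's real behaviour may differ, and `OLB_MCP_TOKEN` is a made-up variable name.

```go
package main

import (
	"fmt"
	"os"
	"regexp"
)

// envRef matches ${NAME} where NAME is a conventional environment variable
// name. A bare $NAME is deliberately left untouched.
var envRef = regexp.MustCompile(`\$\{([A-Za-z_][A-Za-z0-9_]*)\}`)

// expandConfig replaces every ${NAME} in raw with the value from lookup and
// reports the first unset variable instead of silently substituting "".
func expandConfig(raw string, lookup func(string) (string, bool)) (string, error) {
	var missing string
	out := envRef.ReplaceAllStringFunc(raw, func(ref string) string {
		name := envRef.FindStringSubmatch(ref)[1]
		v, ok := lookup(name)
		if !ok && missing == "" {
			missing = name
		}
		return v
	})
	if missing != "" {
		return "", fmt.Errorf("config references unset variable %s", missing)
	}
	return out, nil
}

func main() {
	raw := "admin:\n  mcp_token: \"${OLB_MCP_TOKEN}\"\n"
	os.Setenv("OLB_MCP_TOKEN", "s3cret") // demo only; normally set by the environment
	cfg, err := expandConfig(raw, os.LookupEnv)
	if err != nil {
		fmt.Println(err)
		return
	}
	fmt.Print(cfg)
}
```

Expanding only the braced form avoids mangling values that legitimately contain a `$`. Failing on unset variables surfaces a typo at startup instead of leaving an empty token in place.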

CLI

olb start --config olb.yaml # Start proxy

olb stop # Graceful shutdown

olb reload # Hot-reload config

olb status # Server status

olb top # Live TUI dashboard

olb backend list # List backends

olb backend drain web-pool 10.0.1.10:8080

olb health show # Health check status

olb config validate olb.yaml # Validate config

olb cluster status # Cluster info

Architecture

Clients (HTTP/S, WS, gRPC, TCP, UDP) → OpenLoadBalancer:

- Request path: Listeners (L4/L7) → Middleware (16 types) → Router (trie) → Balancer (14 algos) → Backends
- Built-in subsystems: WAF (6 layers), TLS, Cluster, MCP, Web UI
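The router stage is described as a trie. If routes are path prefixes matched on whole segments, so that `/api` matches `/api/users` but not `/apiv2`, a segment trie with longest-prefix lookup could look like the hypothetical sketch below. The demo uses the `/api` and `/` routes from the configuration example.

```go
package main

import (
	"fmt"
	"strings"
)

// node is one path segment in the routing trie.
type node struct {
	children map[string]*node
	pool     string // non-empty if a route ends at this node
}

type Router struct{ root *node }

func NewRouter() *Router { return &Router{root: &node{children: map[string]*node{}}} }

// segments splits "/api/v1/" into ["api", "v1"]; "/" yields no segments.
func segments(path string) []string {
	var segs []string
	for _, s := range strings.Split(path, "/") {
		if s != "" {
			segs = append(segs, s)
		}
	}
	return segs
}

// Add registers a path prefix that routes to pool.
func (r *Router) Add(prefix, pool string) {
	n := r.root
	for _, s := range segments(prefix) {
		child, ok := n.children[s]
		if !ok {
			child = &node{children: map[string]*node{}}
			n.children[s] = child
		}
		n = child
	}
	n.pool = pool
}

// Match walks the trie one segment at a time and returns the pool of the
// longest registered prefix, or "" if nothing matches.
func (r *Router) Match(path string) string {
	n, best := r.root, r.root.pool
	for _, s := range segments(path) {
		child, ok := n.children[s]
		if !ok {
			break
		}
		n = child
		if n.pool != "" {
			best = n.pool
		}
	}
	return best
}

func main() {
	r := NewRouter()
	r.Add("/", "web-pool")
	r.Add("/api", "api-pool")
	for _, p := range []string{"/api/users", "/apiv2", "/index.html"} {
		fmt.Println(p, "->", r.Match(p))
	}
}
```

Lookup cost grows with the number of path segments, not the number of routes, which is the usual reason to use a trie over a linear scan of prefixes.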

Documentation

| Guide | Description |
|---|---|
| Getting Started | 5-minute quick start |
| Configuration | All config options |
| Algorithms | Algorithm details |
| API Reference | Admin REST API |
| Clustering | Multi-node setup |
| WAF | Web Application Firewall (6-layer defense) |
| MCP / AI | AI integration |
| Benchmarks | Performance data |
| Specification | Technical spec |

Contributing

See CONTRIBUTING.md. Key rules:

- Zero external deps — stdlib only

- Tests required — 90% coverage, don't lower it

- All features wired — no dead code in engine.go

- gofmt + go vet — CI enforced

License

Apache 2.0 — LICENSE
