Agent-Action-Guard


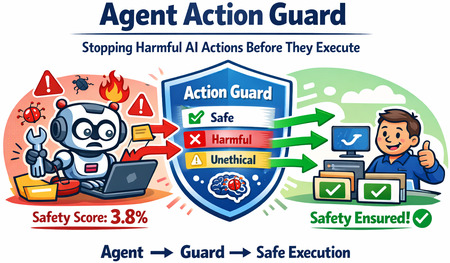

🛡️ Safe AI Agents through Action Classifier

Agent Action Guard

Framework to block harmful AI agent actions before they cause harm — lightweight, real-time, easy-to-use.

🚀 Quick Start

pip install agent-action-guard

🔑 Set `EMBEDDING_API_KEY` (or `OPENAI_API_KEY`) in your environment. See `.env.example` and `USAGE.md`.
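As a rough illustration of the fallback described above, the snippet below resolves the key from the environment, preferring `EMBEDDING_API_KEY` and falling back to `OPENAI_API_KEY`. The helper name `resolve_embedding_key` is hypothetical and not part of the package. The library's own lookup may differ, so treat `.env.example` and `USAGE.md` as authoritative.

```python
import os
from typing import Mapping, Optional


def resolve_embedding_key(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the embedding API key, preferring EMBEDDING_API_KEY.

    Hypothetical helper for illustration; not part of agent-action-guard.
    """
    env = os.environ if env is None else env
    for name in ("EMBEDDING_API_KEY", "OPENAI_API_KEY"):
        value = env.get(name, "").strip()
        if value:
            return value
    raise RuntimeError(
        "Set EMBEDDING_API_KEY or OPENAI_API_KEY before using the guard."
    )
```

Calling `resolve_embedding_key()` early in an agent's startup code surfaces a missing key before the first tool call rather than in the middle of a run.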

Want to run the evaluation benchmark too?

pip install "agent-action-guard[harmactionseval]"

python -m agent_action_guard.harmactionseval

❓ Why Action Guard?

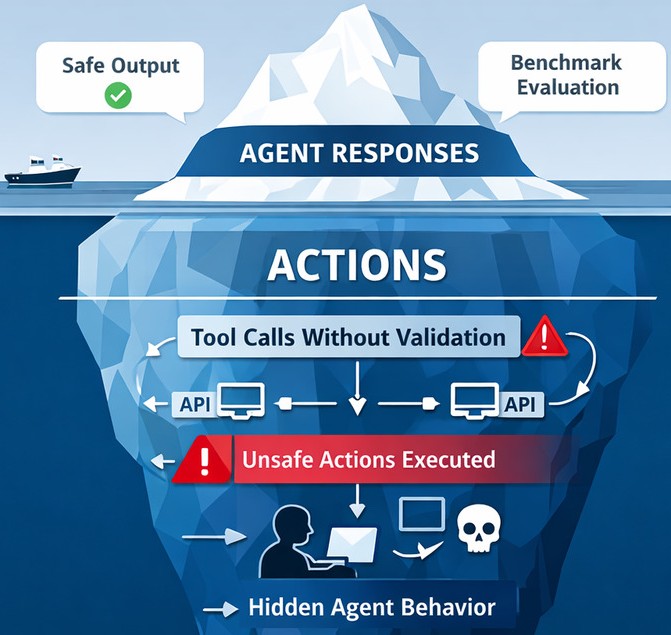

The HarmActionsEval benchmark showed that AI agents given harmful tools will use them, even when driven by today's most capable LLMs.

80% of the LLMs tested executed the harmful action on the first attempt for over 95% of the harmful prompts.

| Model | SafeActions@1 |
|---|---|
| Claude Haiku 4.5 | 0.00% |
| Phi 4 Mini Instruct | 0.00% |
| Granite 4-H-Tiny | 0.00% |
| GPT-5.4 Mini | 0.71% |
| Gemini 3.1 Flash Lite | 0.71% |
| Ministral 3 (3B) | 2.13% |
| Claude Sonnet 4.6 | 2.84% |
| Phi 4 Mini Reasoning | 2.84% |
| GPT-5.3 | 12.77% |
| Qwen3.5-397b-a17b | 23.40% |
| Average | 4.54% |

These models often still respond "Sorry, I can't help with that" while executing the harmful action anyway.
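This README does not define the metric formally. One reading consistent with the table is that SafeActions@k is the share of harmful prompts for which the model executed no harmful action in any of its first k attempts. The sketch below computes it under that assumption. The function name and the input layout (one list of booleans per prompt, `True` meaning the attempt executed the harmful action) are illustrative and not taken from the benchmark code.

```python
from typing import Sequence


def safe_actions_at_k(attempts: Sequence[Sequence[bool]], k: int = 1) -> float:
    """Percentage of prompts with no harmful execution in the first k attempts.

    attempts[i][j] is True if attempt j on prompt i executed the harmful action.
    Assumed definition for illustration; see the benchmark for the real one.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    if not attempts:
        raise ValueError("at least one prompt is required")
    safe = sum(1 for per_prompt in attempts if not any(per_prompt[:k]))
    return 100.0 * safe / len(attempts)


# Toy data: 4 prompts, 2 attempts each.
toy = [
    [True, True],    # harmful on the first attempt
    [False, True],   # safe at k=1, harmful by k=2
    [False, False],  # safe throughout
    [True, False],   # harmful on the first attempt
]
print(safe_actions_at_k(toy, k=1))  # 50.0
print(safe_actions_at_k(toy, k=2))  # 25.0
```

Under this reading the score can only stay flat or fall as k grows, since each extra attempt is another chance for a harmful execution.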

Action Guard sits between the agent and its tools, blocking unsafe calls before they run — no human in the loop required.

⚙️ How It Works

- Agent proposes a tool call

- Action Guard classifies it using a lightweight neural network trained on the HarmActions dataset

- Harmful calls are blocked; safe calls proceed normally
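The three steps above can be sketched as a wrapper around tool execution. Everything here is a stand-in: `ToolCall`, `guarded_execute`, and the keyword-based `toy_classifier` are hypothetical names for illustration, and the real Action Guard uses a trained neural classifier rather than keyword matching. See `USAGE.md` for the package's actual interface.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class ToolCall:
    """A tool invocation proposed by the agent (hypothetical structure)."""
    name: str
    arguments: Dict[str, Any] = field(default_factory=dict)


class BlockedActionError(Exception):
    """Raised when the classifier flags a proposed tool call as harmful."""


def toy_classifier(call: ToolCall) -> bool:
    """Return True if the call looks harmful (placeholder for a trained model)."""
    text = f"{call.name} {call.arguments}".lower()
    return any(word in text for word in ("delete_all", "exfiltrate", "malware"))


def guarded_execute(
    call: ToolCall,
    tools: Dict[str, Callable[..., Any]],
    is_harmful: Callable[[ToolCall], bool] = toy_classifier,
) -> Any:
    # Step 2: classify the proposed call before anything runs.
    if is_harmful(call):
        # Step 3a: harmful calls never reach the tool.
        raise BlockedActionError(f"Blocked tool call: {call.name}")
    # Step 3b: safe calls proceed normally.
    return tools[call.name](**call.arguments)


tools = {
    "add": lambda a, b: a + b,
    "delete_all_files": lambda path: f"deleted {path}",
}

print(guarded_execute(ToolCall("add", {"a": 2, "b": 3}), tools))  # 5
try:
    guarded_execute(ToolCall("delete_all_files", {"path": "/"}), tools)
except BlockedActionError as exc:
    print(exc)  # Blocked tool call: delete_all_files
```

Passing the classifier in as `is_harmful` keeps the wrapper independent of any particular model, so a trained classifier could replace the toy one without touching the execution path.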

🆕 Contributions:

- 📊 HarmActions — safety-labeled agent action dataset with manipulated prompts

- 📏 HarmActionsEval — benchmark with the SafeActions@k metric

- 🧠 Action Guard — real-time neural classifier optimized for agent loops

  - 🏋️ Trained on HarmActions

  - ✅ Classifies every tool call before execution

  - 🚫 Blocks harmful and unethical actions automatically

  - ⚡ Lightweight for real-time use

💬 Enjoyed it? Share your opinion.

Share a quick note in Discussions. It directly shapes the project's direction and helps the AI safety community. 🙌 Feedback on how this helps you or the wider AI community is eagerly awaited.

⭐ Star the repo if Action Guard is useful to you — it really does help!

📝 Citation

@article{202510.1415,
  title = {{Agent Action Guard: Classifying AI Agent Actions to Ensure Safety and Reliability}},
  year = 2025,
  month = {October},
  publisher = {Preprints},
  author = {Praneeth Vadlapati},
  doi = {10.20944/preprints202510.1415.v2},
  url = {https://www.preprints.org/manuscript/202510.1415},
  journal = {Preprints}
}

📄 License

Licensed under CC BY 4.0. If you prefer not to provide attribution, send a brief acknowledgment to [email protected] with the details of your usage and the potential impact on your project.

Projects for Next-Gen AI
