Dive-into-Claude-Code

Health Pass

- License — License: NOASSERTION

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 44 GitHub stars

Code Warn

- Code scan incomplete — No supported source files were scanned during light audit

Permissions Pass

- Permissions — No dangerous permissions requested

This project is a comprehensive research repository and architectural analysis paper dissecting Claude Code's source code. It provides design guidelines and community insights for developers building AI agent systems.

Security Assessment

The risk level is Low. This repository is a documentation and educational resource, not an executable software tool. The automated code scan did not find any supported source files to analyze, but this is expected for a paper-based repository. It does not request dangerous permissions, execute shell commands, access sensitive data, or contain hardcoded secrets. The project does discuss 4 CVEs and security bypasses within Claude Code, but these are research observations, not functional vulnerabilities in the repo itself.

Quality Assessment

The project is actively maintained, with its last push occurring today. It has a solid baseline of community trust with 44 GitHub stars. While the automated license check returned a generic "NOASSERTION" (likely due to how the repository files are structured), the README clearly indicates that the content is shared under a Creative Commons license (CC-BY-NC-SA-4.0).

Verdict

Safe to use (as a reference and educational resource).

A Systematic Analysis and Discussion of Claude Code for Designing Today's and Future AI Agent Systems

Dive into Claude Code

A comprehensive source-level architectural analysis of Claude Code (v2.1.88, ~1,900 TypeScript files, ~512K lines of code), combined with a curated collection of community analyses, a design-space guide for agent builders, and cross-system comparisons.

[!TIP]

TL;DR -- Only 1.6% of Claude Code's codebase is AI decision logic. The other 98.4% is deterministic infrastructure -- permission gates, context management, tool routing, and recovery logic. The agent loop is a simple while-loop; the real engineering complexity lives in the systems around it. This repo dissects that architecture and distills it into actionable design guidance for anyone building AI agent systems.

Table of Contents

From Our Paper

- 🌟 Key Highlights

- 📖 Reading Guide

- 🏗️ Architecture at a Glance

- 🧭 Values and Design Principles

- 🔄 The Agentic Query Loop

- 🛡️ Safety and Permissions

- 🧩 Extensibility

- 🧠 Context and Memory

- 👥 Subagent Delegation

- 💾 Session Persistence

Beyond the Paper

- 🛠️ Build Your Own AI Agent: A Design Guide

- 🌐 Community Projects & Research

- 🚀 Other Notable AI Agent Projects

- 🔖 Citation

Key Highlights

- 98.4% Infrastructure, 1.6% AI -- The agent loop is a simple while-loop; the real complexity is permission gates, context management, and recovery logic.

- 5 Values → 13 Principles → Implementation -- Every design choice traces back to human authority, safety, reliability, capability, and adaptability.

- Defense in Depth with Shared Failure Modes -- 7 safety layers, but all share performance constraints. Commands with more than 50 subcommands bypass security analysis.

- 4 CVEs Reveal a Pre-Trust Window -- Extensions execute before the trust dialog appears.

- The Cross-Cutting Harness Resists Reimplementation -- The loop is easy to copy; hooks, classifier, compaction, and isolation are not.

Reading Guide

| If you are a... | Start here | Then read |

|---|---|---|

| Agent Builder | Build Your Own Agent | Architecture Deep Dive |

| Security Researcher | Safety and Permissions | Architecture: Safety Layers |

| Product Manager | Key Highlights | Values and Principles |

| Researcher | Full Paper (arXiv) | Community Resources |

1,884 files · ~512K lines · v2.1.88 · 7 safety layers · 5 compaction stages · 54 tools · 27 hook events · 4 extension mechanisms · 7 permission modes

Architecture at a Glance

Claude Code answers four design questions that every production coding agent must face:

| Question | Claude Code's Answer |

|---|---|

| Where does reasoning live? | Model reasons; harness enforces. ~1.6% AI, 98.4% infrastructure. |

| How many execution engines? | One queryLoop for all interfaces (CLI, SDK, IDE). |

| Default safety posture? | Deny-first: deny > ask > allow. Strictest rule wins. |

| Binding resource constraint? | ~200K (older models) / 1M (Claude 4.6 series) context window. 5 compaction layers before every model call. |

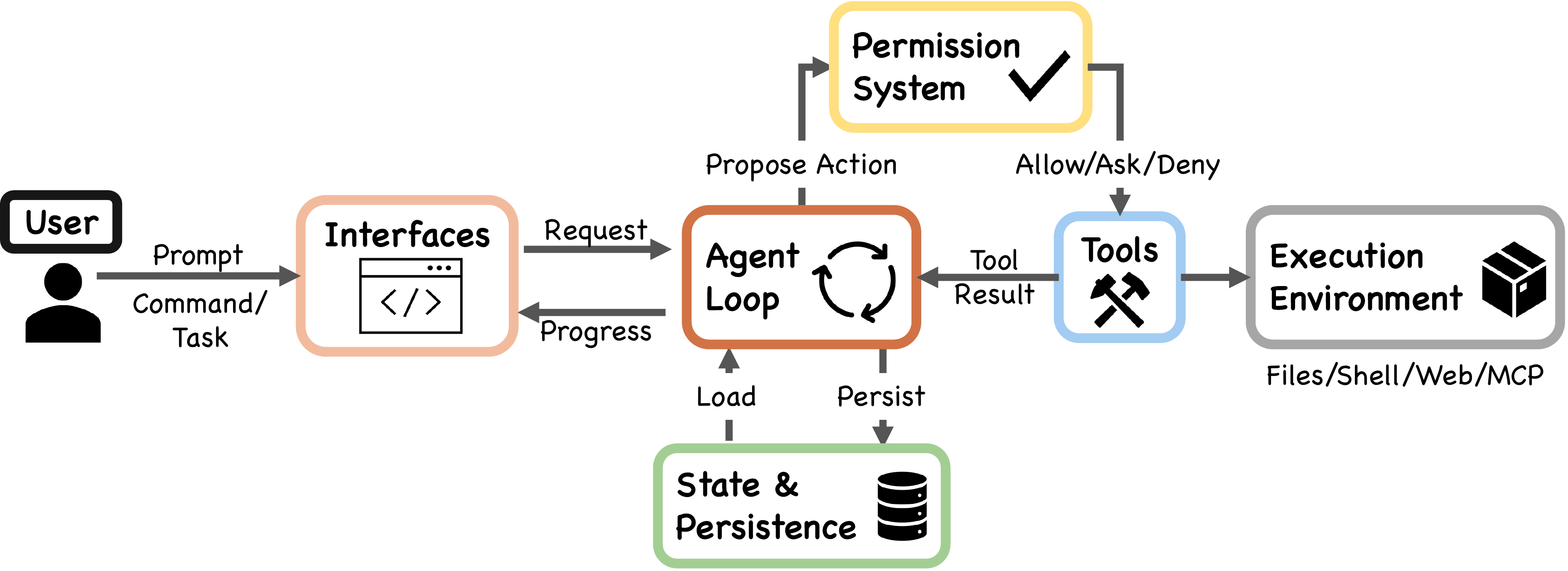

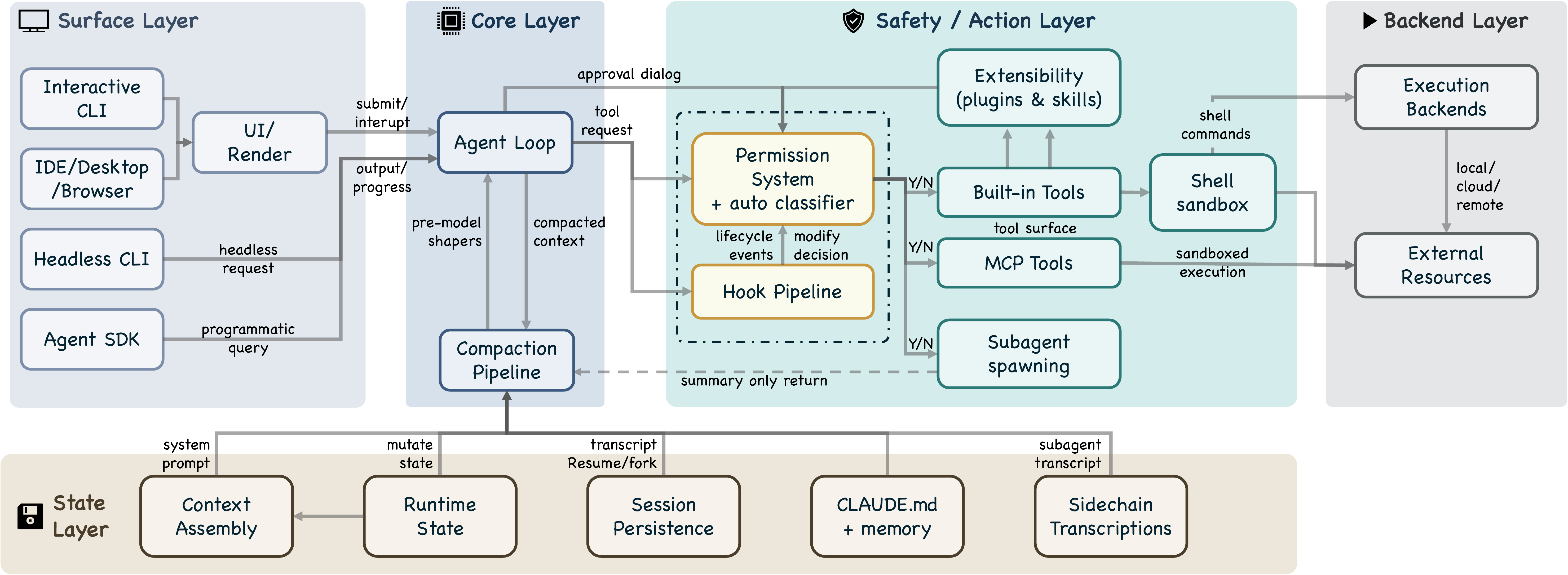

The system decomposes into 7 components (User → Interfaces → Agent Loop → Permission System → Tools → State & Persistence → Execution Environment) across 5 architectural layers.

[!NOTE]

For the full architectural deep dive -- 7 safety layers, 9-step turn pipeline, 5-layer compaction, and more -- see docs/architecture.md.

Values and Design Principles

The architecture traces from 5 human values through 13 design principles to implementation:

| Value | Core Idea |

|---|---|

| Human Decision Authority | Humans retain control via a principal hierarchy. When a 93% prompt-approval rate revealed approval fatigue, the response was to restructure boundaries, not to add more warnings. |

| Safety, Security, Privacy | System protects even when human vigilance lapses. 7 independent safety layers. |

| Reliable Execution | Does what was meant. Gather-act-verify loop. Graceful recovery. |

| Capability Amplification | "A Unix utility, not a product." 98.4% is deterministic infrastructure enabling the model. |

| Contextual Adaptability | CLAUDE.md hierarchy, graduated extensibility, trust trajectories that evolve over time. |

| Principle | Design Question |

|---|---|

| Deny-first with human escalation | Should unrecognized actions be allowed, blocked, or escalated? |

| Graduated trust spectrum | Fixed permission level, or spectrum users traverse over time? |

| Defense in depth | Single safety boundary, or multiple overlapping ones? |

| Externalized programmable policy | Hardcoded policy, or externalized configs with lifecycle hooks? |

| Context as scarce resource | Single-pass truncation or graduated pipeline? |

| Append-only durable state | Mutable state, snapshots, or append-only logs? |

| Minimal scaffolding, maximal harness | Invest in scaffolding or operational infrastructure? |

| Values over rules | Rigid procedures or contextual judgment with deterministic guardrails? |

| Composable multi-mechanism extensibility | One API or layered mechanisms at different costs? |

| Reversibility-weighted risk assessment | Same oversight for all, or lighter for reversible actions? |

| Transparent file-based config and memory | Opaque DB, embeddings, or user-visible files? |

| Isolated subagent boundaries | Shared context/permissions, or isolation? |

| Graceful recovery and resilience | Fail hard, or recover silently? |

The paper also applies a sixth evaluative lens -- long-term capability preservation -- citing evidence that developers in AI-assisted conditions score 17% lower on comprehension tests.

The Agentic Query Loop

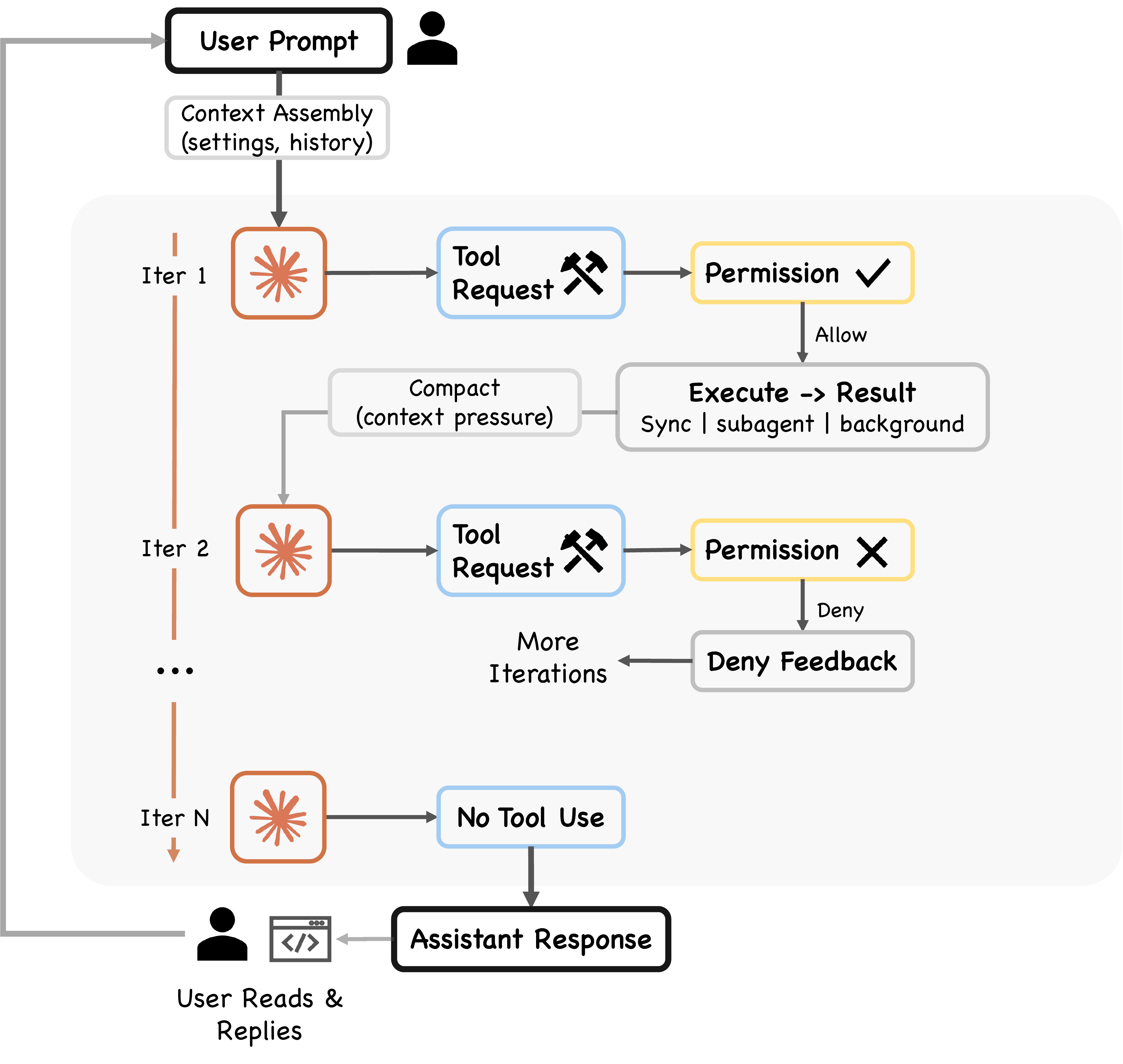

The core is a ReAct-pattern while-loop: assemble context → call model → dispatch tools → check permissions → execute → repeat. Implemented as an AsyncGenerator yielding streaming events.
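The loop above can be sketched as an async generator. This is a minimal illustration in Python, not Claude Code's TypeScript implementation: `query_loop`, the event dictionaries, and the `is_allowed` callback are hypothetical names, and only two of the five stop conditions (no tool use, max turns) are modeled.

```python
import asyncio
from typing import AsyncIterator, Awaitable, Callable

# Hypothetical type: a "model" maps the message history to a reply dict.
Model = Callable[[list[dict]], Awaitable[dict]]


async def query_loop(
    model: Model,
    tools: dict[str, Callable[[dict], str]],
    is_allowed: Callable[[str, dict], bool],
    prompt: str,
    max_turns: int = 8,
) -> AsyncIterator[dict]:
    """Assemble context, call the model, gate and run tools, repeat."""
    messages = [{"role": "user", "content": prompt}]
    for _ in range(max_turns):
        reply = await model(messages)                  # call model
        messages.append({"role": "assistant", "content": reply["text"]})
        yield {"type": "assistant", "text": reply["text"]}
        calls = reply.get("tool_calls", [])
        if not calls:                                  # stop: no tool use
            yield {"type": "stop", "reason": "no_tool_use"}
            return
        for call in calls:                             # dispatch tools
            name, args = call["name"], call["args"]
            if is_allowed(name, args):                 # permission gate
                result = tools[name](args)             # execute
            else:
                result = "denied"
            messages.append({"role": "tool", "name": name, "content": result})
            yield {"type": "tool_result", "name": name, "result": result}
    yield {"type": "stop", "reason": "max_turns"}      # stop: max turns
```

A caller consumes the events with `async for event in query_loop(...)`, which is what lets a UI render partial progress while the loop is still running.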

Before every model call, five compaction shapers run sequentially (cheapest first): Budget Reduction → Snip → Microcompact → Context Collapse → Auto-Compact.

9-step pipeline per turn: Settings resolution → State init → Context assembly → 5 pre-model shapers → Model call → Tool dispatch → Permission gate → Tool execution → Stop condition

Two execution paths:

- StreamingToolExecutor -- begins executing tools as they stream in (latency optimization)

- Fallback runTools -- classifies tools as concurrent-safe or exclusive
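One way to act on a concurrent-safe versus exclusive classification is to batch adjacent safe calls and run each exclusive call alone. The sketch below assumes that batching strategy; the text does not describe how runTools schedules calls, and the tool names in `CONCURRENT_SAFE` are hypothetical.

```python
import asyncio

# Hypothetical classification; the real tool names and flags are not given in the text.
CONCURRENT_SAFE = {"read", "grep"}


def batch_calls(calls: list[dict]) -> list[list[dict]]:
    """Group adjacent concurrent-safe calls; give each exclusive call its own batch."""
    batches: list[list[dict]] = []
    for call in calls:
        safe = call["name"] in CONCURRENT_SAFE
        last_is_safe = bool(batches) and all(
            c["name"] in CONCURRENT_SAFE for c in batches[-1]
        )
        if safe and last_is_safe:
            batches[-1].append(call)
        else:
            batches.append([call])
    return batches


async def run_tools(calls, execute):
    """Run batches in order; calls inside one batch run concurrently."""
    results = []
    for batch in batch_calls(calls):
        results.extend(await asyncio.gather(*(execute(c) for c in batch)))
    return results
```

Batching only adjacent calls keeps results in the order the model requested them, so an edit is never reordered ahead of a read that preceded it.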

Recovery: Max output token escalation (3 retries), reactive compaction (once per turn), prompt-too-long handling, streaming fallback, fallback model
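The first recovery path, output-token escalation, can be illustrated as a bounded retry. The sketch keeps the three-retry limit from the text; the doubling factor and the `call(max_tokens)` interface returning `(text, truncated)` are assumptions made for illustration.

```python
def call_with_escalation(call, max_tokens, retries=3, factor=2):
    """Retry a truncated model call with a larger output cap.

    `call(max_tokens)` is a hypothetical function returning (text, truncated).
    Returns the last text, whether it is still truncated, and retries used.
    """
    text, truncated = call(max_tokens)
    attempts = 0
    while truncated and attempts < retries:
        max_tokens *= factor          # assumed escalation step
        attempts += 1
        text, truncated = call(max_tokens)
    return text, truncated, attempts
```

If the output is still truncated after the last retry, the caller has to fall through to another recovery path instead of looping forever.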

5 stop conditions: No tool use, max turns, context overflow, hook intervention, explicit abort

Safety and Permissions

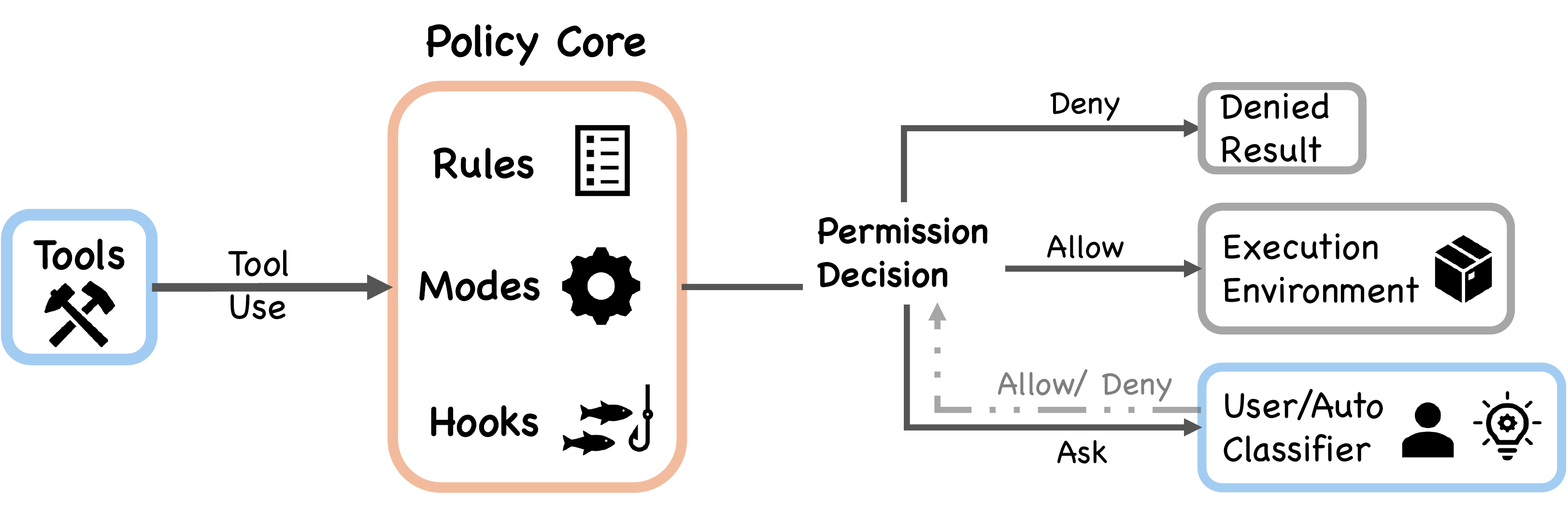

7 permission modes form a graduated trust spectrum: plan → default → acceptEdits → auto (ML classifier) → dontAsk → bypassPermissions (+ internal bubble).

Deny-first: A broad deny always overrides a narrow allow. 7 independent safety layers from tool pre-filtering through shell sandboxing to hook interception. Permissions are never restored on resume -- trust is re-established per session.
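The deny > ask > allow precedence can be sketched as a small rule evaluator. The glob-style patterns, the `decide` function, and the choice to escalate unmatched actions to "ask" are assumptions of this sketch, not Claude Code's actual rule syntax.

```python
from fnmatch import fnmatchcase

# Precedence from the text: deny > ask > allow.
PRECEDENCE = ("deny", "ask", "allow")


def decide(rules: list[tuple[str, str]], action: str) -> str:
    """Return the strictest behavior among all rules whose pattern matches.

    `rules` is a list of (behavior, glob_pattern) pairs. Specificity is
    ignored on purpose: a broad deny beats a narrow allow.
    """
    matched = {behavior for behavior, pattern in rules if fnmatchcase(action, pattern)}
    for behavior in PRECEDENCE:
        if behavior in matched:
            return behavior
    return "ask"  # assumption: an unrecognized action escalates to the human
```

For example, with rules `[("allow", "Bash(git status)"), ("deny", "Bash(*)")]`, the action `Bash(git status)` is denied even though a more specific allow rule matches it.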

More details: authorization pipeline, auto-mode classifier, CVEs

[!WARNING]

Shared failure modes: Defense-in-depth degrades when layers share constraints. Per-subcommand parsing causes event-loop starvation -- commands exceeding 50 subcommands bypass security analysis entirely to prevent the REPL from freezing.

Authorization pipeline: Pre-filtering (strip denied tools) → PreToolUse hooks → Deny-first rule evaluation → Permission handler (4 branches: coordinator, swarm worker, speculative classifier, interactive)

Auto-mode classifier (yoloClassifier.ts): Separate LLM call with internal/external permission templates. Two-stage: fast-filter + chain-of-thought.

Pre-trust execution window: 2 patched CVEs share this root cause -- hooks and MCP servers execute during initialization before the trust dialog appears, creating a structurally privileged attack window outside the deny-first pipeline.

Extensibility

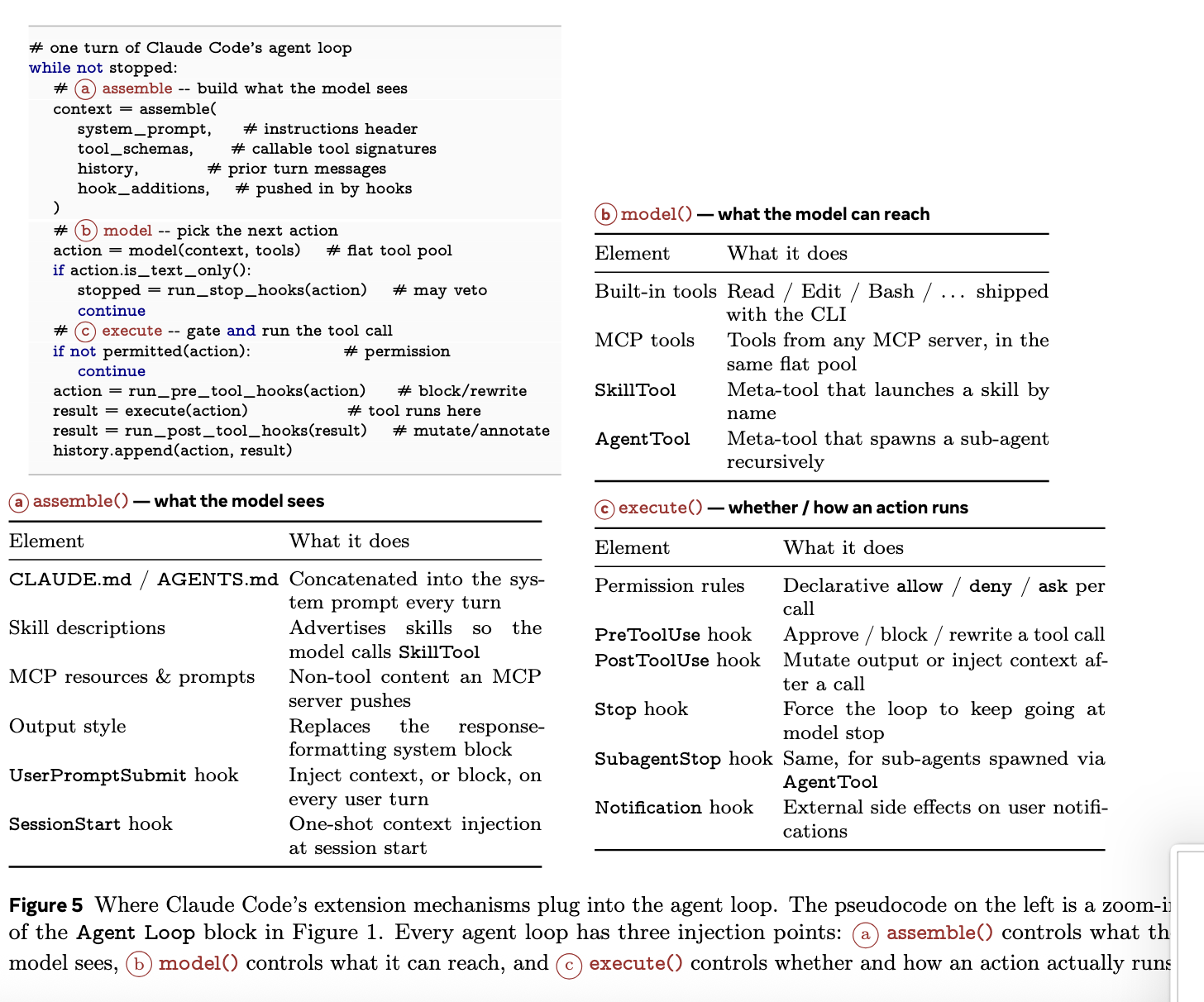

Four mechanisms at graduated context costs: Hooks (zero) → Skills (low) → Plugins (medium) → MCP (high). Three injection points in the agent loop: assemble() (what the model sees), model() (what it can reach), execute() (whether/how actions run).

Tool pool assembly (5-step): Base enumeration (up to 54 tools) → Mode filtering → Deny pre-filtering → MCP integration → Deduplication
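The five steps can be read as successive filters over a list. In this sketch the `name` and `modes` fields are hypothetical, and two behaviors are assumptions: denied names are also filtered out of the MCP tools, and on a name collision the earlier (built-in) tool wins.

```python
def assemble_tool_pool(base, mode, denied, mcp_tools):
    """Apply the five assembly steps to plain dicts with `name` and `modes` keys."""
    pool = list(base)                                          # 1. base enumeration
    pool = [t for t in pool if mode in t["modes"]]             # 2. mode filtering
    pool = [t for t in pool if t["name"] not in denied]        # 3. deny pre-filtering
    pool += [t for t in mcp_tools if t["name"] not in denied]  # 4. MCP integration
    seen, unique = set(), []
    for tool in pool:                                          # 5. deduplication
        if tool["name"] not in seen:
            seen.add(tool["name"])
            unique.append(tool)
    return unique
```

Filtering before the model call means a denied tool never appears in the model's tool list at all, so no prompt is needed to refuse it.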

27 hook events across 5 categories with 4 execution types (shell, LLM-evaluated, webhook, subagent verifier)

Plugin manifest accepts 10 component types: commands, agents, skills, hooks, MCP servers, LSP servers, output styles, channels, settings, user config

Skills: SKILL.md with 15+ YAML frontmatter fields. Key difference -- SkillTool injects into current context; AgentTool spawns isolated context.

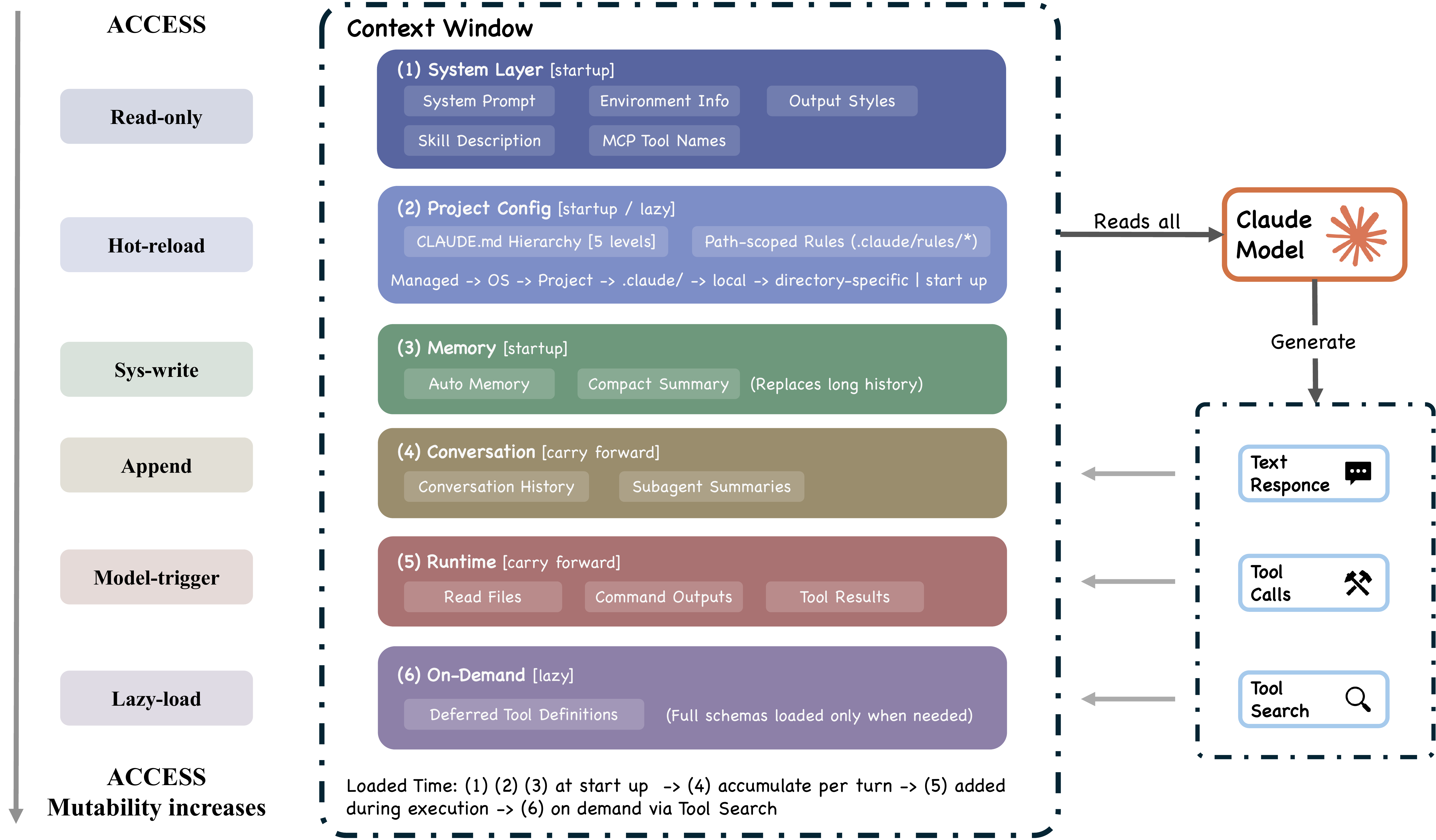

Context and Memory

9 ordered sources build the context window. CLAUDE.md instructions are delivered as user context (probabilistic compliance), not system prompt (deterministic). Memory is file-based (no vector DB) -- fully inspectable, editable, version-controllable.

4-level CLAUDE.md hierarchy: Managed (/etc/) → User (~/.claude/) → Project (CLAUDE.md, .claude/rules/) → Local (CLAUDE.local.md, gitignored)

5-layer compaction (graduated lazy-degradation): Budget reduction → Snip → Microcompact → Context Collapse (read-time projection, non-destructive) → Auto-Compact (full model summary, last resort)
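A graduated pipeline of this kind can be sketched as an ordered list of stages that stops as soon as the context fits. Only two toy stages are shown, the word-count token estimate is crude, and the early exit is an assumption drawn from the "lazy-degradation" and "last resort" wording; none of this mirrors the real shapers' internals.

```python
Message = dict  # hypothetical shape: {"role": str, "content": str}


def size(messages: list[Message]) -> int:
    """Crude token estimate: one token per whitespace-separated word."""
    return sum(len(m["content"].split()) for m in messages)


def snip(messages: list[Message], budget: int) -> list[Message]:
    """Cheap stage: truncate oversized tool outputs to 20 words."""
    out = []
    for m in messages:
        words = m["content"].split()
        if m["role"] == "tool" and len(words) > 20:
            m = {**m, "content": " ".join(words[:20]) + " [snipped]"}
        out.append(m)
    return out


def auto_compact(messages: list[Message], budget: int) -> list[Message]:
    """Last resort: replace all but the latest message with a summary stub.
    A real system would ask the model to write the summary."""
    stub = {"role": "system", "content": f"[summary of {len(messages) - 1} messages]"}
    return [stub, messages[-1]]


def shape(messages, budget, stages):
    """Run stages cheapest-first; skip the rest once the context fits."""
    applied = []
    for name, stage in stages:
        if size(messages) <= budget:
            break
        messages = stage(messages, budget)
        applied.append(name)
    return messages, applied
```

With a generous budget only `snip` runs; the expensive summary stage fires only when the cheap stage cannot bring the context under budget.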

Memory retrieval: LLM-based scan of memory-file headers, selects up to 5 relevant files. No embeddings, no vector similarity.
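The header-scan approach can be sketched with a pluggable ranker. Here `rank` stands in for the LLM call, and `keyword_rank` is a toy substitute that is plainly not an LLM; the file contents are passed in as a dict to keep the example self-contained. Only the cap of 5 comes from the text.

```python
from typing import Callable

MAX_FILES = 5  # cap stated in the text


def pick_memory_files(
    files: dict[str, str],
    query: str,
    rank: Callable[[str, dict[str, str]], list[str]],
) -> list[str]:
    """Show only each file's header (first line) to the ranker, then cap the result.
    `rank` returns file names, most relevant first."""
    headers = {
        name: (text.splitlines()[0] if text else "") for name, text in files.items()
    }
    chosen = [name for name in rank(query, headers) if name in headers]
    return chosen[:MAX_FILES]


def keyword_rank(query: str, headers: dict[str, str]) -> list[str]:
    """Toy ranker (NOT an LLM): keep headers that share a word with the query."""
    words = set(query.lower().split())
    hits = [(len(words & set(h.lower().split())), n) for n, h in headers.items()]
    return [n for score, n in sorted(hits, key=lambda x: (-x[0], x[1])) if score > 0]
```

Because the ranker sees only headers, the cost of selection grows with the number of memory files rather than with their total size.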

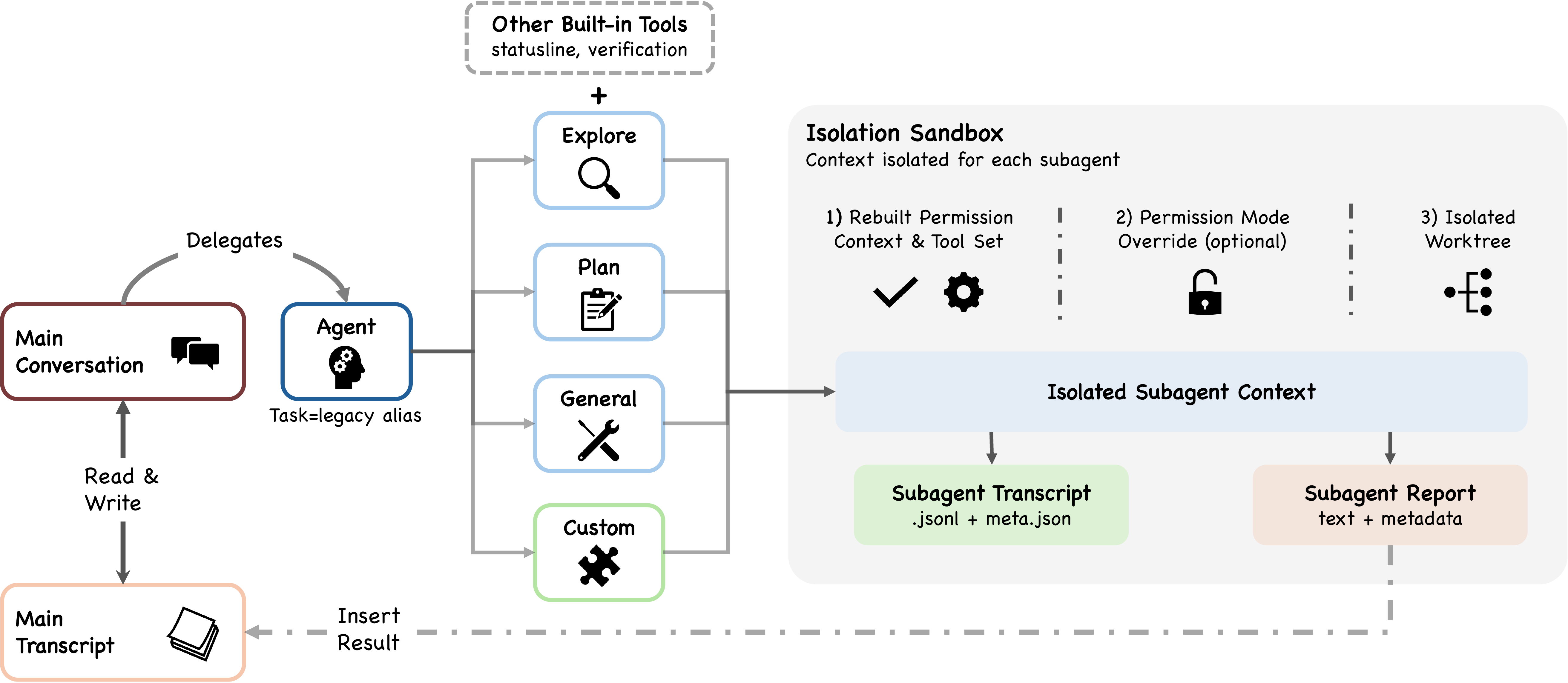

Subagent Delegation

6 built-in types (Explore, Plan, General-purpose, Guide, Verification, Statusline) + custom agents via .claude/agents/*.md. Sidechain transcripts: only summaries return to parent (parent's context is protected from subagent verbosity). Three isolation modes: worktree, remote, in-process. Coordination via POSIX flock().

SkillTool vs AgentTool: SkillTool injects into current context (cheap). AgentTool spawns isolated context (expensive, but prevents context explosion).

Permission override: Subagent permissionMode applies UNLESS parent is in bypassPermissions/acceptEdits/auto (explicit user decisions always take precedence).
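The override rule reduces to a short resolution function. The mode names come from the text; the function name and the fallback for a subagent with no `permissionMode` set are assumptions.

```python
from typing import Optional

# Parent modes that the text says take precedence over a subagent's own setting.
PARENT_OVERRIDES = {"bypassPermissions", "acceptEdits", "auto"}


def effective_mode(parent_mode: str, subagent_mode: Optional[str]) -> str:
    """Resolve the permission mode a subagent runs under."""
    if parent_mode in PARENT_OVERRIDES:
        return parent_mode  # explicit user decision wins
    # Assumption: a subagent with no permissionMode inherits the parent's mode.
    return subagent_mode or parent_mode
```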

Custom agents: YAML frontmatter supports tools, disallowedTools, model, effort, permissionMode, mcpServers, hooks, maxTurns, skills, memory scope, background flag, isolation mode.

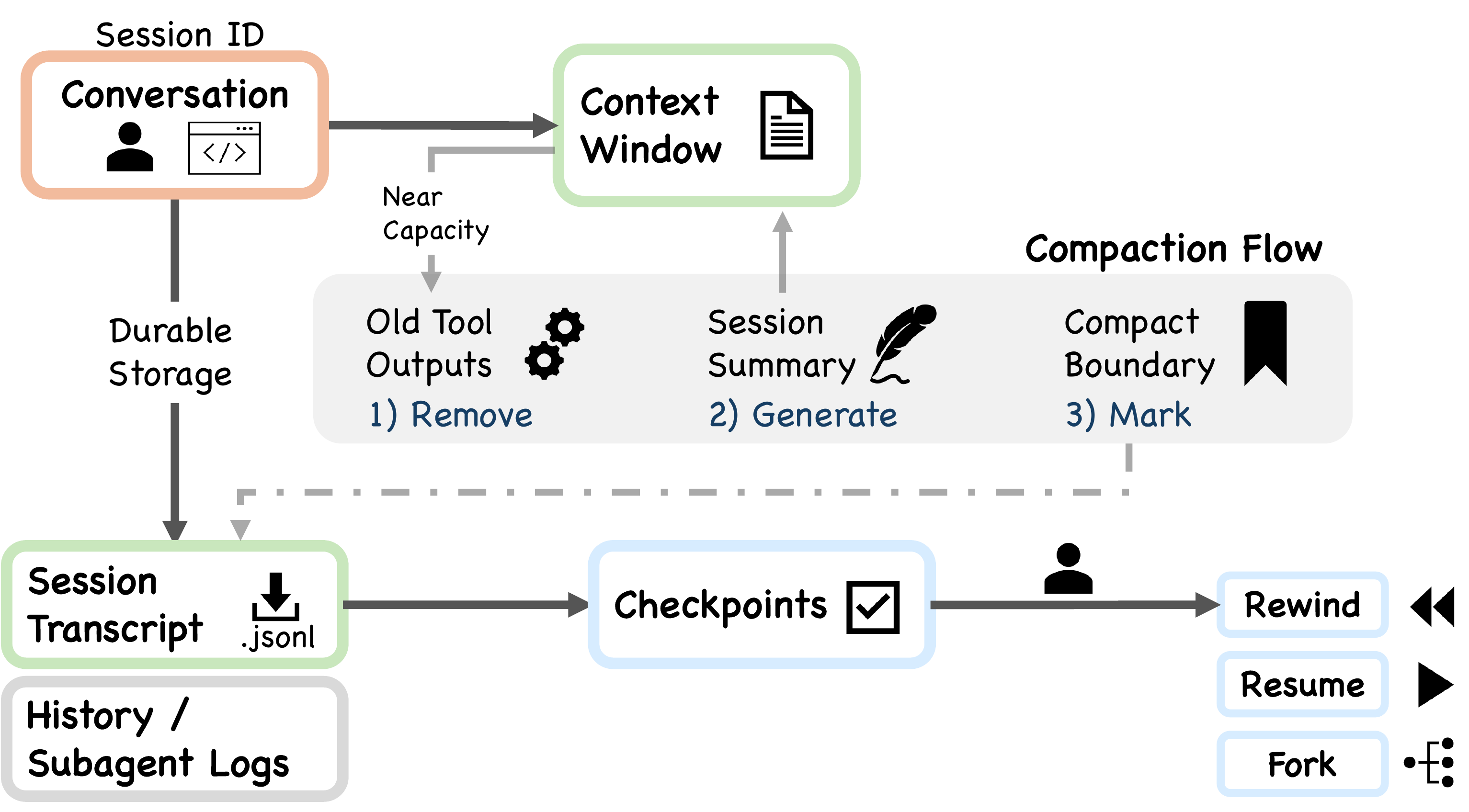

Session Persistence

Three channels: append-only JSONL transcripts, global prompt history, subagent sidechains. Permissions never restored on resume -- trust is re-established per session. Design favors auditability over query power.

Chain patching: Compact boundaries record headUuid/anchorUuid/tailUuid. The session loader patches the message chain at read time. Nothing is destructively edited on disk.
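Read-time patching over an append-only log can be sketched as follows. The record layout is hypothetical: the sketch assumes `headUuid` and `tailUuid` name the first and last message of the compacted span, and it does not model `anchorUuid`. An in-memory `io.StringIO` stands in for the transcript file.

```python
import io
import json


def append(log: io.StringIO, record: dict) -> None:
    """Append one JSON record per line; earlier lines are never rewritten."""
    log.seek(0, io.SEEK_END)
    log.write(json.dumps(record) + "\n")


def load(log: io.StringIO) -> list[dict]:
    """Rebuild the visible message chain, applying compact boundaries at read time."""
    records = [json.loads(line) for line in log.getvalue().splitlines() if line]
    messages = [r for r in records if r["type"] == "message"]
    for boundary in (r for r in records if r["type"] == "compact_boundary"):
        uuids = [m["uuid"] for m in messages]
        head = uuids.index(boundary["headUuid"])
        tail = uuids.index(boundary["tailUuid"])
        summary = {"type": "message", "uuid": boundary["uuid"], "text": boundary["summary"]}
        messages[head:tail + 1] = [summary]  # patch in memory only
    return messages
```

The compacted messages stay in the log, so the full history remains available for audit even though the loader presents the shortened chain.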

Checkpoints: File-history checkpoints for --rewind-files, stored at ~/.claude/file-history/<sessionId>/.

Build Your Own AI Agent: A Design Guide

Not a coding tutorial. A guide to the design decisions you must make, derived from architectural analysis.

Every production agent must navigate these decisions:

| Decision | The Question | Key Insight |

|---|---|---|

| Reasoning placement | How much logic in the model vs. harness? | As models converge in capability, the harness becomes the differentiator. |

| Safety posture | How do you prevent harmful actions? | Defense-in-depth fails when layers share failure modes. |

| Context management | What does the model see? | Design for context scarcity from day one. Graduated > single-pass. |

| Extensibility | How do extensions plug in? | Not all extensions need to consume context tokens. |

| Subagent architecture | Shared or isolated context? | Agent teams in plan mode cost ~7× tokens. Subagent summary-only returns prevent context blow-up. |

| Session persistence | What carries over? | Never restore permissions on resume. Auditability > query power. |

Read the full guide: docs/build-your-own-agent.md

Community Projects & Research

A curated map of the repos, reimplementations, and academic papers surrounding Claude Code's architecture.

Official Anthropic Resources

Primary sources referenced throughout the paper — Anthropic's own engineering and research publications, plus product documentation.

Research & Engineering Blogs

| Article | Topic |

|---|---|

| Building Effective Agents | Foundational: simple composable patterns over heavy frameworks. |

| Effective Context Engineering for AI Agents | Context curation and token-budget management. |

| Harness Design for Long-Running Application Development | Harness architecture for autonomous full-stack dev; multi-agent patterns. |

| Claude Code Auto Mode: A Safer Way to Skip Permissions | ML-classifier approval automation; source of the 93% approval-rate finding. |

| Beyond Permission Prompts: Making Claude Code More Secure and Autonomous | Sandbox-based security; 84% reduction in permission prompts. |

| Measuring AI Agent Autonomy in Practice | Longitudinal usage: auto-approve rates grow from ~20% to 40%+ with experience. |

| Our Framework for Developing Safe and Trustworthy Agents | Governance framework for responsible agent deployment. |

| Scaling Managed Agents: Decoupling the Brain from the Hands | Hosted-service architecture separating reasoning, execution, and session. |

Product Documentation

| Document | Topic |

|---|---|

| How Claude Code Works | Official overview of the agent loop, tools, and terminal automation. |

| Permissions | Tiered permission system, modes, granular rules. |

| Hooks | 27-event hook reference, execution models, lifecycle events. |

| Memory | CLAUDE.md hierarchy, auto memory, learned preferences. |

| Sub-agents | Specialized isolated assistants, custom prompts, tool access. |

Architecture Analysis

Deep dives into Claude Code's internal design.

| Repository | Description |

|---|---|

| ComeOnOliver/claude-code-analysis | Comprehensive reverse-engineering: source tree structure, module boundaries, tool inventories, and architectural patterns. |

| alejandrobalderas/claude-code-from-source | 18-chapter technical book (~400 pages). All original pseudocode, no proprietary source. |

| liuup/claude-code-analysis | Chinese-language deep-dive — startup flow, query main loop, MCP integration, multi-agent architecture. |

| sanbuphy/claude-code-source-code | Quadrilingual analysis (EN/JA/KO/ZH) — multi-domain reports covering telemetry, codenames, KAIROS, unreleased tools. |

| cablate/claude-code-research | Independent research on internals, Agent SDK, and related tooling. |

| Yuyz0112/claude-code-reverse | Visualize Claude Code's LLM interactions — log parser and visual tool to trace prompts, tool calls, and compaction. |

Open-Source Reimplementations

Clean-room rewrites and buildable research forks.

| Repository | Description |

|---|---|

| chauncygu/collection-claude-code-source-code | Meta-collection of community Claude Code source artifacts -- includes claw-code (Rust port), nano-claude-code (Python), and the extracted original source archive. |

| 777genius/claude-code-working | Working reverse-engineered CLI. Runnable with Bun, 450+ chunk files, 31 feature flags polyfilled. |

| T-Lab-CUHKSZ/claude-code | CUHK-Shenzhen buildable research fork — reconstructed build system from raw TypeScript snapshot. |

| ruvnet/open-claude-code | Nightly auto-decompile rebuild — 903+ tests, 25 tools, 4 MCP transports, 6 permission modes. |

| Enderfga/openclaw-claude-code | OpenClaw plugin — unified ISession interface for Claude/Codex/Gemini/Cursor. Multi-agent council. |

| memaxo/claude_code_re | Reverse engineering from minified bundles — deobfuscation of the publicly distributed cli.js file. |

Guides & Learning

Tutorials and hands-on learning paths.

| Repository | Description |

|---|---|

| shareAI-lab/learn-claude-code | "Bash is all you need" — 19-chapter 0-to-1 course with runnable Python agents, web platform. ZH/EN/JA. |

| FlorianBruniaux/claude-code-ultimate-guide | Beginner-to-power-user guide with production-ready templates, agentic workflow guides, and cheatsheets. |

| affaan-m/everything-claude-code | Agent harness optimization — skills, instincts, memory, security, and research-first development. 50K+ stars. |

Blog Posts & Technical Articles

| Article | What Makes It Valuable |

|---|---|

| Marco Kotrotsos — "Claude Code Internals" (15-part series) | Most systematic pre-leak analysis. Architecture, agent loop, permissions, sub-agents, MCP, telemetry. |

| Alex Kim — "The Claude Code Source Leak" | Anti-distillation mechanisms, frustration detection, Undercover Mode, ~250K wasted API calls/day. |

| Haseeb Qureshi — Cross-agent architecture comparison | Claude Code vs Codex vs Cline vs OpenCode — architecture-level comparison. |

| George Sung — "Tracing Claude Code's LLM Traffic" | Complete system prompts and full API logs. Discovered dual-model usage (Opus + Haiku). |

| Agiflow — "Reverse Engineering Prompt Augmentation" | 5 prompt augmentation mechanisms backed by actual network traces. |

| Engineer's Codex — "Diving into the Source Code Leak" | Modular system prompt, ~40 tools, large query/tool subsystem, anti-distillation. |

| MindStudio — "Three-Layer Memory Architecture" | In-context memory, MEMORY.md pointer index, CLAUDE.md static config. Best single resource on memory. |

| WaveSpeed — "Claude Code Architecture: Leaked Source Deep Dive" | 512K-line TS source deep dive; context compression and anti-distillation. |

| Zain Hasan — "Inside Claude Code: An Architecture Deep Dive" | Layered architecture, 5 entry modes, multi-agent walkthrough. |

Related Academic Papers

| Paper | Venue | Relevance |

|---|---|---|

| Decoding the Configuration of AI Coding Agents | arXiv | Empirical study of 328 Claude Code configuration files — SE concerns and co-occurrence patterns. |

| On the Use of Agentic Coding Manifests | arXiv | Analyzed 253 CLAUDE.md files from 242 repos — structural patterns in operational commands. |

| Context Engineering for Multi-Agent Code Assistants | arXiv | Multi-agent workflow combining multiple LLMs for code generation. |

| OpenHands: An Open Platform for AI Software Developers | ICLR 2025 | Primary academic reference for open-source AI coding agents. |

| SWE-Agent: Agent-Computer Interfaces | NeurIPS 2024 | Docker-based coding agent with custom agent-computer interface. |

How This Paper Differs

While the projects above focus on reverse engineering or practical reimplementation, this paper provides a systematic values → principles → implementation analytical framework. It traces five human values through thirteen design principles to specific source-level choices, and uses a comparison with OpenClaw to show that cross-cutting integrative mechanisms, not modular features, are the true locus of engineering complexity.

See the full curated list with more resources: docs/related-resources.md

Other Notable AI Agent Projects

Recently launched (2025–2026) open-source AI agent projects outside the Claude Code ecosystem.

| Repository | Launch | Focus |

|---|---|---|

| openclaw/openclaw | Jan 2026 | Local-first personal AI assistant across messaging platforms. |

| sst/opencode | Jun 2025 | Provider-agnostic terminal coding agent. |

| NousResearch/hermes-agent | Feb 2026 | Self-improving personal agent with cross-session memory. |

| 666ghj/MiroFish | Mar 2026 | Multi-agent swarm-intelligence simulation engine. |

| MemPalace/mempalace | 2026 | Local-first memory system for AI agents. |

| multica-ai/multica | 2026 | Managed-agents platform for task assignment and skill compounding. |

Citation

@article{diveclaudecode2026,

title={Dive into Claude Code: The Design Space of Today's and Future AI Agent Systems},

author={Jiacheng Liu and Xiaohan Zhao and Xinyi Shang and Zhiqiang Shen},

year={2026},

}

License

This work is licensed under CC BY-NC-SA 4.0.
