semantic-router

Health: Passed

- License — License: Apache-2.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 3562 GitHub stars

Code: Failed

- eval() — Dynamic code execution via eval() in .github/workflows/anti-spam-filter.yml

- process.env — Environment variable access in .github/workflows/anti-spam-filter.yml

- eval() — Dynamic code execution via eval() in .github/workflows/cleanup-existing-spam.yml

- process.env — Environment variable access in .github/workflows/cleanup-existing-spam.yml

- rm -rf — Recursive force deletion command in .github/workflows/docker-publish.yml

- rm -rf — Recursive force deletion command in .github/workflows/docker-release.yml

Permissions: Passed

- Permissions — No dangerous permissions requested


System Level Intelligent Router for Mixture-of-Models at Cloud, Data Center and Edge

Latest News 🔥

- [2026/03/10] v0.2 Released: vLLM Semantic Router v0.2 Athena Release

- [2026/02/27] White Paper Released: Signal Driven Decision Routing for Mixture-of-Modality Models

- [2026/01/05] Iris v0.1 is Released: vLLM Semantic Router v0.1 Iris: The First Major Release

- [2025/12/16] Collaboration: AMD × vLLM Semantic Router: Building the System Intelligence Together

- [2025/12/15] New Blog: Token-Level Truth: Real-Time Hallucination Detection for Production LLMs

- [2025/11/19] New Blog: Signal-Decision Driven Architecture: Reshaping Semantic Routing at Scale

- [2025/11/03] Our paper Category-Aware Semantic Caching for Heterogeneous LLM Workloads published

- [2025/10/27] New Blog: Scaling Semantic Routing with Extensible LoRA

- [2025/10/12] Our paper When to Reason: Semantic Router for vLLM accepted by NeurIPS 2025 MLForSys.

- [2025/10/08] Collaboration: vLLM Semantic Router with vLLM Production Stack Team.

- [2025/09/01] Released the project: vLLM Semantic Router: Next Phase in LLM inference.

Quick Start

Installation

$ curl -fsSL https://vllm-semantic-router.com/install.sh | bash

For detailed setup options, platform notes, and troubleshooting, see the Docs.

[!IMPORTANT]

Online playground default credentials:

- username: [email protected]

- password: vllm-sr

Goals

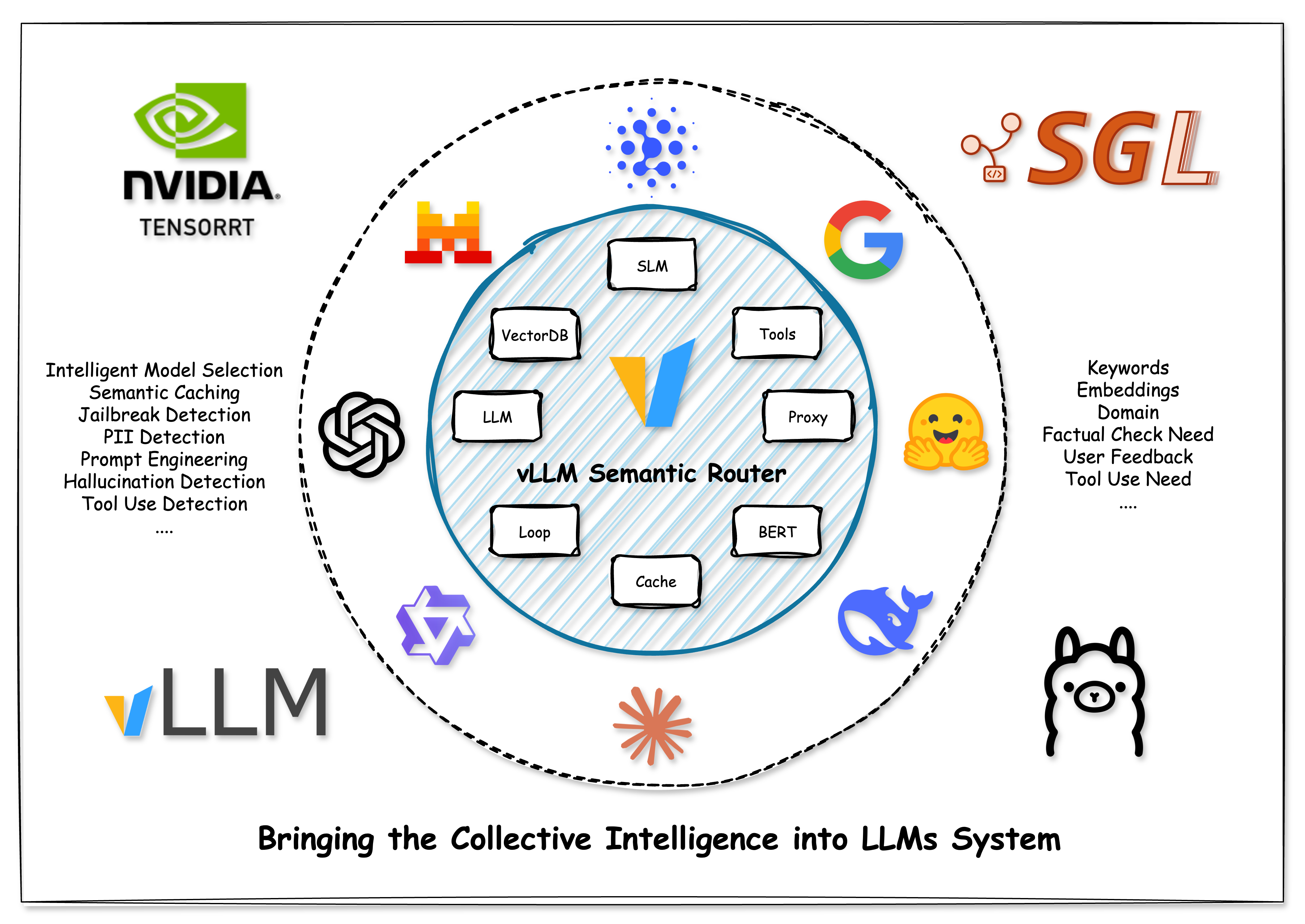

We are building system-level intelligence for Mixture-of-Models (MoM), bringing collective intelligence into LLM systems by answering the following questions:

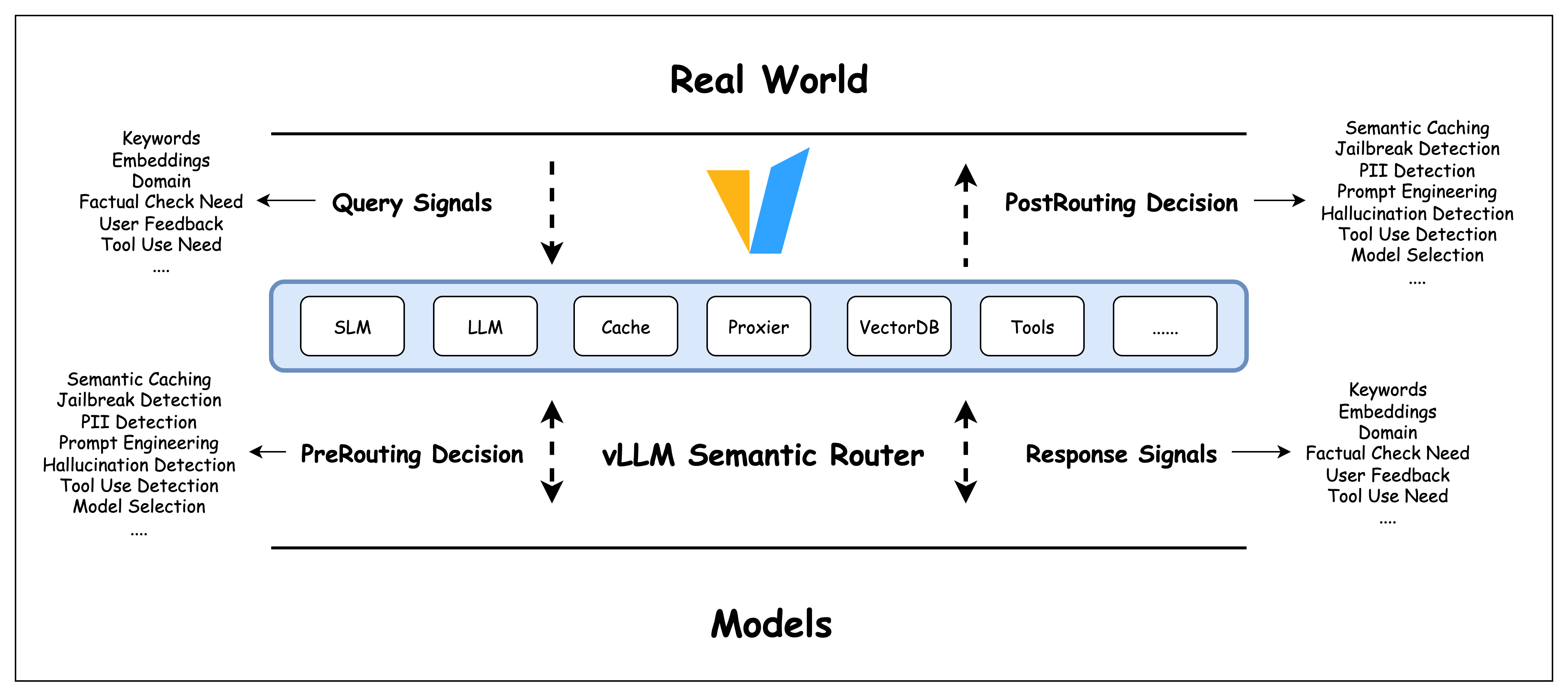

- How to capture the missing signals in request, response and context?

- How to combine the signals to make better decisions?

- How to collaborate more efficiently between different models?

- How to secure the real world and LLM systems from jailbreaks, PII leaks, and hallucinations?

- How to collect the valuable signals and build a self-learning system?
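To make the "combine the signals to make better decisions" question concrete, here is a minimal Python sketch. It is not the project's API: `Signals`, `Decision`, `decide`, the model names, and the threshold are all hypothetical, chosen only to illustrate the idea of checking safety signals first and then routing by category.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Signals:
    """Hypothetical signals extracted from a request (illustrative only)."""
    category: str            # e.g. "math", "code", "chat"
    jailbreak_score: float   # 0.0 (benign) .. 1.0 (likely jailbreak)
    contains_pii: bool


@dataclass(frozen=True)
class Decision:
    action: str              # "route" or "block"
    model: Optional[str]     # target model when action == "route"
    reason: str


# Hypothetical category -> model table; a real deployment would configure this.
MODEL_BY_CATEGORY = {
    "math": "reasoning-model",
    "code": "coding-model",
}
DEFAULT_MODEL = "general-model"


def decide(signals: Signals, jailbreak_threshold: float = 0.8) -> Decision:
    """Combine signals into one decision: safety checks first, then routing."""
    if signals.jailbreak_score >= jailbreak_threshold:
        return Decision("block", None, "jailbreak suspected")
    if signals.contains_pii:
        return Decision("block", None, "PII detected")
    model = MODEL_BY_CATEGORY.get(signals.category, DEFAULT_MODEL)
    return Decision("route", model, f"category={signals.category}")
```

For example, `decide(Signals("math", 0.1, False))` routes to the hypothetical `reasoning-model`, while a request with a high jailbreak score is blocked before any model is chosen.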

Where it lives

It lives between the real world and models:

Documentation 📖

For comprehensive documentation including detailed setup instructions, architecture guides, and API references, visit:

Complete Documentation at Read the Docs

The documentation includes:

- Installation Guide - Complete setup instructions

- System Architecture - Technical deep dive

- Model Training - How classification models work

- API Reference - Complete API documentation

Community 👋

For questions, feedback, or to contribute, please join the #semantic-router channel in the vLLM Slack.

Community Meetings 📅

We host bi-weekly community meetings to sync up with contributors across different time zones:

- First Tuesday of the month: 9:00-10:00 AM EST (accommodates US EST, EU, and Asia Pacific contributors)

- Third Tuesday of the month: 1:00-2:00 PM EST (accommodates US EST and California contributors)

- Meeting Recordings: YouTube

Join us to discuss the latest developments, share ideas, and collaborate on the project!
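If you are in another time zone, the following Python sketch converts the two meeting slots to UTC. It assumes the times above are literal EST (a fixed UTC-5 offset, with no daylight-saving adjustment); the helper names `nth_tuesday` and `meeting_starts_utc` are illustrative and not part of the project.

```python
import calendar
from datetime import date, datetime, timedelta, timezone

# Assumption: meeting times are literal EST, i.e. a fixed UTC-5 offset.
EST = timezone(timedelta(hours=-5), "EST")


def nth_tuesday(year: int, month: int, n: int) -> date:
    """Return the n-th Tuesday (1-based) of the given month."""
    first_weekday = date(year, month, 1).weekday()  # Monday == 0
    offset = (calendar.TUESDAY - first_weekday) % 7
    return date(year, month, 1 + offset + 7 * (n - 1))


def meeting_starts_utc(year: int, month: int) -> list:
    """Start times (UTC) of the first- and third-Tuesday meetings."""
    first = nth_tuesday(year, month, 1)
    third = nth_tuesday(year, month, 3)
    starts = [
        datetime(first.year, first.month, first.day, 9, 0, tzinfo=EST),
        datetime(third.year, third.month, third.day, 13, 0, tzinfo=EST),
    ]
    return [s.astimezone(timezone.utc) for s in starts]
```

Under that assumption, the 9:00 AM slot starts at 14:00 UTC and the 1:00 PM slot at 18:00 UTC.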

Citation

If you find Semantic Router helpful in your research or projects, please consider citing it:

@misc{semanticrouter2025,
  title={vLLM Semantic Router},
  author={vLLM Semantic Router Team},
  year={2025},
  howpublished={\url{https://github.com/vllm-project/semantic-router}},
}

Star History 🔥

We opened the project on Aug 31, 2025. We love open source and collaboration ❤️

Sponsors 👋

We are grateful to our sponsors who support us:

AMD provides us with GPU resources and ROCm™ Software for training and researching frontier router models, enhancing e2e testing, and building the online model playground.
