PokeClaw

Health Gecti

- License — License: Apache-2.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 37 GitHub stars

Code Gecti

- Code scan — Scanned 4 files during light audit, no dangerous patterns found

Permissions Gecti

- Permissions — No dangerous permissions requested

Bu listing icin henuz AI raporu yok.

PokeClaw (PocketClaw) — first on-device AI that controls your Android phone. Gemma 4, no cloud, no API key. Poke is short for Pocket.

PokeClaw (PocketClaw) - A Pocket Versoin Inspired By OpenClaw

Gemma 4 launched days ago.

Most people ship demos. I wanted to know if it could actually drive a phone.

So I pulled two all-nighters and built it.

As far as I know, this is the first working app built on Gemma 4 that can autonomously control an Android phone.

The entire pipeline is a closed loop inside your device. No Wifi needed,No monthly billing for the API keys.

Everyone else: Phone → Internet → Cloud API → Internet → Phone

💳Credit card needed, API key required. Monthly bill attached.

PokeClaw: Phone → LLM → Phone

That's it. No internet. No API key. No bill.

AI controls your phone. And it never leaves your phone.

https://github.com/user-attachments/assets/c713e227-7581-4475-acd4-0480128c8ec8

☝️ Auto-reply demo: PokeClaw monitors messages from Mom, reads what she said, and replies based on context using the on-device LLM. The video is a bit blurry because I compressed it too hard. I've been up for two nights straight building this thing, I'll upload a clearer version after I finally get some sleep. Bear with me lol

https://github.com/user-attachments/assets/18d49148-c744-46a5-98a2-0f8320f00d19

Why is the "hi" demo slow? Recorded on a budget Android phone (I'm literally too broke to buy a proper one, got this just to demo the app lol) with CPU-only inference, no GPU, no NPU. Running Gemma 4 E2B on pure CPU takes about 45 seconds to warm up — started at several minutes, we optimized the engine initialization and session handoff to squeeze it down this far. If your phone actually has a decent chip it's way faster:

- Google Tensor G3/G4 (Pixel 8, Pixel 9)

- Snapdragon 8 Gen 2/3 (Galaxy S24, OnePlus 12)

- Dimensity 9200/9300 (recent MediaTek flagships)

- Snapdragon 7+ Gen 2+ (mid-range with GPU)

On these devices, warmup drops to seconds. Same model, better hardware.

That said, the fact that a 2.3B model can autonomously control a phone running purely on CPU is already pretty impressive. GPU just makes it faster.

The Story

I'm a solo developer. When Gemma 4 dropped on April 2nd with native tool calling on LiteRT-LM, I pulled two all-nighters and built this from scratch. This is the first local LLM that can run on a phone and is capable enough to handle genuinely complex tasks — having conversations, auto-replying to your mom based on context, navigating apps autonomously. That's exciting to me.

It's not perfect. Local LLMs are not as smart as cloud models, and there are plenty of rough edges. Hardware is what it is — we can't make your CPU faster. But on the software side, we're actively improving the architecture, the tool system, and the overall design. Cloud LLM support is coming as an optional feature for people who want more power.

And it's completely free. No API keys that bill you every month. No subscription. No usage limits. The model runs on your hardware and costs you nothing.

We're living through a historic shift. Local LLMs are now smart enough to actually do useful work on a phone. That wasn't true 6 months ago. On-device models are getting smarter fast, and we're hoping it won't be long before they close the gap with cloud models entirely. When that happens, PokeClaw is ready.

This project has a lot of issues. That's expected. Please open them. Every bug report makes this better.

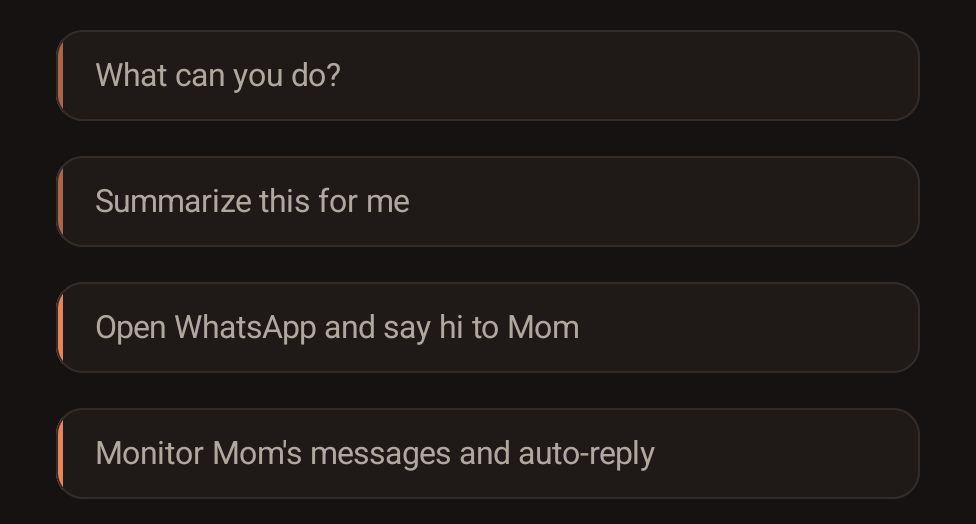

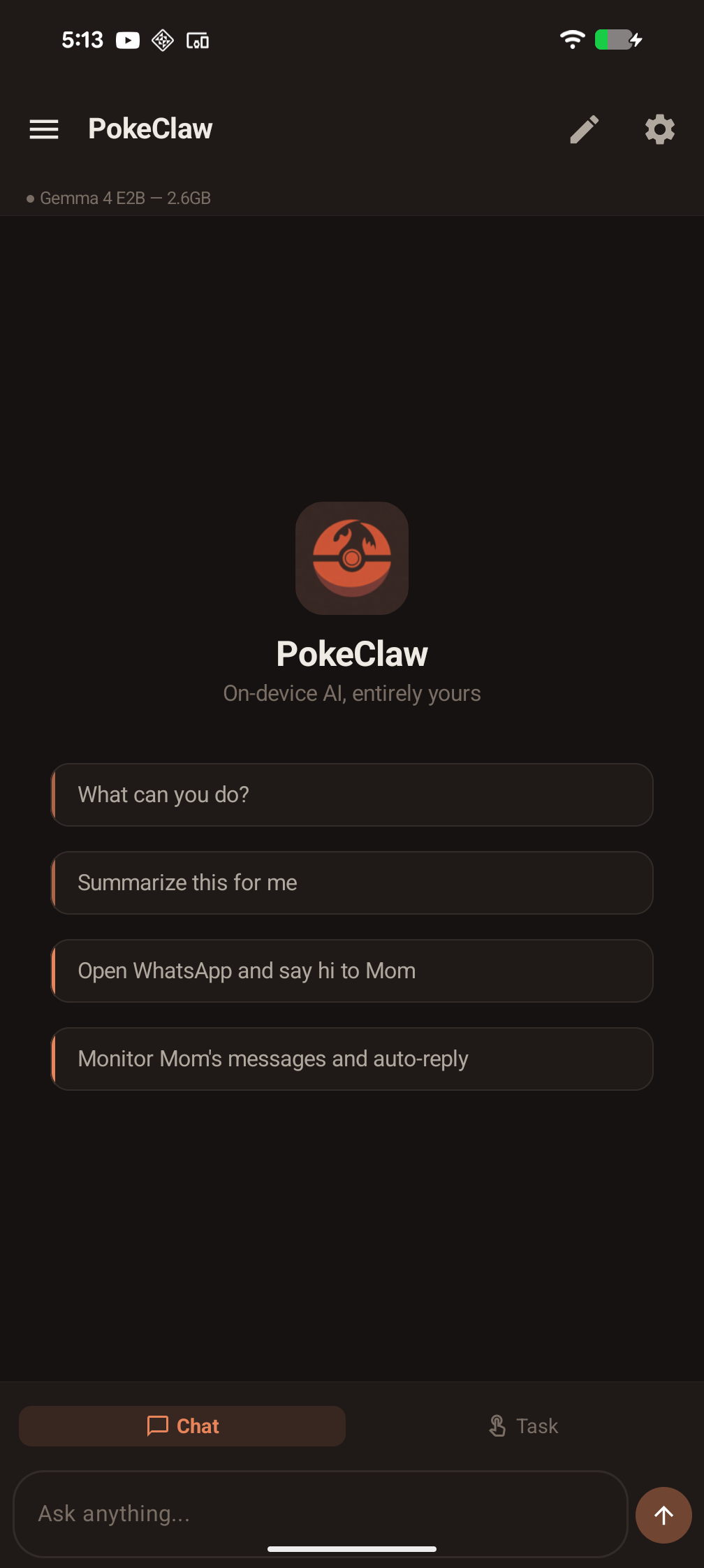

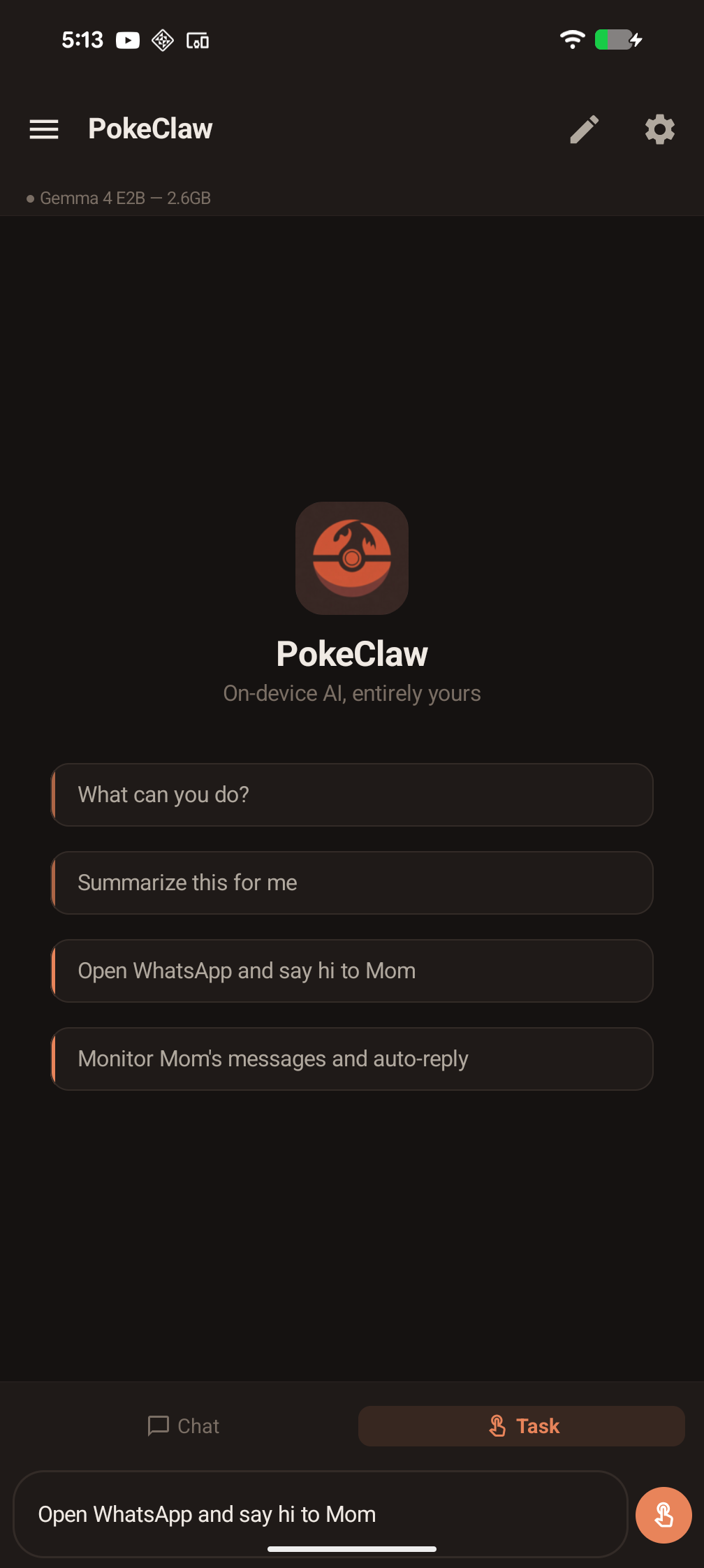

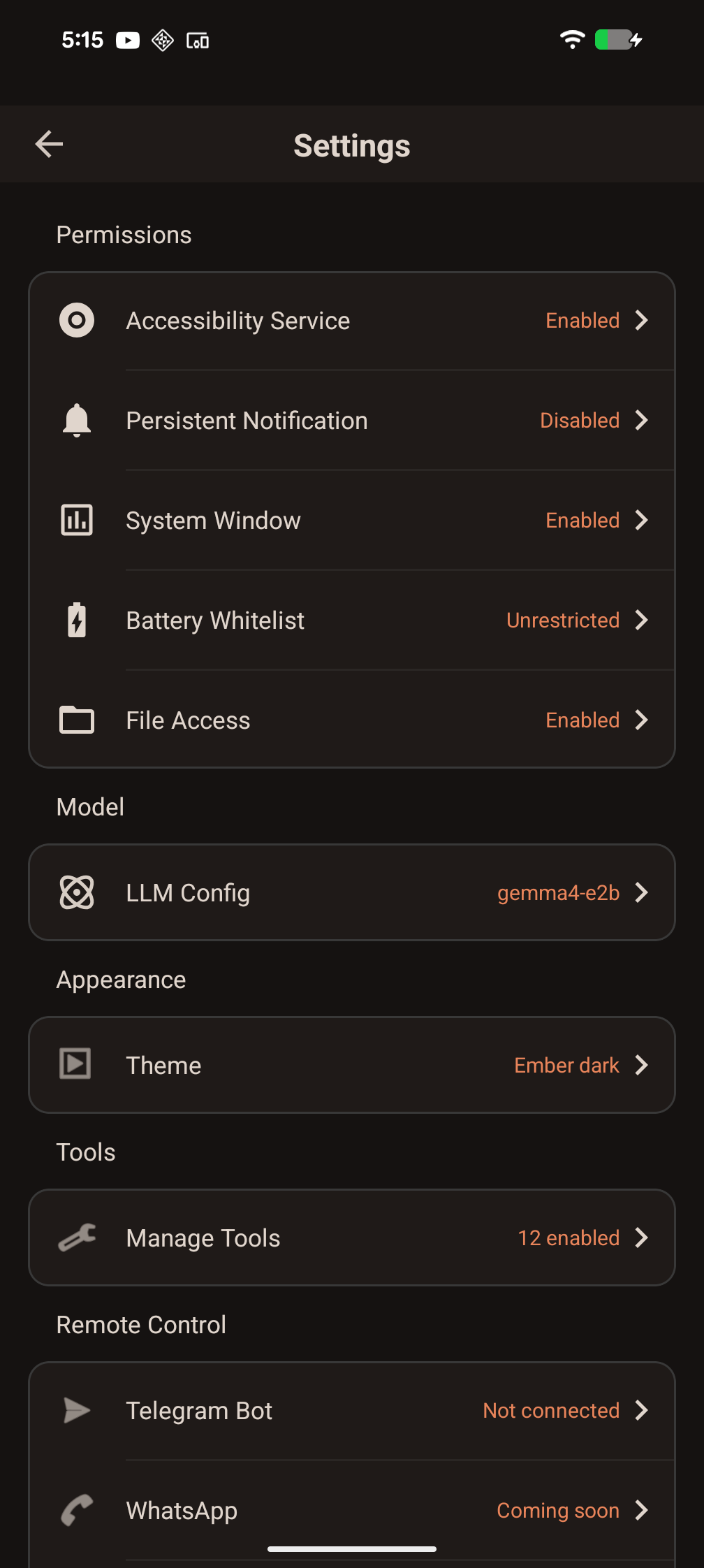

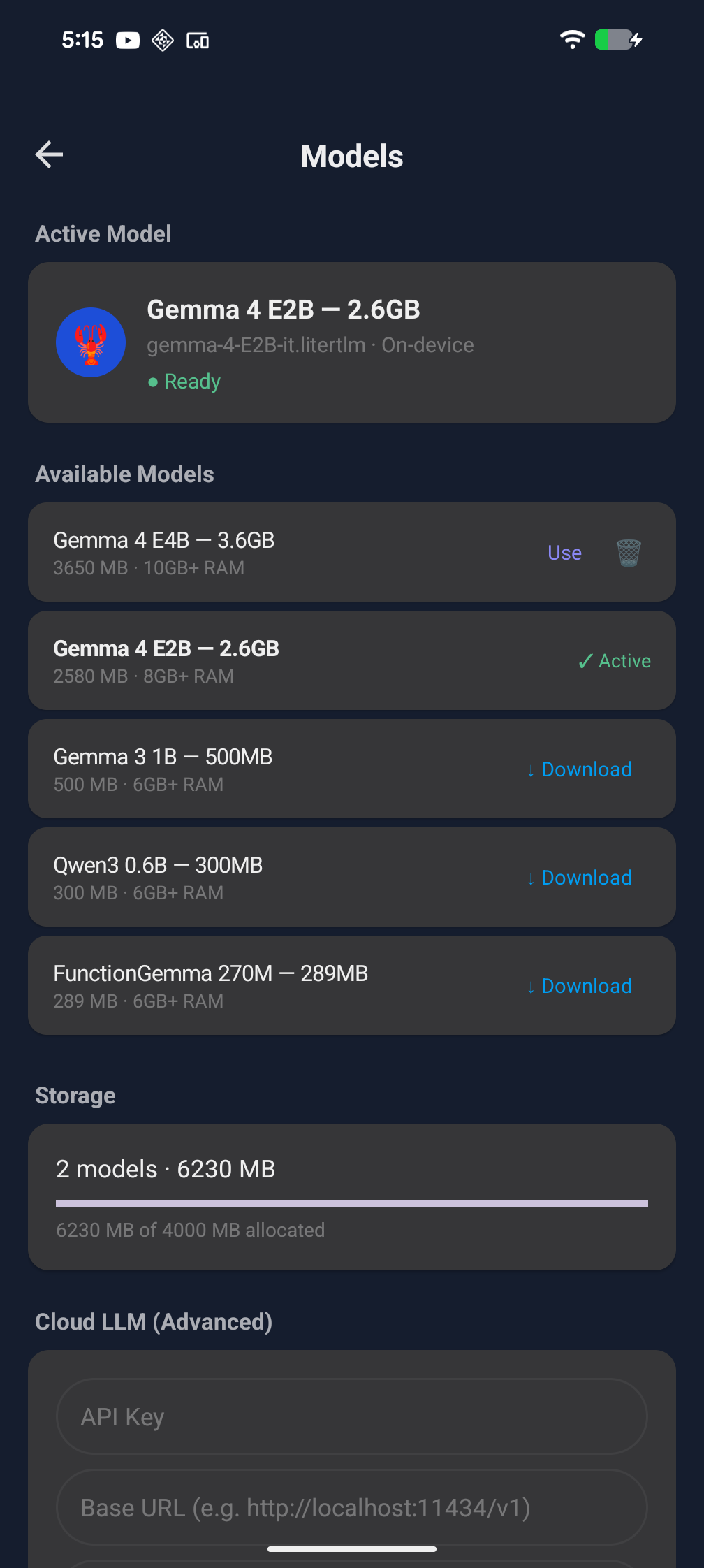

Screenshots

What it does

The model picks the right tool, fills in the parameters, and executes. You don't configure anything per-app. It just reads the screen and acts.

How it works

PokeClaw gives a small on-device LLM a set of tools (tap, swipe, type, open app, send message, enable auto-reply, etc.) and lets it decide what to do. The LLM sees a text representation of the current screen, picks an action, sees the result, picks the next action, until the task is done.

Everything runs locally via LiteRT-LM with native tool calling. The model never phones home.

Tools

The LLM has access to these tools and picks them autonomously:

| Tool | What it does |

|---|---|

tap / swipe / long_press |

Touch the screen |

input_text |

Type into any text field |

open_app |

Launch any installed app |

send_message |

Full messaging flow: open app, find contact, type, send |

auto_reply |

Monitor a contact and reply automatically using LLM |

get_screen_info |

Read current UI tree |

take_screenshot |

Capture screen |

finish |

Signal task completion |

Download

Requirements

| Minimum | Recommended | |

|---|---|---|

| Android | 9+ | 12+ |

| Architecture | arm64 | arm64 |

| RAM | 8 GB | 12 GB+ |

| Storage | 3 GB free (model download) | 5 GB+ |

| GPU | Not required (CPU works) | Tensor G3/G4, Snapdragon 8 Gen 2+, Dimensity 9200+ |

| Root | Not required | Not required |

⚠️ 8 GB is the minimum but may still crash on some devices depending on what else is running. 12 GB+ is comfortable. If the app crashes during model loading, close other apps and try again. If it still crashes, your phone doesn't have enough free RAM for a 2.3B model. Hardware limitation, not a bug.

Quick start

- Install the APK

- Grant accessibility permission when prompted

- The model downloads automatically on first launch (~2.6 GB)

- Switch to Task mode, type what you want

No API keys. No cloud config. No account.

Help Wanted

This is the first local LLM that can autonomously control a phone. Built in two all-nighters on a model that dropped days ago. It's already pretty impressive that this works at all, and it's only going to get better as on-device models improve.

That said, there are a LOT of issues. That's expected for something this new. If you run into bugs, weird behavior, or have ideas:

- ⭐ Star this repo if you think local AI phone control matters

- 🐛 Open an issue when something breaks (it will)

- 🍴 Fork it and build on it

Every issue makes this better. Every star helps more people find it.

Acknowledgments

PokeClaw exists because of Gemma 4 by Google DeepMind. Thank you to Clément Farabet, Olivier Lacombe, and the entire Gemma team for shipping an open model with native tool calling under Apache 2.0. You made it possible for a solo developer to build a working phone agent in two nights. The LiteRT-LM runtime is what makes on-device inference practical.

Also inspired by the OpenClaw community 🦞 for proving that AI agents that actually do things are what people want.

And thank you to Claude Code by Anthropic. I'm a CS dropout with zero Android development experience. Claude Code made it possible for me to go from nothing to a working app in two nights. The future is wild.

License

Apache 2.0

Contributors sign our CLA before their first PR is merged.

Yorumlar (0)

Yorum birakmak icin giris yap.

Yorum birakSonuc bulunamadi