altk-evolve

Health Warning

- License — License: Apache-2.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Low visibility — Only 8 GitHub stars

Code Passed

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions Passed

- Permissions — No dangerous permissions requested

This tool provides an MCP server that enables AI agents to learn and improve over time from their past actions. It uses an LLM and a Milvus vector database to analyze agent trajectories and refine a knowledge base of guidelines.

Security Assessment

The overall risk is rated as Low but requires some caution. A light code audit of 12 files found no dangerous patterns, no hardcoded secrets, and no dangerous permission requests. However, the tool does require an OpenAI API key (or LiteLLM proxy), meaning it makes external network requests to LLM providers. It also runs a local web server (Uvicorn on port 8000) for its user interface. Developers should be careful to secure their API keys and be aware of the background HTTP server.

Quality Assessment

The project is of high quality and actively maintained, with its most recent push occurring today. It uses the permissive Apache-2.0 license, making it safe for most commercial and personal projects. Because it is a relatively new and highly specialized research tool (backed by an arXiv paper), community trust and visibility are currently low at only 8 GitHub stars.

Verdict

Safe to use, provided you follow standard security practices for managing your LLM API keys.

Self-improving agents through iterations

Evolve: On‑the‑job learning for AI agents

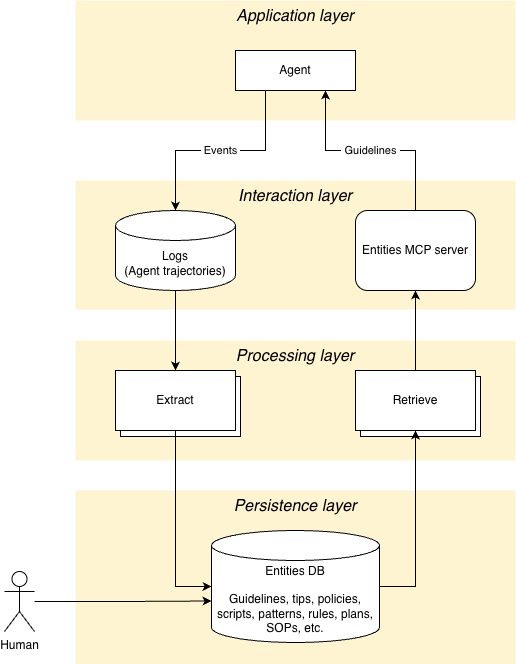

Evolve is a system designed to help agents improve over time by learning from their trajectories. It uses a combination of an MCP server for tool integration, vector storage for memory, and LLM-based conflict resolution to refine its knowledge base.

Features

- MCP Server: Exposes tools to get guidelines and save trajectories.

- Conflict Resolution: Intelligently merges new insights with existing guidelines using LLMs.

- Trajectory Analysis: Automatically analyzes agent trajectories to generate guidelines and best practices.

- Milvus Integration: Uses Milvus (or Milvus Lite) for efficient vector storage and retrieval.

Architecture

Quick Start

Installation

Prerequisites:

- Python 3.12 or higher

- uv (recommended) or pip

git clone <repository_url>

cd altk-evolve

uv venv --python=3.12 && source .venv/bin/activate

uv sync

Configuration

For direct OpenAI usage:

export OPENAI_API_KEY=sk-...

For LiteLLM proxy usage and model selection (including global fallback via EVOLVE_MODEL_NAME), see the configuration guide.
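Before starting the server, it can help to confirm the environment is set up. The following is a minimal sketch, not part of Evolve: the function names are hypothetical, and it only covers the direct OpenAI case described above (a LiteLLM proxy setup may need different variables, as covered in the configuration guide).

```python
import os


def check_llm_config(env=None):
    """Return a list of problems found in the direct-OpenAI configuration.

    `env` defaults to the process environment; passing a dict makes the
    check easy to exercise without touching real variables.
    """
    env = os.environ if env is None else env
    problems = []
    if not env.get("OPENAI_API_KEY"):
        problems.append("OPENAI_API_KEY is not set")
    return problems


def configured_model(env=None):
    """Return EVOLVE_MODEL_NAME if set, else None (Evolve picks its default)."""
    env = os.environ if env is None else env
    return env.get("EVOLVE_MODEL_NAME") or None


if __name__ == "__main__":
    for problem in check_llm_config():
        print(f"warning: {problem}")
    print(f"model override: {configured_model()}")
```

The check never prints the key itself, which keeps it safe to run in shared terminals or CI logs.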

Running the MCP Server & UI

Evolve provides both a standard MCP server and a full Web UI (Dashboard & Entity Explorer).

[!IMPORTANT]

Building from Source: If you cloned this repository (rather than installing a pre-built package), you must build the UI before it can be served.

cd altk_evolve/frontend/ui

npm ci && npm run build

cd ../../../

See altk_evolve/frontend/ui/README.md for more frontend development details.

Starting Both Automatically

The easiest way to start both the MCP Server (on standard input/output) and the HTTP UI backend is to run the module directly:

uv run python -m evolve.frontend.mcp

This will start the UI server in the background on port 8000 and the MCP server in the foreground. You can then access the UI locally by opening your browser to: http://127.0.0.1:8000/ui/

Starting the UI Standalone

If you only want to access the Web UI and API (without the MCP server stdio blocking the terminal), you can run the FastAPI application directly using uvicorn:

uv run uvicorn evolve.frontend.mcp.mcp_server:app --host 127.0.0.1 --port 8000

Then navigate to http://127.0.0.1:8000/ui/.
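To confirm the UI is reachable from a script rather than a browser, a small probe using only the standard library is enough. This is a sketch under the assumption that the /ui/ path answers a plain GET with a 2xx status; the helper names are illustrative.

```python
import urllib.error
import urllib.request


def ui_url(host="127.0.0.1", port=8000):
    """Build the UI address used throughout this README."""
    return f"http://{host}:{port}/ui/"


def ui_is_up(url, timeout=2.0):
    """Return True if a GET on `url` succeeds with a 2xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or an HTTP error status.
        return False


if __name__ == "__main__":
    url = ui_url()
    print(f"{url} reachable: {ui_is_up(url)}")
```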

Starting only the MCP Server

If you're attaching Evolve to an MCP client that requires a direct command (like Claude Desktop):

uv run fastmcp run altk_evolve/frontend/mcp/mcp_server.py --transport stdio

Or for SSE transport:

uv run fastmcp run altk_evolve/frontend/mcp/mcp_server.py --transport sse --port 8201

Verify it's running:

npx @modelcontextprotocol/inspector@latest http://127.0.0.1:8201/sse --cli --method tools/list
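For stdio clients such as Claude Desktop, the stdio command above is usually registered in the client's JSON configuration under an mcpServers key. The sketch below generates such an entry. The server name "evolve" and the repository path are placeholders, and the use of uv's --directory option to run from the cloned repository is an assumption about your setup rather than something the project prescribes.

```python
import json


def mcp_server_entry(repo_dir):
    """Build one mcpServers entry that launches Evolve over stdio.

    `repo_dir` is the path to your local clone (placeholder below).
    """
    return {
        "command": "uv",
        "args": [
            "--directory", repo_dir,  # run uv from the cloned repository
            "run", "fastmcp", "run",
            "altk_evolve/frontend/mcp/mcp_server.py",
            "--transport", "stdio",
        ],
    }


# "evolve" is an arbitrary label; the client only uses it for display.
config = {"mcpServers": {"evolve": mcp_server_entry("/path/to/altk-evolve")}}

if __name__ == "__main__":
    print(json.dumps(config, indent=2))
```

The printed JSON can be merged into the client's existing configuration file. If the server needs OPENAI_API_KEY, the client must also pass it to the process, since desktop clients do not always inherit your shell environment.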

Available tools:

- get_entities(task: str, entity_type: str): Get relevant entities for a specific task, filtered by type (e.g., 'guideline', 'policy').

- get_guidelines(task: str): Get relevant guidelines for a specific task (backward compatibility alias).

- save_trajectory(trajectory_data: str, task_id: str | None): Save a conversation trajectory and generate new guidelines.

- create_entity(content: str, entity_type: str, metadata: str | None, enable_conflict_resolution: bool): Create a single entity in the namespace.

- delete_entity(entity_id: str): Delete a specific entity by its ID.
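These tools can also be called from Python. The sketch below uses the fastmcp Client against the SSE endpoint started above. Because trajectory_data is typed as a string, the example assumes it carries a JSON-serialized list of chat messages; that payload shape is a guess for illustration, not a documented format, and the helper name is hypothetical.

```python
import json


def build_save_trajectory_args(messages, task_id=None):
    """Build arguments for save_trajectory.

    Assumption: trajectory_data is a JSON string of chat messages.
    """
    args = {"trajectory_data": json.dumps(messages)}
    if task_id is not None:
        args["task_id"] = task_id
    return args


async def main():
    # Imported here so the helper above works without fastmcp installed.
    from fastmcp import Client

    # Assumes the SSE server from this README is running on port 8201.
    async with Client("http://127.0.0.1:8201/sse") as client:
        tools = await client.list_tools()
        print([tool.name for tool in tools])

        guidelines = await client.call_tool(
            "get_guidelines", {"task": "Summarize a long support ticket"}
        )
        print(guidelines)

        messages = [
            {"role": "user", "content": "Summarize this ticket."},
            {"role": "assistant", "content": "Here is a summary."},
        ]
        saved = await client.call_tool(
            "save_trajectory",
            build_save_trajectory_args(messages, task_id="demo-1"),
        )
        print(saved)

# To run against a live server:
#   import asyncio; asyncio.run(main())
```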

Tip Provenance

Evolve automatically tracks the origin of every guideline it generates or stores. Every tip entity contains metadata identifying its source:

- creation_mode: Identifies how the tip was created (auto-phoenix via trace observability, auto-mcp via trajectory saving tools, or manual).

- source_task_id: The ID of the original trace or task that inspired the tip, providing full auditability.

See the Low-Code Tracing Guide for more details.
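Provenance metadata makes it straightforward to audit where tips came from. The sketch below groups entities by creation_mode and finds the tips derived from one task. It assumes each entity is available as a dict with a "metadata" mapping, which is an illustrative shape and not necessarily how Evolve returns entities.

```python
from collections import defaultdict


def group_by_creation_mode(entities):
    """Group entities by metadata['creation_mode'] ('unknown' if missing)."""
    groups = defaultdict(list)
    for entity in entities:
        meta = entity.get("metadata") or {}
        groups[meta.get("creation_mode", "unknown")].append(entity)
    return dict(groups)


def tips_from_task(entities, task_id):
    """Return the entities whose source_task_id matches `task_id`."""
    return [
        entity
        for entity in entities
        if (entity.get("metadata") or {}).get("source_task_id") == task_id
    ]


# Hypothetical sample data, for illustration only.
sample = [
    {"content": "Check units before converting.",
     "metadata": {"creation_mode": "auto-mcp", "source_task_id": "task-1"}},
    {"content": "Retry flaky API calls once.",
     "metadata": {"creation_mode": "auto-phoenix", "source_task_id": "trace-9"}},
    {"content": "Prefer concise answers.",
     "metadata": {"creation_mode": "manual"}},
]

if __name__ == "__main__":
    for mode, items in group_by_creation_mode(sample).items():
        print(mode, len(items))
    print(tips_from_task(sample, "task-1"))
```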

Community & Feedback

Evolve is an active project, and real‑world usage helps guide its direction.

If Evolve is useful or aligned with your work, consider giving the repo a ⭐ — it helps others discover it.

If you’re experimenting with Evolve or exploring on‑the‑job learning for agents, feel free to open an issue or discussion to share use cases, ideas, or feedback.

Documentation

- Documentation Home - Overview of guides, reference docs, and tutorials

- Installation - Setup instructions for supported platforms

- Configuration - Environment variables and backend options

- CLI Reference - Command-line interface documentation

- Evolve Lite - Lightweight Claude Code plugin mode

- Claude Code Demo - End-to-end demo walkthrough

- Policies - Policy support and schema

Development

Running Tests

The test suite is organized into 4 cleanly isolated tiers depending on infrastructure requirements:

Default Local Suite

Runs both fast logic tests (unit) and filesystem script verifications (platform_integrations).

uv run pytest

Unit Tests (Only)

Fast, fully mocked tests verifying core logic and offline pipeline schemas.

uv run pytest -m unit

Platform Integration Tests

Fast filesystem-level integration tests verifying local tool installation and idempotency.

uv run pytest -m platform_integrations

End-to-End Infrastructure Tests

Heavy tests that autonomously spin up a background Phoenix server and simulate full agent workflows.

uv run pytest -m e2e --run-e2e

See the Low-Code Tracing Guide for more details.

LLM Evaluation Tests

Tests that need active LLM inference to exercise the resolution pipelines (requires LLM API keys).

uv run pytest -m llm
