hecate

Health Pass

- License — MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 10 GitHub stars

Code Fail

- rm -rf — Recursive force deletion command in .claude/settings.json

- spawnSync — Synchronous process spawning in scripts/capture-screenshots.ts

- process.env — Environment variable access in scripts/capture-screenshots.ts

- network request — Outbound network request in scripts/capture-screenshots.ts

Permissions Pass

- Permissions — No dangerous permissions requested

This tool is an open-source AI gateway and agent-task runtime that acts as a central control plane. It sits between AI clients and model providers to manage routing, spend controls, and task execution, while offering observability and multi-tenant management.

Security Assessment

The application inherently manages sensitive data like API keys and environment variables to route AI requests, and it makes outbound network requests to external model providers. However, several specific code patterns raised flags during the audit. A recursive force deletion command (`rm -rf`) was found in a configuration file, and a script uses synchronous process spawning. Additionally, that same script accesses environment variables and makes network requests. There are no hardcoded secrets, and the tool does not request broadly dangerous permissions. Overall risk is rated as Medium.

Quality Assessment

The project is actively maintained, with its most recent push occurring today. It uses the highly permissive MIT license and features a robust README with clear documentation, badges for testing, and Go report card integration. However, community adoption is still low: the repository has only 10 GitHub stars.

Verdict

Use with caution: the tool is active and properly licensed, but its low community adoption and the presence of potentially risky commands in its scripts warrant a careful security review before deploying in production.

Open-source AI gateway and agent-task runtime that gives teams one operational control plane across cloud and local models, with built-in policy, spend controls, and first-class OpenTelemetry.

Hecate

Hecate is an open-source AI gateway and agent-task runtime for teams that want one control plane for model access, cost governance, routing, caching, observability, and controlled agent execution.

It sits between AI clients and model providers. Existing OpenAI-compatible and Anthropic-compatible clients can point at Hecate, while operators get a place to manage providers, costs, traces, cache behavior, and queued agent work. Multi-tenant management is opt-in — the default deployment is a single-user gateway with one admin bearer.

Table Of Contents

- Why Hecate

- Modes

- Quick Start

- Architecture

- Operator UI

- What Works Today

- Configuration

- Documentation

- Contributing

- License

Why Hecate

AI workloads are moving from simple API calls to long-running agents, tool use, local/cloud routing, and budget-sensitive automation. Hecate is built for that messier runtime layer.

- One gateway for many clients — OpenAI Chat Completions and Anthropic Messages shapes.

- Local and cloud providers together — OpenAI, Anthropic, Ollama, LM Studio, LocalAI, llama.cpp-compatible servers, and other shipped presets.

- Operator-controlled spend — balances, pricebook management, rate limits, audit history, and (opt-in) per-tenant API keys with model/provider scoping.

- Runtime visibility — request ledger, route reports, failover details, cost, cache path, trace IDs, and OpenTelemetry export.

- Agent-task runtime — queued tasks, approvals, controlled shell/file/git execution, resumable runs, and MCP integration.

- Single binary deploy — Go gateway with the React operator UI embedded via go:embed. One process, one port, one volume; no separate frontend service to run.
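The spend-control bullet above comes down to simple arithmetic: price per token times tokens used, deducted from a balance. The following is a minimal sketch of that idea, not Hecate's implementation. The pricebook layout, the `example-model` entry, and its prices are hypothetical and chosen only for illustration.

```python
from decimal import Decimal

# Hypothetical pricebook: USD per one million tokens. Values are illustrative only.
PRICEBOOK = {
    "example-model": {"input": Decimal("1.00"), "output": Decimal("2.00")},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Price one request from its token counts using the pricebook entry."""
    price = PRICEBOOK[model]
    million = Decimal(1_000_000)
    return (price["input"] * input_tokens + price["output"] * output_tokens) / million


def charge(balance: Decimal, cost: Decimal) -> Decimal:
    """Deduct a cost from a balance, refusing to go negative."""
    if cost > balance:
        raise ValueError("insufficient balance")
    return balance - cost
```

Using `Decimal` rather than floats keeps small per-request amounts from accumulating rounding error across many requests.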

Modes

Hecate runs in one of two modes. The flag is read at startup; you can switch between runs without losing state.

| | Single-user (default) | Multi-tenant (opt-in) |
|---|---|---|
| Flag | GATEWAY_MULTI_TENANT=false | GATEWAY_MULTI_TENANT=true |
| Auth | One admin bearer; loopback handshake auto-fills it for same-host browsers. | Admin bearer plus per-tenant API keys, each scoped to allowed providers and models. |
| Operator UI | Chats, Providers, Tasks, Observability, Costs, Settings (Pricing / Policy / Retention). | Same plus the Tenants and Keys tabs in Settings. |
| Observability | Admin sees everything; tenants see nothing because there are no tenants. | Tenants see their own traces / requests / runtime stats via /v1/* mirrors of the /admin/* endpoints. |
| Use when | One operator on one host; local dev; a personal gateway behind a single key. | Multiple consumers, per-key audit, scoped credentials. |

The published Docker image ships single-user. Full breakdown in docs/tenants.md.
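To make the "scoped to allowed providers and models" idea concrete, here is a small sketch of how a scoped key check could look. `TenantKey` and its fields are hypothetical and do not reflect Hecate's actual schema. Treating only the literal value `true` as enabling multi-tenant mode is also an assumption.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TenantKey:
    """Hypothetical shape of a per-tenant API key; not Hecate's actual schema."""
    tenant: str
    allowed_providers: frozenset = field(default_factory=frozenset)
    allowed_models: frozenset = field(default_factory=frozenset)

    def permits(self, provider: str, model: str) -> bool:
        """A request passes only if both its provider and model are in scope."""
        return provider in self.allowed_providers and model in self.allowed_models


def is_multi_tenant(env: dict) -> bool:
    """Read the mode flag; treating only 'true' as enabled is an assumption."""
    return env.get("GATEWAY_MULTI_TENANT", "false").strip().lower() == "true"
```

In this sketch an empty scope permits nothing, which is the conservative default for a credential.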

Quick Start

Single-user path; for multi-tenant see docs/tenants.md.

1. Run the image

docker run --rm -p 8765:8765 -v hecate-data:/data \
  ghcr.io/chicoxyzzy/hecate:0.1.0-alpha.7

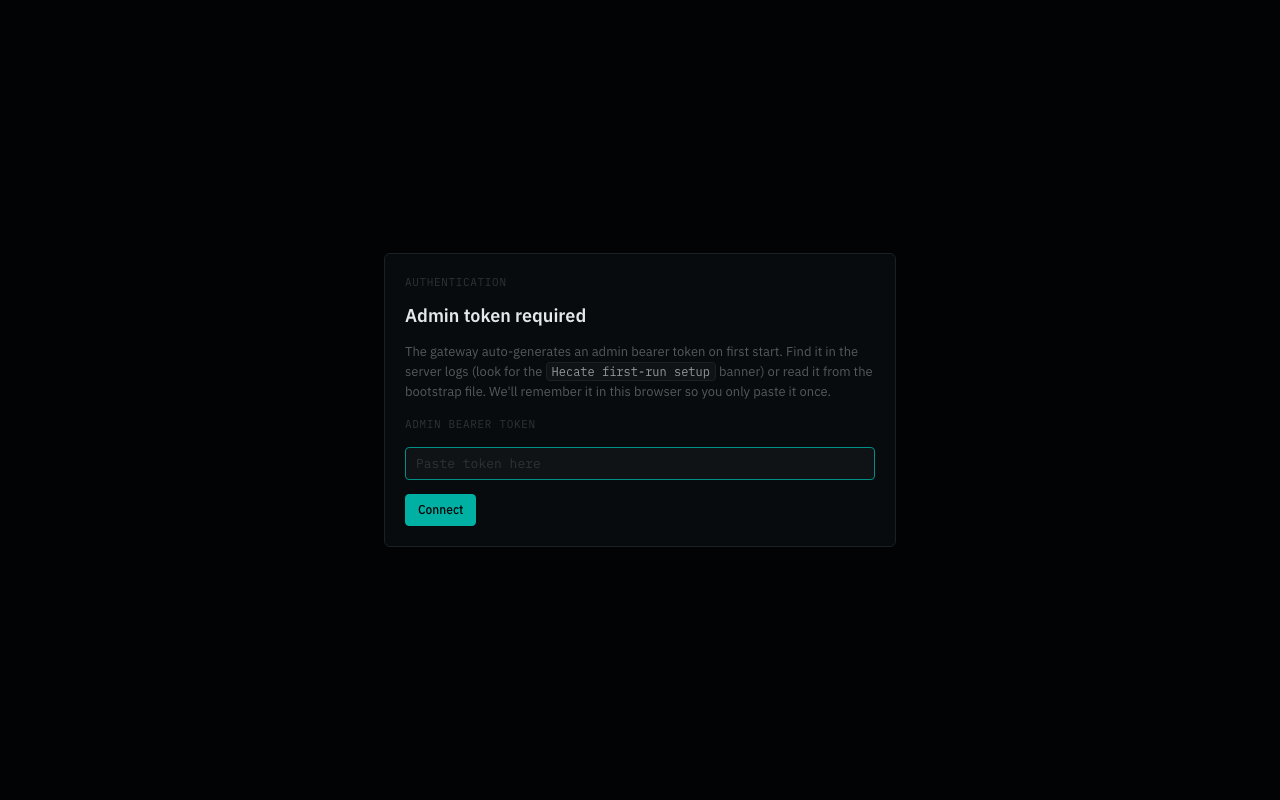

2. Open the UI

Open http://127.0.0.1:8765. On a localhost browser the console picks up the generated admin bearer through a same-origin loopback handshake — no token paste needed.
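The README does not document how the handshake decides that a browser is on the same host. As a rough illustration only, a server could require both a loopback peer address and a loopback hostname in the `Host` header. The function names below are hypothetical, and the real check may differ.

```python
import ipaddress
from urllib.parse import urlsplit


def is_loopback_addr(addr: str) -> bool:
    """True for 127.0.0.0/8 and ::1; False for anything that is not an IP."""
    try:
        return ipaddress.ip_address(addr).is_loopback
    except ValueError:
        return False


def looks_same_host(remote_addr: str, host_header: str) -> bool:
    """Assumed rule: loopback peer AND a loopback or 'localhost' Host header."""
    hostname = urlsplit("//" + host_header).hostname or ""
    host_is_local = hostname == "localhost" or is_loopback_addr(hostname)
    return is_loopback_addr(remote_addr) and host_is_local
```

Under this assumed rule, a request arriving through a reverse proxy with a different hostname fails the second condition even if the proxy itself connects over loopback.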

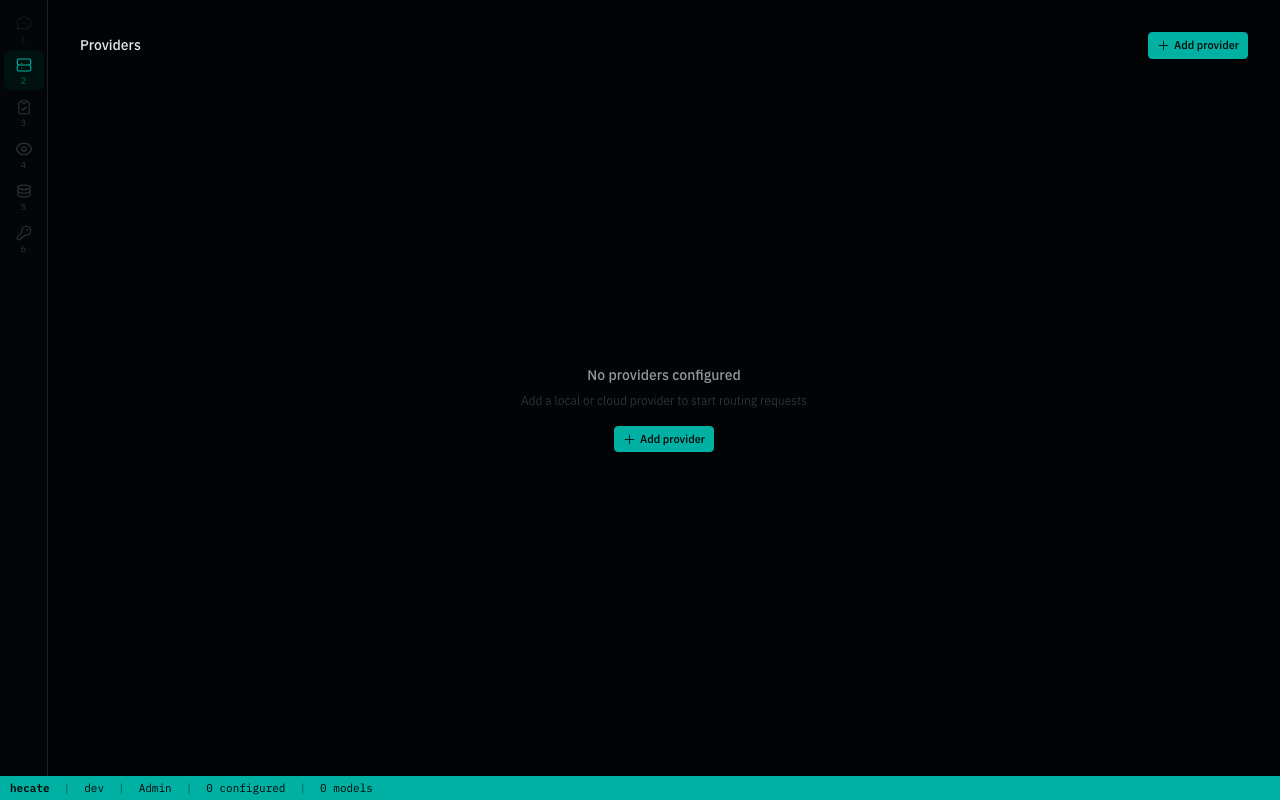

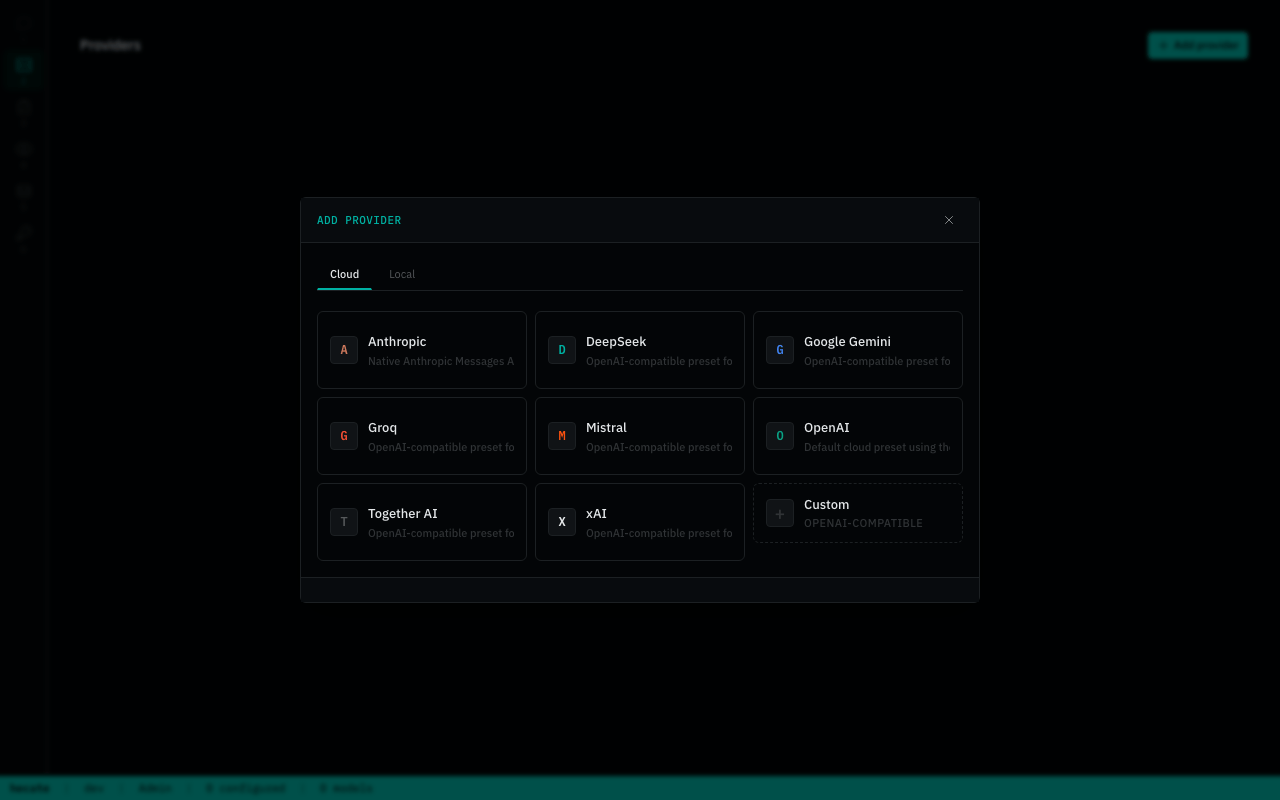

3. Add a provider

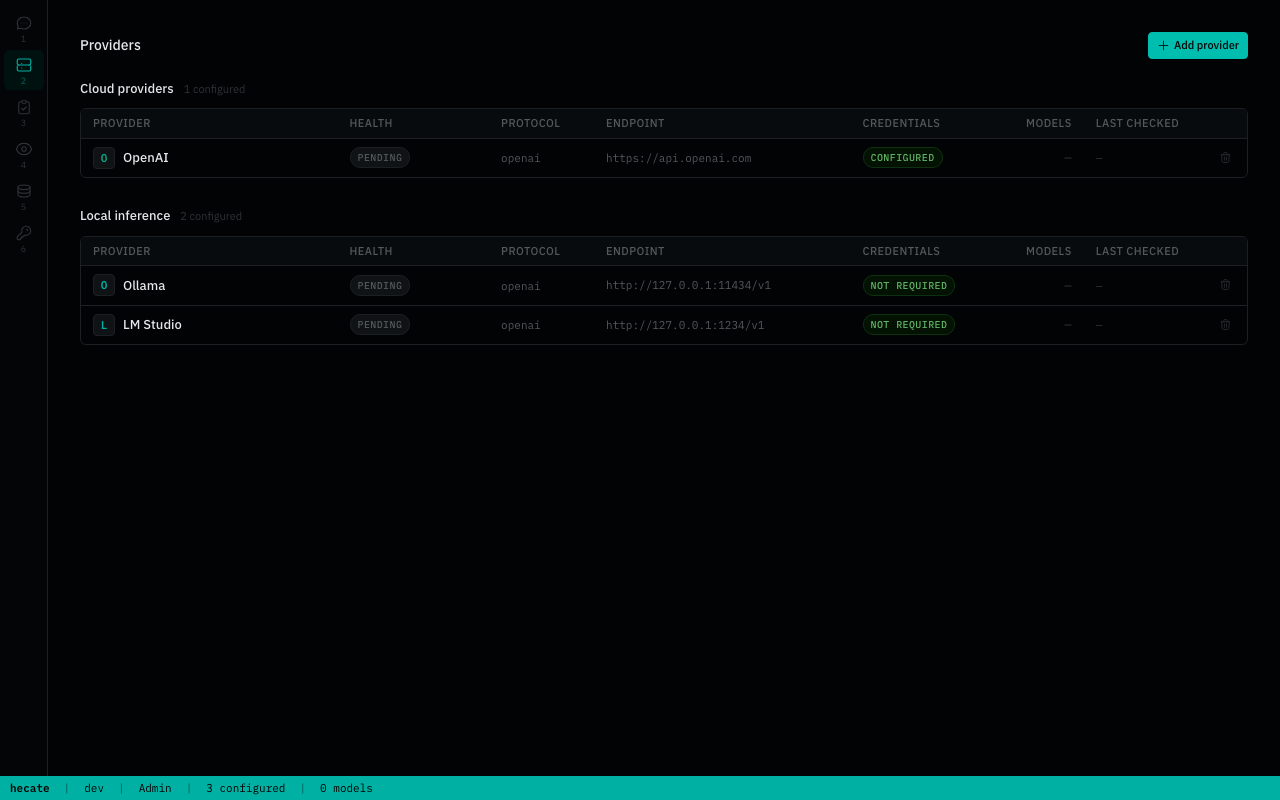

The Providers tab starts empty. Click Add provider, pick a preset (or Custom for any OpenAI-compatible endpoint), and paste an API key (cloud) or endpoint URL (local).

Cloud presets need an API key; local presets just need the runtime listening on its default port. Full catalog, custom-endpoint walk-through, and credential rotation in docs/providers.md.

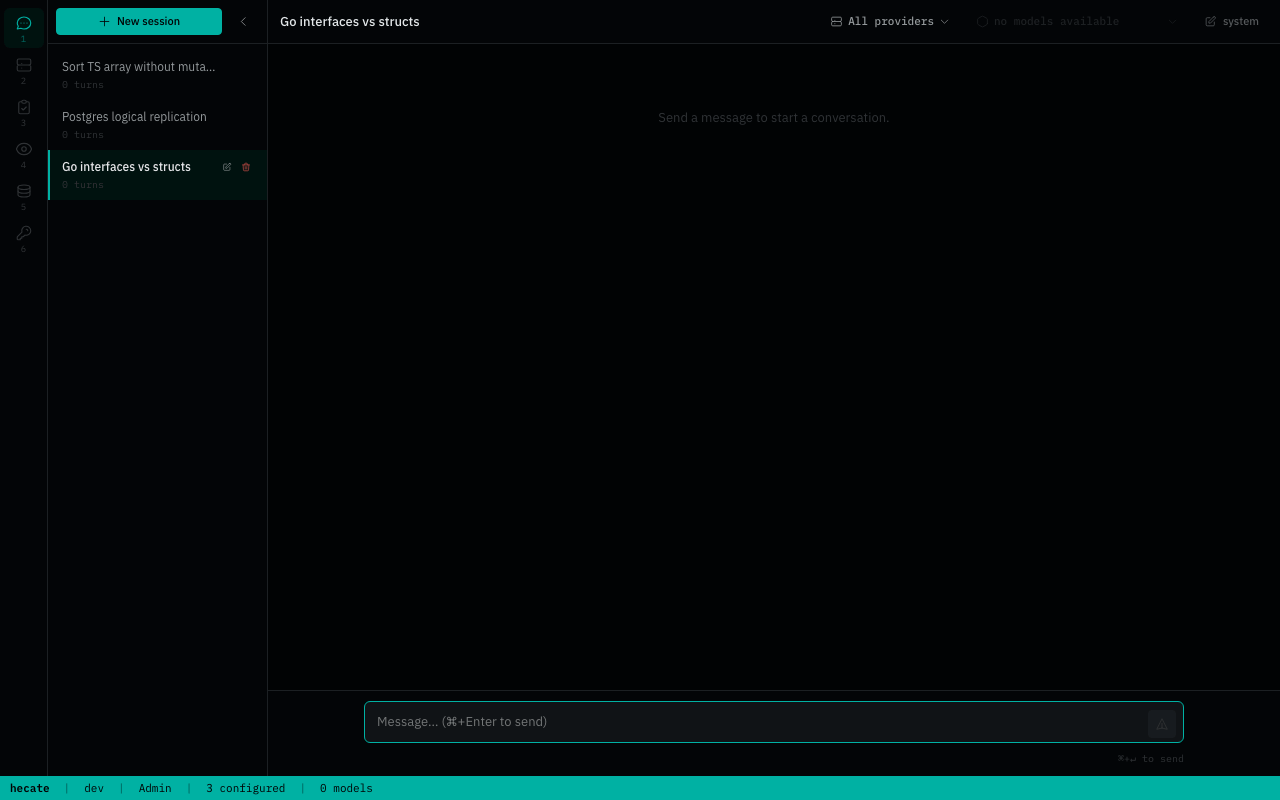

4. Talk to it
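A minimal sketch of a first request, assuming the gateway from step 1 is listening on 127.0.0.1:8765 and accepts the admin bearer in a standard `Authorization: Bearer` header. That header format is an assumption; this README only says an admin bearer exists. The token and model name are placeholders, and the path `/v1/chat/completions` is the one listed in the architecture diagram.

```python
import json
import urllib.request

HECATE_URL = "http://127.0.0.1:8765"  # port from the docker run command in step 1


def build_chat_request(token: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style Chat Completions request aimed at Hecate."""
    body = {
        "model": model,  # placeholder; use a model your provider exposes
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{HECATE_URL}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # assumed header format
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(req: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response (needs a running gateway)."""
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)
```

Calling `send(build_chat_request(token, model, "Hello"))` should return the decoded response once a provider is configured. Existing OpenAI-compatible SDKs can usually be pointed at the same base URL instead of hand-building requests.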

The loopback handshake only fires for same-host browsers. Anywhere else (Tailscale, port-forward over SSH, reverse proxy with a different hostname) the UI shows a token paste prompt.

The bootstrap token is printed once to the container logs:

============================================================

Hecate first-run setup — admin bearer token generated.

7f2a91b... (truncated)

Saved to /data/hecate.bootstrap.json (mode 0600).

============================================================

It also lives in hecate.bootstrap.json on the hecate-data volume — recovery instructions in docs/deployment.md.
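Because the bootstrap file holds the admin bearer, it is worth confirming that a restored or copied file still has the owner-only mode shown in the log above. A small sketch using only the standard library follows. The helper name is hypothetical, and the file's internal JSON layout is deliberately not assumed here.

```python
import os
import stat

# Path as printed in the first-run log; adjust if the volume is mounted elsewhere.
BOOTSTRAP_PATH = "/data/hecate.bootstrap.json"


def is_owner_only(path: str) -> bool:
    """Return True when the file's permission bits are exactly 0600."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return mode == 0o600
```

On a POSIX host, `is_owner_only(BOOTSTRAP_PATH)` returning False suggests the file was copied with looser permissions and should be tightened with `chmod 600`.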

Cloning the repo lets you pick up optional compose profiles or rebuild from source:

docker compose up # uses the ghcr.io image; first run pulls

docker compose --profile postgres up # adds Postgres for durable state across all subsystems

Local development:

make dev

Pinned image tags, single-file binaries (linux/darwin × amd64/arm64), and checksums are in docs/deployment.md. Local development knobs in docs/development.md.

Provider keys can also be pre-seeded via .env for fleet automation — PROVIDER_<NAME>_API_KEY, _BASE_URL, _DEFAULT_MODEL, plus the _PRECONFIGURED=1 gate. See docs/providers.md. The /admin/control-plane/providers endpoints mirror every UI action for programmatic management.
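For fleet automation, the variable pattern above can be generated rather than typed by hand. This sketch builds the entries for one provider. Upper-casing the provider name and writing unquoted `KEY=value` lines are assumptions, so check docs/providers.md for the exact rules.

```python
def provider_env(name: str, api_key: str = "", base_url: str = "",
                 default_model: str = "") -> dict:
    """Build PROVIDER_<NAME>_* entries for one provider, skipping empty values."""
    prefix = f"PROVIDER_{name.upper()}"  # upper-casing is an assumption
    env = {f"{prefix}_PRECONFIGURED": "1"}  # the gate named in the README
    if api_key:
        env[f"{prefix}_API_KEY"] = api_key
    if base_url:
        env[f"{prefix}_BASE_URL"] = base_url
    if default_model:
        env[f"{prefix}_DEFAULT_MODEL"] = default_model
    return env


def render_dotenv(env: dict) -> str:
    """Render entries as sorted KEY=value lines suitable for a .env file."""
    return "\n".join(f"{key}={value}" for key, value in sorted(env.items())) + "\n"
```

For example, `render_dotenv(provider_env("ollama", base_url="http://127.0.0.1:11434"))` produces the two lines needed to pre-seed a local Ollama endpoint on its default port.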

Architecture

One Go process, one port. Inside it: a chat/messages gateway that mediates client traffic to upstream providers, and a task runtime that queues agent work, drives approvals, and shells out through a sandbox boundary. The React operator UI is embedded into the same binary and served from the same port.

flowchart LR
  Clients["Clients<br/>Codex, Claude Code, SDKs"]
  Browser["Browser<br/>(operator UI)"]

  subgraph Hecate["Hecate (single binary, :8765)"]
    direction TB
    Gateway["Gateway<br/>/v1/chat/completions<br/>/v1/messages<br/>/v1/models"]
    Runtime["Task runtime<br/>/v1/tasks/*<br/>queue + workers + sandbox"]
    UI["Embedded UI<br/>(go:embed ui/dist)"]
  end

  Clients --> Gateway
  Clients --> Runtime
  Browser --> UI
  UI --> Gateway
  UI --> Runtime
  Gateway --> Providers["Cloud + local providers"]
  Gateway --> Cache["Exact + semantic cache"]
  Runtime --> Sandbox["sandboxd<br/>(out-of-process exec)"]
  Runtime --> MCP["External MCP servers"]
  Gateway --> OTel["OpenTelemetry"]
  Runtime --> OTel

For deeper internals, read docs/architecture.md, docs/runtime-api.md, and docs/events.md.
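The split between the two components in the diagram can be read as a path-based dispatch on a single port. The sketch below mirrors only the paths named in the diagram. It is an illustration of the layout, not the gateway's router, and everything outside those paths is lumped into `other`.

```python
# Paths taken from the architecture diagram above.
GATEWAY_PATHS = ("/v1/chat/completions", "/v1/messages", "/v1/models")
RUNTIME_PREFIX = "/v1/tasks"


def component_for(path: str) -> str:
    """Map a request path to the component that would handle it in the diagram."""
    if path == RUNTIME_PREFIX or path.startswith(RUNTIME_PREFIX + "/"):
        return "runtime"
    if path in GATEWAY_PATHS:
        return "gateway"
    # Embedded UI assets and admin endpoints are not modeled in this sketch.
    return "other"
```

Matching the prefix with a trailing slash avoids treating an unrelated path such as `/v1/tasksomething` as a task-runtime route.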

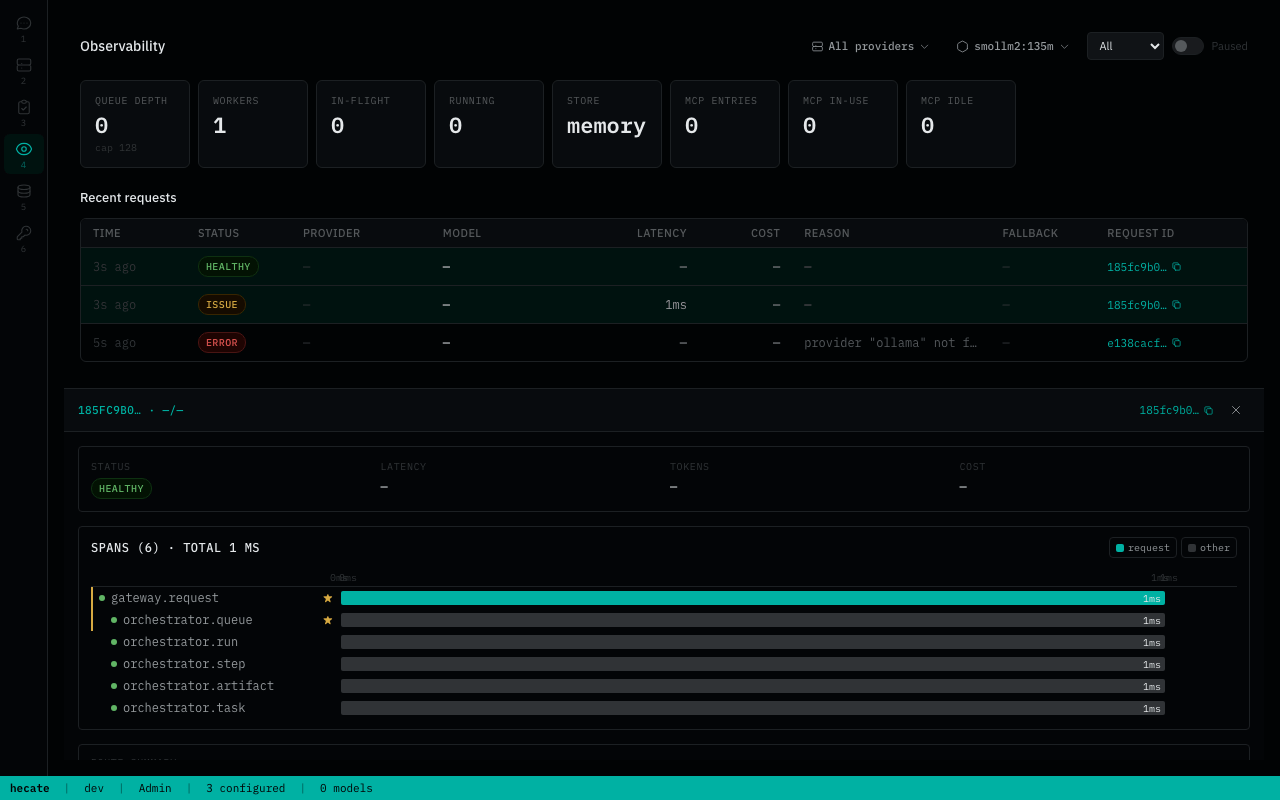

Operator UI

The embedded UI is a runtime console for operators.

- Chats — send requests through Hecate, choose provider/model, inspect per-turn route/cost/cache metadata.

- Providers — manage provider credentials, defaults, model discovery, base URLs, and health.

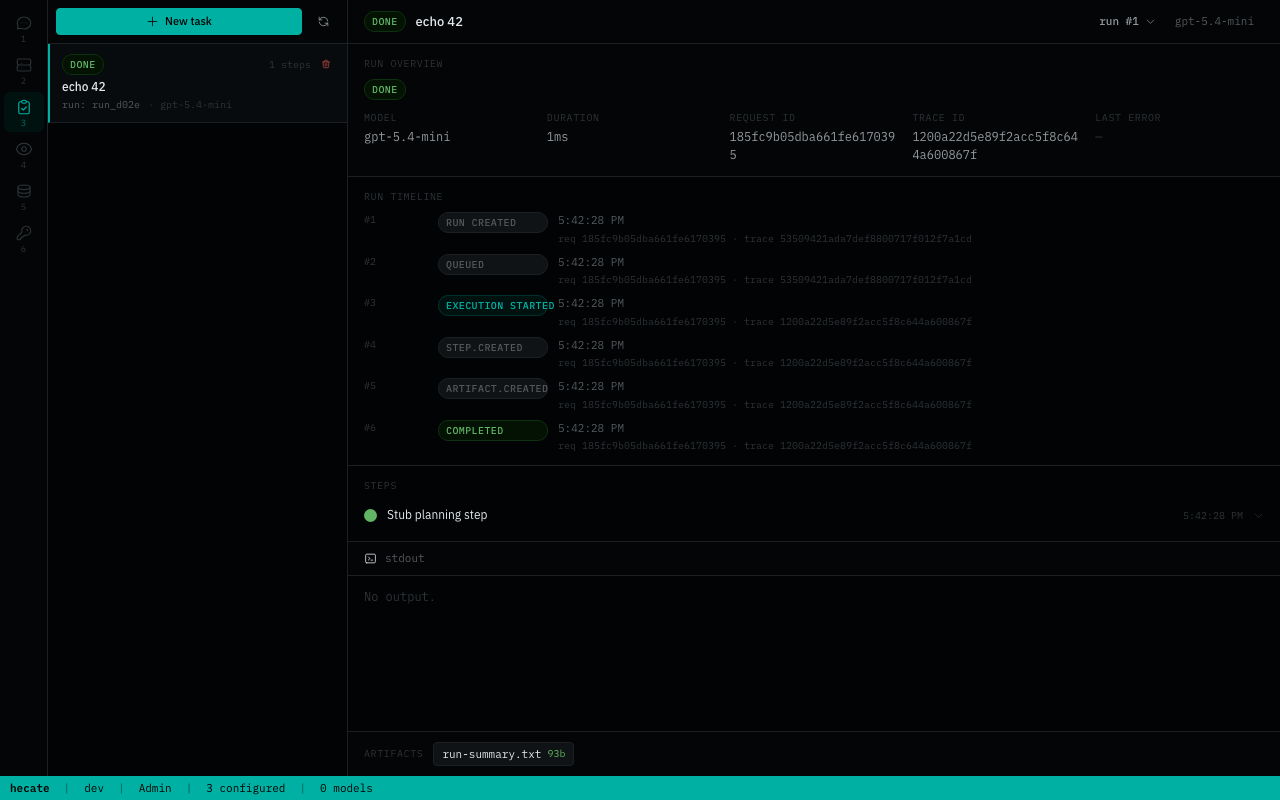

- Tasks — create and manage agent runs, approvals, retries, resumes, and streamed output.

- Observability — inspect requests, route candidates, skip reasons, failover, costs, cache decisions, and trace events.

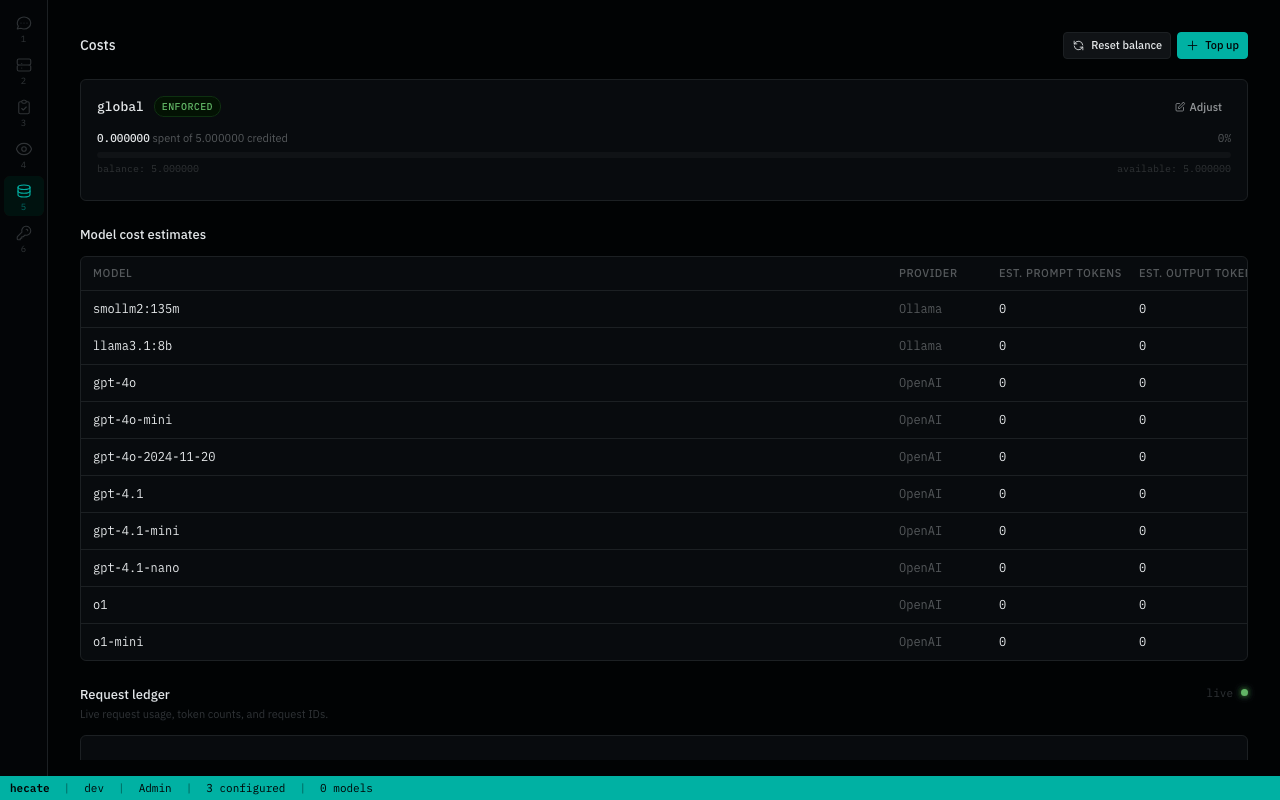

- Costs — balance, top-up / reset, usage table.

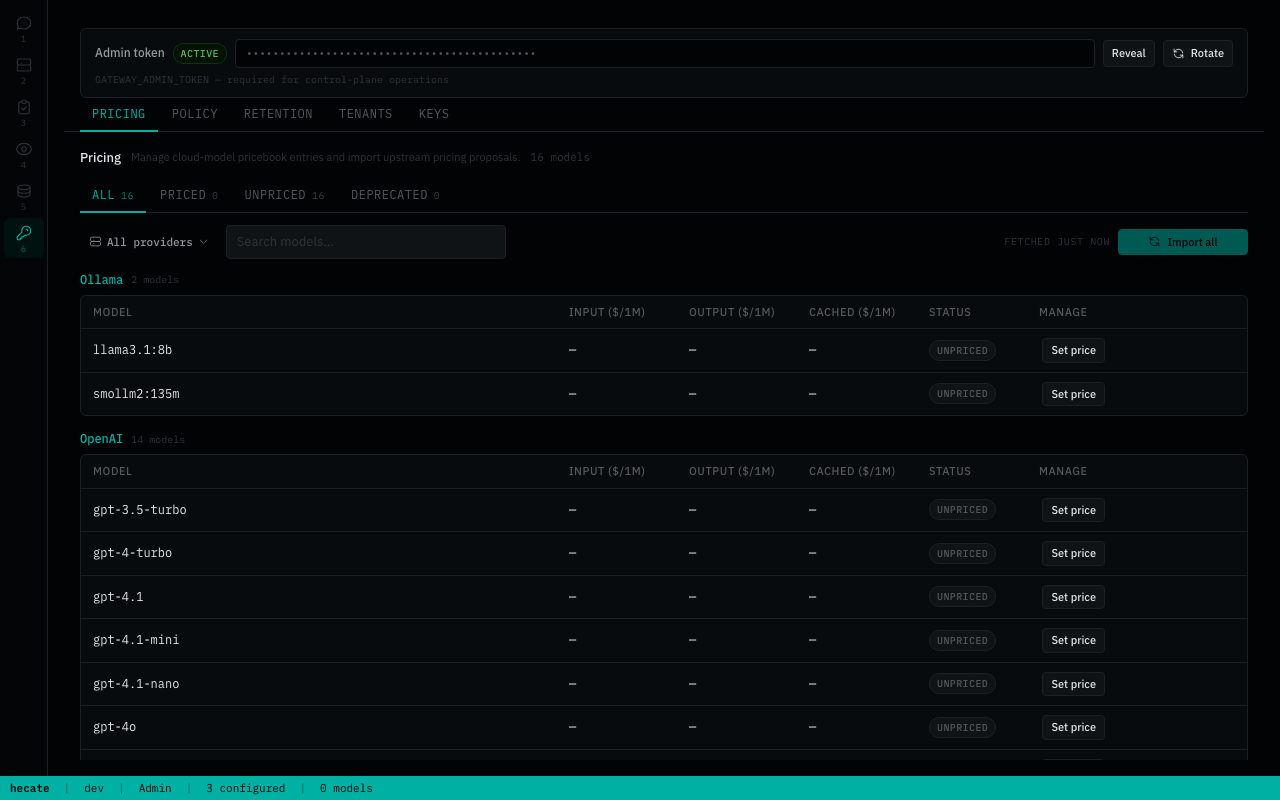

- Settings — pricebook, policy rules, retention, and (when GATEWAY_MULTI_TENANT=true) tenants + API keys.

What Works Today

Hecate is public-alpha software. The core gateway path is usable; the agent runtime and sandbox are intentionally still evolving.

| Area | State | Notes |
|---|---|---|
| OpenAI-compatible gateway | Usable | Chat Completions, streaming, vision, model discovery |
| Anthropic-compatible gateway | Usable | Messages API shape, streaming translation, Claude Code support |
| Provider catalog | Usable | Built-in presets, encrypted credentials, health, routing readiness |
| Local providers | Usable | Ollama, LM Studio, LocalAI, llama.cpp-compatible servers |
| Auth | Usable | Admin bearer with same-origin loopback handshake; GATEWAY_AUTH_DISABLED for upstream-terminated auth |
| Tenants and API keys | Opt-in | GATEWAY_MULTI_TENANT=true exposes tenant + key management with provider/model scoping |
| Budgets and rate limits | Usable | Balances, warning thresholds, pricebook, 429 rate-limit headers |
| Caching | Usable | Exact cache; semantic cache is available but still early |
| OpenTelemetry | Usable | OTLP traces, metrics, logs, response headers, local trace view |
| Storage tiers | Usable | Memory, SQLite, Postgres, selected per subsystem |
| Operator UI | Usable | Main workflows are present; debugging ergonomics are still improving |
| Agent task runtime | Alpha | Queues, approvals, resumable runs, agent_loop, MCP integration |
| Execution isolation | Alpha | sandboxd boundary exists; stronger OS-level isolation is future work |

Read docs/known-limitations.md before treating Hecate as production-stable.
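Clients that hit the rate limits listed in the table need some retry policy for 429 responses. The table does not say which headers Hecate sends, so this sketch assumes the standard HTTP `Retry-After` header in its seconds form and falls back to capped exponential backoff when it is absent. The function name and default values are illustrative.

```python
from typing import Mapping, Optional


def retry_delay(status: int, headers: Mapping[str, str], attempt: int,
                base: float = 0.5, cap: float = 30.0) -> Optional[float]:
    """Return seconds to wait before retrying, or None if no retry is needed."""
    if status != 429:
        return None
    retry_after = headers.get("Retry-After")  # assumed header; may be absent
    if retry_after is not None:
        try:
            return min(cap, max(0.0, float(retry_after)))
        except ValueError:
            pass  # HTTP-date form is not handled in this sketch
    # Fallback: exponential backoff, doubling per attempt up to the cap.
    return min(cap, base * (2 ** attempt))
```

Capping the delay keeps a misbehaving or very large header value from stalling a client indefinitely.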

Configuration

The README intentionally stays light on configuration. The source of truth is:

- .env.example — practical first-run environment knobs.

- docs/deployment.md — Docker, storage tiers, rate limits, image pinning, reset/recovery.

- docs/providers.md — provider presets, local runtimes, credentials, health.

- docs/telemetry.md — OTLP traces, metrics, logs, collector recipes.

- docs/agent-runtime.md — task runtime, approvals, tools, workspace modes.

- docs/mcp.md — MCP server and MCP tool integration.

Documentation

Browse the full index at docs/README.md. Highlights:

- Run it — Deployment, Providers, Tenants and API keys, Known limitations

- Use it — Runtime API, Agent runtime, Events, MCP integration

- Observe it — Telemetry

- Build it — Architecture, Development, Release, Chat sessions internals

Contributing

See CONTRIBUTING.md. If you work with an AI assistant, start with AGENTS.md; the vendor-neutral agent instruction layer lives in ai/.

License

MIT. See LICENSE.

Third-party data and software notices live in NOTICE.md, including LiteLLM pricing-data attribution.
