skylos

Health Gecti

- License — License: Apache-2.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 361 GitHub stars

Code Basarisiz

- eval() — Dynamic code execution via eval() in app.py

- exec() — Shell command execution in app.py

- rm -rf — Recursive force deletion command in app.py

- network request — Outbound network request in app.py

- child_process — Shell command execution capability in editors/vscode/out/ai.js

- exec() — Shell command execution in editors/vscode/out/ai.js

- network request — Outbound network request in editors/vscode/out/ai.js

Permissions Gecti

- Permissions — No dangerous permissions requested

Bu listing icin henuz AI raporu yok.

Open-source Python, TypeScript, and Go SAST with dead code detection. Finds secrets, exploitable flows, and AI regressions. VS Code extension, GitHub Action, and MCP server for AI agents.

Skylos: Open-Source Python SAST, Dead Code Detection, and AI Code Security

Find unused code, hardcoded secrets, exploitable flows, and AI-generated security regressions in Python, TypeScript, and Go. Run locally or gate pull requests in CI/CD.

📖 Website · Documentation · Blog · GitHub Action · VS Code Extension · MCP Server

What is Skylos?

Skylos is an open-source static analysis tool and PR gate for Python, TypeScript, and Go. It helps teams detect dead code, hardcoded secrets, exploitable flows, and AI-generated security regressions before they land in main.

If you use Vulture for dead code, Bandit for security checks, or Semgrep/CodeQL for CI enforcement, Skylos combines those workflows with framework-aware dead code detection and diff-aware regression detection for AI-assisted refactors.

The core use case is straightforward: run it locally, add it to CI, and gate pull requests on real findings with GitHub annotations and review comments. Advanced features like AI defense, remediation agents, VS Code, MCP, and cloud upload are available, but you do not need any of them to get value from Skylos.

Best for

- Python teams that want dead code detection with fewer false positives than Vulture

- Repositories using Cursor, Copilot, Claude Code, or other AI coding assistants

- CI/CD pull request gates with GitHub annotations and review comments

- Python LLM applications that need OWASP LLM Top 10 checks

Available as

- CLI for local scans and CI/CD workflows

- GitHub Action for pull request gating and annotations

- VS Code extension for in-editor findings and AI-assisted fixes

- MCP server for AI agents and coding assistants

Start here

| Goal | Command | What you get |

|---|---|---|

| Scan a repo | skylos . -a |

Dead code, risky flows, secrets, and code quality findings |

| Gate pull requests | skylos cicd init |

A GitHub Actions workflow with a quality gate and inline annotations |

| Audit an LLM app | skylos defend . |

Optional AI defense checks for Python LLM integrations |

Why teams adopt it

- Better dead code signal on real frameworks: Skylos understands FastAPI, Django, Flask, pytest, Next.js, React, and more, so dynamic code produces less noise.

- Diff-aware AI regression detection: Skylos can catch removed auth decorators, CSRF, rate limiting, validation, logging, and other controls that disappear during AI-assisted refactors.

- One workflow instead of three tools: Dead code, security scanning, and PR gating live in the same CLI and CI flow.

- Local-first by default: You can keep scans on your machine and add optional AI or cloud features later if you need them.

- Self-explaining output: Every table prints a legend explaining what each column and number means — no manual required.

Why Skylos over Vulture for Python dead code detection?

| Skylos | Vulture | |

|---|---|---|

| Recall | 98.1% (51/52) | 84.6% (44/52) |

| False Positives | 220 | 644 |

| Framework-aware (FastAPI, Django, pytest) | Yes | No |

| Security scanning (secrets, SQLi, SSRF) | Yes | No |

| AI-powered analysis | Yes | No |

| CI/CD quality gates | Yes | No |

| TypeScript + Go support | Yes | No |

Benchmarked on 9 popular Python repos (350k+ combined stars) + TypeScript (consola). Every finding manually verified. Full case study →

🚀 New to Skylos? Start with CI/CD Integration

# Generate a GitHub Actions workflow in 30 seconds

skylos cicd init

# Commit and push to activate

git add .github/workflows/skylos.yml && git push

What you get:

- Automatic dead code detection on every PR

- Security vulnerability scanning (SQLi, secrets, dangerous patterns)

- Quality gate that fails builds on critical issues

- Inline PR review comments with file:line links

- GitHub Annotations visible in the "Files Changed" tab

No configuration needed - works out of the box with sensible defaults. See CI/CD section for customization.

Table of Contents

- What is Skylos?

- Quick Start

- Technical Debt Hotspots

- Key Capabilities

- Installation

- Skylos vs Vulture

- Projects Using Skylos

- How It Works

- Advanced Workflows

- CI/CD

- MCP Server

- Baseline Tracking

- Gating

- VS Code Extension

- Integration and Ecosystem

- Auditing and Precision

- Coverage Integration

- Filtering

- CLI Options

- FAQ

- Limitations and Troubleshooting

- Contributing

- Roadmap

- License

- Contact

Quick Start

If you are evaluating Skylos, start with the core workflow below. The LLM and AI defense commands are optional.

Core Workflow

| Objective | Command | Outcome |

|---|---|---|

| First scan | skylos . |

Dead code findings with confidence scoring |

| Audit risk and quality | skylos . -a |

Dead code, risky flows, secrets, quality, and SCA findings |

| Higher-confidence dead code | skylos . --trace |

Cross-reference static findings with runtime activity |

| Review only changed lines | skylos . --diff origin/main |

Focus findings on active work instead of legacy debt |

| Gate locally | skylos --gate |

Fail on findings before code leaves your machine |

| Set up CI/CD | skylos cicd init |

Generate a GitHub Actions workflow in 30 seconds |

| Gate in CI | skylos cicd gate --input results.json |

Fail builds when issues cross your threshold |

Optional Workflows

| Objective | Command | Outcome |

|---|---|---|

| Detect Unused Pytest Fixtures | skylos . --pytest-fixtures |

Find unused @pytest.fixture across tests + conftest |

| AI-Powered Analysis | skylos agent scan . --model gpt-4.1 |

Static-first analysis plus judge-all LLM verification for dead code |

| Dead Code Verification | skylos agent verify . --model gpt-4.1 |

Dead-code-only second pass: static findings reviewed by the LLM |

| Security Audit | skylos agent scan . --security |

Deep LLM security review with interactive file selection |

| Auto-Remediate | skylos agent remediate . --auto-pr |

Scan, fix, test, and open a PR — end to end |

| Code Cleanup | skylos agent remediate . --standards |

LLM-guided code quality cleanup against coding standards |

| PR Review | skylos agent scan . --changed |

Analyze only git-changed files |

| PR Review (JSON) | skylos agent scan . --changed --format json -o results.json |

LLM review with code-level fix suggestions |

| Local LLM | skylos agent scan . --base-url http://localhost:11434/v1 --model codellama |

Use Ollama/LM Studio (no API key needed) |

| PR Review (CI) | skylos cicd review -i results.json |

Post inline comments on PRs |

| AI Defense: Discover | skylos discover . |

Map all LLM integrations in your codebase |

| AI Defense: Defend | skylos defend . |

Check LLM integrations for missing guardrails |

| AI Defense: CI Gate | skylos defend . --fail-on critical --min-score 70 |

Block PRs with critical AI defense gaps |

| Whitelist | skylos whitelist 'handle_*' |

Suppress known dynamic patterns |

Technical Debt Hotspots

Use skylos debt <path> to rank structural debt hotspots without collapsing everything into a single urgency number.

scoreis the project-level structural debt score.priorityis the hotspot triage score used for ordering fix candidates.--changedlimits the visible hotspot list to changed files, but keeps the structural debt score anchored to the whole project.

# Full project debt scan

skylos debt .

# Review only changed hotspots without distorting the project score

skylos debt . --changed

# Compare the current project against a saved debt baseline

skylos debt . --baseline

# Save a repo-level debt baseline

skylos debt . --save-baseline

Debt policy files such as skylos-debt.yaml are discovered from the scan target upward, and explicit CLI flags like --top override policy defaults.

Demo

Backup (GitHub): https://github.com/duriantaco/skylos/discussions/82

Key Capabilities

The core product is dead code detection, security scanning, and PR gating. The AI-focused features below are optional layers on top of that baseline workflow.

Security Scanning (SAST)

- Taint Analysis: Traces untrusted input from API endpoints to databases to prevent SQL Injection and XSS.

- Secrets Detection: Hunts down hardcoded API keys (AWS, Stripe, OpenAI) and private credentials before commit.

- Vulnerability Checks: Flags dangerous patterns like

eval(), unsafepickle, and weak cryptography.

AI-Generated Code Guardrails

Skylos can also flag common AI-generated code mistakes. Every finding includes vibe_category and ai_likelihood (high/medium/low) metadata so you can filter them separately if you want.

- Phantom Call Detection: Catches calls to security functions (

sanitize_input,validate_token,check_permission, etc.) that are never defined or imported — AI hallucinates these constantly.hallucinated_reference, high - Phantom Decorator Detection: Catches security decorators (

@require_auth,@rate_limit,@authenticate, etc.) that are never defined or imported.hallucinated_reference, high - Unfinished Generation: Detects functions with only

pass,..., orraise NotImplementedError— AI-generated stubs that silently do nothing in production.incomplete_generation, medium - Undefined Config: Flags

os.getenv("ENABLE_X")referencing feature flags that are never defined anywhere in the project.ghost_config, medium - Stale Mock Detection: Catches

mock.patch("app.email.send_email")wheresend_emailno longer exists — AI renames functions but leaves tests pointing at the old name.stale_reference, medium - Security TODO Scanners: Flags

# TODO: add authplaceholders that AI left behind and nobody finished. - Disabled Security Controls: Detects

verify=False,@csrf_exempt,DEBUG=True, andALLOWED_HOSTS=["*"]. - Credential & Randomness Checks: Catches hardcoded passwords and

random.choice()used for security-sensitive values like tokens and OTPs.

Prompt Injection and Content Scanning

These checks run under --danger and look for prompt injection patterns or obfuscated instructions in repository content.

- Multi-File Prompt Injection Scanner: Scans Python, Markdown, YAML, JSON, TOML, and

.envfiles for hidden instruction payloads — instruction overrides ("ignore previous instructions"), role hijacking ("you are now"), AI-targeted suppression ("do not flag", "skip security"), data exfiltration prompts, and AI-targeting phrases. - Text Canonicalization Engine: NFKC normalization, whitespace folding, and confusable replacement neutralize obfuscation before pattern matching.

- Zero-Width & Invisible Unicode: Detects zero-width spaces, joiners, BOM, and bidi overrides (U+200B–U+202E) that hide payloads from human reviewers.

- Base64 Obfuscation Detection: Automatically decodes base64-encoded strings and re-scans for injection content.

- Homoglyph / Mixed-Script Detection: Flags Cyrillic and Greek characters mixed with Latin text (e.g., Cyrillic 'а' in

password) that bypass visual review. - Location-Aware Severity: Findings in README files, HTML comments, and YAML prompt fields get elevated severity. Test files are automatically skipped.

Advanced: AI Defense for LLM Apps

Static analysis for AI application security that maps every LLM call in your Python codebase and checks for missing guardrails. Python only (TypeScript/Go support planned).

# Discover all LLM integrations

skylos discover .

# Check defenses and get a scored report

skylos defend .

# CI gate: fail on critical gaps, require 70% defense score

skylos defend . --fail-on critical --min-score 70

# JSON output for dashboards and pipelines

skylos defend . --json -o defense-report.json

# Filter by OWASP LLM Top 10 category

skylos defend . --owasp LLM01,LLM04

13 checks across defense and ops:

| Check | Severity | OWASP | What it detects |

|---|---|---|---|

no-dangerous-sink |

Critical | LLM02 | LLM output flowing to eval/exec/subprocess |

untrusted-input-to-prompt |

Critical | LLM01 | Raw user input in prompt with no processing |

tool-scope |

Critical | LLM04 | Agent tools with dangerous system calls |

tool-schema-present |

Critical | LLM04 | Agent tools without typed schemas |

output-validation |

High | LLM02 | LLM output used without structured validation |

prompt-delimiter |

High | LLM01 | User input in prompts without delimiters |

rag-context-isolation |

High | LLM01 | RAG context injected without isolation |

output-pii-filter |

High | LLM06 | No PII filtering on user-facing LLM output |

model-pinned |

Medium | LLM03 | Model version not pinned (floating alias) |

input-length-limit |

Low | LLM01 | No input length check before LLM call |

logging-present |

Medium | Ops | No logging around LLM calls |

cost-controls |

Medium | Ops | No max_tokens set on LLM calls |

rate-limiting |

Medium | Ops | No rate limiting on LLM endpoints |

Defense and ops scores are tracked separately — adding logging won't inflate your security score.

Custom policy via skylos-defend.yaml:

rules:

model-pinned:

severity: critical # Upgrade severity

input-length-limit:

enabled: false # Disable check

gate:

min_score: 70

fail_on: high

Supports OpenAI, Anthropic, Google Gemini, Cohere, Mistral, Ollama, Together AI, Groq, Fireworks, Replicate, LiteLLM, LangChain, LlamaIndex, CrewAI, and AutoGen.

Dead Code Detection & Cleanup

- Find Unused Code: Identifies unreachable functions, orphan classes, and unused imports with confidence scoring.

- Smart Tracing: Distinguishes between truly dead code and dynamic frameworks (Flask/Django routes, Pytest fixtures).

- Safe Pruning: Uses LibCST to safely remove dead code without breaking syntax.

Advanced: Agents, Reviews, and Remediation

- Context-aware audits: Combines static analysis speed with LLM reasoning to validate findings and filter noise.

- Remediation workflow:

skylos agent remediatecan scan, generate fixes, run tests, and optionally open a PR. - Local model support: Supports Ollama and other OpenAI-compatible local endpoints if you want code to stay on your machine.

CI/CD and PR Gating

- 30-Second Workflow Setup:

skylos cicd initgenerates GitHub Actions workflows with sensible defaults. - Diff-Aware Enforcement: Gate only the lines that changed, fail on severity thresholds, and keep legacy debt manageable with baselines.

- PR-Native Feedback: GitHub annotations, inline review comments, and optional dashboard upload keep findings where teams already work.

Safe Cleanup and Workflow Controls

- CST-safe removals: Uses LibCST to remove selected imports or functions (handles multiline imports, aliases, decorators, async etc..)

- Logic Awareness: Deep integration for Python frameworks (Django, Flask, FastAPI) and TypeScript (Tree-sitter) to identify active routes and dependencies.

- Granular Filtering: Skip lines tagged with

# pragma: no skylos,# pragma: no cover, or# noqa

Operational Governance & Runtime

- Coverage Integration: Auto-detects

.skylos-tracefiles to verify dead code with runtime data - Quality Gates: Enforces hard thresholds for complexity, nesting, and security risk via

pyproject.tomlto block non-compliant PRs - Interactive CLI: Manually verify and remove/comment-out findings through an

inquirer-based terminal interface - Security-Audit Mode: Leverages an independent reasoning loop to identify security vulnerabilities

Pytest Hygiene

- Unused Fixture Detection: Finds unused

@pytest.fixturedefinitions intest_*.pyandconftest.py - Cross-file Resolution: Tracks fixtures used across modules, not just within the same file

Multi-Language Support

| Language | Parser | Dead Code | Security | Quality |

|---|---|---|---|---|

| Python | AST | ✅ | ✅ | ✅ |

| TypeScript/TSX | Tree-sitter | ✅ | ✅ | ✅ |

| Java | Tree-sitter | ✅ | ✅ | ✅ |

| Go | Standalone binary | ✅ | - | - |

Languages are auto-detected by file extension. Mixed-language repos work out of the box. No Node.js or JDK required — all parsers are built-in via Tree-sitter.

TypeScript Rules

| Rule | ID | What It Catches |

|---|---|---|

| Dead Code | ||

| Functions | - | Unused functions, arrow functions, and overloads |

| Classes | - | Unused classes, interfaces, enums, and type aliases |

| Imports | - | Unused named, default, and namespace imports |

| Methods | - | Unused methods (lifecycle methods excluded) |

| Security | ||

| eval() | SKY-D201 | eval() usage |

| Dynamic exec | SKY-D202 | exec(), new Function(), setTimeout with string |

| XSS | SKY-D226 | innerHTML, outerHTML, document.write(), dangerouslySetInnerHTML |

| SQL injection | SKY-D211 | Template literal / f-string in SQL query |

| Command injection | SKY-D212 | child_process.exec(), os.system() |

| SSRF | SKY-D216 | fetch()/axios with variable URL |

| Open redirect | SKY-D230 | res.redirect() with variable argument |

| Weak hash | SKY-D207/D208 | MD5 / SHA1 usage |

| Prototype pollution | SKY-D510 | __proto__ access |

| Dynamic require | SKY-D245 | require() with variable argument |

| JWT bypass | SKY-D246 | jwt.decode() without verification |

| CORS wildcard | SKY-D247 | cors({ origin: '*' }) |

| Internal URL | SKY-D248 | Hardcoded localhost/127.0.0.1 URLs |

| Insecure random | SKY-D250 | Math.random() for security-sensitive ops |

| Sensitive logs | SKY-D251 | Passwords/tokens passed to console.log() |

| Insecure cookie | SKY-D252 | Missing httpOnly/secure flags |

| Timing attack | SKY-D253 | ===/== comparison of secrets |

| Storage tokens | SKY-D270 | Sensitive data in localStorage/sessionStorage |

| Error disclosure | SKY-D271 | error.stack/.sql sent in HTTP response |

| Secrets | SKY-S101 | Hardcoded API keys + high-entropy strings |

| Quality | ||

| Complexity | SKY-Q301 | Cyclomatic complexity exceeds threshold |

| Nesting depth | SKY-Q302 | Too many nested levels |

| Function length | SKY-C304 | Function exceeds line limit |

| Too many params | SKY-C303 | Function has too many parameters |

| Duplicate condition | SKY-Q305 | Identical condition in if-else-if chain |

| Await in loop | SKY-Q402 | await inside for/while loop |

| Unreachable code | SKY-UC002 | Code after return/throw/break/continue |

Framework-aware: Next.js convention exports (page.tsx, layout.tsx, route.ts, middleware.ts), config exports (getServerSideProps, generateMetadata, revalidate), React patterns (memo, forwardRef), and exported custom hooks (use*) are automatically excluded from dead code reports.

TypeScript dead code detection tracks: callbacks, type annotations, generics, decorators, inheritance (extends), object shorthand, spread, re-exports, and typeof references. Benchmarked at 95% recall with 0 false positives on alive code.

Installation

Basic Installation

## from pypi

pip install skylos

## with LLM-powered features (agent verify, agent remediate, etc.)

pip install skylos[llm]

## with Rust-accelerated analysis (up to 63x faster)

pip install skylos[fast]

## both

pip install skylos[llm,fast]

## or from source

git clone https://github.com/duriantaco/skylos.git

cd skylos

pip install .

skylos[fast]installs an optional Rust backend that accelerates clone detection (63x), file discovery (5x), coupling analysis, and cycle detection. Same results, just faster. Pure Python works fine without it — the Rust module is auto-detected at runtime.

skylos[llm]installslitellmfor LLM-powered features (skylos agent verify,skylos agent remediate,--llm). Core static analysis works without it.

🎯 What's Next?

After installation, we recommend:

Set up CI/CD (30 seconds):

skylos cicd init git add .github/workflows/skylos.yml && git pushThis will automatically scan every PR for dead code and security issues.

Run your first scan:

skylos . # Dead code only skylos . --danger --secrets # Include security checksKeep scans focused on active work:

skylos . --diff origin/mainTry advanced workflows only if you need them:

skylos agent review . --model gpt-4.1 skylos defend .

See all commands in the Quick Start table

Skylos vs. Vulture Benchmark

We benchmarked Skylos against Vulture on 9 of the most popular Python repositories on GitHub — 350k+ combined stars, covering HTTP clients, web frameworks, CLI tools, data validation, terminal UIs, and progress bars. Every single finding was manually verified against the source code. No automated labelling, no cherry-picking.

Why These 9 Repos?

We deliberately chose projects that stress-test dead code detection in different ways:

| Repository | Stars | What It Tests |

|---|---|---|

| psf/requests | 53k | __init__.py re-exports, Sphinx conf, pytest classes |

| pallets/click | 17k | IO protocol methods (io.RawIOBase subclasses), nonlocal closures |

| encode/starlette | 10k | ASGI interface params, polymorphic dispatch, public API methods |

| Textualize/rich | 51k | __rich_console__ protocol, sentinel vars via f_locals, metaclasses |

| encode/httpx | 14k | Transport/auth protocol methods, zero dead code (pure FP test) |

| pallets/flask | 69k | Jinja2 template globals, Werkzeug protocol methods, extension hooks |

| pydantic/pydantic | 23k | Mypy plugin hooks, hypothesis @resolves, __getattr__ config |

| fastapi/fastapi | 82k | 100+ OpenAPI spec model fields, Starlette base class overrides |

| tqdm/tqdm | 30k | Keras/Dask callbacks, Rich column rendering, pandas monkey-patching |

No repo was excluded for having unfavorable results. We include repos where Vulture beats Skylos (click, starlette, tqdm).

Results

| Repository | Dead Items | Skylos TP | Skylos FP | Vulture TP | Vulture FP |

|---|---|---|---|---|---|

| psf/requests | 6 | 6 | 35 | 6 | 58 |

| pallets/click | 7 | 7 | 8 | 6 | 6 |

| encode/starlette | 1 | 1 | 4 | 1 | 2 |

| Textualize/rich | 13 | 13 | 14 | 10 | 8 |

| encode/httpx | 0 | 0 | 6 | 0 | 59 |

| pallets/flask | 7 | 7 | 12 | 6 | 260 |

| pydantic/pydantic | 11 | 11 | 93 | 10 | 112 |

| fastapi/fastapi | 6 | 6 | 30 | 4 | 102 |

| tqdm/tqdm | 1 | 0 | 18 | 1 | 37 |

| Total | 52 | 51 | 220 | 44 | 644 |

| Metric | Skylos | Vulture |

|---|---|---|

| Recall | 98.1% (51/52) | 84.6% (44/52) |

| False Positives | 220 | 644 |

| Dead items found | 51 | 44 |

Skylos finds 7 more dead items than Vulture with 3x fewer false positives.

Why Skylos Produces Fewer False Positives

Vulture uses flat name matching — if the bare name X appears anywhere as a string or identifier, all definitions named X are considered used. This works well for simple cases but drowns in noise on framework-heavy codebases:

- Flask (260 Vulture FP): Vulture flags every Jinja2 template global, Werkzeug protocol method, and Flask extension hook. Skylos recognizes Flask/Werkzeug patterns.

- Pydantic (112 Vulture FP): Vulture flags all config class annotations,

TYPE_CHECKINGimports, and mypy plugin hooks. Skylos understands Pydantic model fields and__getattr__dynamic access. - FastAPI (102 Vulture FP): Vulture flags 100+ OpenAPI spec model fields (Pydantic

BaseModelattributes likemaxLength,exclusiveMinimum). Skylos recognizes these as schema definitions. - httpx (59 Vulture FP): Vulture flags every transport and auth protocol method. Skylos suppresses interface implementations.

Where Skylos Still Loses (Honestly)

- click (8 vs 6 FP): IO protocol methods (

readable,readinto) onio.RawIOBasesubclasses — called by Python's IO stack, not by direct call sites. - starlette (4 vs 2 FP): Instance method calls across files (

obj.method()) not resolved back to class definitions. - tqdm (18 vs 37 FP, 0 vs 1 TP): Skylos misses 1 dead function in

__init__.pybecause it suppresses__init__.pydefinitions as potential re-exports.

Reproduce any benchmark:

cd real_life_examples/{repo} && python3 ../benchmark_{repo}.pyFull methodology and per-repo breakdowns in the skylos-demo repository.

Skylos vs. Knip (TypeScript)

We also benchmarked Skylos against Knip on a real-world TypeScript library:

| unjs/consola (7k stars, 21 files, ~2,050 LOC) | |

|---|---|

| Dead items | 4 (entire orphaned src/utils/format.ts module) |

| Metric | Skylos | Knip |

|---|---|---|

| Recall | 100% (4/4) | 100% (4/4) |

| Precision | 36.4% | 7.5% |

| F1 Score | 53.3% | 14.0% |

| Speed | 6.83s | 11.08s |

Both tools find all dead code. Skylos has ~5x better precision — Knip incorrectly flags package entry points as dead files (its package.json exports point to dist/ not src/) and reports public API re-exports as unused.

Reproduce:

cd real_life_examples/consola && python3 ../benchmark_consola.py

Projects Using Skylos

If you use Skylos in a public repository, open an issue and add it here. This list is based on self-submissions, so it will stay small until more teams opt in publicly.

| Project | Description |

|---|---|

| Skylos | Uses Skylos on itself for dead code, security, and CI gating |

| Your project here | Add yours |

How It Works

Skylos builds a reference graph of your entire codebase - who defines what, who calls what, across all files.

Parse all files -> Build definition map -> Track references -> Find orphans (zero refs = dead)

High Precision & Confidence Scoring

Static analysis often struggles with Python's dynamic nature (e.g., getattr, pytest.fixture). Skylos minimizes false positives through:

- Confidence Scoring: Grades findings (High/Medium/Low) so you only see what matters.

- Hybrid Verification: Uses LLM reasoning to double-check static findings before reporting.

- Runtime Tracing: Optional

--tracemode validates "dead" code against actual runtime execution.

| Confidence | Meaning | Action |

|---|---|---|

| 100 | Definitely unused | Safe to delete |

| 60 | Probably unused (default threshold) | Review first |

| 40 | Maybe unused (framework helpers) | Likely false positive |

| 20 | Possibly unused (decorated/routes) | Almost certainly used |

| 0 | Show everything | Debug mode |

skylos . -c 60 # Default: high-confidence findings only

skylos . -c 30 # Include framework helpers

skylos . -c 0 # Everything

Framework Detection

When Skylos sees Flask, Django, FastAPI, Next.js, or React imports, it adjusts scoring automatically:

| Pattern | Handling |

|---|---|

@app.route, @router.get |

Entry point → marked as used |

app.add_url_rule(...), app.add_api_route(...), app.add_route(...), app.register_listener(...), app.register_middleware(...) |

Imperative route or lifecycle registration → marked as used |

@pytest.fixture |

Treated as a pytest entrypoint, but can be reported as unused if never referenced |

@pytest.hookimpl, @hookimpl |

Plugin hook implementation → marked as used |

@celery.task |

Entry point → marked as used |

getattr(mod, "func") |

Tracks dynamic reference |

getattr(mod, f"handle_{x}") |

Tracks pattern handle_* |

Next.js page.tsx, layout.tsx, route.ts |

Default/named exports → marked as used |

Next.js getServerSideProps, generateMetadata |

Config exports → marked as used |

React.memo(), forwardRef() |

Wrapped components → marked as used |

Exported use* hooks |

Custom hooks → marked as used |

Test File Exclusion

Tests call code in weird ways that look like dead code. By default, Skylos excludes:

| Detected By | Examples |

|---|---|

| Path | /tests/, /test/, *_test.py |

| Imports | pytest, unittest, mock |

| Decorators | @pytest.fixture, @patch |

# These are auto-excluded (confidence set to 0)

/project/tests/test_user.py

/project/test/helper.py

# These are analyzed normally

/project/user.py

/project/test_data.py # Doesn't end with _test.py

Want test files included? Use --include-folder tests.

Philosophy

When ambiguous, we'd rather miss dead code than flag live code as dead.

Framework endpoints are called externally (HTTP, signals). Name resolution handles aliases. When things get unclear, we err on the side of caution.

Unused Pytest Fixtures

Skylos can detect pytest fixtures that are defined but never used.

skylos . --pytest-fixtures

This includes fixtures inside conftest.py, since conftest.py is the standard place to store shared test fixtures.

Advanced Workflows

These commands are optional. Use them when you want LLM-assisted review, remediation, or AI defense on top of the core scanner and CI gate.

Skylos uses a hybrid architecture that combines static analysis with LLM reasoning:

Why Hybrid?

| Approach | Recall | Precision | Logic Bugs |

|---|---|---|---|

| Static only | Low | High | ❌ |

| LLM only | High | Medium | ✅ |

| Hybrid | Highest | High | ✅ |

Research shows LLMs find vulnerabilities that static analysis misses, while static analysis validates LLM suggestions. However, LLMs are prone to false positives in dead code if they are asked to invent findings from raw source alone.

For dead code, Skylos now uses a stricter contract:

- static analysis generates the candidate list

- repo facts and graph evidence are gathered around each candidate

skylos agent scanandskylos agent verifysend nearly everyreferences == 0candidate through the LLM injudge_allmode- deterministic suppressors still exist, but in

judge_allmode they are attached as evidence instead of silently deciding the outcome

Use --verification-mode production if you want the cheaper deterministic-first path instead of the default judge-all review.

Agent Commands

| Command | Description |

|---|---|

skylos agent scan PATH |

Full hybrid pipeline with fix suggestions and judge-all dead-code verification |

skylos agent scan PATH --no-fixes |

Same pipeline, skip fix suggestions (faster) |

skylos agent scan PATH --changed |

Analyze only git-changed files |

skylos agent scan PATH --security |

Security-only LLM audit with interactive file selection |

skylos agent verify PATH |

Dead-code-only verification pass over static findings |

skylos agent verify PATH --fix --pr |

Verify, generate removal patches, create branch and commit |

skylos agent remediate PATH |

End-to-end: scan, fix, test, and create PR |

skylos agent remediate PATH --standards |

LLM-guided cleanup with built-in standards (or --standards custom.md) |

skylos agent triage suggest |

Show auto-triage candidates from learned patterns |

skylos agent triage dismiss ID |

Dismiss a finding from the queue |

Provider Configuration

Skylos supports cloud and local LLM providers:

# Cloud - OpenAI (auto-detected from model name)

skylos agent scan . --model gpt-4.1

# Cloud - Anthropic (auto-detected from model name)

skylos agent scan . --model claude-sonnet-4-20250514

# Local - Ollama

skylos agent scan . \

--provider openai \

--base-url http://localhost:11434/v1 \

--model qwen2.5-coder:7b

# Cheaper dead-code verification path

skylos agent verify . \

--model claude-sonnet-4-20250514 \

--verification-mode production

Note: You can use the --model flag to specify the model that you want. We support Gemini, Groq, Anthropic, ChatGPT and Mistral.

Keys and configuration

Skylos can use API keys from (1) skylos key, or (2) environment variables.

Recommended (interactive)

skylos key

# opens a menu:

# - list keys

# - add key (openai / anthropic / google / groq / mistral / ...)

# - remove key

Environment Variables

Set defaults to avoid repeating flags:

# API Keys

export OPENAI_API_KEY="sk-..."

export ANTHROPIC_API_KEY="sk-ant-..."

# Default to local Ollama

export SKYLOS_LLM_PROVIDER=openai

export SKYLOS_LLM_BASE_URL=http://localhost:11434/v1

LLM PR Review

skylos agent scan --changed analyzes git-changed files, runs static analysis, then uses the LLM to generate code-level fix suggestions for every finding (security, quality, and dead code).

# Run LLM review and output JSON

skylos agent scan . --changed --model claude-sonnet-4-20250514 --format json -o llm-results.json

# Use with cicd review to post inline comments on PRs

skylos cicd review --input results.json --llm-input llm-results.json

The hybrid pipeline runs in stages:

- Static analysis — finds security, quality, and dead code issues

- Dead-code verification — the LLM judges static dead-code candidates using graph evidence, repo facts, and surrounding context

- Additional LLM analysis — scans for logic/security issues static analysis may miss

- Code fix generation — for each reported finding, generates the problematic code snippet and a corrected version

Each PR comment shows the exact vulnerable lines and a drop-in replacement fix.

What LLM Analysis Detects

| Category | Examples |

|---|---|

| Hallucinations | Calls to functions that don't exist |

| Logic bugs | Off-by-one, incorrect conditions, missing edge cases |

| Business logic | Auth bypasses, broken access control |

| Context issues | Problems requiring understanding of intent |

Local LLM Setup (Ollama)

# Install Ollama

curl -fsSL https://ollama.com/install.sh | sh

# Pull a code model

ollama pull qwen2.5-coder:7b

# Use with Skylos

skylos agent scan ./src \

--provider openai \

--base-url http://localhost:11434/v1 \

--model qwen2.5-coder:7b

Remediation Agent

The remediation agent automates the full fix lifecycle. It scans your project, prioritizes findings, generates fixes via the LLM, validates each fix by running your test suite, and optionally opens a PR.

# Preview what would be fixed (safe, no changes)

skylos agent remediate . --dry-run

# Fix up to 5 critical/high issues, validate with tests

skylos agent remediate . --max-fixes 5 --severity high

# Full auto: fix, test, create PR

skylos agent remediate . --auto-pr --model gpt-4.1

# Use a custom test command

skylos agent remediate . --test-cmd "pytest test/ -x"

Safety guardrails:

- Dry run by default — use

--dry-runto preview without touching files - Fixes that break tests are automatically reverted

- Low-confidence fixes are skipped

- After applying a fix, Skylos re-scans to confirm the finding is actually gone

--auto-pralways works on a new branch, never touches main--max-fixesprevents runaway changes (default 10)

Recommended Models

| Model | Provider | Use Case |

|---|---|---|

gpt-4.1 |

OpenAI | Best accuracy |

claude-sonnet-4-20250514 |

Anthropic | Best reasoning |

qwen2.5-coder:7b |

Ollama | Fast local analysis |

codellama:13b |

Ollama | Better local accuracy |

CI/CD

Run Skylos in your CI pipeline with quality gates, GitHub annotations, and PR review comments.

Quick Start (30 seconds)

# Auto-generate a GitHub Actions workflow

skylos cicd init

# Commit and activate

git add .github/workflows/skylos.yml && git push

That's it! Your next PR will have:

- Dead code detection

- Security scanning (SQLi, SSRF, secrets)

- Quality checks

- Inline PR comments with clickable file:line links

- Quality gate that fails builds on critical issues

Want AI-powered code fixes on PRs?

skylos cicd init --llm --model claude-sonnet-4-20250514

This adds an LLM step that generates code-level fix suggestions — showing the vulnerable code and the corrected version inline on your PR.

Optional GitHub Secrets

For the default skylos cicd init workflow, you do not need any Skylos-specific secrets. Add these only if you enable the matching feature in GitHub Actions (Settings > Secrets and variables > Actions):

| Secret | When needed | Description |

|---|---|---|

ANTHROPIC_API_KEY |

If using Claude models | Your Anthropic API key |

OPENAI_API_KEY |

If using GPT models | Your OpenAI API key |

SKYLOS_API_KEY |

For Skylos Cloud features | Get from skylos.dev |

SKYLOS_TOKEN |

If using --upload |

Upload token from skylos.dev/dashboard/settings |

GH_TOKEN is automatically provided by GitHub Actions — no setup needed for PR comments.

Command Reference

Core Analysis

| Command | Description |

|---|---|

skylos <path> |

Dead code, security, and quality analysis |

skylos debt <path> |

Technical debt hotspot analysis with baseline-aware prioritization |

skylos discover <path> |

Map LLM/AI integrations in your codebase |

skylos defend <path> |

Check LLM integrations for missing defenses |

skylos city <path> |

Visualize codebase as a Code City topology |

AI Agent

| Command | Description |

|---|---|

skylos agent scan <path> |

Hybrid static + LLM analysis |

skylos agent verify <path> |

LLM-verify dead code (100% accuracy) |

skylos agent remediate <path> |

Auto-fix issues and create PR |

skylos agent watch <path> |

Continuous repo monitoring with optional triage pattern learning |

skylos agent pre-commit <path> |

Analyze staged files (git hook) |

skylos agent triage |

Manage finding triage (dismiss/snooze) |

CI/CD

| Command | Description |

|---|---|

skylos cicd init |

Generate GitHub Actions workflow |

skylos cicd gate |

Quality gate (CI exit code) |

skylos cicd annotate |

Emit GitHub Actions annotations |

skylos cicd review |

Post inline PR review comments |

Account

| Command | Description |

|---|---|

skylos login |

Connect to Skylos Cloud |

skylos whoami |

Show connected account info |

skylos key |

Manage API keys |

skylos credits |

Check credit balance |

Utility

| Command | Description |

|---|---|

skylos init |

Initialize config in pyproject.toml |

skylos baseline <path> |

Save current findings as baseline |

skylos whitelist <pattern> |

Manage whitelisted symbols |

skylos badge |

Get badge markdown for README |

skylos rules |

Install/manage community rule packs |

skylos doctor |

Check installation health |

skylos clean |

Remove cache and state files |

skylos tour |

Guided tour of capabilities |

skylos commands |

List all commands (flat) |

Run skylos <command> --help for detailed usage of any command.

Commands (Detailed)

skylos cicd init

Generates a ready-to-use GitHub Actions workflow.

skylos cicd init

skylos cicd init --triggers pull_request schedule

skylos cicd init --analysis security quality

skylos cicd init --python-version 3.11

skylos cicd init --llm --model gpt-4.1

skylos cicd init --upload # include --upload step + SKYLOS_TOKEN env

skylos cicd init --upload --llm --model claude-sonnet-4-20250514 # upload + LLM

skylos cicd init --defend # add AI Defense check step

skylos cicd init --defend --upload # defend + upload results to cloud

skylos cicd init --no-baseline

skylos cicd init -o .github/workflows/security.yml

skylos cicd gate

Checks findings against your quality gate. Exits 0 (pass) or 1 (fail). Uses the same check_gate() as skylos . --gate.

skylos . --danger --quality --secrets --json > results.json 2>/dev/null

skylos cicd gate --input results.json

skylos cicd gate --input results.json --strict

skylos cicd gate --input results.json --summary

You can also use the main CLI directly:

skylos . --gate --summary

Configure thresholds in pyproject.toml:

[tool.skylos.gate]

fail_on_critical = true

max_critical = 0

max_high = 5

max_security = 10

max_quality = 10

skylos cicd annotate

Emits GitHub Actions annotations (::error, ::warning, ::notice). Uses the same _emit_github_annotations() as skylos . --github, with sorting and a 50-annotation cap.

skylos cicd annotate --input results.json

skylos cicd annotate --input results.json --severity high

skylos cicd annotate --input results.json --max 30

skylos . --github

skylos cicd review

Posts inline PR review comments and a summary via gh CLI. Only comments on lines changed in the PR.

skylos cicd review --input results.json

skylos cicd review --input results.json --pr 20

skylos cicd review --input results.json --summary-only

skylos cicd review --input results.json --max-comments 10

skylos cicd review --input results.json --diff-base origin/develop

# With LLM-generated code fixes (vulnerable code → fixed code)

skylos cicd review --input results.json --llm-input llm-results.json

When --llm-input is provided, each inline comment shows the problematic code and the corrected version:

🔴 CRITICAL SKY-D211

Possible SQL injection: tainted or string-built query.

Why: User input is concatenated directly into the SQL query string.

Vulnerable code:

results = conn.execute(f"SELECT * FROM users WHERE name LIKE '%{q}%'").fetchall()

Fixed code:

results = conn.execute("SELECT * FROM users WHERE name LIKE ?", (f"%{q}%",)).fetchall()

In GitHub Actions, PR number and repo are auto-detected. Requires GH_TOKEN.

How It Fits Together

The gate and annotation logic lives in the core Skylos modules (gatekeeper.py and cli.py). The cicd commands are convenience wrappers that read from a JSON file and call the same functions:

skylos cicd command |

Calls |

|---|---|

gate |

gatekeeper.run_gate_interaction(summary=True) |

annotate |

cli._emit_github_annotations(max_annotations=50) |

review |

New — cicd/review.py (PR comments via gh api) |

init |

New — cicd/workflow.py (YAML generation) |

Tips

- Run analysis once, consume many times — use

--json > results.json 2>/dev/nullthen pass--input results.jsonto each subcommand. - Baseline — run

skylos baseline .to snapshot existing findings, then--baselinein CI to only flag new issues. - Local testing — all commands work locally.

gateandannotateprint to stdout.reviewrequiresghCLI.

MCP Server

mcp-name: io.github.duriantaco/skylos

Skylos exposes its analysis capabilities as an MCP (Model Context Protocol) server, allowing AI assistants like Claude Desktop to scan your codebase directly.

Setup

pip install skylos

Add to your Claude Desktop config (~/.config/claude/claude_desktop_config.json on Linux, ~/Library/Application Support/Claude/claude_desktop_config.json on macOS):

{

"mcpServers": {

"skylos": {

"command": "python",

"args": ["-m", "skylos_mcp.server"]

}

}

}

Available Tools

| Tool | Description |

|---|---|

analyze |

Dead code detection (unused functions, imports, classes, variables) |

security_scan |

Security vulnerability scan (--danger equivalent) |

quality_check |

Code quality and complexity analysis (--quality equivalent) |

secrets_scan |

Hardcoded secrets detection (--secrets equivalent) |

remediate |

End-to-end: scan, generate LLM fixes, validate with tests |

generate_fix |

Generate removal patches for confirmed dead code |

verify_dead_code |

LLM-verify dead code findings (reduce false positives) |

learn_triage |

Record a triage decision for pattern learning |

get_triage_suggestions |

Get auto-triage candidates from learned patterns |

Available Resources

| Resource | URI | Description |

|---|---|---|

| Latest result | skylos://results/latest |

Most recent analysis run |

| Result by ID | skylos://results/{run_id} |

Specific analysis run |

| List results | skylos://results |

All stored analysis runs |

Usage in Claude Desktop

Once configured, you can ask Claude:

- "Scan my project for security issues" → calls

security_scan - "Check code quality in src/" → calls

quality_check - "Find hardcoded secrets" → calls

secrets_scan - "Fix security issues in my project" → calls

remediate

Baseline Tracking

Baseline tracking lets you snapshot existing findings so CI only flags new issues introduced by a PR.

# Create baseline from current state

skylos baseline .

# Run analysis, only show findings NOT in the baseline

skylos . --danger --secrets --quality --baseline

# In CI: compare against baseline

skylos . --danger --baseline --gate

The baseline is stored in .skylos/baseline.json. Commit this file to your repo so CI can use it.

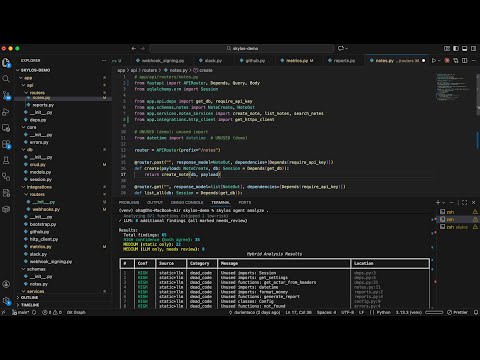

VS Code Extension

Real-time AI-powered code analysis directly in your editor.

Installation

- Search "Skylos" in VS Code marketplace or run:

ext install oha.skylos-vscode-extension

- Make sure the CLI is installed:

pip install skylos

- (Optional) Add your API key for AI features in VS Code Settings →

skylos.openaiApiKeyorskylos.anthropicApiKey

How It Works

| Layer | Trigger | What It Does |

|---|---|---|

| Static Analysis | On save | Runs Skylos CLI for dead code, secrets, dangerous patterns |

| AI Watcher | On idle (2s) | Sends changed functions to GPT-4/Claude for bug detection |

Features

- Real-time Analysis: Detects bugs as you type — no save required

- CodeLens Buttons: "Fix with AI" and "Dismiss" appear inline on error lines

- Streaming Fixes: See fix progress in real-time

- Smart Caching: Only re-analyzes functions that actually changed

- Multi-Provider: Choose between OpenAI and Anthropic

New Features

- MCP Server Support: Connect Skylos directly to Claude Desktop or any MCP client to chat with your codebase.

- CI/CD Agents: Autonomous bots that scan, fix, test, and open PRs automatically in your pipeline.

- Hybrid Verification: Eliminates false positives by verifying static findings with LLM reasoning.

Extension Settings

| Setting | Default | Description |

|---|---|---|

skylos.aiProvider |

"openai" |

"openai" or "anthropic" |

skylos.openaiApiKey |

"" |

Your OpenAI API key |

skylos.anthropicApiKey |

"" |

Your Anthropic API key |

skylos.idleMs |

2000 |

Wait time before AI analysis (ms) |

skylos.runOnSave |

true |

Run Skylos CLI on save |

skylos.enableSecrets |

true |

Scan for hardcoded secrets |

skylos.enableDanger |

true |

Flag dangerous patterns |

Usage

| Action | Result |

|---|---|

| Save a Python file | Skylos CLI scans the workspace |

| Type and pause | AI analyzes changed functions |

| Click "Fix with AI" | Generates fix with diff preview |

Cmd+Shift+P -> "Skylos: Scan Workspace" |

Full project scan |

Privacy

- Static analysis runs 100% locally

- AI features send only changed function code to your configured provider

- We DO NOT collect any telemetry or data

Install from VS Code Marketplace

Gating

Block bad code before it merges. Configure thresholds, run locally, then automate in CI.

Initialize Configuration

skylos init

Creates [tool.skylos] in your pyproject.toml:

[tool.skylos]

# Quality thresholds

complexity = 10

nesting = 3

max_args = 5

max_lines = 50

duplicate_strings = 3

ignore = []

model = "gpt-4.1"

# Language overrides (optional)

[tool.skylos.languages.typescript]

complexity = 15

nesting = 4

# Gate policy

[tool.skylos.gate]

fail_on_critical = true

max_security = 0 # Zero tolerance

max_quality = 10 # Allow up to 10 warnings

strict = false

Free Tier

Run scans locally with exit codes:

skylos . --danger --gate

- Exit code

0= passed - Exit code

1= failed

Use in any CI system:

name: Skylos Quality Gate

on:

pull_request:

branches: [main, master]

jobs:

skylos:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- uses: actions/setup-python@v5

with:

python-version: '3.11'

- run: pip install skylos

- run: skylos . --danger --gate

Limitation: Anyone with repo access can delete or modify this workflow.

Pro Tier

Server-controlled GitHub checks that cannot be bypassed by developers.

Quick Setup

pip install skylos

skylos sync setup

How It Works

- Developer opens PR → GitHub App creates required check ("Queued")

- Scan runs → Results upload to Skylos server

- Server updates check → Pass ✅ or Fail ❌

- Developer cannot merge until check passes

Free vs Pro

| Feature | Free | Pro |

|---|---|---|

| Local scans | ✅ | ✅ |

--gate exit codes |

✅ | ✅ |

| GitHub Actions | ✅ (DIY) | ✅ (auto) |

| Developer can bypass? | Yes | No |

| Server-controlled check | ❌ | ✅ |

| Slack/Discord alerts | ❌ | ✅ |

GitHub App Setup

- Dashboard -> Settings -> Install GitHub App

- Select your repository

- In GitHub repo settings:

- Settings -> Branches -> Add rule ->

main - Require status checks

- Select "Skylos Quality Gate"

- Settings -> Branches -> Add rule ->

Add Token to GitHub

Repo Settings → Secrets → Actions → New secret

- Name:

SKYLOS_TOKEN - Value: (from Dashboard → Settings)

Integration and Ecosystem

Skylos is designed to live everywhere your code does—from your IDE to your deployment pipeline.

1. Integration Environments

| Environment | Tool | Use Case |

|---|---|---|

| VS Code | Skylos Extension | Real-time guarding. Highlights code rot and risks on-save. |

| Web UI | skylos run |

Launch a local dashboard at localhost:5090 for visual auditing. |

| CI/CD | GitHub Actions / Pre-commit | Automated gates that audit every PR before it merges. |

| Quality Gate | skylos --gate |

Block deployment if security or complexity thresholds are exceeded. |

2. Output Formats

Control how you consume the watchdog's findings.

| Flag | Format | Primary Use |

|---|---|---|

--tui |

TUI Dashboard | Launch the interactive TUI dashboard. |

--tree |

Logic Tree | Visualizes code hierarchy and structural dependencies. |

--json |

Machine Raw | Piping results to jq, custom scripts, or log aggregators. |

--sarif |

SARIF | GitHub Code Scanning, IDE integration. Includes CWE taxonomy and per-rule CWE relationships |

--llm |

LLM Report | Structured findings with code context for Claude Code, Codex, or any AI agent. |

-o, --output |

File Export | Save the audit report directly to a file instead of stdout. |

Auditing and Precision

By default, Skylos finds dead code. Enable additional scans with flags.

Dead Code (default)

skylos .

Reading the output:

| Column | Meaning |

|---|---|

| Name | The unused function, import, class, or variable |

| Location | file:line where it's defined |

| Conf | Confidence score (0–100%) — how certain Skylos is that this code is truly unused. Higher = safer to remove |

Security (--danger)

Tracks tainted data from user input to dangerous sinks.

skylos . --danger

Reading the output:

| Column | Meaning |

|---|---|

| Issue | The vulnerability type (e.g. SQL injection, eval) with its rule ID |

| Severity | Risk level: Critical > High > Medium > Low |

| Message | What was found and why it's dangerous |

| Location | file:line where the issue occurs |

| Symbol | The function or scope containing the vulnerable code |

| Rule | ID | What It Catches |

|---|---|---|

| Injection | ||

| SQL injection | SKY-D211 | cur.execute(f"SELECT * FROM users WHERE name='{name}'") |

| SQL raw query | SKY-D217 | sqlalchemy.text(), pandas.read_sql(), Django .raw() with tainted input |

| Command injection | SKY-D212 | os.system(), subprocess(shell=True) with tainted input |

| SSRF | SKY-D216 | requests.get(request.args["url"]) |

| Path traversal | SKY-D215 | open(request.args.get("p")) |

| XSS (mark_safe) | SKY-D226 | Untrusted content passed to mark_safe() / Markup() |

| XSS (template) | SKY-D227 | Inline template with autoescape disabled |

| XSS (HTML build) | SKY-D228 | HTML built from unescaped user input |

| Open redirect | SKY-D230 | User-controlled URL passed to redirect() |

| Dangerous Calls | ||

| eval() | SKY-D201 | Dynamic code execution via eval() |

| exec() | SKY-D202 | Dynamic code execution via exec() |

| os.system() | SKY-D203 | OS command execution |

| pickle.load | SKY-D204 | Unsafe deserialization |

| yaml.load | SKY-D206 | yaml.load() without SafeLoader |

| Weak hash (MD5) | SKY-D207 | hashlib.md5() |

| Weak hash (SHA1) | SKY-D208 | hashlib.sha1() |

| shell=True | SKY-D209 | subprocess with shell=True |

| TLS disabled | SKY-D210 | requests with verify=False |

| Unsafe deserialization | SKY-D233 | marshal.loads, shelve.open, jsonpickle.decode, dill |

| Web Security | ||

| CORS misconfiguration | SKY-D231 | Wildcard origins, credential leaks, overly permissive headers |

| JWT vulnerabilities | SKY-D232 | algorithms=['none'], missing verification, weak secrets |

| Mass assignment | SKY-D234 | Django Meta.fields = '__all__' exposes all model fields |

| Supply Chain | ||

| Hallucinated dependency | SKY-D222 | Imported package doesn't exist on PyPI (CRITICAL) |

| Undeclared dependency | SKY-D223 | Import not declared in requirements.txt / pyproject.toml |

| MCP Security | ||

| Tool description poisoning | SKY-D240 | Prompt injection in MCP tool metadata |

| Unauthenticated transport | SKY-D241 | SSE/HTTP MCP server without auth middleware |

| Permissive resource URI | SKY-D242 | Path traversal via MCP resource URI template |

| Network-exposed MCP | SKY-D243 | MCP server bound to 0.0.0.0 without auth |

| Hardcoded secrets in MCP | SKY-D244 | Secrets in MCP tool parameter defaults |

Full list in DANGEROUS_CODE.md.

Secrets (--secrets)

Detects hardcoded credentials.

skylos . --secrets

Reading the output:

| Column | Meaning |

|---|---|

| Provider | The service the secret belongs to (e.g. AWS, Stripe, GitHub) or "generic" for high-entropy strings |

| Message | Description of the detected credential |

| Preview | A masked snippet of the secret (e.g. sk_live_****) |

| Location | file:line where the secret was found |

Providers: GitHub, GitLab, AWS, Stripe, Slack, Google, SendGrid, Twilio, private keys.

Dependency Vulnerabilities (--sca)

Scans your installed dependencies against the OSV.dev vulnerability database.

skylos . --sca

Reading the output:

| Column | Meaning |

|---|---|

| Package | The dependency and its installed version (e.g. [email protected]) |

| Vuln ID | The CVE or advisory identifier |

| Severity | Risk level: Critical > High > Medium > Low |

| Reachability | Whether your code actually calls the vulnerable code path: Reachable (confirmed risk), Unreachable (safe), or Inconclusive |

| Fix | The patched version to upgrade to |

Quality (--quality)

Flags functions that are hard to maintain.

skylos . --quality

Reading the output:

| Column | Meaning |

|---|---|

| Type | The category: Complexity, Nesting, Structure, Quality (duplicate literals, coupling, cohesion) |

| Name | The function, class, or string literal that triggered the finding |

| Detail | The measured value and the threshold — e.g. Complexity: 14 (max 10) means 14 branches were found but the limit is 10; repeated 5× (max 3) means a string literal appears 5 times but should appear at most 3 |

| Location | file:line where the finding starts |

| Rule | ID | What It Catches |

|---|---|---|

| Complexity | ||

| Cyclomatic complexity | SKY-Q301 | Too many branches/loops (default: >10) |

| Deep nesting | SKY-Q302 | Too many nested levels (default: >3) |

| Async Blocking | SKY-Q401 | Detects blocking calls inside async functions that kill server throughput |

| God class | SKY-Q501 | Class has too many methods/attributes |

| Coupling (CBO) | SKY-Q701 | High inter-class coupling (7 dependency types: inheritance, type hints, instantiation, attribute access, imports, decorators, protocol/ABC) |

| Cohesion (LCOM) | SKY-Q702 | Low class cohesion — disconnected method groups that should be split (LCOM1/4/5 metrics with Union-Find) |

| Architecture | ||

| Distance from Main Sequence | SKY-Q802 | Module far from ideal balance of abstractness vs instability |

| Zone warning | SKY-Q803 | Module in Zone of Pain (rigid) or Zone of Uselessness (throwaway) |

| DIP violation | SKY-Q804 | Stable module depends on unstable module (Dependency Inversion Principle) |

| Structure | ||

| Too many arguments | SKY-C303 | Functions with >5 args |

| Function too long | SKY-C304 | Functions >50 lines |

| Logic | ||

| Mutable default | SKY-L001 | def foo(x=[]) - causes state leaks |

| Bare except | SKY-L002 | except: swallows SystemExit |

| Dangerous comparison | SKY-L003 | x == None instead of x is None |

| Anti-pattern try block | SKY-L004 | Nested try, or try wrapping too much logic |

| Unused exception var | SKY-L005 | except Error as e: where e is never referenced |

| Inconsistent return | SKY-L006 | Function returns both values and None |

| Duplicate string literal | SKY-L027 | Same string repeated 3+ times (see suppressing duplicate strings) |

| Too many returns | SKY-L028 | Function has 5+ return statements |

| Boolean trap | SKY-L029 | Boolean positional parameter harms call-site readability |

| Performance | ||

| Memory load | SKY-P401 | .read() / .readlines() loads entire file |

| Pandas no chunk | SKY-P402 | read_csv() without chunksize |

| Nested loop | SKY-P403 | O(N²) complexity |

| Unreachable | ||

| Unreachable Code | SKY-UC001 | if False: or else after always-true |

| Empty | ||

| Empty File | SKY-E002 | Empty File |

To ignore a specific rule:

# pyproject.toml

[tool.skylos]

ignore = ["SKY-P403"] # Allow nested loops

Tune thresholds and disable rules in pyproject.toml:

[tool.skylos]

# Adjust thresholds

complexity = 15 # Default: 10

nesting = 4 # Default: 3

max_args = 7 # Default: 5

max_lines = 80

Suppressing Duplicate String Findings

Skylos flags string literals that appear 3+ times (rule SKY-L027). If a repeated string is intentional (e.g. a status value checked in multiple places), you have three options:

Option 1: Raise the threshold — only flag strings repeated more than N times:

# pyproject.toml

[tool.skylos]

duplicate_strings = 10 # Default: 3. Set to 999 to effectively disable.

Option 2: Disable the rule entirely:

# pyproject.toml

[tool.skylos]

ignore = ["SKY-L027"]

Option 3: Suppress inline — on the specific line:

if somevar == "lokal": # skylos: ignore

do_something()

Technical Debt (skylos debt)

Ranks structural debt hotspots using the existing static findings from quality, architecture, and dead code analysis.

skylos debt .

skylos debt . --changed

skylos debt . --baseline

skylos debt . --save-baseline

skylos debt . --history

skylos debt . --json

How the debt output works:

| Field | Meaning |

|---|---|

| score | Structural debt score for the hotspot itself |

| priority | Triage priority for what to fix next. Changed files and baseline drift raise this without changing structural debt score |

| project score | Repo-level structural debt score. This stays project-scoped even when --changed is used |

| baseline status | Whether a hotspot is new, worsened, improved, or unchanged versus the saved debt baseline |

--changed is a filter/view mode, not a different scoring model. It limits the visible hotspot list to git-changed files, but the repo debt score still reflects the full project.

Debt baselines and debt history are project-level artifacts. --save-baseline and --history only work when you scan the project root.

Default CLI Options (addopts)

Set default flags in pyproject.toml so you don't have to type them every time — just like pytest's addopts:

[tool.skylos]

addopts = ["--quality", "--danger", "--secrets"]

String format also works:

[tool.skylos]

addopts = "--quality --danger --confidence=80"

CLI flags override addopts, so you can always narrow or widen a run without editing config.

Skylos also honors [tool.skylos].exclude during CLI scans, which is the cleanest place

to keep team-specific paths like custom venv names or .claude/worktrees/.

Legacy AI Flags

# LLM-powered audit (single file)

skylos . --audit

# Specify model

skylos . --audit --model claude-haiku-4-5-20251001

Note: For full project context and better results, use

skylos agent scaninstead. For auto-fixing, useskylos agent remediate.

Combine Everything

skylos . -a # All static scans (danger + secrets + quality + sca)

skylos agent remediate . --dry-run # Preview AI-assisted fixes

Smart Tracing

Static analysis can't see everything. Python's dynamic nature means patterns like getattr(), plugin registries, and string-based dispatch look like dead code—but they're not.

Smart tracing solves this. By running your tests with sys.settrace(), Skylos records every function that actually gets called.

Quick Start

# Run tests with call tracing, then analyze

skylos . --trace

# Trace data is saved to .skylos_trace

skylos .

How It Works

| Analysis Type | Accuracy | What It Catches |

|---|---|---|

| Static only | 70-85% | Direct calls, imports, decorators |

| + Framework rules | 85-95% | Django/Flask routes, pytest fixtures |

+ --trace |

95-99% | Dynamic dispatch, plugins, registries |

Example

# Static analysis will think this is dead because there's no direct call visible

def handle_login():

return "Login handler"

# But it is actually called dynamically at runtime

action = request.args.get("action")

func = getattr(module, f"handle_{action}")

func() # here

| Without Tracing | With --trace |

|---|---|

handle_login flagged as dead |

handle_login marked as used |

When To Use

| Situation | Command |

|---|---|

| Have pytest/unittest tests | skylos . --trace |

| No tests | skylos . (static only; repeated runs reuse .skylos/cache/grep_results.json for grep verification) |

| CI with cached trace | skylos . (reuses .skylos_trace) |

What Tracing Catches

These patterns are invisible to static analysis but caught with --trace:

# 1. Dynamic dispatch

func = getattr(module, f"handle_{action}")

func()

# 2. Plugin or registry patterns

PLUGINS = []

def register(f):

PLUGINS.append(f)

return f

@register

def my_plugin(): ...

# 3. Visitor patterns

class MyVisitor(ast.NodeVisitor):

def visit_FunctionDef(self, node): ... # Called via getattr

# 4. String-based access

globals()["my_" + "func"]()

locals()[func_name]()

Important Notes

- Tracing only adds information. Low test coverage won't create false positives. It just means some dynamic patterns may still be flagged.

- Commit

.skylos_traceto reuse trace data in CI without re-running tests. - Tests don't need to pass. Tracing records what executes, regardless of pass/fail status.

Filtering

Control what Skylos analyzes and what it ignores.

Inline Suppression

Silence specific findings with comments:

# Ignore dead code detection on this line

def internal_hook(): # pragma: no skylos

pass

# this also works

def another(): # pragma: no cover

pass

def yet_another(): # noqa

pass

Folder Exclusion

By default, Skylos excludes: __pycache__, .git, .pytest_cache, .mypy_cache, .tox, htmlcov, .coverage, build, dist, *.egg-info, venv, .venv

# See what's excluded by default

skylos --list-default-excludes

# Add more exclusions

skylos . --exclude-folder vendor --exclude-folder generated

# Skylos also respects project `.gitignore` entries during file discovery

# so ignored folders like custom venvs and worktrees are skipped automatically

# Force include an excluded folder

skylos . --include-folder venv

# Scan everything (no exclusions)

skylos . --no-default-excludes

Use [tool.skylos].exclude in pyproject.toml for team-wide custom exclusions that should apply

even outside .gitignore.

Rule Suppression

Disable rules globally in pyproject.toml:

[tool.skylos]

ignore = [

"SKY-P403", # Allow nested loops

"SKY-L003", # Allow == None

"SKY-S101", # Allow hardcoded secrets (not recommended)

]

Summary

| Want to... | Do this |

|---|---|

| Skip one line | # pragma: no skylos |

| Skip one secret | # skylos: ignore[SKY-S101] |

| Skip a folder | --exclude-folder NAME |

| Skip a rule globally | ignore = ["SKY-XXX"] in pyproject.toml |

| Include excluded folder | --include-folder NAME |

| Skip team-specific folders | exclude = ["customenv", ".claude/worktrees"] in pyproject.toml |

| Run all checks | -a or addopts in pyproject.toml |

| Scan everything | --no-default-excludes |

Whitelist Configuration

Suppress false positives permanently without inline comments cluttering your code.

CLI Commands

# Add a pattern

skylos whitelist 'handle_*'

# Add with reason

skylos whitelist dark_logic --reason "Called via globals() in dispatcher"

# View current whitelist

skylos whitelist --show

Inline Ignores

# Single line

def dynamic_handler(): # skylos: ignore

pass

# Also works

def another(): # noqa: skylos

pass

# Block ignore

# skylos: ignore-start

def block_one():

pass

def block_two():

pass

# skylos: ignore-end

Config File (pyproject.toml)

[tool.skylos.whitelist]

# Glob patterns

names = [

"handle_*",

"visit_*",

"*Plugin",

]

# With reasons (shows in --show output)

[tool.skylos.whitelist.documented]

"dark_logic" = "Called via globals() string manipulation"

"BasePlugin" = "Discovered via __subclasses__()"

# Temporary (warns when expired)

[tool.skylos.whitelist.temporary]

"legacy_handler" = { reason = "Migration - JIRA-123", expires = "2026-03-01" }

# Per-path overrides

[tool.skylos.overrides."src/plugins/*"]

whitelist = ["*Plugin", "*Handler"]

Summary

| Want to... | Do this |

|---|---|

| Whitelist one function | skylos whitelist func_name |

| Whitelist a pattern | skylos whitelist 'handle_*' |

| Document why | skylos whitelist x --reason "why" |

| Temporary whitelist | Add to [tool.skylos.whitelist.temporary] with expires |

| Per-folder rules | Add [tool.skylos.overrides."path/*"] |

| View whitelist | skylos whitelist --show |

| Inline ignore | # skylos: ignore or # noqa: skylos |

| Block ignore | # skylos: ignore-start ... # skylos: ignore-end |

CLI Options

Main Command Flags

Usage: skylos [OPTIONS] PATH

Arguments:

PATH Path to the Python project to analyze

Options:

-h, --help Show this help message and exit

--json Output raw JSON instead of formatted text

--tree Output results in tree format

--tui Launch interactive TUI dashboard

--sarif Output SARIF format for GitHub/IDE integration

--llm Output LLM-optimized report with code context for AI agents

-c, --confidence LEVEL Confidence threshold 0-100 (default: 60)

--comment-out Comment out code instead of deleting

-o, --output FILE Write output to file instead of stdout

-v, --verbose Enable verbose output

--version Checks version

-i, --interactive Interactively select items to remove

--dry-run Show what would be removed without modifying files

--exclude-folder FOLDER Exclude a folder from analysis (can be used multiple times)

--include-folder FOLDER Force include a folder that would otherwise be excluded

--no-default-excludes Don't exclude default folders (__pycache__, .git, venv, etc.)

--list-default-excludes List the default excluded folders

--secrets Scan for api keys/secrets

--danger Scan for dangerous code

--quality Code complexity and maintainability

--sca Scan dependencies for known CVEs (OSV.dev)

-a, --all Enable all checks: --danger --secrets --quality --sca

--trace Run tests with coverage first

--audit LLM-powered logic review (legacy)

--model MODEL LLM model (default: gpt-4.1)

--gate Fail on threshold breach (for CI)

--force Bypass quality gate (emergency override)

Agent Command Flags

Usage: skylos agent <command> [OPTIONS] PATH

Commands:

scan Hybrid static + LLM analysis (replaces analyze/audit/review/security-audit)

verify LLM-verify dead code findings

remediate Scan, fix, test, and create PR (end-to-end)

watch Continuous repo monitoring

pre-commit Staged-files-only analysis for git hooks

triage Manage finding triage (suggest/dismiss/snooze/restore)

status Show active-agent summary

serve Local HTTP API for editor integrations

Agent scan options:

--model MODEL LLM model to use (default: gpt-4.1)

--provider PROVIDER Force provider: openai or anthropic

--base-url URL Custom endpoint for local LLMs

--format FORMAT Output: table, tree, json, sarif

-o, --output FILE Write output to file

--min-confidence LEVEL Filter: high, medium, low

--no-fixes Skip fix suggestions (faster)

--changed Analyze only git-changed files

--security Security-only LLM audit mode

-i, --interactive Interactive file selection (with --security)

Agent remediate options:

--dry-run Show plan without applying fixes (safe preview)

--max-fixes N Max findings to fix per run (default: 10)

--auto-pr Create branch, commit, push, and open PR

--branch-prefix PREFIX Git branch prefix (default: skylos/fix)

--test-cmd CMD Custom test command (default: auto-detect)

--severity LEVEL Min severity filter: critical, high, medium, low

--standards [FILE] Enable LLM cleanup mode (uses built-in standards, or pass custom .md)

Agent watch options:

--once Run one refresh cycle and exit

--interval SECONDS Poll interval for continuous watch mode

--cycles N Stop after N refresh cycles (0 = keep watching)

--learn Enable triage pattern learning during watch mode

--format FORMAT Output: table, json

AI Defense Command Flags

Usage: skylos discover [OPTIONS] PATH

Map all LLM integrations in a Python codebase.

Options:

--json Output as JSON

-o, --output FILE Write output to file

--exclude FOLDER [FOLDER...] Additional folders to exclude

Usage: skylos defend [OPTIONS] PATH

Check LLM integrations for missing defenses.

Options:

--json Output as JSON

-o, --output FILE Write output to file

--min-severity LEVEL Minimum severity to include (critical/high/medium/low)

--fail-on LEVEL Exit 1 if any defense finding at or above this severity

--min-score N Exit 1 if defense score below this percentage (0-100)

--policy FILE Path to skylos-defend.yaml policy file

--owasp IDS Comma-separated OWASP LLM IDs (e.g. LLM01,LLM04)

--exclude FOLDER [FOLDER...] Additional folders to exclude

--upload Upload defense results to Skylos Cloud dashboard

Commands

Commands:

skylos PATH Analyze a project (static analysis)

skylos debt PATH Analyze technical debt hotspots

skylos discover PATH Map LLM integrations in a codebase

skylos defend PATH Check LLM integrations for missing defenses

skylos agent scan PATH Hybrid static + LLM analysis

skylos agent verify PATH LLM-verify dead code findings

skylos agent remediate PATH End-to-end scan, fix, test, and PR

skylos agent triage CMD Manage finding triage

skylos baseline PATH Snapshot current findings for CI baselining

skylos cicd init Generate GitHub Actions workflow

skylos cicd gate Check findings against quality gate

skylos cicd annotate Emit GitHub Actions annotations

skylos cicd review Post inline PR review comments (supports --llm-input)

skylos init Initialize pyproject.toml config

skylos key Manage API keys (add/remove/list)

skylos whitelist PATTERN Add pattern to whitelist

skylos whitelist --show Display current whitelist

skylos run Start web UI at localhost:5090

Whitelist Options:

skylos whitelist PATTERN Add glob pattern (e.g., 'handle_*')

skylos whitelist NAME --reason X Add with documentation

skylos whitelist --show Display all whitelist entries

CLI Output

Skylos displays confidence for each finding:

────────────────── Unused Functions ──────────────────

# Name Location Conf

1 handle_secret app.py:16 70%

2 totally_dead app.py:50 90%

Higher confidence = more certain it's dead code.

Interactive Mode

The interactive mode lets you select specific functions and imports to remove:

- Select items: Use arrow keys and

spacebarto select/unselect - Confirm changes: Review selected items before applying

- Auto-cleanup: Files are automatically updated

FAQ

Q: Why doesn't Skylos find 100% of dead code?

A: Python's dynamic features (getattr, globals, etc.) can't be perfectly analyzed statically. No tool can achieve 100% accuracy. If they say they can, they're lying.

Q: Are these benchmarks realistic?

A: They test common scenarios but can't cover every edge case. Use them as a guide, not gospel.

Q: Why doesn't Skylos detect my unused Flask routes?

A: Web framework routes are given low confidence (20) because they might be called by external HTTP requests. Use --confidence 20 to see them. We acknowledge there are current limitations to this approach so use it sparingly.

Q: What confidence level should I use?

A: Start with 60 (default) for safe cleanup. Use 30 for framework applications. Use 20 for more comprehensive auditing.

Q: What does --trace do?

A: It runs pytest (or unittest) with coverage tracking before analysis. Functions that actually executed are marked as used with 100% confidence, eliminating false positives from dynamic dispatch patterns.

Q: Do I need 100% test coverage for --trace to be useful?

A: No. However, we STRONGLY encourage you to have tests. Any coverage helps. If you have 30% test coverage, that's 30% of your code verified. The other 70% still uses static analysis. Coverage only removes false positives, it never adds them.