nvidia-anthropic-proxy

skill

Warning

Health: Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 34 GitHub stars

Code: Warning

- network request — Outbound network request in index.js

Permissions: Passed

- Permissions — No dangerous permissions requested




NVIDIA Anthropic Proxy

A Cloudflare Worker proxy that enables Claude Code to use NVIDIA NIM API models.

- Use NVIDIA NIM open-source models (Llama, Minimax, GLM, etc.) in Claude Code

- Switch models with Claude Code's `/model` command while keeping the full Claude Code experience

- Low-latency access via Cloudflare's global edge network

Quick Start

1. Deploy the Proxy

```bash
git clone https://github.com/evanlong-me/nvidia-anthropic-proxy.git
cd nvidia-anthropic-proxy
npm run setup
npm run deploy
```

Prerequisites:

- NVIDIA API Key: required during `npm run setup`
- Cloudflare account: `npm run setup` will prompt you to log in on first run (or set the `CLOUDFLARE_API_TOKEN` env var for non-interactive use)

After deployment, you'll get a Worker URL such as `https://nvidia-anthropic-proxy.xxx.workers.dev`, where `xxx` is your account's workers.dev subdomain.
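To smoke-test the deployed Worker before pointing Claude Code at it, you can send it a single Anthropic-format request. The sketch below assumes the Worker accepts Anthropic Messages API calls at `POST /v1/messages` (the path Claude Code calls relative to `ANTHROPIC_BASE_URL`). The helper name `buildMessagesRequest` and the placeholder `x-api-key` value are hypothetical; whether the proxy reads that header at all is an assumption.

```javascript
// Hypothetical helper: builds an Anthropic Messages API request aimed at the proxy.
// Assumes the Worker serves POST /v1/messages; not taken from the project's index.js.
function buildMessagesRequest(baseUrl, model, prompt) {
  // Strip trailing slashes so ".../workers.dev/" and ".../workers.dev" behave alike.
  const url = baseUrl.replace(/\/+$/, "") + "/v1/messages";
  const body = {
    model, // an NVIDIA NIM model ID, e.g. "minimaxai/minimax-m2.1"
    max_tokens: 256,
    messages: [{ role: "user", content: prompt }],
  };
  return {
    url,
    init: {
      method: "POST",
      headers: {
        "content-type": "application/json",
        "anthropic-version": "2023-06-01",
        // Placeholder value; whether the proxy checks this header is an assumption.
        "x-api-key": "placeholder",
      },
      body: JSON.stringify(body),
    },
  };
}

// Usage (Node 18+ or any runtime with a global fetch):
// const { url, init } = buildMessagesRequest(
//   "https://nvidia-anthropic-proxy.xxx.workers.dev", "minimaxai/minimax-m2.1", "Say hello");
// const res = await fetch(url, init);
// console.log(res.status, await res.json());
```

A non-2xx status here usually points at the deployment or the NVIDIA key rather than at Claude Code's configuration.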

2. Configure Claude Code

Edit `~/.claude/settings.json`:

```json
{
  "env": {
    "ANTHROPIC_BASE_URL": "https://nvidia-anthropic-proxy.xxx.workers.dev",
    "ANTHROPIC_DEFAULT_OPUS_MODEL": "minimaxai/minimax-m2.1",
    "ANTHROPIC_DEFAULT_SONNET_MODEL": "minimaxai/minimax-m2.1",
    "ANTHROPIC_DEFAULT_HAIKU_MODEL": "z-ai/glm4.7"
  }
}
```

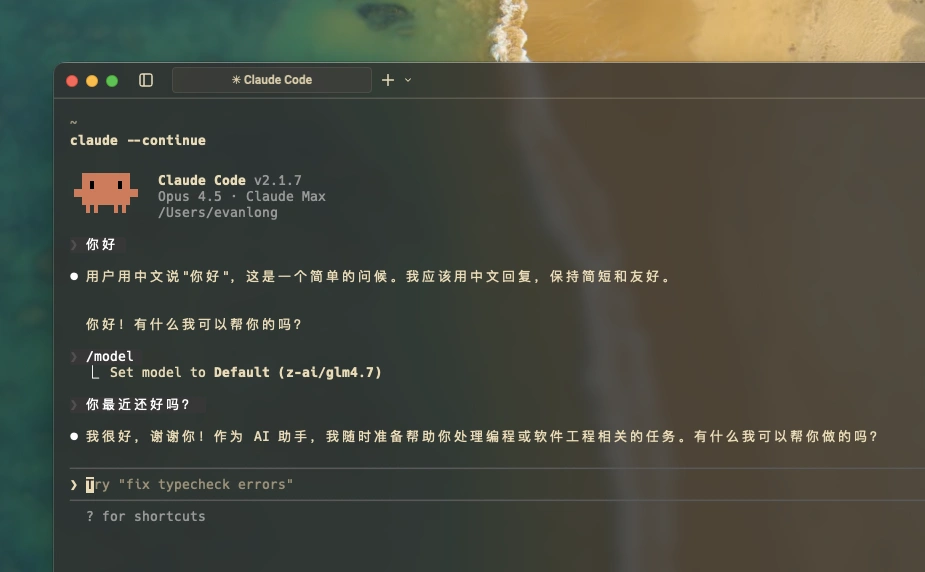
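If `~/.claude/settings.json` already contains other settings, replacing the whole file with the `env` block above would discard them. A minimal Node.js sketch that merges only these four `env` keys and leaves everything else untouched; the function names `mergeProxySettings` and `updateSettingsFile` are hypothetical, and the model IDs are the ones from the example configuration.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");

// Hypothetical helper: returns a copy of `settings` with the proxy env vars merged in.
// Other top-level keys and other "env" entries are preserved.
function mergeProxySettings(settings, workerUrl) {
  return {
    ...settings,
    env: {
      ...(settings.env || {}),
      ANTHROPIC_BASE_URL: workerUrl,
      ANTHROPIC_DEFAULT_OPUS_MODEL: "minimaxai/minimax-m2.1",
      ANTHROPIC_DEFAULT_SONNET_MODEL: "minimaxai/minimax-m2.1",
      ANTHROPIC_DEFAULT_HAIKU_MODEL: "z-ai/glm4.7",
    },
  };
}

// Reads ~/.claude/settings.json (or starts from {} if missing), merges, and writes it back.
function updateSettingsFile(workerUrl) {
  const file = path.join(os.homedir(), ".claude", "settings.json");
  const current = fs.existsSync(file) ? JSON.parse(fs.readFileSync(file, "utf8")) : {};
  fs.mkdirSync(path.dirname(file), { recursive: true });
  fs.writeFileSync(file, JSON.stringify(mergeProxySettings(current, workerUrl), null, 2) + "\n");
}

// Usage: updateSettingsFile("https://nvidia-anthropic-proxy.xxx.workers.dev");
```

Keeping the merge logic in a pure function makes it easy to check before anything is written to disk.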

3. Start Using

```bash
claude
```

Then switch models inside Claude Code:

- `/model opus` → `minimaxai/minimax-m2.1`
- `/model sonnet` → `minimaxai/minimax-m2.1`
- `/model haiku` → `z-ai/glm4.7`
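Each `/model` alias maps onto one of the `ANTHROPIC_DEFAULT_*_MODEL` variables from `settings.json`, and the resulting NIM model ID is what reaches the proxy. The sketch below only illustrates that lookup under the example configuration; it is not Claude Code's own code, and `resolveModelAlias` is a hypothetical name.

```javascript
// Hypothetical illustration: which env var backs each /model alias.
const ALIAS_TO_ENV = {
  opus: "ANTHROPIC_DEFAULT_OPUS_MODEL",
  sonnet: "ANTHROPIC_DEFAULT_SONNET_MODEL",
  haiku: "ANTHROPIC_DEFAULT_HAIKU_MODEL",
};

// Returns the model ID configured for an alias, or throws if the alias or variable is missing.
function resolveModelAlias(alias, env) {
  const key = ALIAS_TO_ENV[alias];
  if (!key) throw new Error(`unknown alias: ${alias}`);
  const model = env[key];
  if (!model) throw new Error(`${key} is not set`);
  return model;
}

// Values from the example settings.json.
const env = {
  ANTHROPIC_DEFAULT_OPUS_MODEL: "minimaxai/minimax-m2.1",
  ANTHROPIC_DEFAULT_SONNET_MODEL: "minimaxai/minimax-m2.1",
  ANTHROPIC_DEFAULT_HAIKU_MODEL: "z-ai/glm4.7",
};
console.log(resolveModelAlias("haiku", env)); // z-ai/glm4.7
```

Because opus and sonnet point at the same ID in this configuration, switching between them changes nothing on the NVIDIA side.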

Supported Models

The proxy uses pass-through mode, so it supports all NVIDIA NIM models. Examples:

| Model | Description |
|---|---|
| `minimaxai/minimax-m2.1` | Latest Minimax model, strong Chinese-language capability |
| `z-ai/glm4.7` | Zhipu GLM-4.7, fast responses |
| `meta/llama-3.3-70b-instruct` | Meta Llama 3.3 70B |
| `meta/llama-3.1-405b-instruct` | Meta Llama 3.1 405B |
| `deepseek-ai/deepseek-r1` | DeepSeek R1 reasoning model |
| `qwen/qwen2.5-72b-instruct` | Alibaba Qwen 2.5 72B |

Full list: build.nvidia.com/models
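Since any NIM model ID should work in pass-through mode, it can be handy to list the available IDs programmatically. A minimal sketch, assuming NVIDIA's OpenAI-compatible endpoint `https://integrate.api.nvidia.com/v1/models` returns the usual `{ data: [{ id }] }` list shape; the function names and the `NVIDIA_API_KEY` environment variable are hypothetical.

```javascript
// Pulls model IDs out of an OpenAI-style list response: { data: [{ id: "..." }, ...] }.
// The response shape is an assumption based on NVIDIA's OpenAI-compatible API.
function extractModelIds(listResponse) {
  return (listResponse.data || []).map((m) => m.id).sort();
}

// Hypothetical helper: fetches the catalog with a Bearer token (Node 18+ global fetch).
async function listNimModels(apiKey) {
  const res = await fetch("https://integrate.api.nvidia.com/v1/models", {
    headers: { Authorization: `Bearer ${apiKey}` },
  });
  if (!res.ok) throw new Error(`HTTP ${res.status}`);
  return extractModelIds(await res.json());
}

// Usage:
// listNimModels(process.env.NVIDIA_API_KEY).then((ids) =>
//   console.log(ids.filter((id) => id.startsWith("meta/"))));
```

Any ID printed this way can be pasted into one of the `ANTHROPIC_DEFAULT_*_MODEL` variables.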

How It Works

```
Claude Code (Anthropic format)
        ↓
This Proxy (Cloudflare Worker)
        ↓
Format Conversion (Anthropic → OpenAI)
        ↓
NVIDIA NIM API
```
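A simplified sketch of the format-conversion step, plus the reverse mapping that turns the NIM reply back into the Anthropic shape Claude Code reads. It is not the project's `index.js`; the function names are hypothetical, and it covers only text content, a string or text-block `system` prompt, and non-streaming responses (tool use, images, and streaming are out of scope).

```javascript
// Joins Anthropic content (a string or an array of blocks) into plain text.
// Non-text blocks (tool_use, image, ...) are ignored in this sketch.
function textOf(content) {
  if (typeof content === "string") return content;
  return (content || [])
    .filter((block) => block.type === "text")
    .map((block) => block.text)
    .join("\n");
}

// Anthropic Messages request -> OpenAI Chat Completions request.
function anthropicToOpenAI(req) {
  const messages = [];
  if (req.system) messages.push({ role: "system", content: textOf(req.system) });
  for (const m of req.messages) {
    messages.push({ role: m.role, content: textOf(m.content) });
  }
  const out = {
    model: req.model, // pass-through: the NIM model ID is forwarded unchanged
    messages,
    max_tokens: req.max_tokens,
    stream: false, // streaming is not handled in this sketch
  };
  if (req.temperature !== undefined) out.temperature = req.temperature;
  if (req.top_p !== undefined) out.top_p = req.top_p;
  if (req.stop_sequences) out.stop = req.stop_sequences;
  return out;
}

// OpenAI finish_reason -> Anthropic stop_reason (other values fall back to end_turn).
const STOP_REASONS = { stop: "end_turn", length: "max_tokens" };

// OpenAI Chat Completions response -> Anthropic Messages response.
function openAIToAnthropic(res) {
  const choice = res.choices[0];
  return {
    id: res.id,
    type: "message",
    role: "assistant",
    model: res.model,
    content: [{ type: "text", text: choice.message.content || "" }],
    stop_reason: STOP_REASONS[choice.finish_reason] || "end_turn",
    stop_sequence: null,
    usage: {
      input_tokens: res.usage ? res.usage.prompt_tokens : 0,
      output_tokens: res.usage ? res.usage.completion_tokens : 0,
    },
  };
}
```

Keeping both directions as pure functions means the Worker's request handler only has to parse JSON, call them, and forward the request to NVIDIA.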

Local Development

```bash
npm run dev   # Start local server
npm run tail  # View real-time logs
```


License

MIT
