EverOS

Health: Passed

- License: Apache-2.0

- Description: Repository has a description

- Active repo: Last push 0 days ago

- Community trust: 3,768 GitHub stars

Code: Passed

- Code scan: Scanned 12 files during a light audit; no dangerous patterns found

Permissions: Passed

- Permissions: No dangerous permissions requested

This project provides a comprehensive suite of methods, benchmarks, and use cases for integrating long-term memory capabilities into self-evolving AI agents.

Security Assessment

The overall risk is rated as Low. The automated code scan evaluated 12 files and found no dangerous patterns. The tool does not request any dangerous system permissions, and no hardcoded secrets were detected. Because this is an AI memory framework, it inherently processes and stores conversational data, but there are no signs of unauthorized data exfiltration, unexpected network requests, or hidden shell command execution.

Quality Assessment

The project shows strong quality and community-trust signals. It is released under the permissive Apache-2.0 license, which suits both open-source and commercial use. It is actively maintained, with the most recent push on the day of the scan, and its nearly 3,800 GitHub stars indicate broad community adoption. The project also appears to have a solid research foundation, backed by multiple arXiv papers and an active Discord community.

Verdict

Safe to use.


Important: Project Structure Update

We've unified EverCore, HyperMem, EverMemBench, and EvoAgentBench, along with the use cases, into a single repository called EverOS.

EverOS gives developers one place to build, evaluate, and integrate long-term memory into their self-evolving agents. 🎉

Project Overview

EverOS is a collection of long-term memory methods, benchmarks, and use cases for building self-evolving agents.

EverOS Structure

EverOS/
├── benchmarks/
│   ├── EverMemBench/     # Memory quality evaluation
│   └── EvoAgentBench/    # Agent self-evolution evaluation
├── methods/
│   ├── EverCore/         # Long-term memory operating system
│   └── HyperMem/         # Hypergraph memory architecture
└── usecases/             # Example applications

Methods

EverCore: A self-organizing memory operating system inspired by biological imprinting. It extracts, structures, and retrieves long-term knowledge from conversations, enabling agents to remember, understand, and continuously evolve.

HyperMem: A hypergraph-based hierarchical memory architecture that captures high-order associations through hyperedges. It organizes memory into topic, event, and fact layers for coarse-to-fine retrieval over long-term conversations. Reported LoCoMo score: 92.73%.
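The topic, event, and fact layers can be illustrated with a toy in-memory structure. In this sketch each event acts as a hyperedge that joins several facts at once, and retrieval narrows from topics to events to facts. All names (`Topic`, `Event`, `retrieve`) are hypothetical, and simple word overlap stands in for the embedding similarity a real system would likely use; this is not HyperMem's implementation.

```python
from dataclasses import dataclass, field


def tokens(text: str) -> set[str]:
    """Lowercased words with punctuation stripped (a crude stand-in for embeddings)."""
    return {w.strip(".,?!").lower() for w in text.split()}


def overlap(a: str, b: str) -> int:
    return len(tokens(a) & tokens(b))


@dataclass
class Event:
    summary: str
    facts: list[str]  # hyperedge: one event connects many facts at once


@dataclass
class Topic:
    name: str
    events: list[Event] = field(default_factory=list)


def retrieve(topics: list[Topic], query: str, k_topics: int = 1, k_facts: int = 2) -> list[str]:
    """Coarse-to-fine: best topics, then their events, then the facts those events join."""
    def topic_score(t: Topic) -> int:
        return overlap(t.name, query) + sum(overlap(e.summary, query) for e in t.events)

    best_topics = sorted(topics, key=topic_score, reverse=True)[:k_topics]
    events = sorted(
        (e for t in best_topics for e in t.events),
        key=lambda e: overlap(e.summary, query),
        reverse=True,
    )
    facts = [f for e in events for f in e.facts]
    return sorted(facts, key=lambda f: overlap(f, query), reverse=True)[:k_facts]


memory = [
    Topic("sports", [Event("weekend soccer games",
                           ["User plays soccer on weekends", "User's team is called Rovers"])]),
    Topic("work", [Event("project deadline", ["Deadline is Friday"])]),
]
print(retrieve(memory, "When does the user play soccer?", k_facts=1))
# ['User plays soccer on weekends']
```

Ranking topics first keeps the fine-grained fact comparison confined to a small candidate set. That is the practical payoff of a coarse-to-fine hierarchy.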

Benchmarks

EverMemBench: A three-layer memory quality evaluation covering factual recall, applied reasoning, and personalized generalization. It evaluates memory systems and LLMs under a unified standard.

EvoAgentBench: An agent self-evolution evaluation that tracks longitudinal growth curves rather than static snapshots. It quantifies transfer efficiency, error avoidance, and skill-hit quality through controlled experiments run with and without evolution.
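The difference between a snapshot and a growth curve can be illustrated with a short sketch. It computes a cumulative success rate over an ordered sequence of tasks, once for a run with evolution and once for a control run without it. It also computes a simple repeated-error rate as a proxy for error avoidance. These formulas and function names are assumptions chosen for illustration. EvoAgentBench's own definitions of transfer efficiency, error avoidance, and skill-hit quality may differ.

```python
def growth_curve(outcomes: list[bool]) -> list[float]:
    """Cumulative success rate after each task (a longitudinal view, not a snapshot)."""
    curve, successes = [], 0
    for i, ok in enumerate(outcomes, start=1):
        successes += ok
        curve.append(successes / i)
    return curve


def evolution_gain(with_evo: list[bool], without_evo: list[bool]) -> list[float]:
    """Per-step gap between the evolving run and the control run (hypothetical metric)."""
    if len(with_evo) != len(without_evo):
        raise ValueError("both runs must cover the same task sequence")
    return [a - b for a, b in zip(growth_curve(with_evo), growth_curve(without_evo))]


def repeated_error_rate(errors: list[str]) -> float:
    """Share of failures whose error type already occurred earlier (lower is better)."""
    seen, repeats = set(), 0
    for err in errors:
        repeats += err in seen
        seen.add(err)
    return repeats / len(errors) if errors else 0.0


with_evo = [False, True, True, True]      # toy outcomes, not benchmark data
without_evo = [False, False, True, False]
print(growth_curve(with_evo))  # [0.0, 0.5, 0.6666666666666666, 0.75]
print(evolution_gain(with_evo, without_evo))
```

A single end-of-run accuracy would hide when the evolving agent pulled ahead. The per-step gap makes that trajectory visible.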

All benchmarks are designed as open public standards, so any memory architecture or agent framework can be evaluated against the same yardstick.
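One way to read "the same yardstick" is as a fixed interface that every system under test implements, scored by a single shared loop. The sketch below assumes a hypothetical `MemorySystem` protocol with `add` and `answer` methods and groups accuracy by EverMemBench's three layers. The protocol, the exact-match grading, and the toy `KeywordMemory` baseline are illustrative assumptions and do not reflect the benchmark's real adapter API.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Protocol


class MemorySystem(Protocol):
    """Hypothetical interface each system under test would implement."""
    def add(self, speaker: str, text: str) -> None: ...
    def answer(self, question: str) -> str: ...


@dataclass
class Probe:
    layer: str  # "factual_recall", "applied_reasoning", or "personalized_generalization"
    question: str
    expected: str


def evaluate(system: MemorySystem, dialogue: list[tuple[str, str]], probes: list[Probe]) -> dict[str, float]:
    """Feed the same dialogue to any system, then score it per layer with the same probes."""
    for speaker, text in dialogue:
        system.add(speaker, text)
    correct, total = defaultdict(int), defaultdict(int)
    for p in probes:
        total[p.layer] += 1
        # Exact-match grading is an assumption; real benchmarks often use an LLM judge.
        correct[p.layer] += system.answer(p.question).strip().lower() == p.expected.lower()
    return {layer: correct[layer] / total[layer] for layer in total}


class KeywordMemory:
    """Toy baseline: returns the latest stored message sharing a word with the question."""
    def __init__(self) -> None:
        self.log: list[str] = []

    def add(self, speaker: str, text: str) -> None:
        self.log.append(text)

    def answer(self, question: str) -> str:
        words = {w.strip(".,?!") for w in question.lower().split()}
        hits = [t for t in self.log if words & set(t.lower().split())]
        return hits[-1] if hits else ""


dialogue = [("user_001", "I love playing soccer on weekends")]
probes = [
    Probe("factual_recall", "What about soccer?", "I love playing soccer on weekends"),
    Probe("applied_reasoning", "Suggest a weekday hobby", "reading"),
]
print(evaluate(KeywordMemory(), dialogue, probes))
# {'factual_recall': 1.0, 'applied_reasoning': 0.0}
```

Because `evaluate` only depends on the protocol, a vector store, a hypergraph memory, or an LLM with a long context window could be dropped in without changing the scoring code.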

Use Cases

EverMind + OpenClaw Agent Memory and Plugin

Imagine a 24/7 agent with continuously learning memory that you can carry with you wherever you go. See the agent_memory branch and the plugin for more details.

Live2D Character with Memory

Add long-term memory to an anime character that talks with you in real time, powered by TEN Framework.

See the Live2D Character with Memory Example for more details.

Computer-Use with Memory

Use computer-use to run screenshot-based analysis, with all results stored in your memory.

See the live demo for more details.

Game of Thrones Memories

A demonstration of AI memory infrastructure through an interactive Q&A experience based on "A Game of Thrones".

See the code for more details.

EverOS Claude Code Plugin

Persistent memory for Claude Code. Automatically saves and recalls context from past coding sessions.

See the code for more details.

Visualize Memories with Graphs

A Memory Graph view that visualizes your stored entities and how they relate to one another. This is a frontend-only demo that is not yet connected to the backend; that integration is in progress.

See the live demo.
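The graph view is described only at the UI level. The snippet below is a hedged sketch of the data such a view implies. It turns hypothetical (subject, relation, object) triples into an adjacency list and looks up an entity's neighbors, which is the structure a graph renderer would draw. The triple format and the `inverse:` edge labels are assumptions and should not be read as EverOS's storage schema.

```python
from collections import defaultdict


def build_graph(triples: list[tuple[str, str, str]]) -> dict[str, list[tuple[str, str]]]:
    """Map each entity to its (relation, neighbor) edges, stored in both directions."""
    graph: dict[str, list[tuple[str, str]]] = defaultdict(list)
    for subj, rel, obj in triples:
        graph[subj].append((rel, obj))
        graph[obj].append((f"inverse:{rel}", subj))  # lets the view navigate either way
    return dict(graph)


def neighbors(graph: dict[str, list[tuple[str, str]]], entity: str) -> set[str]:
    """Entities directly connected to `entity`, regardless of edge direction."""
    return {other for _, other in graph.get(entity, [])}


triples = [
    ("user_001", "likes", "soccer"),
    ("user_001", "plays_on", "weekends"),
    ("soccer", "is_a", "sport"),
]
graph = build_graph(triples)
print(sorted(neighbors(graph, "soccer")))  # ['sport', 'user_001']
```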

Quick Start

git clone https://github.com/EverMind-AI/EverOS.git
cd EverOS

Then navigate to the component you need:

| Component | Purpose | Entry Point |
|---|---|---|
| EverCore | Build agents with long-term memory | methods/everos/ |
| HyperMem | Use the hypergraph memory architecture | methods/HyperMem/ |
| EverMemBench | Evaluate memory system quality | benchmarks/EverMemBench/ |
| EvoAgentBench | Measure agent self-evolution | benchmarks/EvoAgentBench/ |

Each component has its own installation guide, dependency configuration, and usage examples.

EverCore Quick Start

cd methods/everos

# Start Docker services
docker compose up -d

# Install dependencies
curl -LsSf https://astral.sh/uv/install.sh | sh
uv sync

# Configure API keys
cp env.template .env
# Edit .env and set:
#   - LLM_API_KEY (for memory extraction)
#   - VECTORIZE_API_KEY (for embedding/rerank)

# Start server
uv run python src/run.py

# Verify installation (from a second terminal while the server is running)
curl http://localhost:1995/health
# Expected response: {"status": "healthy", ...}

The server runs at http://localhost:1995. See the Full Setup Guide for details.
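When the setup is scripted (for example in CI), it helps to poll the health endpoint until the server is up instead of calling it once. This is a minimal sketch that uses only the Python standard library. Based on the expected response above, it assumes a healthy server returns JSON containing `"status": "healthy"`. The `wait_for_health` helper is hypothetical.

```python
import http.client
import json
import time
import urllib.request


def wait_for_health(url: str = "http://localhost:1995/health",
                    timeout: float = 30.0, interval: float = 1.0) -> bool:
    """Poll `url` until it reports {"status": "healthy"} or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=interval) as resp:
                body = json.load(resp)
            if isinstance(body, dict) and body.get("status") == "healthy":
                return True
        except (OSError, ValueError, http.client.HTTPException):
            # OSError covers refused connections, timeouts, and HTTP error codes (URLError);
            # ValueError covers a body that is not valid JSON; HTTPException covers
            # malformed responses while the server is still starting.
            pass
        time.sleep(interval)
    return False
```

Calling `wait_for_health()` before running the Basic Usage example avoids spurious connection errors while Docker services and the server are still starting.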

Basic Usage

Store and retrieve memories with simple Python code:

import requests

API_BASE = "http://localhost:1995/api/v1"

# 1. Store a conversation memory
resp = requests.post(f"{API_BASE}/memories", json={
    "message_id": "msg_001",
    "create_time": "2025-02-01T10:00:00+00:00",  # ISO 8601 with UTC offset
    "sender": "user_001",
    "content": "I love playing soccer on weekends",
})
resp.raise_for_status()  # fail loudly if the memory was not stored

# 2. Search for relevant memories (this endpoint takes a JSON body on GET)
response = requests.get(f"{API_BASE}/memories/search", json={
    "query": "What sports does the user like?",
    "user_id": "user_001",
    "memory_types": ["episodic_memory"],
    "retrieve_method": "hybrid",
})
response.raise_for_status()

# 3. Print each returned memory group
result = response.json().get("result", {})
for memory_group in result.get("memories", []):
    print(f"Memory: {memory_group}")

More Examples · API Reference · Interactive Demos
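The store call in Basic Usage hard-codes `message_id` and `create_time`. A small helper can generate both. This sketch reuses the field names from that example (`message_id`, `create_time`, `sender`, `content`) and assumes an ISO 8601 timestamp with a UTC offset, matching `2025-02-01T10:00:00+00:00`. The helper name and the `msg_` plus UUID ID scheme are hypothetical, so check the API Reference for the required fields.

```python
import uuid
from datetime import datetime, timezone
from typing import Optional


def make_memory_message(sender: str, content: str, when: Optional[datetime] = None) -> dict:
    """Build a body for POST /api/v1/memories in the shape used by the example."""
    when = when or datetime.now(timezone.utc)
    if when.tzinfo is None:
        raise ValueError("create_time should be timezone-aware")
    return {
        "message_id": f"msg_{uuid.uuid4().hex[:12]}",  # hypothetical ID scheme
        "create_time": when.isoformat(timespec="seconds"),
        "sender": sender,
        "content": content,
    }


msg = make_memory_message("user_001", "I love playing soccer on weekends",
                          datetime(2025, 2, 1, 10, 0, tzinfo=timezone.utc))
print(msg["create_time"])  # 2025-02-01T10:00:00+00:00
# requests.post(f"{API_BASE}/memories", json=msg)
```

Rejecting naive datetimes avoids silently storing local times that the server might interpret as UTC.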

Evaluation & Benchmarking

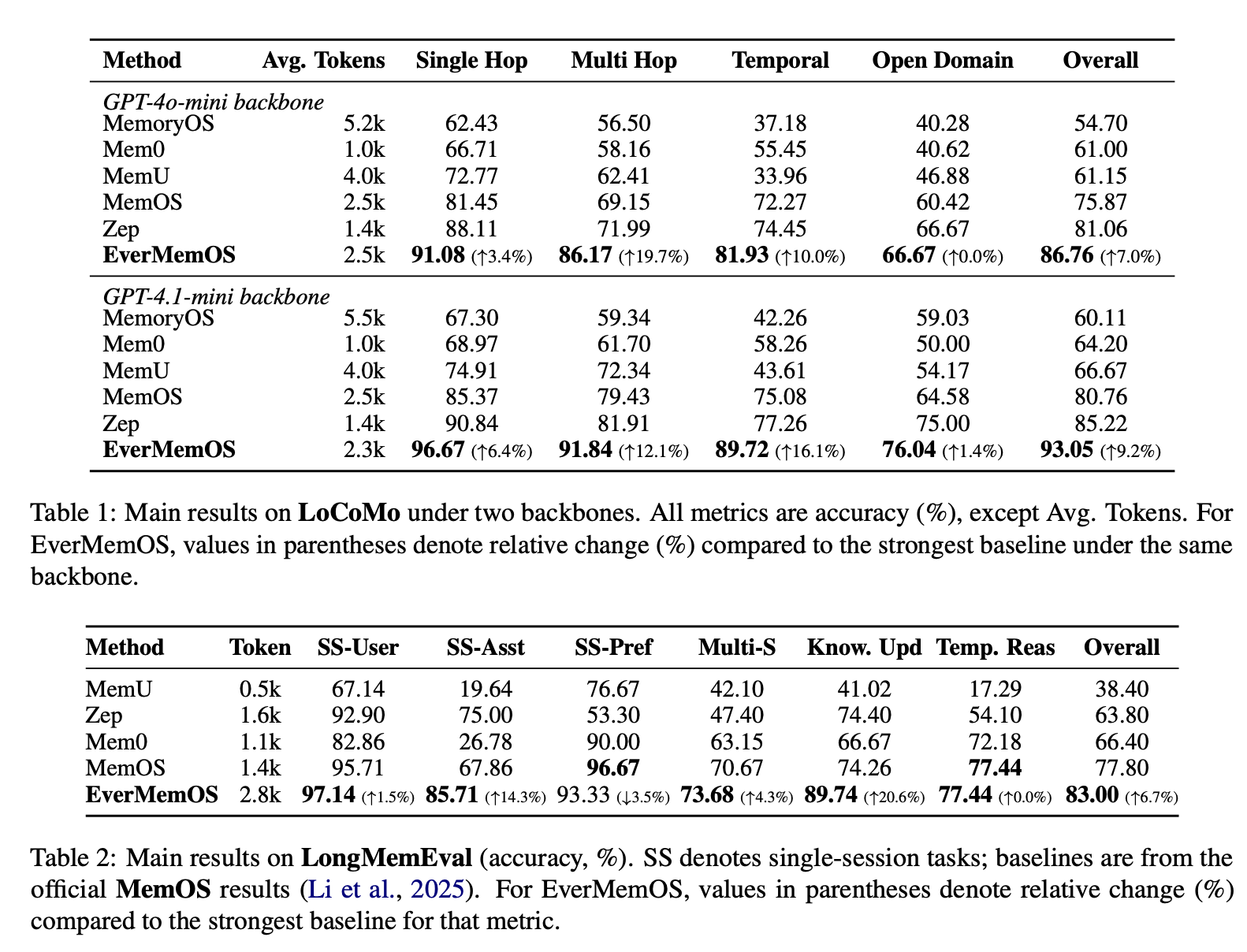

EverOS achieves 93% overall accuracy on the LoCoMo benchmark, outperforming comparable memory systems.
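The README does not specify how "overall accuracy" is aggregated across LoCoMo's question categories. Under the common micro-average (all correct answers over all questions, so larger categories carry more weight), the arithmetic looks like the sketch below. A macro-average over categories can give a different number from the same results. The category names and counts are toy values for illustration, not EverOS results.

```python
def micro_accuracy(per_category: dict[str, tuple[int, int]]) -> float:
    """All correct answers divided by all questions; large categories weigh more."""
    correct = sum(c for c, _ in per_category.values())
    total = sum(n for _, n in per_category.values())
    return correct / total


def macro_accuracy(per_category: dict[str, tuple[int, int]]) -> float:
    """Mean of per-category accuracies; every category weighs the same."""
    return sum(c / n for c, n in per_category.values()) / len(per_category)


# Toy (correct, total) counts for illustration only, not benchmark results.
toy = {"single_hop": (8, 10), "multi_hop": (1, 2)}
print(micro_accuracy(toy))  # 0.75
print(macro_accuracy(toy))
```

When comparing reported scores across memory systems, it is worth confirming that both use the same averaging.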

Benchmark Results

Supported Benchmarks

- LoCoMo — Long-context memory benchmark with single/multi-hop reasoning

- LongMemEval — Multi-session conversation evaluation

- PersonaMem — Persona-based memory evaluation

Run Evaluations

# Install evaluation dependencies
uv sync --group evaluation

# Run smoke test (quick verification)
uv run python -m evaluation.cli --dataset locomo --system everos --smoke

# Run full evaluation
uv run python -m evaluation.cli --dataset locomo --system everos

# View results
cat evaluation/results/locomo-everos/report.txt

Full Evaluation Guide · Complete Results

Citation

If EverOS helps your research, please cite:

@article{hu2026evermemos,
  title   = {EverMemOS: A Self-Organizing Memory Operating System for Structured Long-Horizon Reasoning},
  author  = {Chuanrui Hu and Xingze Gao and Zuyi Zhou and Dannong Xu and Yi Bai and Xintong Li and Hui Zhang and Tong Li and Chong Zhang and Lidong Bing and Yafeng Deng},
  journal = {arXiv preprint arXiv:2601.02163},
  year    = {2026}
}

@article{yue2026hypermem,
  title   = {HyperMem: Hypergraph Memory for Long-Term Conversations},
  author  = {Juwei Yue and Chuanrui Hu and Jiawei Sheng and Zuyi Zhou and Wenyuan Zhang and Tingwen Liu and Li Guo and Yafeng Deng},
  journal = {arXiv preprint arXiv:2604.08256},
  year    = {2026}
}

@article{hu2026evaluating,
  title   = {Evaluating Long-Horizon Memory for Multi-Party Collaborative Dialogues},
  author  = {Chuanrui Hu and Tong Li and Xingze Gao and Hongda Chen and Yi Bai and Dannong Xu and Tianwei Lin and Xiaohong Li and Yunyun Han and Jian Pei and Yafeng Deng},
  journal = {arXiv preprint arXiv:2602.01313},
  year    = {2026}
}

Contributing

Browse the Issues to find a place to start, or connect with the maintainers: @elliotchen200 on X and @cyfyifanchen on GitHub.

Code Contributors

License

EverOS is released under the Apache-2.0 license.
