omni

Health: Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 30 GitHub stars

Code: Passed

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions: Passed

- Permissions — No dangerous permissions requested

This tool acts as a semantic signal engine and context-aware terminal interceptor. It distills noisy command-line outputs into high-density intelligence before passing them to AI agents, aiming to reduce token consumption and prevent context window waste.

Security Assessment

Overall Risk: Medium. The tool's core function requires it to intercept and read local terminal outputs, which frequently contain sensitive data such as environment variables, file contents, and API keys. Additionally, its "Pre-Hook" mechanism natively rewrites and executes commands on your system (e.g., intercepting `git` or `cargo` and routing them through `omni exec`). While the light code scan found no hardcoded secrets or dangerous permission requests, granting an application the ability to rewrite and intercept shell commands inherently elevates its risk profile.

Quality Assessment

The project shows good health indicators. It is written in Rust, which provides strong memory safety guarantees, and it uses the permissive MIT license. The repository is active, with its latest push occurring today, and has 30 GitHub stars, indicating a small level of community adoption. The automated light audit scanned 12 files and found no dangerous patterns.

Verdict

Use with caution. The tool is actively maintained and the light code scan found no dangerous patterns, but its deep system-level access to intercept and rewrite shell commands requires you to fully trust the developer and understand the sensitive terminal data it processes.

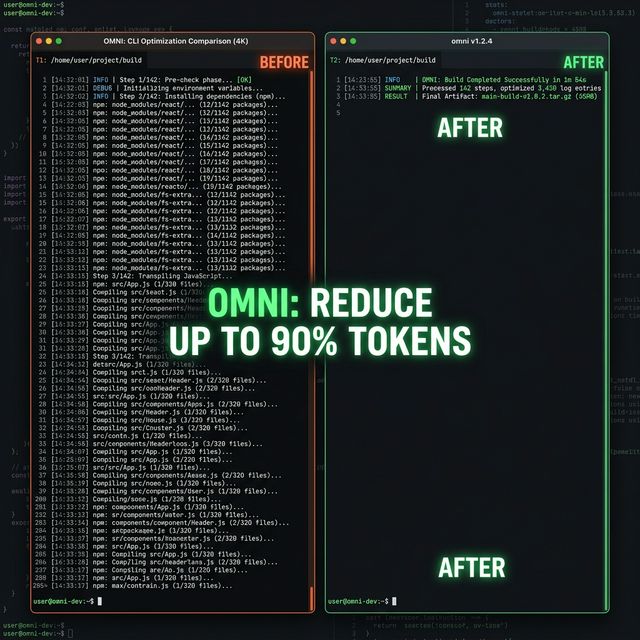

The Semantic Signal Engine that cuts AI token consumption by up to 90%.

OMNI acts as a context-aware terminal interceptor, distilling noisy command outputs in real time into high-density intelligence so your LLM agents work with meaning, not text waste.

Why OMNI?

AI agents are drowning in noisy CLI output. A git diff can easily eat 10K tokens, while a cargo test might dump 25K tokens of redundant noise. Claude and other agents read all of it, but up to 90% of that data is noise that dilutes reasoning and drains your token budget.

OMNI intercepts terminal output automatically, keeping only what matters for your current task. It’s not just about making output smaller; it’s about making it smarter. By understanding command structures and your active session context, OMNI ensures your agent sees the signal, not the waste.

- Cost & Latency: Large outputs consume your context window rapidly and increase the cost of every message.

- Cognitive Dilution: LLMs can lose track of complex reasoning when buried under megabytes of raw CLI logs.

- Auto-Truncation: Claude Code often cuts off large outputs, potentially missing the exact error or diff line it needs to see.

OMNI is the solution. It acts as a "Sieve" that sits between your terminal and the AI, turning raw data into Semantic Signals.

How It Works: The Signal Lifecycle

OMNI employs a unique, multi-layered native interception strategy to ensure maximum efficiency without losing information:

1. Surgical Pre-Hook (PreToolUse)

Intercepts noisy commands (like git, cargo, npm, pytest) before they execute. By natively rewriting these commands to omni exec, OMNI prevents auto-truncation and ensures the AI sees a distilled, high-density stream from the first line.

2. Safety-Net Post-Hook (PostToolUse)

Automatically distills output from any tool after it runs. This acts as a backup for custom scripts or unknown commands.

Real-time ROI feedback on every distilled command.

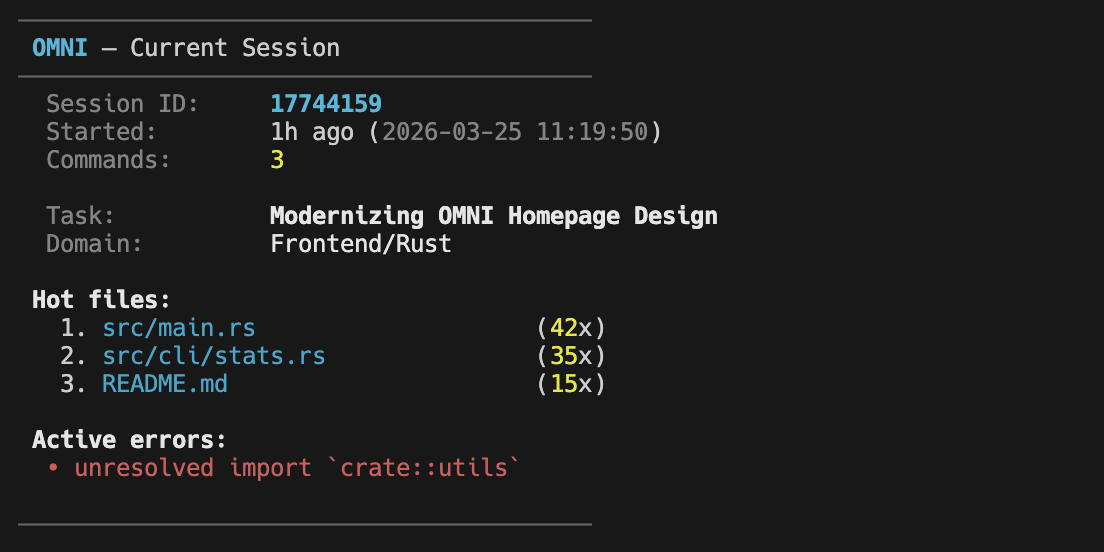

3. Session Continuity (SessionStart)

When you start a new Claude session, OMNI injects a high-level summary of your previous state—hot files, last errors, and active task context—so the agent never reaches for "context" it already had.

4. Smart Compaction (PreCompact)

Before Claude prunes its conversation history to save space, OMNI provides a permanent summary of the work done so far, ensuring long-term project memory stays sharp.
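The Pre-Hook rewrite from step 1 can be pictured with a minimal Python sketch. The command set mirrors the examples in the text; the function name `rewrite_for_omni` and the exact shape `omni exec <original command>` are assumptions made for illustration, not OMNI's actual hook implementation.

```python
import shlex

# Commands treated as noisy; taken from the examples in step 1.
NOISY_COMMANDS = {"git", "cargo", "npm", "pytest"}


def rewrite_for_omni(command: str) -> str:
    """Prefix a noisy command with `omni exec`; leave everything else untouched.

    Hypothetical helper: the real hook may inspect and rewrite commands differently.
    """
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar: do not touch what cannot be parsed.
        return command
    if not tokens or tokens[0] not in NOISY_COMMANDS:
        return command
    return "omni exec " + command
```

Under these assumptions, `rewrite_for_omni("git diff HEAD~1")` yields `omni exec git diff HEAD~1`, while a command such as `ls -la` passes through unchanged and is left to the Post-Hook.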

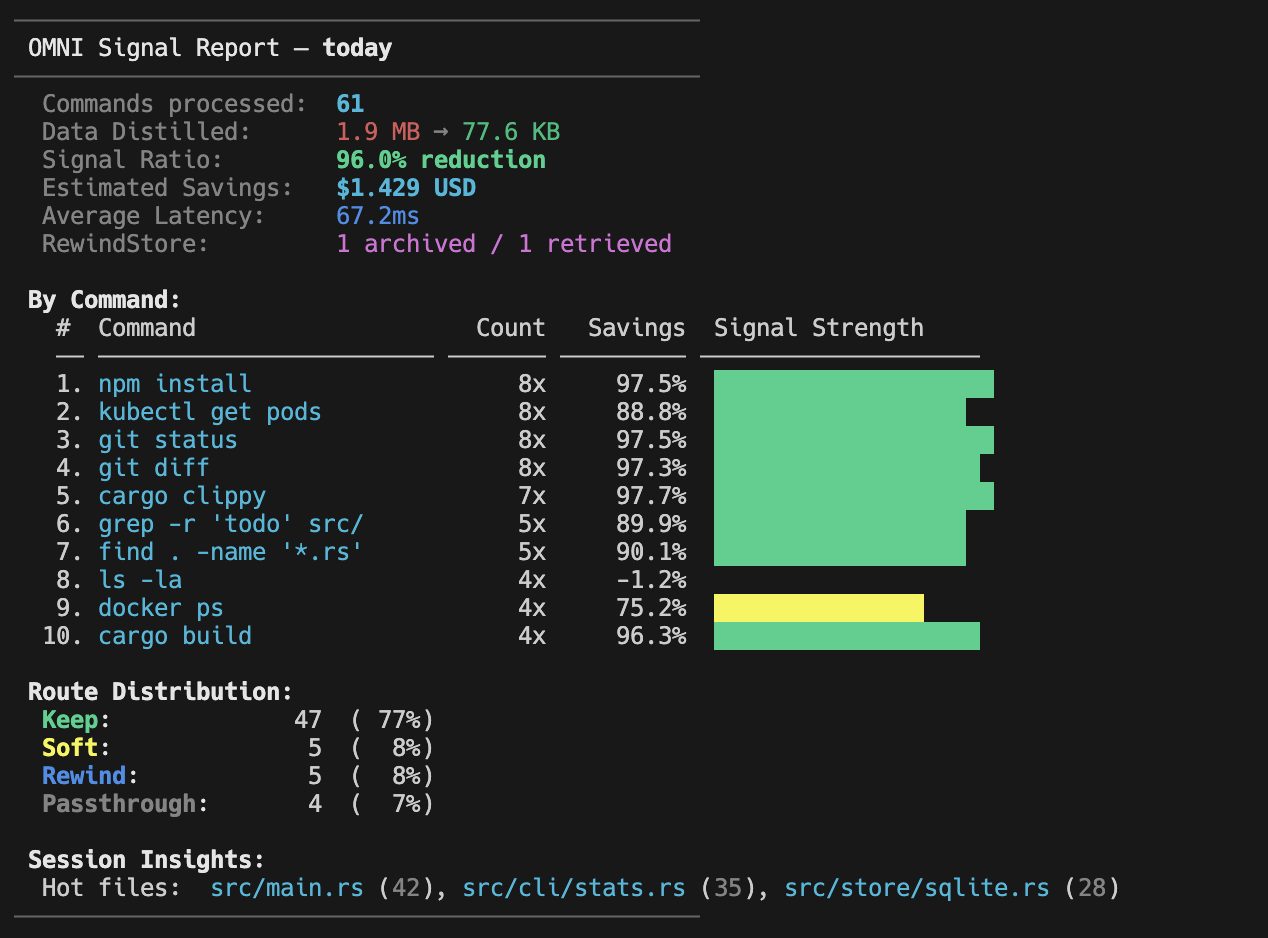

The Impact

Reduce AI Token Usage by up to 90%

Zero Information Loss. Native Binary Performance. Real-time ROI Monitoring.

Key Features

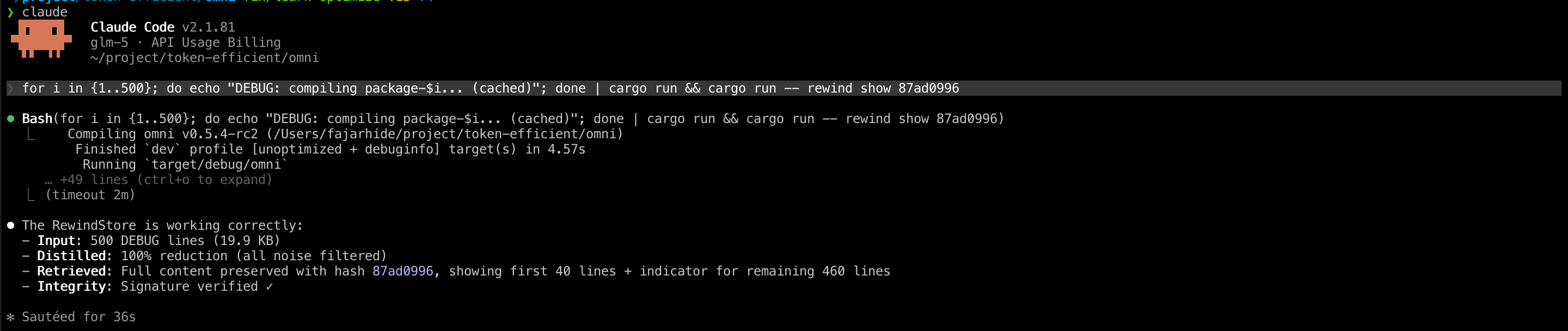

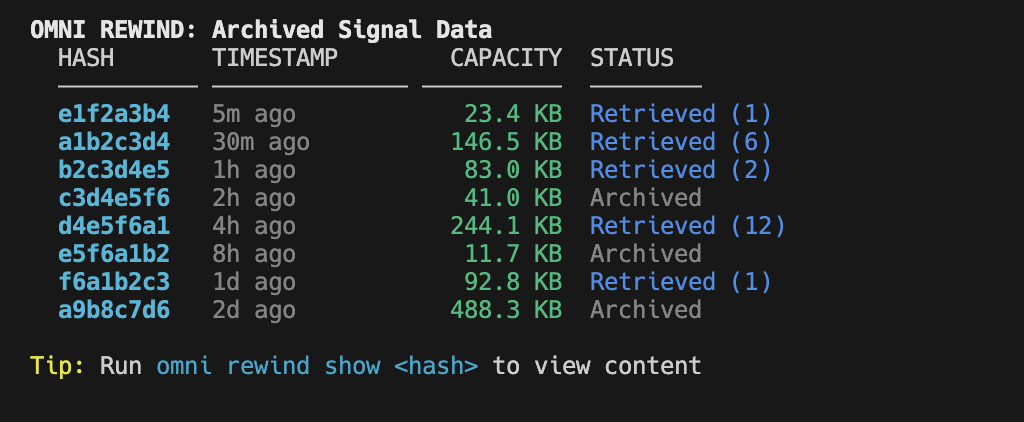

RewindStore: Zero Information Loss

When OMNI distills output, the original raw content isn't discarded—it's archived in the RewindStore with a SHA-256 hash.

- Agent Access: Call `omni_retrieve("hash")` via the MCP tool.

- Human Access: Use `omni rewind list` and `omni rewind show <hash>` to manage your archives locally.
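The idea of archiving raw output under its SHA-256 hash can be sketched in a few lines of Python. This is an illustration of content-addressed storage in general, not OMNI's code: the class name `RewindSketch` is hypothetical, and an in-memory dict stands in for whatever persistent store OMNI actually uses.

```python
import hashlib
from typing import Dict, Optional


class RewindSketch:
    """In-memory stand-in for a content-addressed archive (hypothetical)."""

    def __init__(self) -> None:
        self._archive: Dict[str, str] = {}

    def store(self, raw_output: str) -> str:
        """Archive the raw text and return its SHA-256 hex digest as the key."""
        digest = hashlib.sha256(raw_output.encode("utf-8")).hexdigest()
        self._archive[digest] = raw_output
        return digest

    def retrieve(self, digest: str) -> Optional[str]:
        """Return the archived text for a digest, or None if it is unknown."""
        return self._archive.get(digest)
```

Because the key is derived from the content, storing the same output twice yields the same hash, so the distilled signal only needs to carry that hash for the original to be recoverable.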

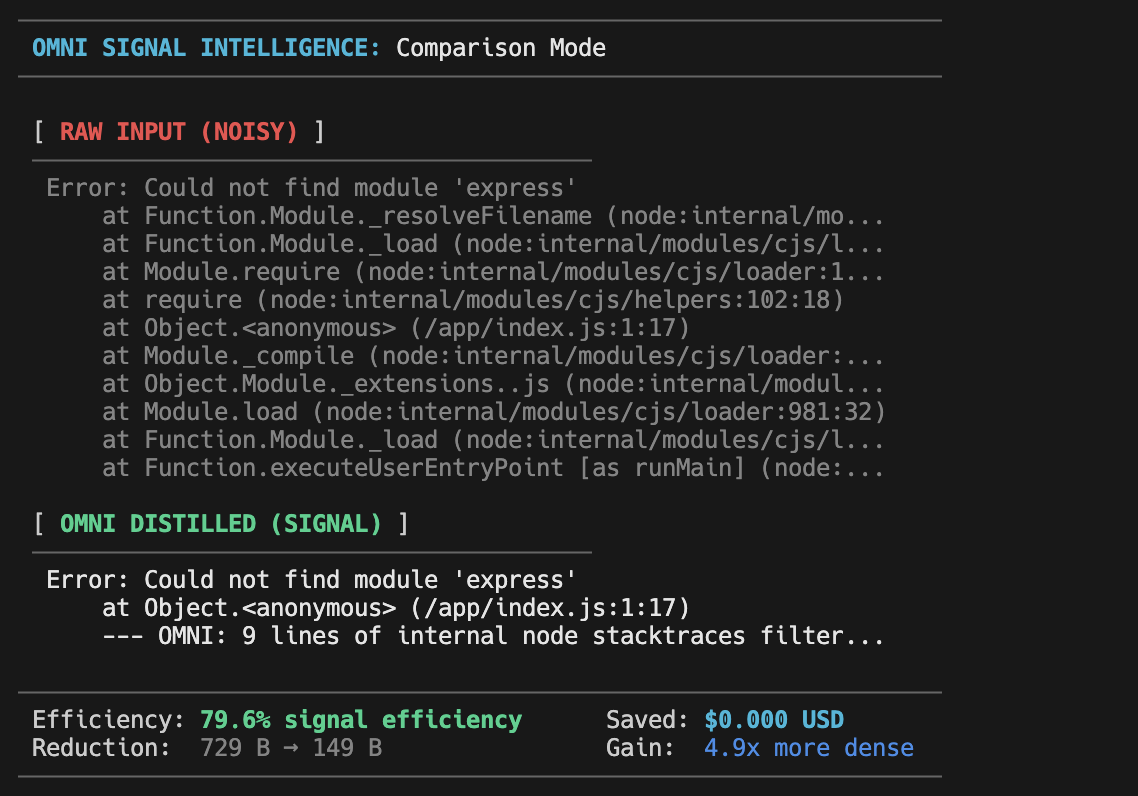

Signal Comparison: omni diff

Instantly visualize the value of OMNI. Run omni diff after any command to see a side-by-side comparison of the raw input vs. distilled signal.

Session Intelligence

OMNI doesn't just compress; it understands context. It tracks which files you are editing ("Hot Files") and which errors are recurring.
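As a rough illustration of this kind of tracking, the sketch below counts file edits and error messages with `collections.Counter`. The class and method names are hypothetical, and the thresholds are arbitrary; OMNI's real session model is not shown in this document.

```python
from collections import Counter
from typing import List


class SessionSketch:
    """Toy session tracker: counts edits per file and occurrences per error."""

    def __init__(self) -> None:
        self.file_edits: Counter = Counter()
        self.errors: Counter = Counter()

    def record_edit(self, path: str) -> None:
        self.file_edits[path] += 1

    def record_error(self, message: str) -> None:
        self.errors[message] += 1

    def hot_files(self, n: int = 3) -> List[str]:
        """The n most frequently edited files, most edited first."""
        return [path for path, _ in self.file_edits.most_common(n)]

    def recurring_errors(self, min_count: int = 2) -> List[str]:
        """Errors seen at least min_count times."""
        return [msg for msg, count in self.errors.items() if count >= min_count]
```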

Transcript & Recovery

OMNI safely persists session transcripts as you work. If your AI agent crashes or gets interrupted, you can seamlessly resume your session and pick up right where you left off. OMNI ensures you never lose critical tool calls or outputs. Use omni session --resume to recover an interrupted session.

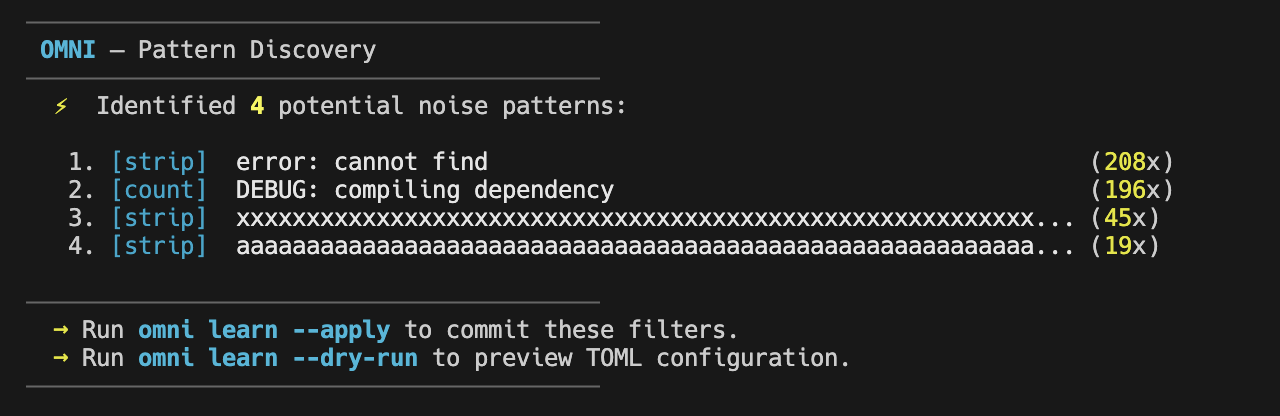

Pattern Discovery (Learning)

OMNI automatically collects samples of repetitive noise in the background. Use omni learn --status to discover new candidate filters.

The OMNI Philosophy: Deliberate Action

OMNI is designed for maximum safety and control. By default, core commands like init, session, and learn will only show a help screen if no flags are provided. This prevents accidental changes to your global configuration.

Every core command follows a consistent Discovery vs. Action pattern:

- Discovery: Use `--status` to see what OMNI has found (installation status, session details, or new noise patterns).

- Action: Use explicit flags like `--all`, `--apply`, or `--clear` to commit changes.
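The Discovery vs. Action pattern can be illustrated with a small `argparse` sketch. It only models the behavior described above (no flags means help, `--status` is read-only, an explicit flag is required to change anything); the program name and the returned strings are placeholders, not OMNI's actual CLI code.

```python
import argparse
from typing import List


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(prog="omni-sketch")
    parser.add_argument("--status", action="store_true", help="read-only discovery")
    parser.add_argument("--all", action="store_true", help="explicitly apply changes")
    return parser


def run(argv: List[str]) -> str:
    """Return which branch would run; a real command would act instead."""
    args = build_parser().parse_args(argv)
    if args.status:
        return "status"  # Discovery: report only, change nothing
    if args.all:
        return "apply"   # Action: the user asked for changes explicitly
    return "help"        # No flags: show help rather than guess intent
```

The point of the design is that the default branch has no side effects, so running a bare command by accident cannot modify configuration.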

Analytics Dashboard

Keep track of your project's efficiency with OMNI's built-in reporting:

Quick Start

```bash
# 1. Install via Homebrew (macOS/Linux)
brew install fajarhide/tap/omni

# 2. Perform Full Setup (Hooks + MCP Server)
omni init --all

# 3. Verify Installation
omni doctor

# 4. Or auto-fix any issues
omni doctor --fix

# 5. Check Current Status
omni init --status
```

Or use the universal install script:

```bash
curl -fsSL https://omni.weekndlabs.com/install | bash
```

Custom Filters (TOML)

You can define your own distillation rules for custom internal tools:

```toml
# ~/.omni/filters/deploy.toml
schema_version = 1

[filters.deploy]
description = "Internal deployment tool"
match_command = "^deploy\\b"

[[filters.deploy.match_output]]
pattern = "Deployment successful"
message = "deploy: ✓ success"

strip_lines_matching = ["^\\[DEBUG\\]", "^Connecting"]
max_lines = 30
```
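To make the intent of these fields concrete, here is a Python sketch of how such a rule might be applied. The semantics are assumptions inferred from the field names, not taken from OMNI's source: `match_output` is treated as a short-circuit that replaces the whole output with `message`, `strip_lines_matching` and `max_lines` are treated as filter-level settings, and `max_lines` keeps the first N remaining lines. The function `apply_filter` is hypothetical.

```python
import re
from typing import Any, Dict

# The deploy.toml example expressed as a plain dict (assumed structure).
DEPLOY_FILTER: Dict[str, Any] = {
    "match_command": r"^deploy\b",
    "match_output": [
        {"pattern": "Deployment successful", "message": "deploy: ✓ success"},
    ],
    "strip_lines_matching": [r"^\[DEBUG\]", r"^Connecting"],
    "max_lines": 30,
}


def apply_filter(rule: Dict[str, Any], command: str, output: str) -> str:
    """Distill output according to the rule, under the assumptions stated above."""
    if not re.search(rule["match_command"], command):
        return output  # Rule does not apply to this command.
    for matcher in rule["match_output"]:
        if re.search(matcher["pattern"], output):
            return matcher["message"]  # Collapse to the short message.
    strip = [re.compile(p) for p in rule["strip_lines_matching"]]
    kept = [
        line
        for line in output.splitlines()
        if not any(p.search(line) for p in strip)
    ]
    return "\n".join(kept[: rule["max_lines"]])
```

With this reading, a successful deployment collapses to a single line, while a failing run keeps its error lines and drops only the debug and connection chatter.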

Architecture

```mermaid
flowchart TB
    Agent["Claude Code / MCP Agent"]

    subgraph Hooks["Native Hook Layer (Transparent)"]
        Pre["Pre-Hook\n(Rewriter)"]
        Post["Post-Hook\n(Distiller)"]
        Sess["Session-Start\n(Context)"]
        Comp["Pre-Compact\n(Summary)"]
    end

    Agent --> Pre
    Pre -->|"omni exec"| Output["Raw Stream"]
    Output --> Post
    Post --> Agent

    subgraph OMNI_Engine["OMNI — Semantic Signal Engine"]
        direction LR
        C["Classifier"]
        S["Scorer\n(Context Boost)"]
        R["Composer\n(Signal Tiering)"]
        C --> S --> R
    end

    Post --> OMNI_Engine
    Pre --> OMNI_Engine

    subgraph Persistence["Persistence Store (SQLite)"]
        ST["SessionState"]
        RW["RewindStore"]
    end

    OMNI_Engine <--> Persistence
    Sess --> ST
    Comp --> ST
```
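The Classifier, Scorer, and Composer stages in the diagram can be illustrated with a toy Python pipeline. Everything here is an assumption made for the sake of the example: the keyword-based classification, the score values, the fixed boost for lines that mention a hot file, and the threshold are all invented, and OMNI's real engine is certainly more involved.

```python
from dataclasses import dataclass
from typing import List, Set

BASE_SCORES = {"error": 1.0, "warning": 0.5, "noise": 0.1}  # invented values


@dataclass
class Segment:
    text: str
    kind: str
    score: float = 0.0


def classify(line: str) -> Segment:
    """Classifier: label a line by naive keyword matching."""
    lowered = line.lower()
    if "error" in lowered:
        return Segment(line, "error")
    if "warning" in lowered:
        return Segment(line, "warning")
    return Segment(line, "noise")


def score(segment: Segment, hot_files: Set[str]) -> Segment:
    """Scorer: base score by kind, plus a context boost for hot files."""
    segment.score = BASE_SCORES[segment.kind]
    if any(path in segment.text for path in hot_files):
        segment.score += 0.5
    return segment


def compose(segments: List[Segment], threshold: float = 0.5) -> str:
    """Composer: keep only segments at or above the threshold."""
    return "\n".join(s.text for s in segments if s.score >= threshold)


def distill(output: str, hot_files: Set[str]) -> str:
    scored = [score(classify(line), hot_files) for line in output.splitlines()]
    return compose(scored)
```

The context boost is what separates this from plain pattern filtering: a line that would otherwise count as noise survives if it mentions a file the session is actively working on.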

Development

OMNI is built for high-performance AI workflows with professional standards.

```bash
make ci             # Run fmt, clippy, tests, and security audit
cargo build         # Build the binary
cargo test          # Run the full test suite
cargo insta review  # Review and accept snapshot changes
```

See docs/TESTING.md for a detailed breakdown of our suite of 190+ tests covering Context Safety, E2E Hooks, Security, and Performance Assertions.

See CLAUDE.md, CONTRIBUTING.md, and Critical Guardrails for the full contributor guide and architectural rules.


License

MIT © Fajar Hidayat
