agents-remember-md

Health Warn

- No license — Repository has no license file

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Low visibility — Only 5 GitHub stars

Code Warn

- Code scan incomplete — No supported source files were scanned during light audit

Permissions Pass

- Permissions — No dangerous permissions requested

This tool provides a persistent memory system for coding agents like Claude Code, Cursor, and GitHub Copilot. It uses sidecar markdown files to store project context and conventions, allowing agents to retain knowledge about a codebase across different sessions.

Security Assessment

The overall security risk is Low. The project is essentially a structured set of markdown files, conventions, and prompts rather than executable software. It has no software dependencies, does not request dangerous permissions, and does not execute shell commands or make network requests. No hardcoded secrets were found. However, the automated code scan could not verify the contents of every file, so users should quickly review the imported markdown files to ensure they do not contain malicious prompt injections meant to manipulate the coding agent.

Quality Assessment

The project is actively maintained, with its most recent push happening today. However, it has low community visibility, currently sitting at only 5 GitHub stars. Additionally, the repository lacks a formal license file. While the creator notes that this tool is built on "Skills for Claude Code, Cursor, VS Code etc.," the language compatibility and specific agent configurations vary. Because it is a convention-based framework, its overall effectiveness depends entirely on how well your specific AI agent adheres to the provided markdown instructions.

Verdict

Safe to use, but be aware of the unlicensed status and minimal community validation.

Gives coding agents persistent project memory across sessions. Per-file sidecars with zero bloat. No hallucinations. Your agents will always know the caveats in your code and be aware of distant but connected pieces, even across system borders. Plus built-in drift detection.

Agents Remember

My agent keeps forgetting everything. So I made it write notes to its future self.

Every source code file has a companion markdown file. The agent opens both.

Techstack

Skills for Claude Code, Cursor, VS Code, etc. No software dependencies.

Just markdown files and conventions.

Quickstart

Clone this repository next to your existing code repositories, not inside any of them. The onboarding tree lives here and mirrors each code repo by name:

    projects/
      agents-remember/        ← this repo
        AGENTS.md
        onboarding/
          my-app/
            overview.md
      my-app/                 ← your existing repo
        src/

The steps are the same regardless of which tool you use:

- Wire up the agent so it reads `AGENTS.md` from this repo at session start (tool-specific instructions below).

- Run `C-03-repo-bootstrap` to scaffold the initial onboarding structure in `onboarding/my-app/`. A bare `overview.md` is enough; the agent fills in depth as it works.

- Start using the agent normally. Chat handles most tasks. The agent reads companion files alongside source files and updates them as it goes.

- Escalate to `W-02-light-task-workflow` or `W-01-heavy-task-workflow` when the task needs a written plan or needs to survive beyond a single session.

Coverage builds from real work. The first task on any file writes the companion; every task after reads it.

Claude Code

Add a CLAUDE.md at the root of each code repository:

    # my-app

    @../agents-remember/AGENTS.md

Claude Code imports the file into context at session start. When a skill applies, the agent reads the corresponding SKILL.md using its normal file tools — no extra configuration needed since agents-remember is already accessible on disk.

Cursor

Create .cursor/rules/agents-remember.mdc in your code repository:

    ---
    description: Agents Remember memory system conventions
    alwaysApply: true
    ---

    @../agents-remember/AGENTS.md

Alternatively, use Cursor's built-in GitHub import to sync rules directly from this repo. Skills are read on demand by the agent using standard file access.

VS Code + GitHub Copilot

Open (or create) a .code-workspace file that includes both repositories as folders. Copilot needs the skills directories listed explicitly in chat.agentSkillsLocations — without this setting it won't discover them:

    {
      "folders": [{ "path": "agents-remember" }, { "path": "my-app" }],
      "settings": {
        "chat.agentSkillsLocations": {
          "agents-remember/skills": true,
          "agents-remember/skills/U-01-core-skills": true,
          "agents-remember/skills/W-01-heavy-task-workflow": true,
          "agents-remember/skills/W-02-light-task-workflow": true,
          "agents-remember/skills/P-00-creation": true,
          "agents-remember/skills/P-01-research": true,
          "agents-remember/skills/P-02-synthesis": true,
          "agents-remember/skills/P-03-design": true,
          "agents-remember/skills/P-04-planning": true,
          "agents-remember/skills/P-05-implementation": true,
          "agents-remember/skills/P-06-closing": true,
          "agents-remember/skills/P-99-review": true
        }
      }
    }

You can add a .github/copilot-instructions.md in the code repo to layer on any repo-specific overrides.

Windsurf

Add both repositories to your workspace. Windsurf automatically discovers AGENTS.md files within the workspace tree and reads skills on demand from there. You can add repo-specific additions in .windsurf/rules/*.md inside the code repo if needed.

What this is

Most AI coding systems give you a workflow. This one gives you a persistent memory layer for your codebase, and three ways to interact with it.

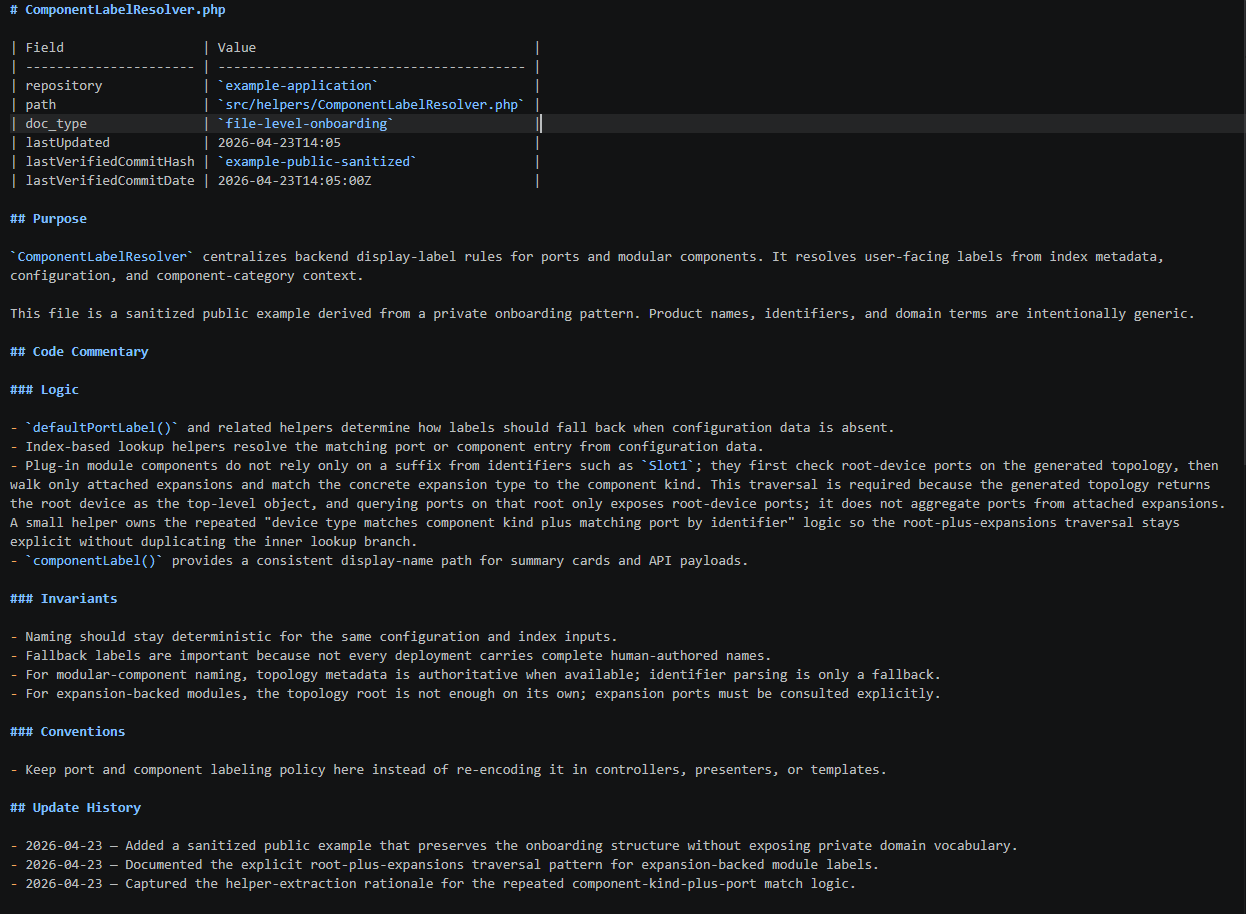

The memory layer is a shadow documentation tree that mirrors your source tree one-to-one. For src/Backend/UserController.php there's an onboarding/src/Backend/UserController.md. No search, no retrieval, no embedding — the doc path is derived from the code path. An agent reading a source file opens its companion file alongside. The companion captures what code can't say on its own: invariants the code assumes, conventions with social rather than syntactic enforcement, the intent behind a pattern, and cross-repo contracts that live between two repositories and are owned by neither.
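The path derivation described above can be sketched in a few lines of Python. This is an illustration only: the function name `companion_path` and the `onboarding_root` parameter are hypothetical, and the rule (keep the directory layout, swap the extension for `.md`) is inferred from the single `UserController` example rather than taken from the skills themselves.

```python
from pathlib import PurePosixPath


def companion_path(source_path: str, onboarding_root: str = "onboarding") -> str:
    """Map a repo-relative source path to its companion markdown path.

    Assumption: the companion keeps the source's directory layout and
    replaces the file extension with ".md", as in the README's example.
    """
    source = PurePosixPath(source_path)
    if source.is_absolute():
        raise ValueError("expected a path relative to the repository root")
    return str(PurePosixPath(onboarding_root) / source.with_suffix(".md"))


print(companion_path("src/Backend/UserController.php"))
# onboarding/src/Backend/UserController.md
```

With the Quickstart layout, the root would presumably include the repo name, for example `companion_path("src/index.ts", "onboarding/my-app")`. Note that under this simplified rule two source files that differ only by extension would map to the same companion; how the real skills handle that case is not stated here.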

The memory layer is the product. Everything else in this repo is a way to interact with it.

The three modes

Most tasks don't need a framework. They need an agent that already knows the codebase. That's what the memory layer provides, and that's why the default mode is just chat.

| Mode | When | What the agent does |
|---|---|---|
| Chat (default) | Simple tasks that fit in one session | Reads onboarding alongside code, proposes changes with code examples in chat, implements on approval, updates onboarding |
| Light task | Medium tasks, or tasks likely to outlive one session | Writes a single-page plan to a task file, gets approval, implements, updates onboarding |
| Heavy task | Migrations, cross-repo contracts, changes where "looks right, breaks in production" would be catastrophic | Seven phases with review gates and adversarial checkpoints, projected code+intent before touching real code, task-local docs that promote into onboarding only after implementation is approved |

All three modes share the same three-part discipline:

- Drift check before planning. Before the agent plans against an onboarding file, it verifies the file isn't stale against the source. The `C-02-onboarding-drift-detection` skill runs this check and classifies trust.

- Approval before implementation. The agent proposes changes. The developer approves. No implicit approval, no "I'll just make this small edit."

- Onboarding update after approved changes. Onboarding reflects approved code, not speculation. The update happens after the developer approves the change, not before.

The modes differ in how approval happens — a chat turn, a task file review, a phase-gate checkpoint — not in what the discipline is. One system at three resolutions.

In chat mode, the whole loop is small enough to state in full. It lives in AGENTS.md and reads:

1. When planning code changes against onboarding documentation, invoke `C-02-onboarding-drift-detection` to find drifted onboardings for the files in question. Do not plan against drifted or missing-verification onboarding until the drift report has been handed off to `C-05-create-or-update-onboarding-files` or the caller has explicitly accepted directional-only trust.

2. Once planned, show the changes to the developer in chat including code examples for every distinct change you intend to make. Wait for explicit developer approval before changing any code.

3. After approval, apply the code changes, update the onboarding documentation, and use the appropriate code quality checks from `docs/tools.md`.

No task folder, no phase structure. The same discipline the heavier modes enforce through artifacts is carried by chat turns.
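To make the ordering of the three steps concrete, here is a minimal Python sketch of the loop as a pair of gates. It is not how the agent is implemented (the real loop is prose in `AGENTS.md` that the agent follows); `run_chat_loop`, `ChatLoopResult`, and the two callbacks are hypothetical names, and the sketch leaves out the "directional-only trust" escape hatch.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ChatLoopResult:
    applied: bool
    log: list[str] = field(default_factory=list)


def run_chat_loop(
    files: list[str],
    find_drifted: Callable[[list[str]], list[str]],
    developer_approves: Callable[[str], bool],
    proposal: str,
) -> ChatLoopResult:
    """Walk the three chat-mode steps in order and stop at the first closed gate."""
    result = ChatLoopResult(applied=False)

    # Step 1: drift check before planning.
    drifted = find_drifted(files)
    if drifted:
        result.log.append(f"blocked: refresh onboarding for {drifted}")
        return result

    # Step 2: show the proposal and wait for explicit approval.
    if not developer_approves(proposal):
        result.log.append("blocked: developer did not approve")
        return result

    # Step 3: only now change code, then onboarding, then run quality checks.
    result.log += ["apply code changes", "update onboarding", "run quality checks"]
    result.applied = True
    return result
```

The point of the sketch is the early returns: nothing in step 3 can run unless both earlier gates have passed, which is the same discipline the heavier modes enforce through task files and checkpoints.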

Why the memory layer changes things

An AI coding session without persistent memory starts every task from scratch. It re-reads files it read last session, re-discovers cross-repo contracts it found before, re-infers invariants that nobody wrote down. All of that rediscovery consumes context window — and context-window degradation is measurable and severe. Du et al. (EMNLP 2025) showed model accuracy drops 14–85% as input length grows even when the answer is perfectly retrievable. Liu et al. (TACL 2024) showed models attend poorly to the middle of their context, with more than 30% accuracy loss for information placed mid-window. Ord's Half-Life of AI Agent Success Rates found that doubling task duration quadruples failure rate, because each mistake forces correction work that adds more noise.

Persistent memory attacks this at the root. The agent doesn't rediscover — it reads a small, relevant, curated set of companion files and starts with context already loaded. Cross-repo contracts, invariants, and migration direction are visible at read time instead of reconstructed at runtime. The first task on an area pays for the companion file. Every task after that benefits from it.

The same properties that make companion files useful to agents make them useful to developers. When returning to old code months later, reading the captured intent reconstructs context faster than re-reading the code. New engineers read the companion next to the file and see invariants, conventions, and cross-repo edges in one place instead of hunting through wikis and Slack archives.

What makes the memory layer honest

Memory systems fail in two ways. They go stale (the code moves, the docs don't). They get polluted with speculation (an agent writes what it planned to build, not what exists). This system addresses both:

Staleness. Each companion file records the git commit of its source file at last verification. Before any planning work, a diff against that hash tells the agent whether the file has changed. Stale companions are flagged and refreshed before the agent plans against them. This is C-02-onboarding-drift-detection, and it runs as the first step of every mode.
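A rough Python sketch of such a check follows. Several things here are assumptions made for illustration: the `verified-commit:` marker line is a hypothetical format (the real companion template may record the hash differently), the function names are invented, and the `verified` label is mine, while `drifted` and `missing-verification` echo the wording in `AGENTS.md`. The only external call is `git diff --quiet`, which exits with 0 when there is no difference and 1 when there is.

```python
import re
import subprocess
from typing import Callable, Optional

# Hypothetical marker format; the real companion template may differ.
VERIFIED_RE = re.compile(r"^verified-commit:\s*([0-9a-f]{7,40})\s*$", re.MULTILINE)


def recorded_commit(companion_text: str) -> Optional[str]:
    """Return the commit hash recorded in a companion file, if any."""
    match = VERIFIED_RE.search(companion_text)
    return match.group(1) if match else None


def source_changed_since(commit: str, source_path: str, repo_dir: str = ".") -> bool:
    """True if source_path differs between the recorded commit and HEAD."""
    proc = subprocess.run(
        ["git", "diff", "--quiet", commit, "HEAD", "--", source_path],
        cwd=repo_dir,
    )
    if proc.returncode not in (0, 1):
        raise RuntimeError(f"git diff failed with exit code {proc.returncode}")
    return proc.returncode == 1


def classify_trust(
    companion_text: str,
    source_path: str,
    changed: Callable[[str, str], bool] = source_changed_since,
) -> str:
    """Classify a companion as verified, drifted, or missing-verification."""
    commit = recorded_commit(companion_text)
    if commit is None:
        return "missing-verification"
    return "drifted" if changed(commit, source_path) else "verified"
```

Because the comparison is against `HEAD`, this version only sees committed changes; uncommitted edits in the working tree would need a separate check.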

Pollution. The approval gate is global: no unapproved work goes into onboarding. In chat mode, the gate is the developer's approval turn. In light task, it's approval of the plan and of the implementation. In heavy task, it's the promotion step at Closure after CP5 passes. Task-local artifacts — input documentation, projected outputs, implementation plans — stay task-local until implementation is approved. Only then does anything reach the canonical onboarding tree.

Both guarantees hold across all three modes. The memory layer only accepts validated history, the same discipline git applies to main.
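The promotion step behind the pollution guarantee can be pictured as a guarded copy. The Python sketch below is an assumption-laden illustration, not the repository's mechanism: `promote`, its parameters, and the idea that task-local docs are plain `.md` files mirroring the onboarding layout are all hypothetical.

```python
import shutil
from pathlib import Path


def promote(task_docs: Path, onboarding: Path, implementation_approved: bool) -> list[Path]:
    """Copy task-local markdown into the canonical tree, but only after approval."""
    if not implementation_approved:
        return []  # nothing unapproved reaches the onboarding tree

    promoted = []
    for doc in sorted(task_docs.rglob("*.md")):
        # Keep the same relative layout under the canonical root.
        target = onboarding / doc.relative_to(task_docs)
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copyfile(doc, target)
        promoted.append(target)
    return promoted
```

Calling `promote(task_dir, onboarding_dir, implementation_approved=False)` returns an empty list and leaves the onboarding tree untouched, so a rejected or unfinished task cannot leak speculative notes into it.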

Repository bootstrapping

Companion files don't need to exist before you can use the system. A repo with no onboarding can start with a bare overview.md and be scaffolded by using the C-03-repo-bootstrap skill. From there it can grow organically as tasks touch new areas. The first task on a file pays the cost of writing its companion; every task after that benefits.

For bulk coverage, the C-03-repo-bootstrap skill can do more. After overview.md you can scaffold an entire repo in phases: start with the hotspots, then go into detail where needed. Bootstrapping hundreds of files in a single session is practical on current models using sub-agents and parallelism.

What's in this repo

- `skills/W-01-heavy-task-workflow/`: the seven-phase workflow for high-stakes tasks

- `skills/W-02-light-task-workflow/`: the single-page-plan workflow for medium tasks

- `skills/U-01-core-skills/`: supporting skills used by all modes:

  - `C-02-onboarding-drift-detection`: staleness detection (used by every mode)

  - `C-03-repo-bootstrap`: scaffold onboarding for an existing repo

  - `C-04-discovery`: top-down reading order for unfamiliar code

  - `C-05-create-or-update-onboarding-files`: the onboarding file template and maintenance

- `skills/P-99-review/`: the adversarial review package used by heavy task

- `AGENTS.md`: operational principles, including the chat-mode loop

- `onboarding/heavy-task-workflow/`: this workflow's self-documentation, written in its own format

Comparison

The fundamental choice isn't "task workflow or memory system" — it's whether you want infrastructure that compounds across tasks or infrastructure that regenerates per project.

| | Task workflow systems (BMAD, GSD, Spec Kit) | Memory systems (graphify, Ix, claude-mem) | This system |
|---|---|---|---|
| Persistent knowledge of existing code | No | Yes | Yes |
| Workflow is optional | No, the framework is the product | N/A | Yes, the default mode is chat |
| Retrieval model | N/A | Search / graph query / embeddings | Path-derived: doc path mirrors code path |
| Cross-repo edges first-class | No | Usually no | Yes |
| Promotion gate for new knowledge | N/A | No | Yes: task-local → canonical after approval |
| Staleness detection | None | Varies | Git-anchored, per-file |
| Substrate | Files, various formats | Opaque backend or derived | Markdown in git, human-readable |
| Infrastructure compounds across tasks | No, regenerated per project | Yes | Yes |
