output

Health: Passed

- License — License: Apache-2.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 80 GitHub stars

Code: Passed

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions: Passed

- Permissions — No dangerous permissions requested

This is an open-source TypeScript framework designed to help developers build AI workflows and agents. It provides a unified workspace to manage prompts, evaluations, cost tracking, and tracing, and is specifically optimized for AI coding assistants like Claude Code.

Security Assessment

Overall Risk: Low. The automated code scan of 12 files found no dangerous patterns and identified no hardcoded secrets. While the tool inherently interacts with external LLM providers, it does so as part of its core function, meaning network requests to APIs (like OpenAI or Anthropic) are expected. It does not request dangerous system permissions or execute hidden shell commands. As with any AI orchestration framework, you will be trusting it with your API keys, so standard environment variable security practices are recommended.

Quality Assessment

The project appears to be in active development, with its last repository push occurring today. It has 80 GitHub stars, a modest signal of early community interest. It is released under the Apache-2.0 license, a permissive license that allows commercial use and modification. The repository has a description and outlines its features and intended use cases.

Verdict

Safe to use.


Output

The open-source TypeScript framework for building AI workflows and agents. Designed for Claude Code — describe what you want, Claude builds it, with all the best practices already in place.

One framework. Prompts, evals, tracing, cost tracking, orchestration, credentials. No SaaS fragmentation. No vendor lock-in. Everything in your codebase, everything your AI coding agent can reach.

Why Output

Every piece of the AI stack is becoming a separate subscription. Prompts in one tool. Traces in another. Evals in a third. Cost tracking across five dashboards. None of them talk to each other. Half of them will get acquired or shut down before your product ships.

Output brings everything together. One TypeScript framework, extracted from thousands of production AI workflows. Best practices baked in so beginners ship professional code from day one, and experienced AI engineers stop rebuilding the same infrastructure.

Build AI using AI

Output is the first framework designed for AI coding agents. The entire codebase is structured so Claude Code can scaffold, plan, generate, test, and iterate on your workflows. Every workflow is a folder — code, prompts, tests, evals, traces, all together. Your agent reads one folder and has full context.

Own your prompts

.prompt files with YAML frontmatter and Liquid templating. Version-controlled, reviewable in PRs, deployed with your code. Switch providers by changing one line. No subscription needed to manage your own prompts.

See everything that happens

Every LLM call, HTTP request, and step traced automatically. Token counts, costs, latency, full prompt/response pairs. JSON in logs/runs/. Zero config. Claude Code analyzes your traces and fixes issues — because the data is in your file system.
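As a rough illustration of how per-call token counts turn into a cost figure, here is a minimal sketch. The `LlmCallTrace` shape, the `PRICES` table, and both function names are assumptions made for this example; they are not Output's trace schema, and the prices are placeholders rather than real provider rates.

```typescript
// Hypothetical shape of one traced LLM call (not Output's actual trace schema).
interface LlmCallTrace {
  model: string;
  inputTokens: number;
  outputTokens: number;
  latencyMs: number;
}

// Price per million tokens, in USD.
interface ModelPrice {
  inputPerMTok: number;
  outputPerMTok: number;
}

// Placeholder prices for illustration only; use your provider's current rates.
const PRICES: Record<string, ModelPrice> = {
  "example-model": { inputPerMTok: 3, outputPerMTok: 15 },
};

// Cost of a single call: tokens multiplied by the per-million-token price.
function callCostUsd(call: LlmCallTrace, prices: Record<string, ModelPrice>): number {
  const price = prices[call.model];
  if (!price) throw new Error(`No price configured for model: ${call.model}`);
  const scaled = call.inputTokens * price.inputPerMTok + call.outputTokens * price.outputPerMTok;
  return scaled / 1_000_000;
}

// Aggregate tokens, cost, and latency across every call in a run.
function summarizeRun(calls: LlmCallTrace[], prices: Record<string, ModelPrice>) {
  let totalTokens = 0;
  let totalCostUsd = 0;
  let totalLatencyMs = 0;
  for (const call of calls) {
    totalTokens += call.inputTokens + call.outputTokens;
    totalCostUsd += callCostUsd(call, prices);
    totalLatencyMs += call.latencyMs;
  }
  return { totalTokens, totalCostUsd, totalLatencyMs };
}
```

Because the inputs are plain JSON-like records, the same arithmetic could be applied to trace files read from disk once their actual field names are known.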

Test AI like software

LLM-as-judge evaluators with confidence scores. Inline evaluators for production retry loops. Offline evaluators for dataset testing. Deterministic assertions and subjective quality judges.

Use any model

Anthropic, OpenAI, Azure, Vertex AI, Bedrock. One API. Structured outputs, streaming, tool calling — all work the same regardless of provider.

Scale without worrying

Temporal under the hood. Automatic retries with exponential backoff. Workflow history. Replay on failure. Child workflows. Parallel execution with concurrency control. You don't think about Temporal until you need it — then it's already there.
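To make "retries with exponential backoff" concrete, the sketch below shows the general technique in plain TypeScript. It is not how Output or Temporal implement retries; the `RetryPolicy` field names loosely mirror Temporal's retry options, and the functions `backoffDelayMs` and `retryWithBackoff` are hypothetical names chosen for this example.

```typescript
interface RetryPolicy {
  initialIntervalMs: number;  // delay before the first retry
  backoffCoefficient: number; // multiplier applied after each failed attempt
  maximumIntervalMs: number;  // upper bound on any single delay
  maximumAttempts: number;    // total attempts, including the first one
}

// Delay before retry number `retry` (1-based), capped at the maximum interval.
function backoffDelayMs(policy: RetryPolicy, retry: number): number {
  const raw = policy.initialIntervalMs * Math.pow(policy.backoffCoefficient, retry - 1);
  return Math.min(raw, policy.maximumIntervalMs);
}

const defaultSleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Run `fn`, retrying on any thrown error until it succeeds or attempts run out.
// `sleep` is injectable so the loop can be exercised without real waiting.
async function retryWithBackoff<T>(
  fn: () => Promise<T>,
  policy: RetryPolicy,
  sleep: (ms: number) => Promise<void> = defaultSleep,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= policy.maximumAttempts; attempt++) {
    try {
      return await fn();
    } catch (err) {
      lastError = err;
      if (attempt < policy.maximumAttempts) {
        await sleep(backoffDelayMs(policy, attempt));
      }
    }
  }
  throw lastError;
}
```

With an initial interval of 1000 ms, a coefficient of 2, and a cap of 10000 ms, the delays grow as 1000, 2000, 4000, 8000 and then stay at 10000.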

Keep secrets secret

AI apps need a lot of API keys. Sharing .env files is risky, and coding agents shouldn't see your secrets. Output encrypts credentials with AES-256-GCM, scoped per environment and workflow, managed through the CLI. No external vault subscription needed.
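For readers unfamiliar with AES-256-GCM, the following sketch shows an authenticated encrypt/decrypt round trip using Node's built-in `node:crypto` module. It illustrates the cipher only: the `EncryptedSecret` shape and the `encryptSecret`/`decryptSecret` names are assumptions for this example, and Output's own storage format and key management are not shown here.

```typescript
import { createCipheriv, createDecipheriv, randomBytes } from "node:crypto";

// Hypothetical container for an encrypted value; all fields are base64 strings.
interface EncryptedSecret {
  iv: string;
  authTag: string;
  ciphertext: string;
}

function encryptSecret(plaintext: string, key: Buffer): EncryptedSecret {
  if (key.length !== 32) throw new Error("AES-256 requires a 32-byte key");
  // 96-bit nonce; it must never be reused with the same key.
  const iv = randomBytes(12);
  const cipher = createCipheriv("aes-256-gcm", key, iv);
  const ciphertext = Buffer.concat([cipher.update(plaintext, "utf8"), cipher.final()]);
  return {
    iv: iv.toString("base64"),
    authTag: cipher.getAuthTag().toString("base64"),
    ciphertext: ciphertext.toString("base64"),
  };
}

function decryptSecret(secret: EncryptedSecret, key: Buffer): string {
  const decipher = createDecipheriv("aes-256-gcm", key, Buffer.from(secret.iv, "base64"));
  decipher.setAuthTag(Buffer.from(secret.authTag, "base64"));
  // final() throws if the ciphertext or tag was modified.
  const plaintext = Buffer.concat([
    decipher.update(Buffer.from(secret.ciphertext, "base64")),
    decipher.final(),
  ]);
  return plaintext.toString("utf8");
}
```

GCM is an authenticated mode, so tampering with the stored ciphertext causes decryption to fail instead of silently returning corrupted data.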

Quick Start

Prerequisites

- Node.js 20+

- Docker Desktop

- An LLM API key (e.g. Anthropic)

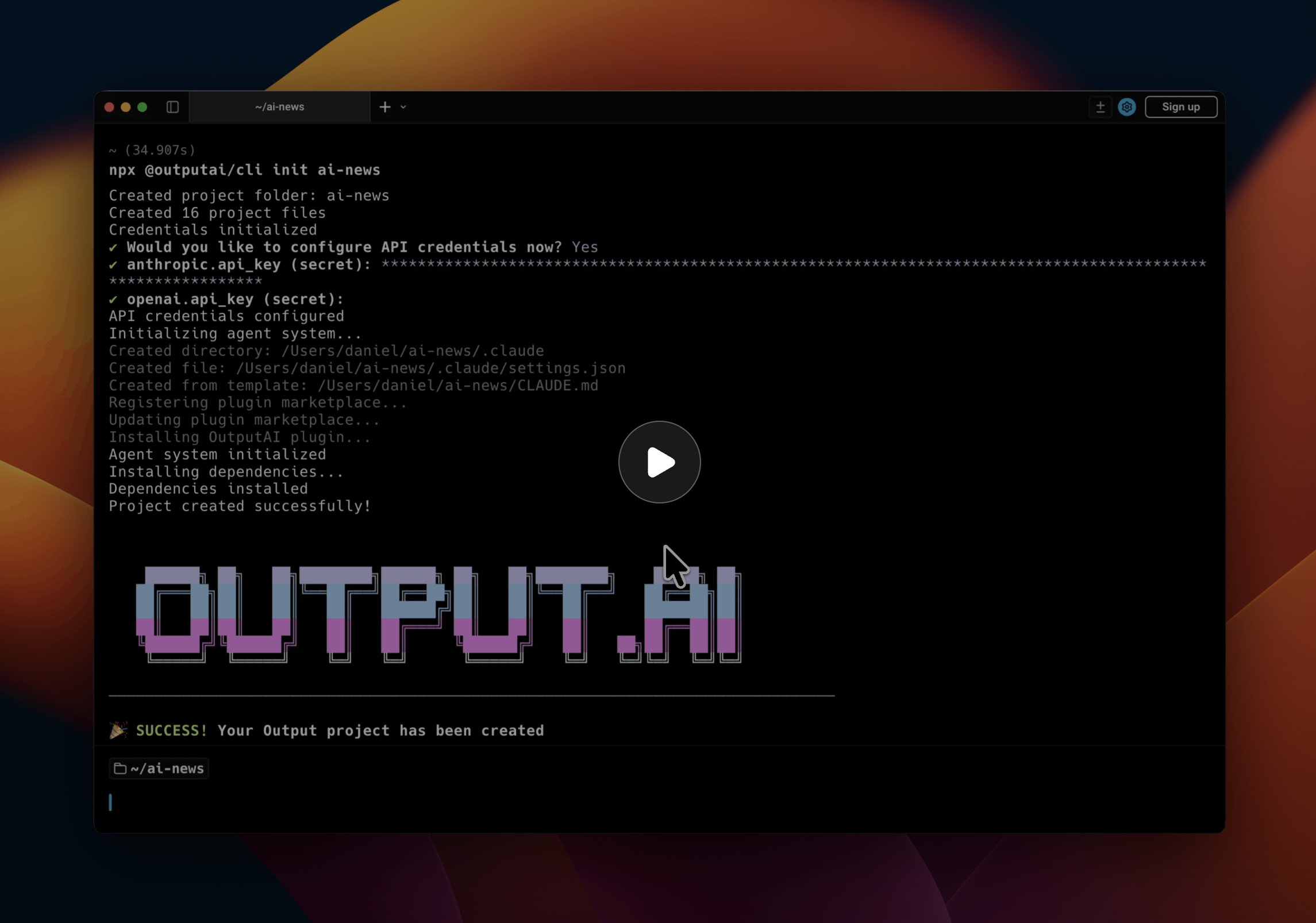

Create your project

npx @outputai/cli init
cd <project-name>

Add your API key to .env:

ANTHROPIC_API_KEY=sk-ant-...

Start developing

npx output dev

This starts the full development environment:

- Temporal server for workflow orchestration

- API server for workflow execution

- Worker with hot reload for your workflows

- Temporal UI at http://localhost:8080

Run your first workflow

npx output workflow run blog_evaluator paulgraham_hwh

Inspect the execution:

npx output workflow debug <workflow-id>

For the full getting started guide, see the documentation.

Core Concepts

Workflows

Orchestration layer — deterministic coordination logic, no I/O.

// src/workflows/research/workflow.ts
workflow({
  name: 'research',
  fn: async (input) => {
    const data = await gatherSources(input);
    const analysis = await analyzeContent(data);
    const quality = await checkQuality(analysis);
    return quality.passed ? analysis : await reviseContent(analysis, quality);
  }
});

Steps

Where I/O happens — API calls, LLM requests, database queries. Each step runs once and its result is cached for replay.

// src/workflows/research/steps.ts
step({
  name: 'gatherSources',
  fn: async (input) => {
    const results = await searchApi(input.topic);
    return { sources: results };
  }
});
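The idea that a step "runs once and its result is cached for replay" can be sketched with a small in-memory history. This is a simplified illustration, not Output's or Temporal's implementation (Temporal persists results in a durable event history); `RunHistory`, `runStep`, and `resetCursor` are hypothetical names.

```typescript
type StepFn<I, O> = (input: I) => Promise<O>;

// Records step results in call order so a replay can reuse them.
class RunHistory {
  private results = new Map<string, unknown>();
  private cursor = 0;

  // Call before replaying so step keys line up with the original execution order.
  resetCursor(): void {
    this.cursor = 0;
  }

  async runStep<I, O>(name: string, fn: StepFn<I, O>, input: I): Promise<O> {
    const key = `${this.cursor++}:${name}`;
    if (this.results.has(key)) {
      // Replay: return the recorded result without performing I/O again.
      return this.results.get(key) as O;
    }
    const result = await fn(input);
    this.results.set(key, result);
    return result;
  }
}
```

This only works when the workflow function is deterministic: it must call the same steps in the same order on replay, which is why the coordination logic itself performs no I/O.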

Prompts

.prompt files with YAML configuration and Liquid templating.

---
provider: anthropic
model: claude-sonnet-4-20250514
temperature: 0
---
<system>You are a research analyst.</system>
<user>Analyze the following sources about {{ topic }}: {{ sources }}</user>
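To show how a file like this splits into configuration and a template, here is a dependency-free sketch. It is deliberately simplified: it reads only flat `key: value` frontmatter lines and substitutes `{{ name }}` placeholders, whereas the framework uses real YAML and Liquid templating. The names `parsePromptFile` and `renderTemplate` are hypothetical and not part of the SDK.

```typescript
interface ParsedPrompt {
  config: Record<string, string>;
  body: string;
}

// Split a prompt source into frontmatter config and template body.
function parsePromptFile(source: string): ParsedPrompt {
  const match = /^---\r?\n([\s\S]*?)\r?\n---\r?\n?([\s\S]*)$/.exec(source);
  if (!match) return { config: {}, body: source };
  const [, header = "", body = ""] = match;
  const config: Record<string, string> = {};
  for (const line of header.split(/\r?\n/)) {
    const idx = line.indexOf(":");
    if (idx === -1) continue; // skip lines that are not key: value pairs
    config[line.slice(0, idx).trim()] = line.slice(idx + 1).trim();
  }
  return { config, body };
}

// Replace {{ name }} placeholders; fail loudly on a missing variable.
function renderTemplate(body: string, variables: Record<string, string>): string {
  return body.replace(/\{\{\s*(\w+)\s*\}\}/g, (_whole: string, name: string) => {
    if (!Object.prototype.hasOwnProperty.call(variables, name)) {
      throw new Error(`Missing template variable: ${name}`);
    }
    return variables[name] ?? "";
  });
}
```

Throwing on a missing variable is a design choice for this sketch; it surfaces typos in variable names early instead of sending a prompt with an empty slot.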

Evaluators

LLM-as-judge evaluation with confidence scores and reasoning.

// src/workflows/research/evaluators.ts
evaluator({
  name: 'checkQuality',
  fn: async (content) => {
    const { output } = await generateText({
      prompt: 'evaluate_quality',
      variables: { content },
      output: Output.object({
        schema: z.object({
          isQuality: z.boolean(),
          confidence: z.number().describe('0-100'),
          reasoning: z.string()
        })
      })
    });
    return new EvaluationBooleanResult({
      value: output.isQuality,
      confidence: output.confidence,
      reasoning: output.reasoning
    });
  }
});
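One way an inline evaluator could drive a production retry loop is sketched below: generate, evaluate, and regenerate with the judge's reasoning as feedback until the result is accepted or attempts run out. The `Evaluation` and `AttemptResult` shapes and the `generateUntilAccepted` function are assumptions for illustration, not SDK APIs; the 0-100 confidence scale follows the evaluator example above.

```typescript
interface Evaluation {
  passed: boolean;
  confidence: number; // 0-100
  reasoning: string;
}

interface AttemptResult<T> {
  value: T;
  evaluation: Evaluation;
  attempts: number;
  accepted: boolean;
}

// Regenerate until the evaluator passes with enough confidence,
// feeding the previous reasoning back into the next generation.
async function generateUntilAccepted<T>(
  generate: (feedback: string | undefined) => Promise<T>,
  evaluate: (candidate: T) => Promise<Evaluation>,
  options: { maxAttempts: number; minConfidence: number },
): Promise<AttemptResult<T>> {
  if (options.maxAttempts < 1) throw new Error("maxAttempts must be at least 1");
  let feedback: string | undefined;
  let attempts = 0;
  while (true) {
    attempts++;
    const value = await generate(feedback);
    const evaluation = await evaluate(value);
    const accepted = evaluation.passed && evaluation.confidence >= options.minConfidence;
    if (accepted || attempts >= options.maxAttempts) {
      // On exhaustion the last candidate is returned with accepted = false.
      return { value, evaluation, attempts, accepted };
    }
    feedback = evaluation.reasoning;
  }
}
```

Returning the last candidate with `accepted: false` leaves the caller to decide whether to fail the workflow or fall back to a revision step.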

SDK Packages

| Package | Description |
|---|---|
| @outputai/core | Workflow, step, and evaluator primitives |
| @outputai/llm | Multi-provider LLM with prompt management |
| @outputai/http | HTTP client with tracing |
| @outputai/cli | CLI for project init, dev environment, and workflow management |

Example Workflows

See test_workflows/ for complete examples:

- Simple — Basic workflow with steps

- HTTP — API integration with HTTP client

- Prompt — LLM generation with prompts

- Evaluation — Quality evaluation workflows

- Stream Text — Streaming text generation

Configuration

LLM Providers

ANTHROPIC_API_KEY=sk-ant-...
OPENAI_API_KEY=sk-...
AZURE_OPENAI_API_KEY=...
AZURE_OPENAI_ENDPOINT=...
# For Amazon Bedrock
AWS_ACCESS_KEY_ID=...
AWS_SECRET_ACCESS_KEY=...
AWS_REGION=us-east-1

Temporal

For local development, output dev handles everything. For production, use Temporal Cloud or self-hosted Temporal:

TEMPORAL_ADDRESS=your-namespace.tmprl.cloud:7233
TEMPORAL_NAMESPACE=your-namespace
TEMPORAL_API_KEY=your-api-key

Tracing

# Local tracing (writes JSON to disk; default under "logs/runs/")
OUTPUT_TRACE_LOCAL_ON=true

# Remote tracing (upload to S3 on run completion)
OUTPUT_TRACE_REMOTE_ON=true
OUTPUT_REDIS_URL=redis://localhost:6379
OUTPUT_TRACE_REMOTE_S3_BUCKET=my-traces

Contributing

git clone https://github.com/growthxai/output-sdk.git
cd output-sdk
pnpm install && npm run build:packages
npm run dev # Start dev environment
npm test # Run tests
npm run lint # Lint code
./run.sh validate # Validate everything

Project structure:

- sdk/: SDK packages (core, llm, http, cli)

- api/: API server for workflow execution

- test_workflows/: Example workflows

License

Apache 2.0 — see LICENSE file.

Acknowledgments

Built with Temporal, Vercel AI SDK, Zod, LiquidJS.
