claude-carbon

Health: Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 30 GitHub stars

Code: Passed

- Code scan — Scanned 9 files during light audit, no dangerous patterns found

Permissions: Passed

- Permissions — No dangerous permissions requested

This tool tracks and estimates the carbon footprint of your Claude Code sessions. It adds a live CO2 estimate to your status line, stores historical data in a local SQLite database, and can generate shareable image reports of your emissions.

Security Assessment

The tool accesses local JSONL transcript files inside the `~/.claude` directory to calculate token usage, which means it interacts with your session history. It executes local shell scripts and modifies your Claude Code settings file to set up hooks and status line integrations. No hardcoded secrets or dangerous permissions were found, and the automated code scan flagged no malicious patterns. However, the recommended installation method pipes a remote script directly into bash (`curl | bash`), which is a common vector for supply-chain attacks. While the tool currently appears safe, this installation method relies on implicitly trusting the repository maintainer. Overall risk: Low.

Quality Assessment

The project is actively maintained, with the most recent push occurring today. It uses the permissive MIT license, which is excellent for open-source adoption. It has garnered 30 GitHub stars, indicating a modest but growing level of community trust and interest. The repository includes a clear description, documentation, and structured release flows.

Verdict

Safe to use, though it is recommended to manually clone and inspect the repository rather than relying on the automated `curl | bash` installation method to ensure ongoing safety.

claude-carbon

Track the carbon footprint of your Claude Code sessions.

🟢 Opus 4.6 (1M context) ░░░░ 6% | $3.20 | 145g CO₂ | claude cowork

What it does

- Adds a live CO2 estimate to the Claude Code status line, next to the session cost

- Persists each session to a local SQLite database

- Backfills historical data from existing `~/.claude` transcripts

- Generates shareable PNG report cards for LinkedIn

- Exposes a `/claude-carbon:report` skill for a full emissions breakdown

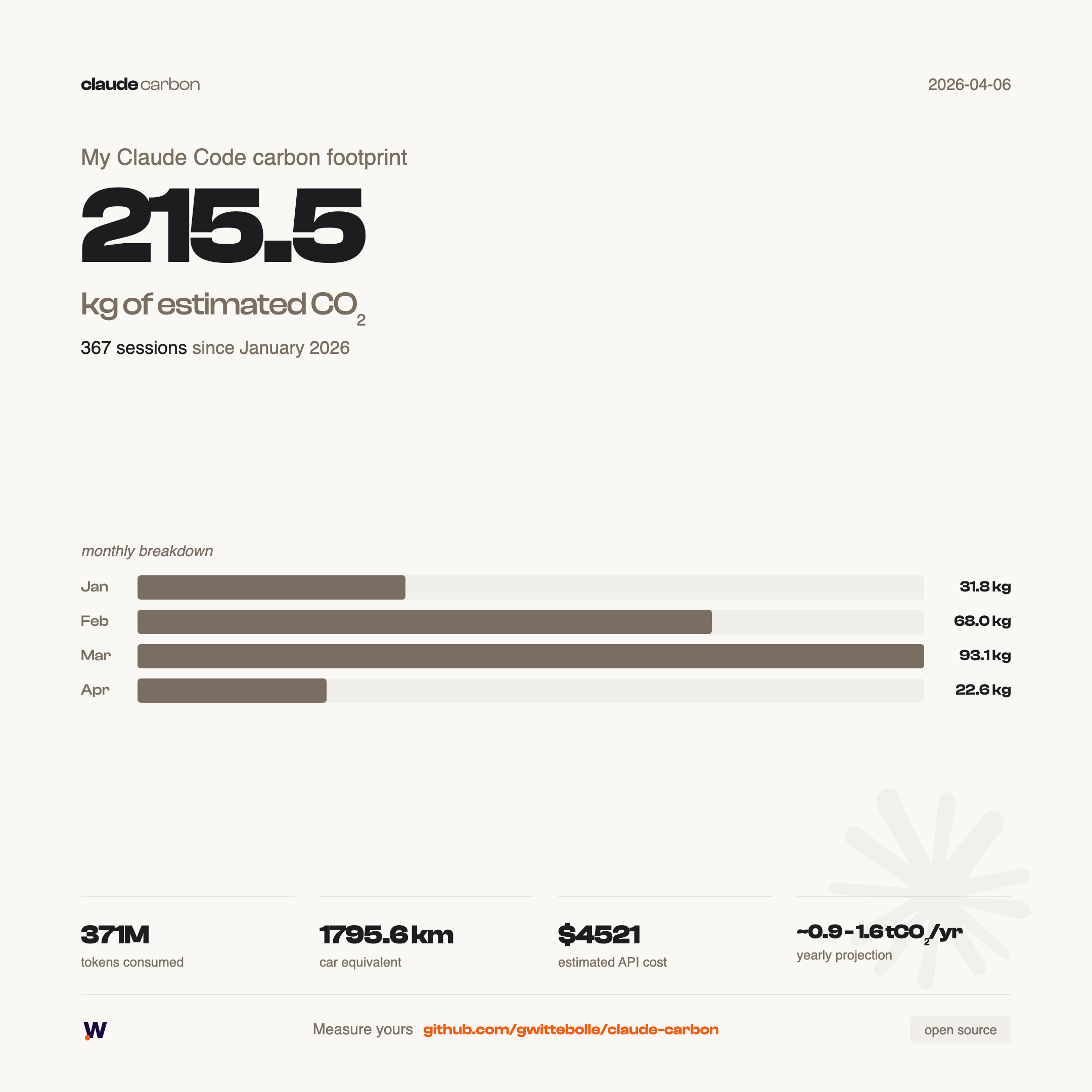

Example report

Generate yours:

# Since January 1st (default)

bash scripts/generate-report.sh

# Since a specific date

bash scripts/generate-report.sh --since 2026-03-01

# All time

bash scripts/generate-report.sh --all

Exports two PNGs to exports/: a summary card and a detailed card with per-project breakdown.
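To give a feel for the kind of query that could sit behind the per-project card, here is a minimal sketch. The `sessions` table and its columns (`project`, `ended_at`, `co2_g`) are hypothetical names chosen for illustration; they are not taken from the repository's actual schema.

```python
import sqlite3

def per_project_totals(conn, since):
    """Return (project, total grams CO2e) rows for sessions on or after `since`."""
    return conn.execute(
        """
        SELECT project, ROUND(SUM(co2_g), 1) AS total_g
        FROM sessions
        WHERE ended_at >= ?
        GROUP BY project
        ORDER BY total_g DESC
        """,
        (since,),
    ).fetchall()

# Hypothetical schema and sample rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sessions (project TEXT, ended_at TEXT, co2_g REAL)")
conn.executemany(
    "INSERT INTO sessions VALUES (?, ?, ?)",
    [
        ("api", "2026-02-10", 40.0),
        ("api", "2026-03-05", 25.5),
        ("web", "2026-03-12", 60.0),
        ("web", "2026-03-20", 10.0),
    ],
)
print(per_project_totals(conn, "2026-03-01"))
```

ISO-formatted dates compare correctly as plain strings, which is why the `--since`-style filter works with a simple `>=`.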

Install

curl -fsSL https://raw.githubusercontent.com/gwittebolle/claude-carbon/main/install.sh | bash

This clones the repo, creates the database, backfills your existing Claude Code sessions, and configures ~/.claude/settings.json automatically. Restart Claude Code and the CO2 estimate appears in the status line.

To install in a custom directory:

curl -fsSL https://raw.githubusercontent.com/gwittebolle/claude-carbon/main/install.sh | CLAUDE_CARBON_DIR=~/my-path/claude-carbon bash

To install manually instead, clone the repository and run the setup script:

git clone https://github.com/gwittebolle/claude-carbon.git ~/code/claude-carbon

bash ~/code/claude-carbon/scripts/setup.sh

Then add to ~/.claude/settings.json:

{

"statusLine": {

"type": "command",

"command": "~/code/claude-carbon/scripts/statusline.sh"

},

"hooks": {

"Stop": [

{

"matcher": "",

"hooks": [

{

"type": "command",

"command": "~/code/claude-carbon/scripts/persist-session.sh"

}

]

}

]

}

}

Restart Claude Code.
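If you already have a settings.json, pasting the snippet by hand risks overwriting existing keys. The sketch below shows one way to merge it in; `add_claude_carbon` is a hypothetical helper, not part of the repository, and it assumes the `~/code/claude-carbon` clone path used above.

```python
import json

STATUSLINE_CMD = "~/code/claude-carbon/scripts/statusline.sh"
PERSIST_CMD = "~/code/claude-carbon/scripts/persist-session.sh"

def add_claude_carbon(settings):
    """Return a copy of `settings` with the status line and Stop hook added."""
    merged = json.loads(json.dumps(settings))  # cheap deep copy of JSON data
    # Note: this replaces any status line that was configured before.
    merged["statusLine"] = {"type": "command", "command": STATUSLINE_CMD}
    stop_hooks = merged.setdefault("hooks", {}).setdefault("Stop", [])
    already_present = any(
        hook.get("command") == PERSIST_CMD
        for entry in stop_hooks
        for hook in entry.get("hooks", [])
    )
    if not already_present:
        stop_hooks.append(
            {"matcher": "", "hooks": [{"type": "command", "command": PERSIST_CMD}]}
        )
    return merged

# Example: existing settings with an unrelated env block are preserved.
existing = {"env": {"MAX_THINKING_TOKENS": "10000"}, "hooks": {"Stop": []}}
updated = add_claude_carbon(existing)
print(json.dumps(updated, indent=2))
```

Running the helper twice leaves a single Stop hook entry, so it is safe to re-apply.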

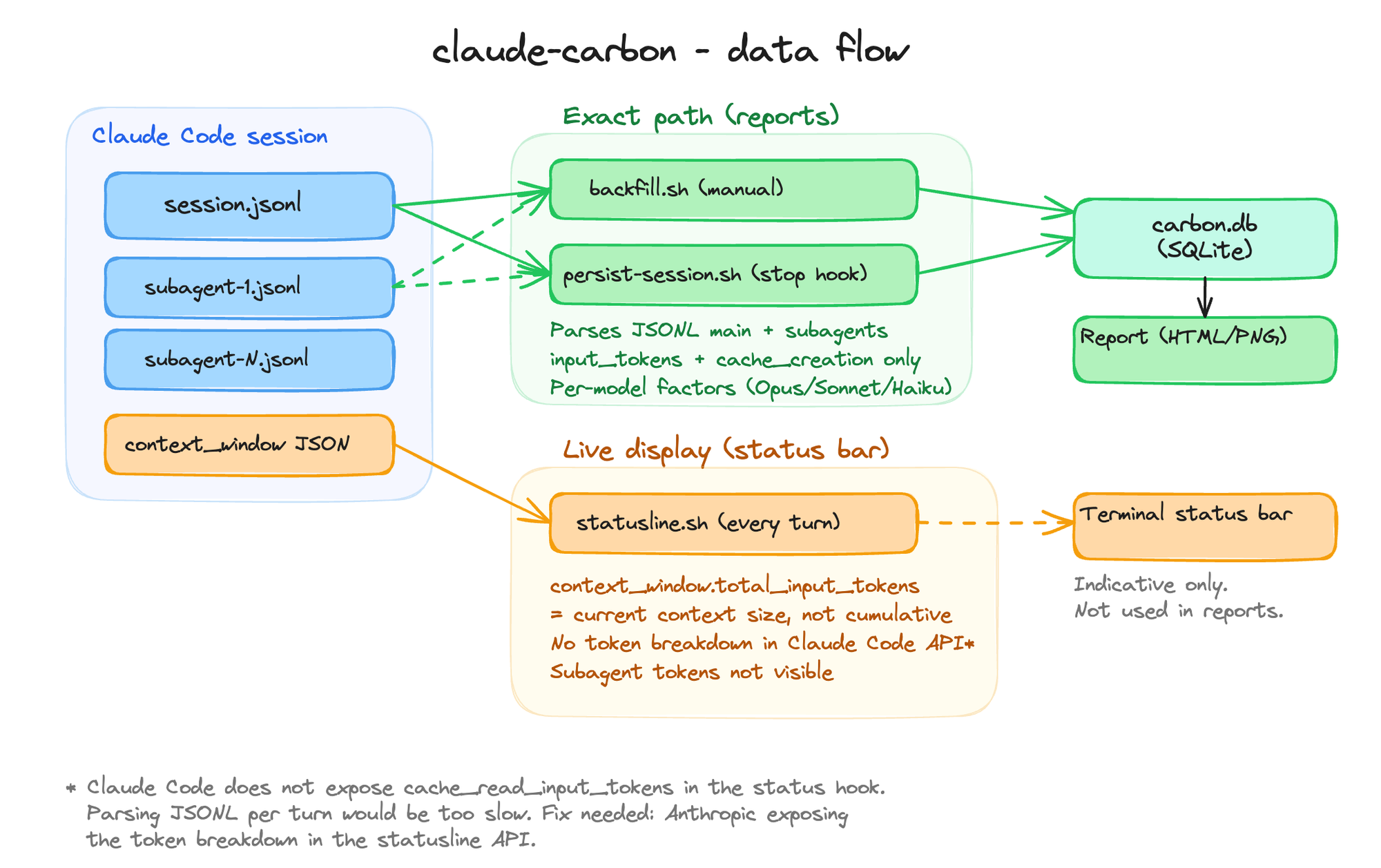

How it works

Three data paths, two levels of accuracy:

| Script | Trigger | Data source | Subagents | Cache reads | Accuracy |
|---|---|---|---|---|---|
| backfill.sh | Manual / setup | JSONL files | Included | Excluded | Best estimate |
| persist-session.sh | Stop hook (session end) | JSONL files | Included | Excluded | Best estimate |
| statusline.sh | Every turn (live) | context_window JSON | Not included | Included* | Approximate |

backfill and persist-session parse the raw JSONL transcripts (main session + subagent files), applying per-model emission factors. Only input_tokens and cache_creation_input_tokens are counted. These feed the SQLite database used by reports.
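The counting rule can be sketched roughly as follows. This is not the repository's shell implementation: it assumes each transcript line is a JSON object carrying `message.model` and `message.usage`, and it assumes output tokens are charged at the output factor. The factor values are the ones listed under Emission factors in this README.

```python
import json

# gCO2e per million tokens, as listed in this README.
FACTORS = {
    "opus": {"input": 500, "output": 3000},
    "sonnet": {"input": 190, "output": 1140},
    "haiku": {"input": 95, "output": 570},
}

def family(model_name):
    """Map a full model id to a factor family; None if unrecognized."""
    lowered = model_name.lower()
    for name in FACTORS:
        if name in lowered:
            return name
    return None

def estimate_gco2e(jsonl_lines):
    """Sum estimated grams of CO2e over transcript lines, skipping cache reads."""
    total = 0.0
    for line in jsonl_lines:
        line = line.strip()
        if not line:
            continue
        message = json.loads(line).get("message")
        if not isinstance(message, dict):
            continue
        usage = message.get("usage")
        fam = family(message.get("model", ""))
        if not usage or fam is None:
            continue  # e.g. user turns, which carry no usage block
        counted_input = usage.get("input_tokens", 0) + usage.get(
            "cache_creation_input_tokens", 0
        )
        # cache_read_input_tokens is deliberately ignored.
        total += counted_input / 1e6 * FACTORS[fam]["input"]
        # Assumption: output tokens are charged at the output factor.
        total += usage.get("output_tokens", 0) / 1e6 * FACTORS[fam]["output"]
    return total

# Hypothetical sample lines: one assistant turn and one user turn.
sample = [
    json.dumps({"message": {"model": "claude-haiku-4-5", "usage": {
        "input_tokens": 1000, "cache_creation_input_tokens": 4000,
        "cache_read_input_tokens": 90000, "output_tokens": 500}}}),
    json.dumps({"type": "user", "message": {"role": "user", "content": "hi"}}),
]
print(round(estimate_gco2e(sample), 4))
```

In the sample, the 90,000 cache-read tokens contribute nothing, which is the main reason the stored figures differ from the live status line.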

statusline reads context_window.total_input_tokens from Claude Code at each turn. This value represents the current context size (not a cumulative total), includes cache reads, and does not account for subagent tokens. It's an indicative live display, not a data source for reports.

*Claude Code does not expose cache_read_input_tokens separately in the statusline JSON. Parsing JSONL incrementally would be too slow for a per-turn display. A proper fix would require Anthropic to expose the token breakdown in the status hook API.

Commands

| Command | What it does |
|---|---|
| setup.sh | Init database, backfill historical sessions, show total |
| statusline.sh | Status line script (called automatically by Claude Code) |
| persist-session.sh | Stop hook (saves session data on exit) |
| backfill.sh | Re-parse all historical JSONL transcripts (incl. subagents) |
| generate-report.sh | Export shareable PNG report cards |
| /claude-carbon:report | In-session text report with totals, equivalences, top sessions |

Emission factors

Factors from Jegham et al. 2025, a peer-reviewed study measuring energy consumption of LLM inference on AWS infrastructure.

| Model | Input (gCO2e/Mtok) | Output (gCO2e/Mtok) | Basis |
|---|---|---|---|
| Opus | 500 | 3000 | Extrapolated (~3x Sonnet) |
| Sonnet | 190 | 1140 | Measured |
| Haiku | 95 | 570 | Extrapolated (0.5x Sonnet) |

Important: these are order-of-magnitude estimates, not precise measurements.

- Sonnet factors are derived from Jegham et al. direct measurements. Opus and Haiku are extrapolated (no public data from Anthropic on per-model energy consumption).

- Cache read tokens are excluded from the calculation (only `input_tokens` and `cache_creation_input_tokens` are counted). Cache reads represent the majority of tokens in Claude Code but consume negligible energy.

- Carbon intensity uses AWS grid-average (0.287 kgCO2e/kWh), not real-time grid data.

- Anthropic does not publish Scope 1, 2, or 3 emissions. These estimates are independent and based on academic research, not provider data.

Factors are editable in data/factors.json. See METHODOLOGY.md for the full scientific basis, formula, and equivalences.
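As a worked example of how a per-million-token factor turns into grams, the sketch below applies the Sonnet factors to a made-up session. The back-calculated energy figure assumes a factor is simply energy multiplied by grid intensity with no other multipliers; that is an assumption made here for illustration, not something quoted from METHODOLOGY.md.

```python
GRID_G_PER_KWH = 287.0  # 0.287 kgCO2e/kWh, the AWS grid average quoted above

# Sonnet factors from the table above, in gCO2e per million tokens.
SONNET = {"input": 190.0, "output": 1140.0}

def grams_co2e(input_tokens, output_tokens, factors):
    """Apply per-million-token factors to raw token counts."""
    return (input_tokens * factors["input"] + output_tokens * factors["output"]) / 1e6

def implied_kwh_per_mtok(factor_g_per_mtok, grid_g_per_kwh=GRID_G_PER_KWH):
    """Energy implied by a factor, ASSUMING factor = energy x grid intensity."""
    return factor_g_per_mtok / grid_g_per_kwh

# Hypothetical session: 2M counted input tokens, 100k output tokens.
session_g = grams_co2e(2_000_000, 100_000, SONNET)
print(f"{session_g:.0f} g CO2e")
print(f"{implied_kwh_per_mtok(SONNET['input']):.2f} kWh per million input tokens")
```

Editing a value in data/factors.json scales the result linearly, so halving a factor halves every estimate that uses it.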

Dependencies

- jq - JSON parsing

- sqlite3 - local database

- playwright-core - PNG export only (optional)

sqlite3 is pre-installed on macOS, and jq ships with recent macOS releases (on older ones: brew install jq). On Debian or Ubuntu: sudo apt install jq sqlite3.

Reduce your footprint

Measuring is step one. Here are concrete levers to reduce your AI carbon footprint, ranked by impact.

Use the right model for the task

Under the emission factors used here, an output token counts for 6x the emissions of an input token (for example 1140 vs 190 gCO2e/Mtok on Sonnet), and Opus is rated at roughly 3x Sonnet per token.

{

"env": {

"CLAUDE_CODE_SUBAGENT_MODEL": "claude-haiku-4-5"

}

}

Use Opus for architecture and planning. Sonnet for daily work. Haiku for subagents (exploration, file reading, reviews). This alone can cut your emissions by about 60% compared with running everything on Opus.
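To see where a figure of that size can come from, the sketch below compares a mixed workload against the same tokens run entirely on Opus. The token split between models is hypothetical; only the factors come from this README.

```python
# (input, output) factors in gCO2e per million tokens, from this README.
FACTORS = {
    "opus": (500, 3000),
    "sonnet": (190, 1140),
    "haiku": (95, 570),
}

def grams(model, input_tokens, output_tokens):
    """Estimated grams of CO2e for one chunk of work on one model."""
    f_in, f_out = FACTORS[model]
    return (input_tokens * f_in + output_tokens * f_out) / 1e6

def reduction_vs_all_opus(workload):
    """Fraction saved versus running every (model, in, out) chunk on Opus."""
    baseline = sum(grams("opus", i, o) for _, i, o in workload)
    actual = sum(grams(m, i, o) for m, i, o in workload)
    return 1 - actual / baseline

# Hypothetical split: a little planning, mostly daily work, some subagents.
workload = [
    ("opus", 1_000_000, 50_000),
    ("sonnet", 6_000_000, 300_000),
    ("haiku", 3_000_000, 150_000),
]
print(f"{reduction_vs_all_opus(workload):.1%}")
```

With this particular split the saving lands a little above 60%; a workload that leans more heavily on Opus would save less.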

Install RTK (Rust Token Killer)

RTK is a CLI proxy that filters noise from shell outputs (progress bars, verbose logs, passing tests) before they hit the context window. 60-90% token reduction on CLI commands, zero quality loss.

brew install rtk-ai/tap/rtk

rtk init -g

Reduce thinking tokens

Claude's extended thinking can use up to 32k hidden tokens per message. Capping it reduces consumption without degrading quality on routine tasks.

{

"env": {

"MAX_THINKING_TOKENS": "10000"

}

}

Compact earlier

By default, Claude Code compacts context at 95% usage. Compacting earlier keeps context cleaner and avoids bloated sessions.

{

"env": {

"CLAUDE_AUTOCOMPACT_PCT_OVERRIDE": "50"

}

}

Write concise instructions

Add to your project's CLAUDE.md:

Be concise. No preamble, no summaries unless asked.

Output tokens are the most expensive in both cost and energy.

Combined impact

| Lever | Estimated reduction |
|---|---|
| Right model per task | -60% vs all-Opus |
| RTK | -70% on CLI tokens |
| Thinking cap at 10k | -70% on thinking tokens |
| Haiku subagents | -80% on exploration |
| All combined | -50 to 70% total |
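The combined figure is lower than most individual rows because each lever only acts on its own slice of the total. A rough sketch of that arithmetic, where the shares of total emissions are hypothetical and assumed not to overlap:

```python
def combined_reduction(levers):
    """levers: list of (share_of_total_emissions, reduction_on_that_share)."""
    return sum(share * cut for share, cut in levers)

# Hypothetical, non-overlapping shares of a session's total emissions.
levers = [
    (0.30, 0.70),  # CLI output tokens, filtered by RTK (-70%)
    (0.20, 0.70),  # thinking tokens, capped at 10k (-70%)
    (0.25, 0.80),  # subagent exploration, moved to Haiku (-80%)
    (0.25, 0.00),  # everything else, left untouched
]
print(f"{combined_reduction(levers):.0%} total reduction")
```

With these made-up shares the total falls inside the 50 to 70% band; your own split will move it up or down.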

Further reading

- IEA - Energy and AI (2025) - data center projections

- Jegham et al. - How Hungry is AI? - per-model energy measurements

- UCL/UNESCO - 90% AI energy reduction - frugal AI approaches

- GreenIT.fr - AI impacts 2025-2030 - French data

Why

Every Claude Code session uses real compute, real energy, real emissions. The number is small per query, but it adds up. Making it visible is the first step to owning it.

Open source

claude-carbon is free and open source under the MIT license. Contributions welcome.

Built by Gaetan Wittebolle.
