DevoChat


Unified Web AI Chat UI & MCP Client



⚠️ Breaking Change:

- The capabilities.image key has been renamed to capabilities.vision.

- The capabilities.inference key has been renamed to capabilities.reasoning.

- Please update your configuration files.

Unified AI Chat Platform

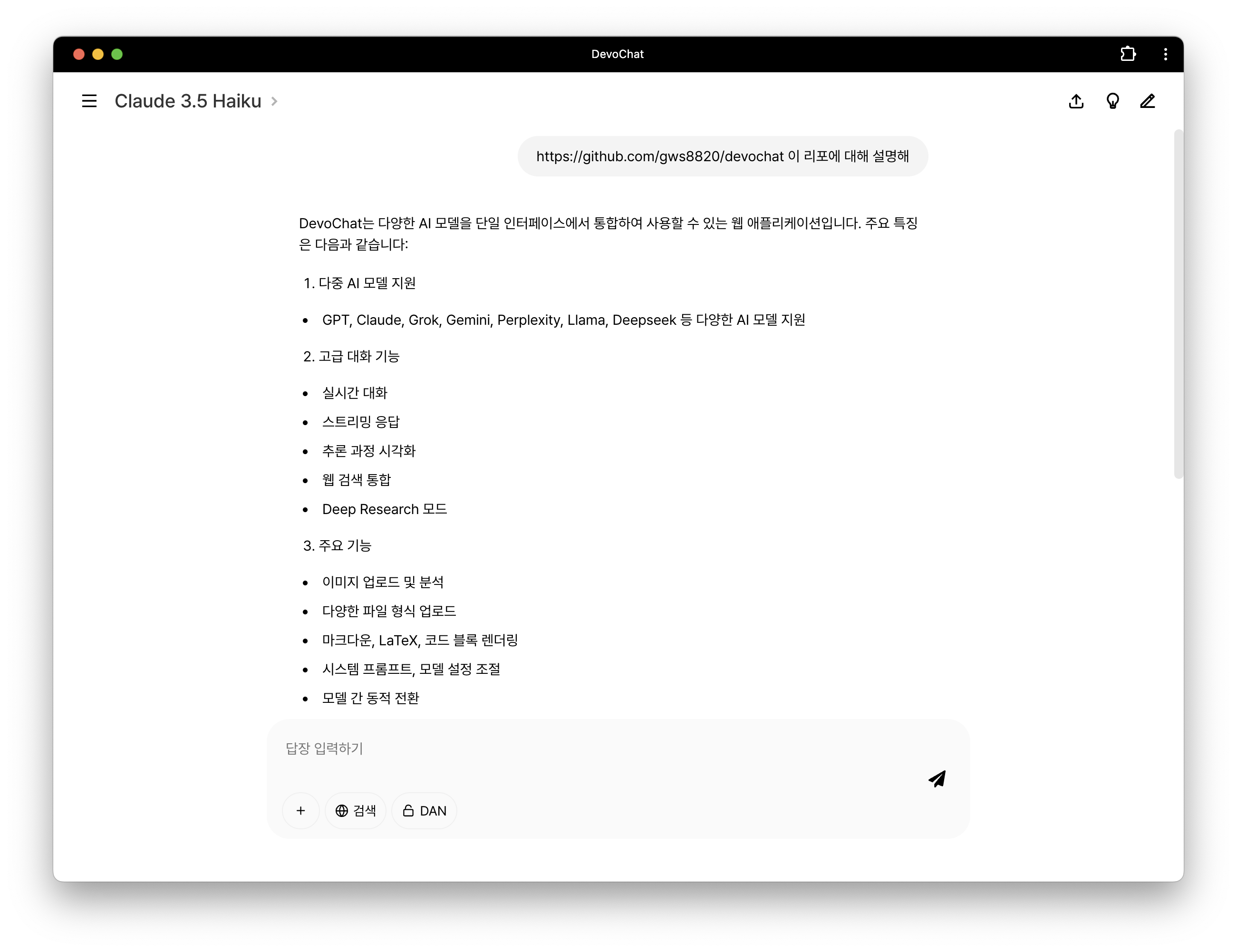

DevoChat is a web application that allows you to use various multimodal AI models and MCP (Model Context Protocol) servers through a single interface. Check out the live demo.

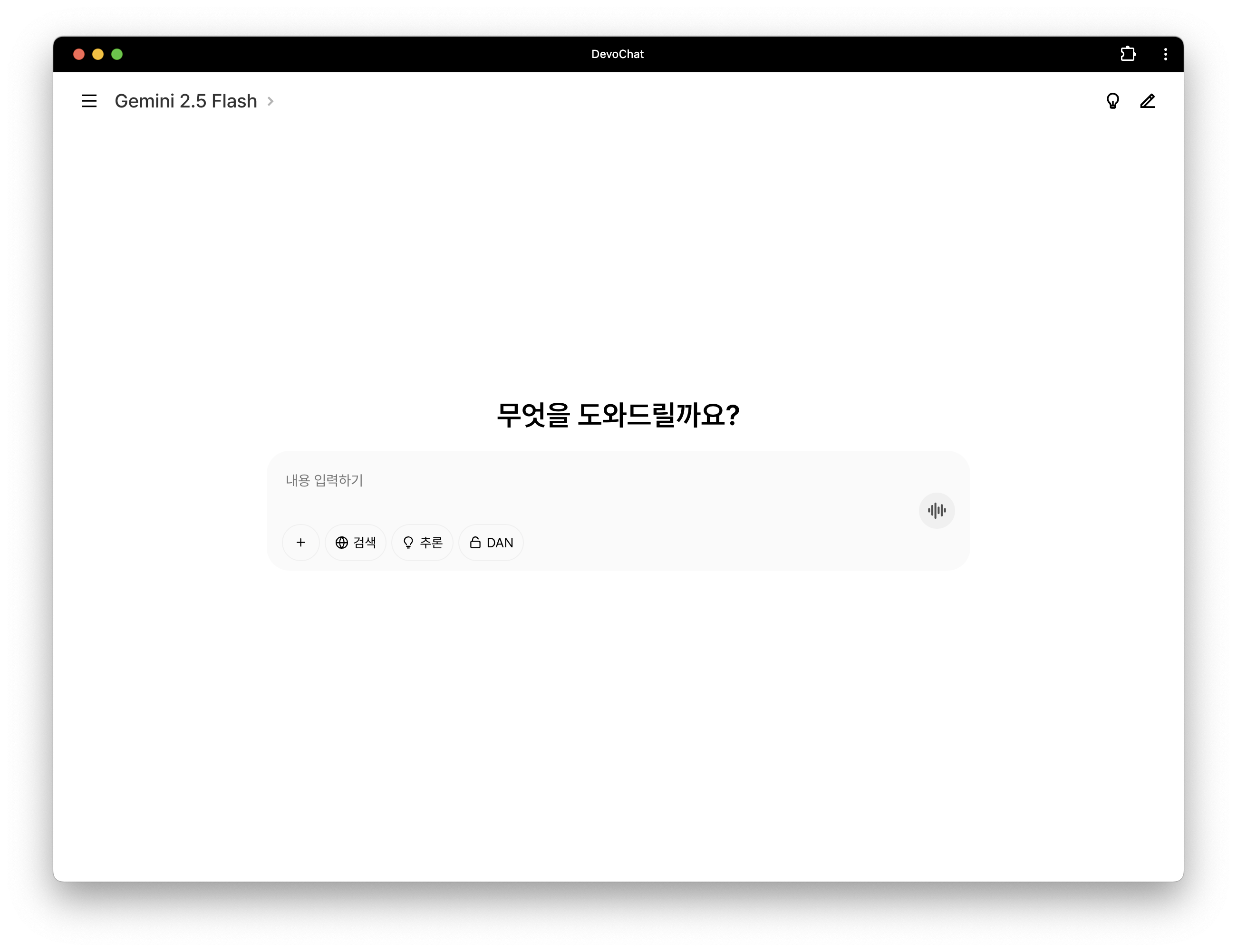

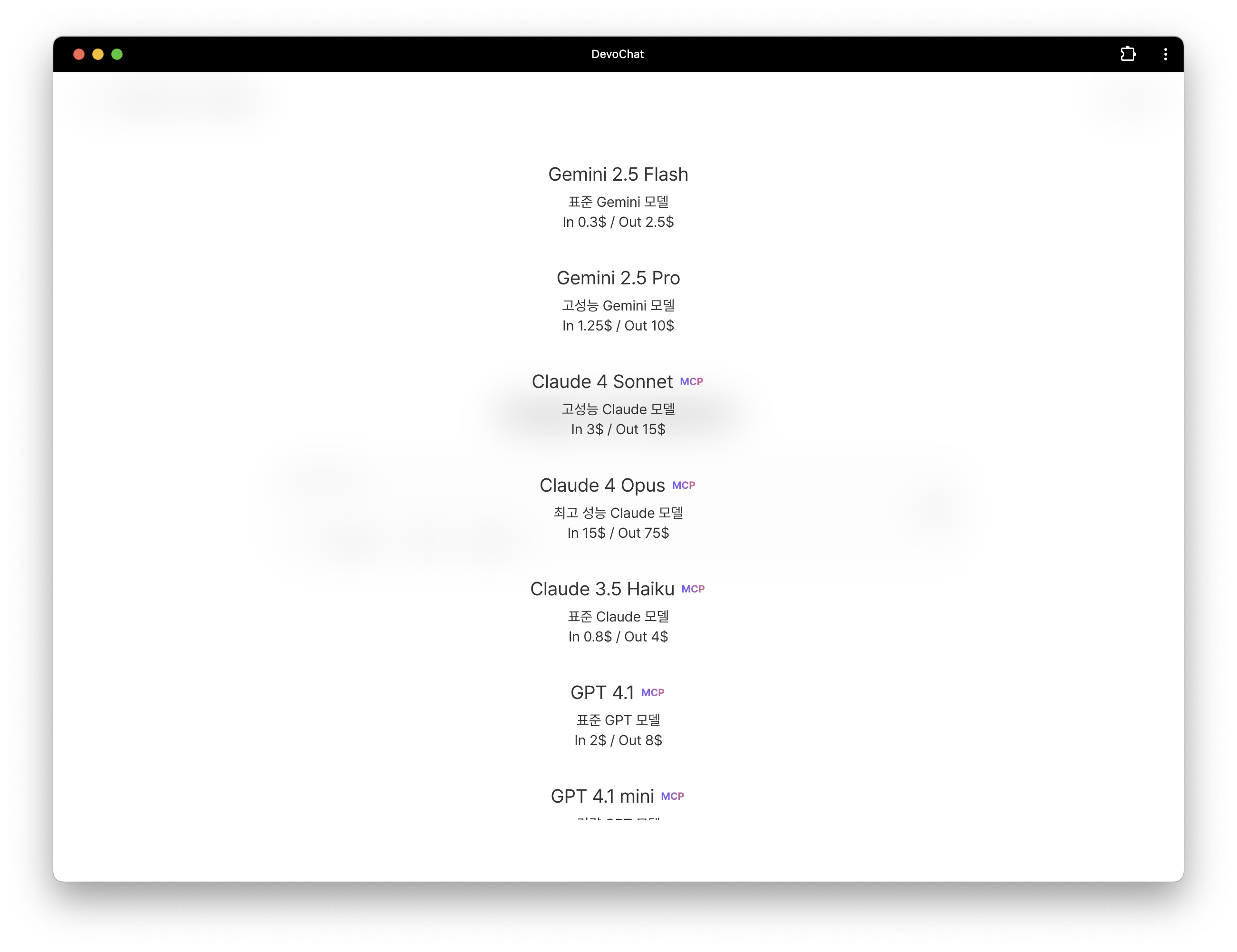

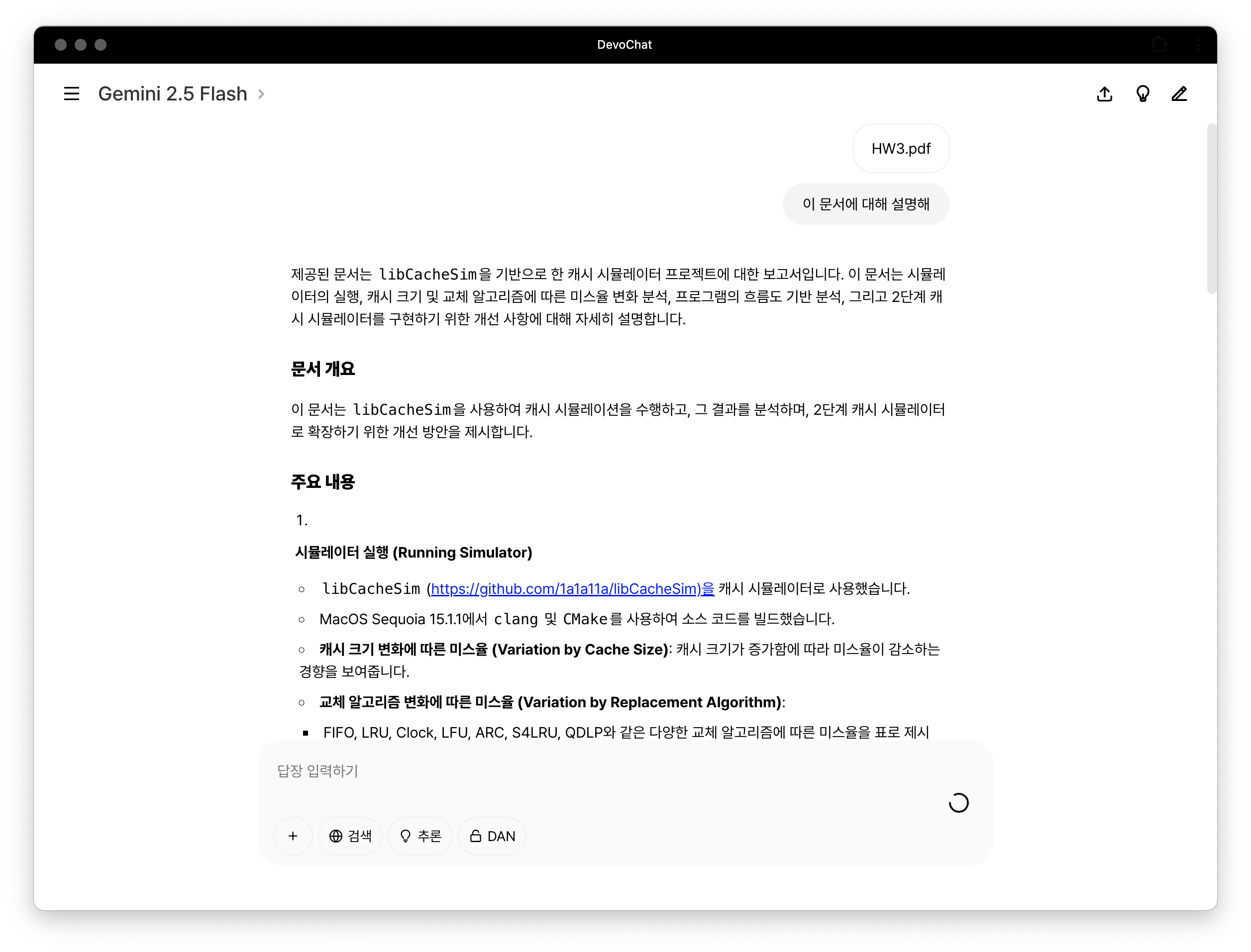

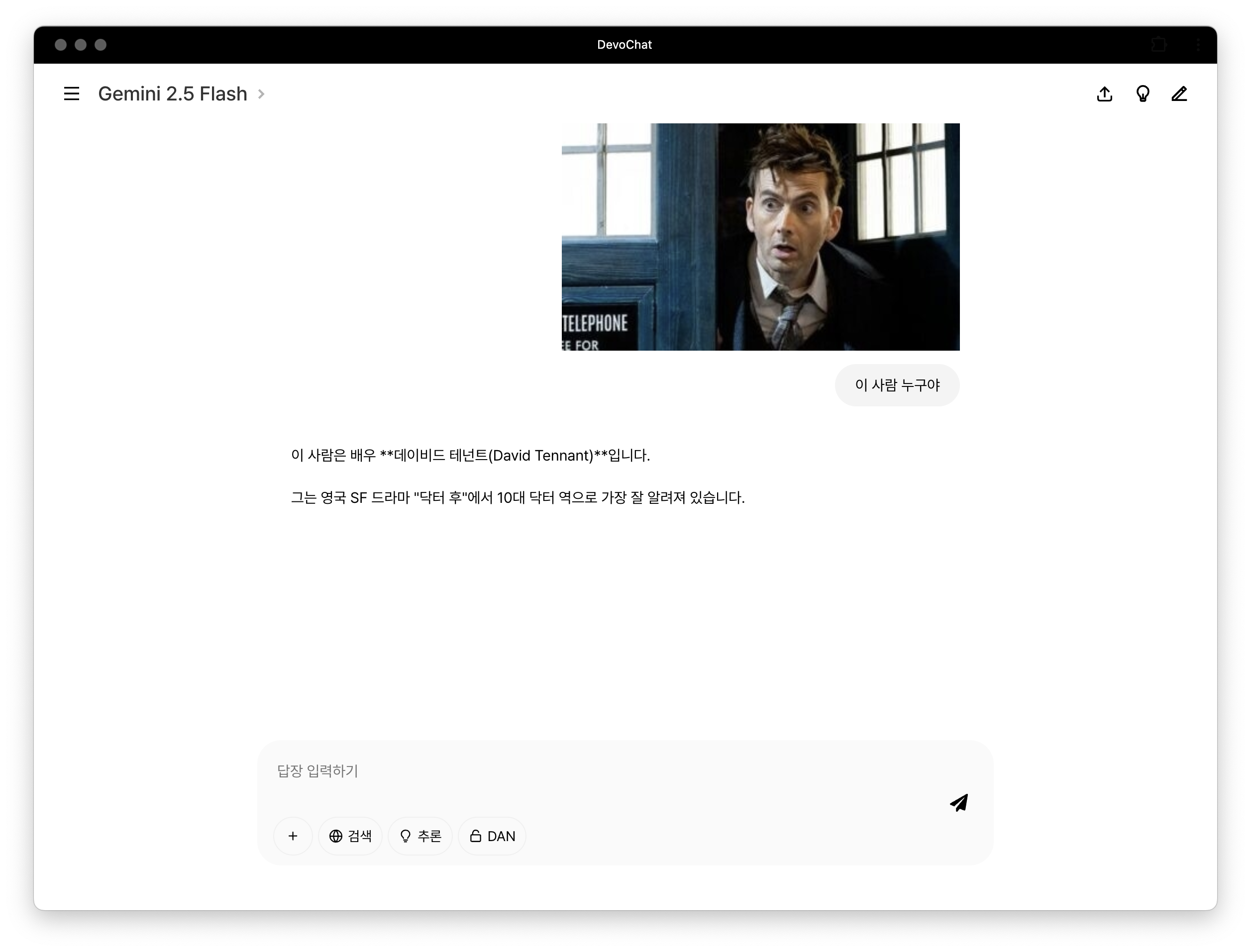

Screenshots

Main Page |

Model Selection |

File Upload |

Image Upload |

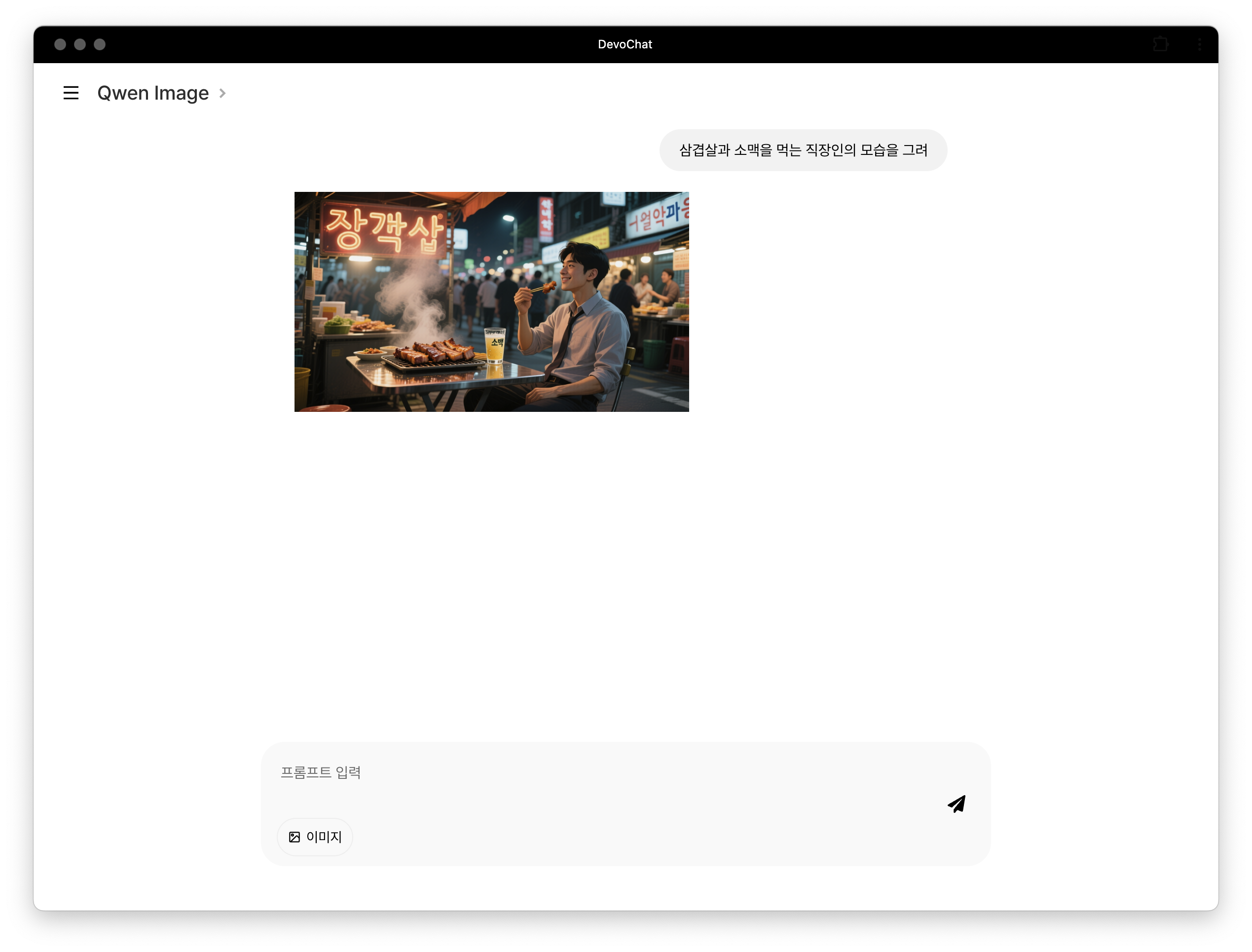

Image Generation |

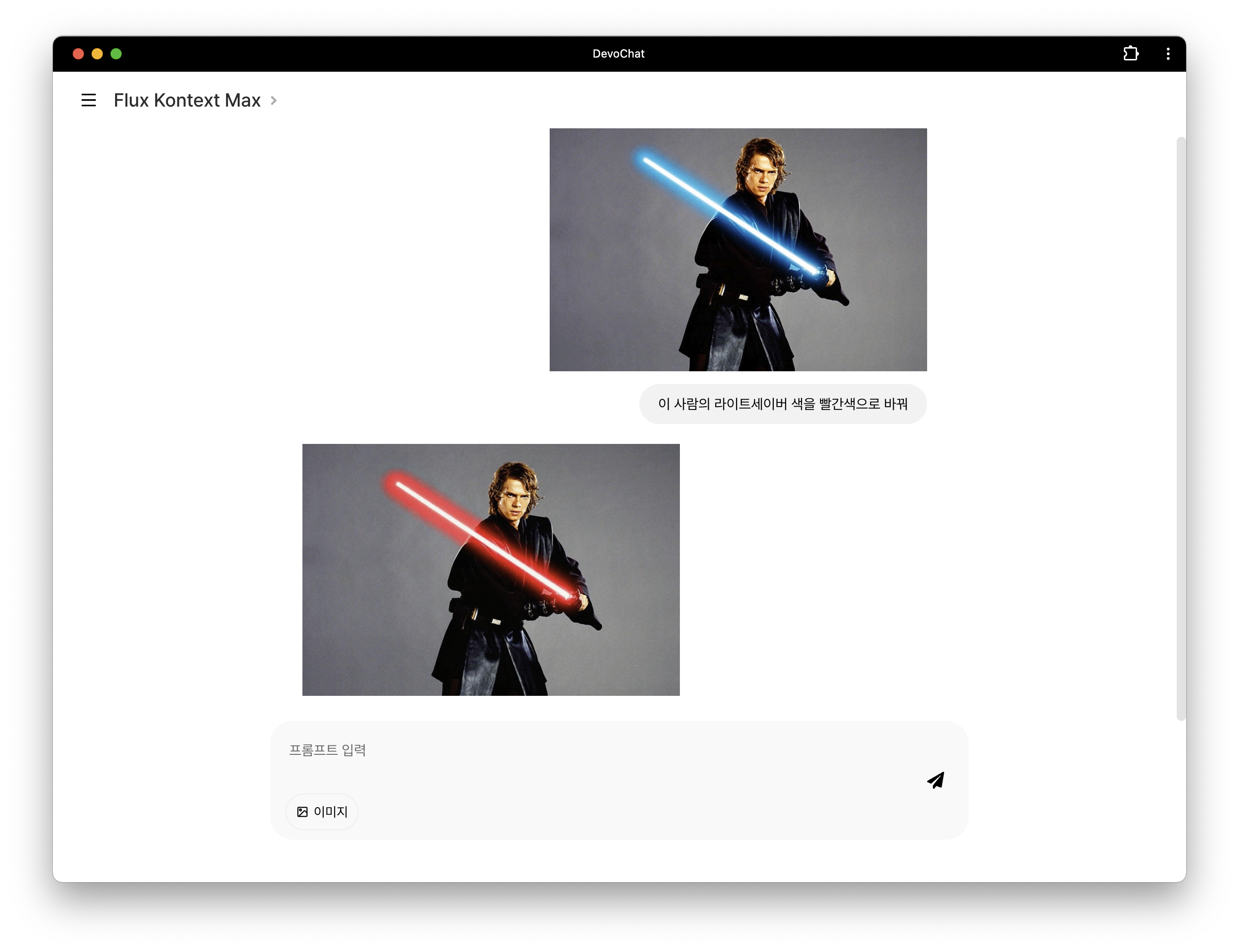

Image Editing |

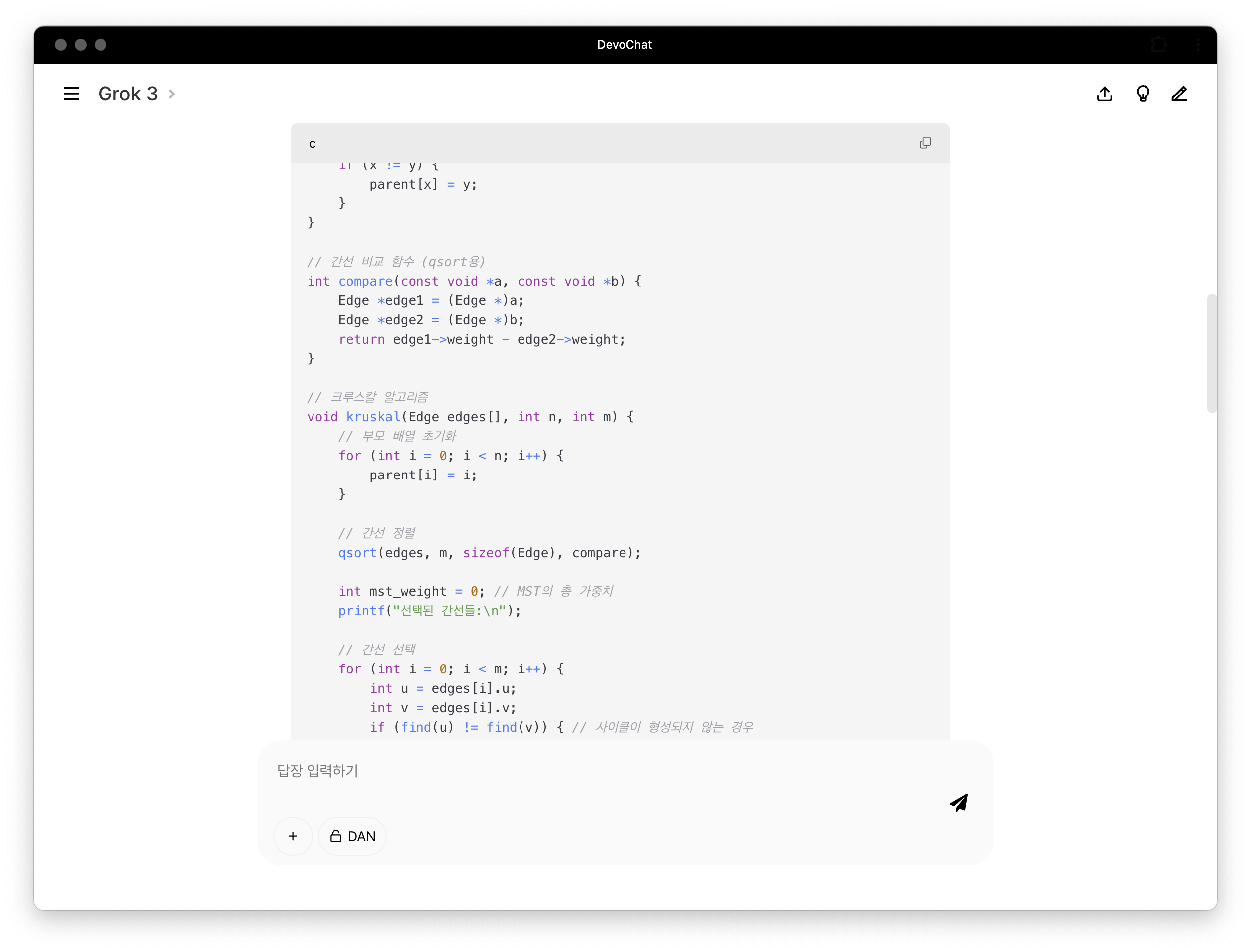

Code Highlighting |

Formula Rendering |

URL Processing |

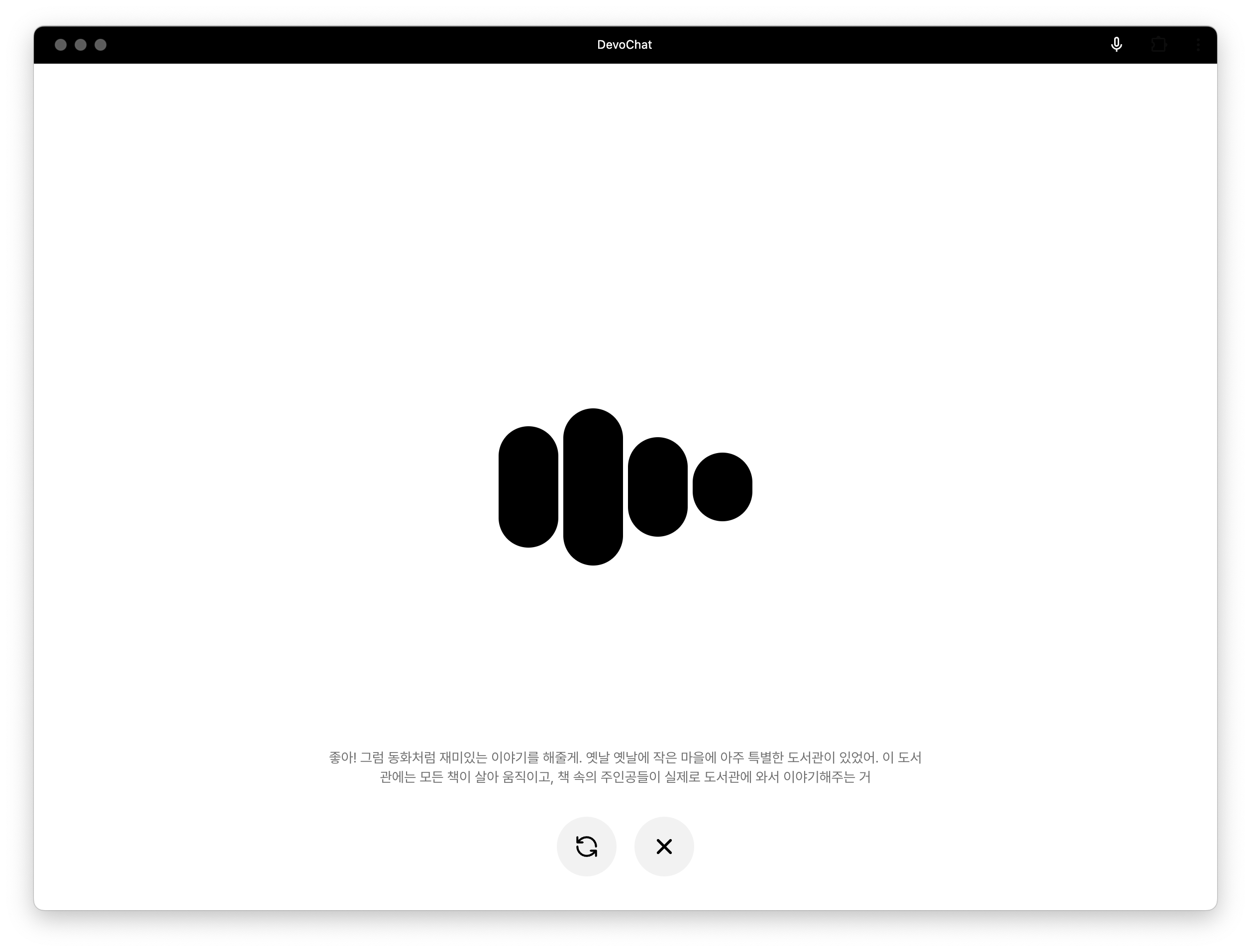

Real-time Conversation |

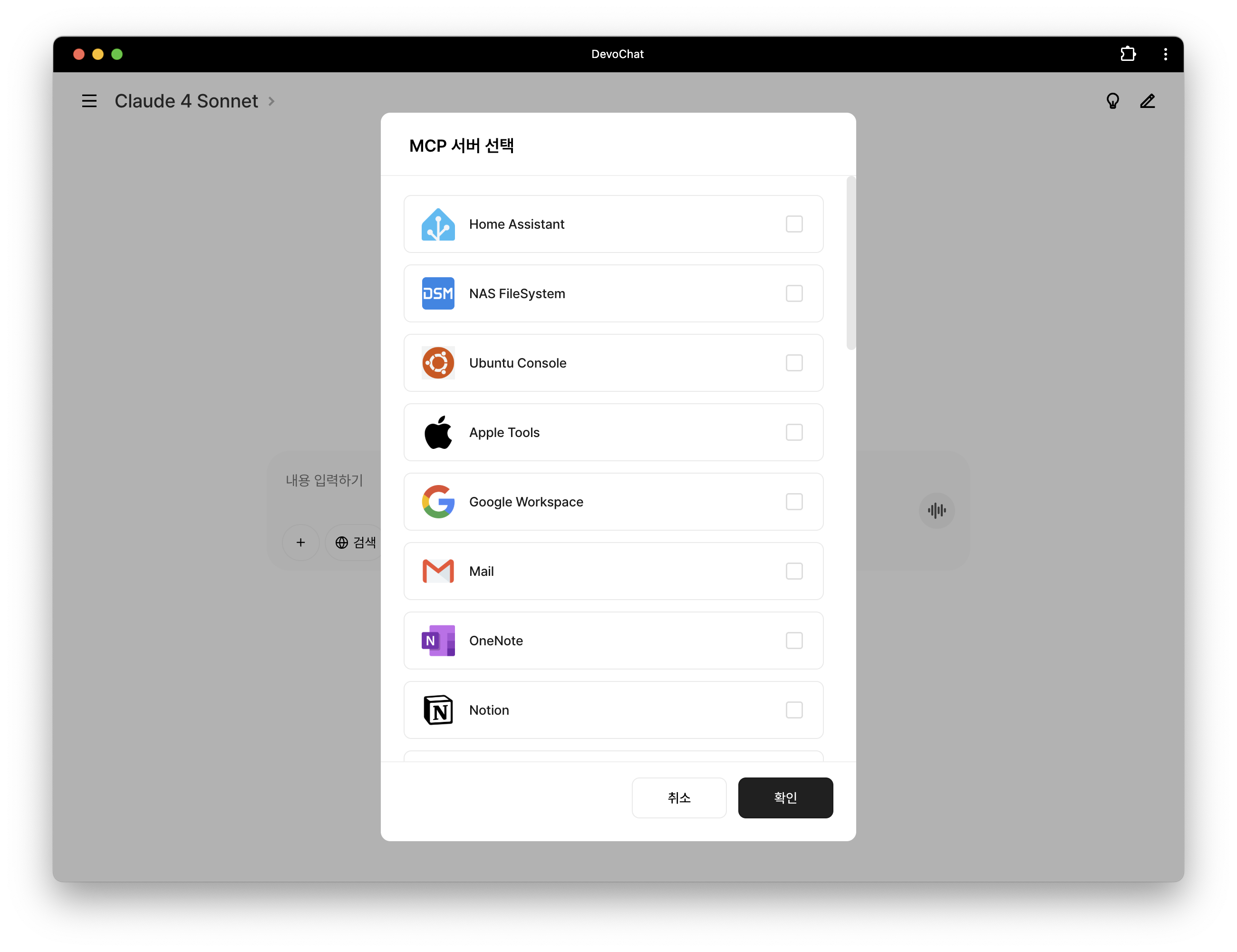

MCP Server Selection |

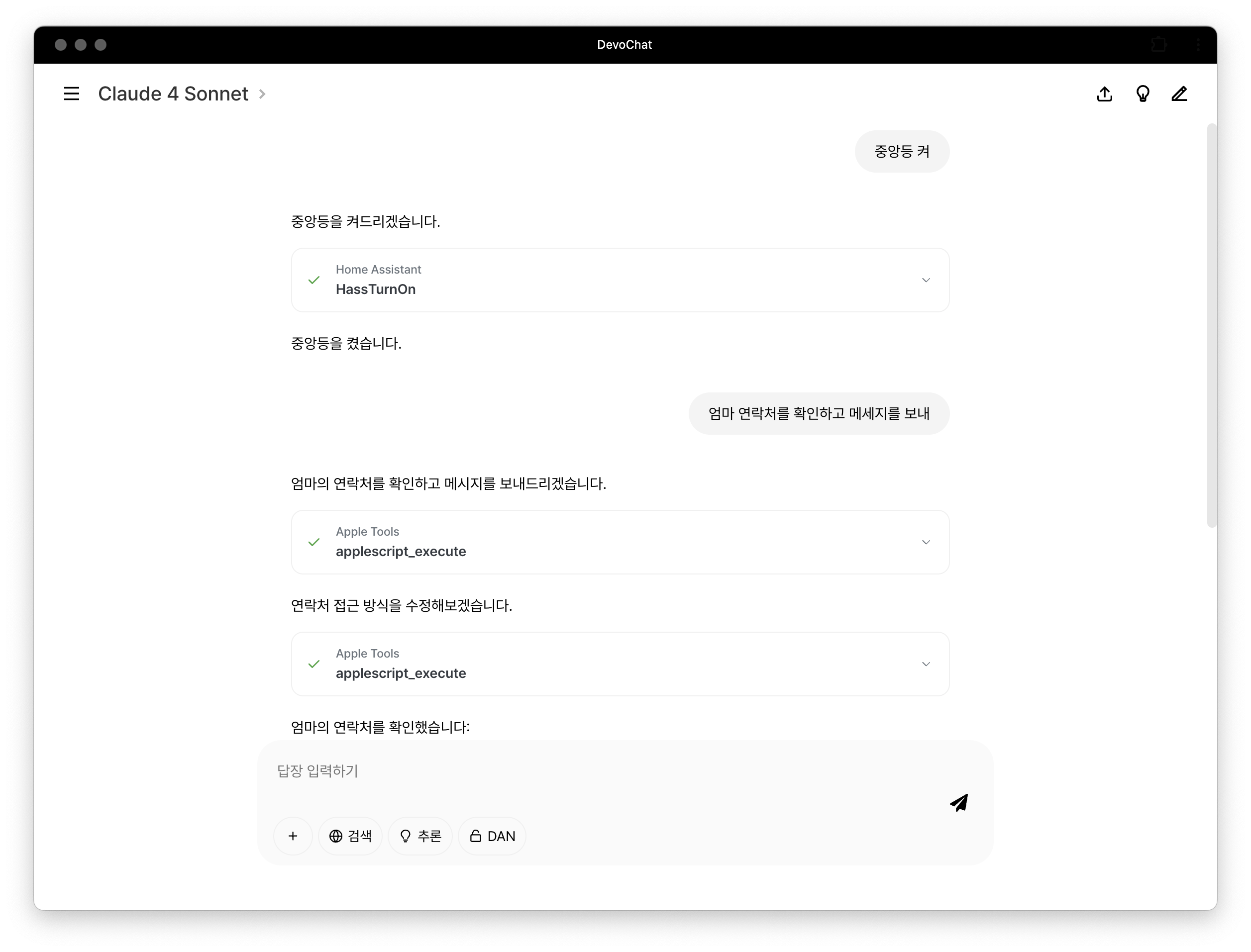

MCP Server Usage |

Key Features

Unified Conversation System

- Uses a MongoDB-based unified schema, so you can switch between AI models mid-conversation without losing context.

- Provides client layers that normalize data to meet the API requirements of each AI provider.

- Offers an integrated management environment for various media files including images, PDFs, and documents.
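The idea of a provider client layer can be sketched roughly as follows. This is a minimal illustration, not DevoChat's actual code: the stored message shape (`role` and `text` keys) and both function names are hypothetical. The two output shapes follow the general conventions of OpenAI-style chat messages (system prompt inside the message list) and Anthropic-style requests (system prompt passed separately).

```python
from typing import Dict, List

# Hypothetical unified message shape: {"role": "system" | "user" | "assistant", "text": str}
Message = Dict[str, str]


def to_openai_style(messages: List[Message]) -> List[Dict[str, str]]:
    """Keep every message, including the system prompt, in one list."""
    return [{"role": m["role"], "content": m["text"]} for m in messages]


def to_anthropic_style(messages: List[Message]) -> Dict[str, object]:
    """Lift system messages out of the list into a separate top-level field."""
    system = "\n".join(m["text"] for m in messages if m["role"] == "system")
    turns = [
        {"role": m["role"], "content": m["text"]}
        for m in messages
        if m["role"] != "system"
    ]
    return {"system": system, "messages": turns}


history = [
    {"role": "system", "text": "You are a helpful assistant."},
    {"role": "user", "text": "Hi!"},
    {"role": "assistant", "text": "Hello, how can I help?"},
]
openai_payload = to_openai_style(history)
anthropic_payload = to_anthropic_style(history)
```

Because the same stored history feeds both functions, a conversation could in principle continue on a different provider without rewriting what is stored.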

Advanced Conversation Features

- Provides parameter controls including temperature, reasoning intensity, response length, and system prompt modification.

- Supports markdown, LaTeX formula, and code block rendering.

- Streams responses, and simulates streaming for non-streaming models by sending the complete response in chunks.

- Supports image generation via Text-to-Image and Image-to-Image models.

- Supports real-time/low-latency STS (Speech-To-Speech) conversations through RealTime API.
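Simulated streaming, as described in the list above, could look something like the sketch below. The function name and the fixed character-based chunk size are assumptions made for illustration; the project may split responses differently.

```python
from typing import Iterator


def simulate_stream(full_text: str, chunk_size: int = 16) -> Iterator[str]:
    """Yield an already-complete response in fixed-size pieces."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for start in range(0, len(full_text), chunk_size):
        yield full_text[start:start + chunk_size]


# A non-streaming model returns the whole text at once; the client still
# receives it piece by piece.
chunks = list(simulate_stream("Hello from a non-streaming model.", chunk_size=8))
```

A generator like this can be handed to whatever mechanism already delivers real streamed chunks, so the frontend does not need a separate code path.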

Model Switching Architecture

- Lets you add new AI models by editing JSON configuration files, with no code changes.

- Supports toggling of additional features like reasoning, search, and deep research for hybrid models.

- Enables linking separate provider models (e.g., Qwen3-235B-A22B-Instruct-2507, Qwen3-235B-A22B-Thinking-2507) with a "switch" variant to function as a single hybrid model.

Web-based MCP Client

- Connects directly to all types of MCP servers (SSE, Local) from web browsers.

- Provides simple access to local MCP servers from anywhere on the web using the secure-mcp-proxy package.

- Supports visual monitoring of real-time tool calls and execution processes.

Project Structure

devochat/

├── frontend/ # React frontend

│ ├── src/

│ │ ├── components/ # UI components

│ │ │ ├── Header.js # Main header

│ │ │ ├── ImageHeader.js # Image generation page header

│ │ │ ├── ImageInputContainer.js # Image generation input container

│ │ │ ├── InputContainer.js # Chat input container

│ │ │ ├── MarkdownRenderers.js # Markdown renderers

│ │ │ ├── MCPModal.js # MCP server selection modal

│ │ │ ├── Message.js # Message rendering

│ │ │ ├── Modal.js # Generic modal

│ │ │ ├── Orb.js # Real-time conversation visualization

│ │ │ ├── SearchModal.js # Search modal

│ │ │ ├── Sidebar.js # Sidebar navigation

│ │ │ ├── Toast.js # Notification messages

│ │ │ ├── ToolBlock.js # MCP tool block

│ │ │ └── Tooltip.js # Tooltips

│ │ ├── contexts/ # State management

│ │ │ ├── ConversationsContext.js # Conversations list state

│ │ │ └── SettingsContext.js # Model/settings state

│ │ ├── pages/ # Page components

│ │ │ ├── Admin.js # Admin page

│ │ │ ├── Chat.js # Main chat page

│ │ │ ├── Home.js # Home page

│ │ │ ├── ImageChat.js # Image generation page

│ │ │ ├── ImageHome.js # Image generation home

│ │ │ ├── Login.js # Login page

│ │ │ ├── Realtime.js # Real-time conversation page

│ │ │ ├── Register.js # Registration page

│ │ │ └── View.js # Conversation viewer page

│ │ ├── resources/ # Static resources

│ │ ├── styles/ # CSS stylesheets

│ │ ├── utils/ # Utility functions

│ │ │ └── useFileUpload.js # File upload hook

│ │ └── App.js # Main app component

│

├── backend/ # FastAPI backend

│ ├── config/ # Configuration files

│ │ ├── chat_models.json # Text AI model settings

│ │ ├── image_models.json # Image generation AI model settings

│ │ ├── mcp_servers.json # MCP server settings

│ │ └── realtime_models.json # Real-time conversation model settings

│ ├── prompts/ # System prompts

│ ├── routes/ # API routers

│ │ ├── chat_clients/ # Text AI model clients

│ │ │ ├── anthropic_client.py

│ │ │ ├── google_client.py

│ │ │ ├── grok_client.py

│ │ │ ├── openai_client.py

│ │ │ └── openrouter_client.py

│ │ ├── image_clients/ # Image generation AI model clients

│ │ │ ├── flux_client.py

│ │ │ ├── google_client.py

│ │ │ ├── grok_client.py

│ │ │ ├── openai_client.py

│ │ │ └── wavespeed_client.py

│ │ ├── auth.py # Authentication/authorization management

│ │ ├── common.py # Common utilities

│ │ ├── conversations.py # Conversation management API

│ │ ├── realtime.py # Real-time communication

│ │ └── uploads.py # File upload handling

│ ├── logging_util.py # Logging utility

│ ├── main.py # FastAPI application entry point

│ └── requirements.txt # Python dependencies

Tech Stack

Installation and Setup

Frontend

Environment Variables

WDS_SOCKET_PORT=0

REACT_APP_FASTAPI_URL=http://localhost:8000

Package Installation and Start

$ cd frontend

$ npm install

$ npm start

Build and Deploy

$ cd frontend

$ npm run build

$ npx serve -s build

Backend

Python Virtual Environment Setup

$ cd backend

$ python -m venv .venv

$ source .venv/bin/activate # Windows: .venv\Scripts\activate

$ pip install -r requirements.txt

Environment Variables

MONGODB_URI=mongodb+srv://username:password@<cluster-host>/chat_db

PRODUCTION_URL=https://your-production-domain.com

DEVELOPMENT_URL=http://localhost:3000

AUTH_KEY=your_auth_secret_key

# API Key Configuration

OPENAI_API_KEY=...

ANTHROPIC_API_KEY=...

GEMINI_API_KEY=...

PERPLEXITY_API_KEY=...

HUGGINGFACE_API_KEY=...

XAI_API_KEY=...

MISTRAL_API_KEY=...

OPENROUTER_API_KEY=...

FIREWORKS_API_KEY=...

FRIENDLI_API_KEY=...

FLUX_API_KEY=...

BYTEPLUS_API_KEY=...

ALIBABA_API_KEY=...
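Before starting the server, it can help to check which of the keys above are actually set. The helper below is a hypothetical convenience, not part of the project; it treats an empty value as missing.

```python
import os
from typing import Iterable, List, Mapping, Optional


def missing_keys(required: Iterable[str],
                 env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return the names from `required` that are unset or empty."""
    source = os.environ if env is None else env
    return [name for name in required if not source.get(name)]


# Example with an explicit mapping instead of the real environment.
example_env = {"OPENAI_API_KEY": "sk-placeholder", "ANTHROPIC_API_KEY": ""}
missing = missing_keys(
    ["OPENAI_API_KEY", "ANTHROPIC_API_KEY", "GEMINI_API_KEY"], example_env
)
```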

Run FastAPI Server

$ uvicorn main:app --host=0.0.0.0 --port=8000 --reload

Usage

chat_models.json Configuration

Define the AI models available in the application and their properties through the chat_models.json file.

{

"default": "gpt-5-mini",

"models": [

{

"model_name": "claude-sonnet-4-20250514",

"model_alias": "Claude 4 Sonnet",

"description": "High-performance Claude model",

"endpoint": "/claude",

"billing": {

"in_billing": "3",

"out_billing": "15"

},

"capabilities": {

"stream": true,

"vision": true,

"reasoning": "toggle",

"web_search": "toggle",

"deep_research": false

},

"controls": {

"temperature": "conditional",

"reason": ["low", "medium", "high", "xhigh"],

"verbosity": false,

"instructions": true

},

"admin": false

},

{

"model_name": "grok-4",

"model_alias": "Grok 4",

"description": "High-performance Grok model",

"endpoint": "/grok",

"billing": {

"in_billing": "3",

"out_billing": "15"

},

"capabilities": {

"stream": true,

"vision": false,

"reasoning": false,

"web_search": false,

"deep_research": false

},

"controls": {

"temperature": true,

"reason": false,

"verbosity": false,

"instructions": true

},

"admin": false

},

{

"model_name": "o3",

"model_alias": "OpenAI o3",

"description": "High-performance reasoning GPT model",

"endpoint": "/gpt",

"billing": {

"in_billing": "2",

"out_billing": "8"

},

"variants": {

"deep_research": "o3-deep-research"

},

"capabilities": {

"stream": true,

"vision": true,

"reasoning": true,

"web_search": false,

"deep_research": "switch"

},

"controls": {

"temperature": false,

"reason": ["low", "medium", "high", "xhigh"],

"verbosity": ["low", "medium", "high"],

"instructions": true

},

"admin": false

}

...

]

}
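As a rough sketch of how a file with this shape might be consumed, the snippet below parses a trimmed-down configuration and looks up a model, falling back to the default entry. The `get_model` helper and the shortened sample config are illustrative assumptions, not the backend's actual loader.

```python
import json
from typing import Any, Dict, Optional

# Trimmed sample that keeps only the fields this sketch needs.
CONFIG_TEXT = """
{
  "default": "grok-4",
  "models": [
    {"model_name": "grok-4", "model_alias": "Grok 4", "endpoint": "/grok"},
    {"model_name": "o3", "model_alias": "OpenAI o3", "endpoint": "/gpt"}
  ]
}
"""


def get_model(config: Dict[str, Any], name: Optional[str] = None) -> Dict[str, Any]:
    """Return the entry for `name`, or for the configured default if omitted."""
    wanted = name or config["default"]
    for model in config["models"]:
        if model["model_name"] == wanted:
            return model
    raise KeyError(f"model not found in config: {wanted}")


config = json.loads(CONFIG_TEXT)
default_model = get_model(config)
o3_model = get_model(config, "o3")
```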

Parameter Description

| Parameter | Description |
|---|---|
| model_name | The actual identifier of the model used in API calls |
| model_alias | User-friendly name displayed in the UI |
| description | Brief description of the model for reference when selecting |
| endpoint | API path for handling model requests in the backend (e.g., /gpt, /claude, /gemini) |
| billing | Object containing model usage cost information |
| billing.in_billing | Billing cost for input tokens (prompts). Unit: USD per million tokens |
| billing.out_billing | Billing cost for output tokens (responses). Unit: USD per million tokens |
| variants | Defines models to switch to for "switch" type |
| capabilities | Defines the features supported by the model |
| capabilities.stream | Whether streaming response is supported |
| capabilities.vision | Whether image input is supported |
| capabilities.reasoning | Whether reasoning is supported. Possible values: true, false, "toggle", "switch" |
| capabilities.web_search | Whether web search is supported. Possible values: true, false, "toggle", "switch" |
| capabilities.deep_research | Whether Deep Research is supported. Possible values: true, false, "toggle", "switch" |
| capabilities.mcp | Whether MCP server integration is supported. Possible values: true, false |
| controls | Defines user control options supported by the model |
| controls.temperature | Whether temperature adjustment is possible. Possible values: true, false, "conditional" |
| controls.reason | Defines selectable reasoning intensity levels. Possible values: false or an array of strings (e.g. ["low", "medium", "high"], ["low", "medium", "high", "xhigh"], ["low", "medium", "high", "max"]) |
| controls.verbosity | Defines selectable response length levels. Possible values: false or an array of strings (e.g. ["low", "medium", "high"]) |
| controls.instructions | Whether custom instructions setting is possible. Possible values: true, false |
| admin | If true, only admin users can access/select this model |
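Since billing.in_billing and billing.out_billing are expressed in USD per million tokens, the cost of a single request can be estimated as in the sketch below. The `estimate_cost` function is a hypothetical helper; `Decimal` is used because the billing values are stored as strings and binary floats would introduce rounding noise.

```python
from decimal import Decimal
from typing import Mapping

TOKENS_PER_UNIT = Decimal(1_000_000)  # prices are quoted per million tokens


def estimate_cost(billing: Mapping[str, str],
                  input_tokens: int,
                  output_tokens: int) -> Decimal:
    """Estimate the USD cost of one request from a model's billing object."""
    cost_in = Decimal(billing["in_billing"]) * input_tokens / TOKENS_PER_UNIT
    cost_out = Decimal(billing["out_billing"]) * output_tokens / TOKENS_PER_UNIT
    return cost_in + cost_out


# With in_billing "3" and out_billing "15": 2,000 input and 500 output tokens.
cost = estimate_cost({"in_billing": "3", "out_billing": "15"}, 2000, 500)
```

Here the input side contributes 3 × 2000 / 1,000,000 = 0.006 USD and the output side 15 × 500 / 1,000,000 = 0.0075 USD.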

Value Description

| Value | Description |
|---|---|
| true | The feature is always enabled. |
| false | The feature is not supported. |
| toggle | For hybrid models, users can turn this feature on or off as needed. |
| switch | When a user toggles this feature, it switches to another individual model. Dynamic switching occurs to models defined in the variants object. |
| conditional | Available in standard mode, but not available in reasoning mode. |
| string array | Used by controls.reason and controls.verbosity to define the selectable levels shown in the UI. |
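One way to read these values in code is sketched below. Both function names are hypothetical, and the logic is only an interpretation of the descriptions above, not the project's implementation.

```python
from typing import Union

Capability = Union[bool, str]


def feature_active(capability: Capability, user_toggle: bool) -> bool:
    """Decide whether a feature is on for the current request."""
    if capability is True:
        return True  # always enabled, regardless of the toggle
    if capability in ("toggle", "switch"):
        return bool(user_toggle)  # the user decides
    return False  # false or any unknown value: not supported


def temperature_allowed(control: Capability, reasoning_active: bool) -> bool:
    """Apply the "conditional" rule: allowed only outside reasoning mode."""
    if control == "conditional":
        return not reasoning_active
    return control is True


# Claude example from chat_models.json: reasoning "toggle", temperature "conditional".
reasoning_on = feature_active("toggle", user_toggle=True)
can_set_temperature = temperature_allowed("conditional", reasoning_on)
```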

image_models.json Configuration

Define the image generation AI models available in the application and their properties through the image_models.json file:

{

"default": "seedream-3-0-t2i-250415",

"models": [

{

"model_name": "flux-kontext-max",

"model_alias": "Flux Kontext Max",

"description": "Black Forest Labs",

"endpoint": "/image/flux",

"billing": {

"in_billing": "0",

"out_billing": "0.08"

},

"capabilities": {

"vision": true,

"max_input": 4

},

"admin": false

},

{

"model_name": "seedream-3-0-t2i-250415",

"model_alias": "Seedream 3.0",

"description": "BytePlus",

"endpoint": "/image/byteplus",

"billing": {

"in_billing": "0",

"out_billing": "0.03"

},

"variants": {

"vision": "seededit-3-0-i2i-250628"

},

"capabilities": {

"vision": "switch"

},

"admin": false

}

]

}

Image Model Parameter Description

| Parameter | Description |
|---|---|
| capabilities.vision | Whether image input is supported. true: supported, false: not supported, "switch": switch to variant model |
| capabilities.max_input | Maximum number of images that can be input simultaneously |
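The two image capabilities could be applied to a request roughly as follows. The `pick_image_model` function is a hypothetical sketch; in particular, treating a missing max_input as "no limit" is an assumption, since the source does not say what happens when it is omitted.

```python
from typing import Any, Dict


def pick_image_model(model: Dict[str, Any], n_images: int) -> str:
    """Return the model name to call for a request with `n_images` input images."""
    caps = model.get("capabilities", {})
    vision = caps.get("vision", False)
    if n_images == 0:
        return model["model_name"]  # plain text-to-image request
    if vision is False:
        raise ValueError("this model does not accept image input")
    max_input = caps.get("max_input")  # assumption: absent means no limit
    if max_input is not None and n_images > max_input:
        raise ValueError(f"at most {max_input} input images are allowed")
    if vision == "switch":
        return model["variants"]["vision"]  # hand over to the image-to-image variant
    return model["model_name"]


flux = {"model_name": "flux-kontext-max",
        "capabilities": {"vision": True, "max_input": 4}}
seedream = {"model_name": "seedream-3-0-t2i-250415",
            "variants": {"vision": "seededit-3-0-i2i-250628"},
            "capabilities": {"vision": "switch"}}

editing_model = pick_image_model(seedream, 1)
```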

Model Switching System (Variants)

The variants object maps a feature (for example reasoning or deep_research) to the model that DevoChat switches to when that feature's capability is set to "switch".

Example

{

"model_name": "sonar",

"variants": {

"reasoning": "sonar-reasoning",

"deep_research": "sonar-deep-research"

},

"capabilities": {

"reasoning": "switch",

"deep_research": "switch"

}

},

{

"model_name": "sonar-reasoning",

"variants": {

"base": "sonar"

},

"capabilities": {

"reasoning": "switch"

}

}
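A possible resolution routine for the example above is sketched here. The function name is hypothetical, and reading the "base" key as "the model to return to when the feature is turned off" is an assumption drawn from the example rather than a documented rule.

```python
from typing import Any, Dict


def resolve_model(model: Dict[str, Any], feature: str, enabled: bool) -> str:
    """Pick the model name to call after the user toggles `feature`."""
    caps = model.get("capabilities", {})
    variants = model.get("variants", {})
    if caps.get(feature) != "switch":
        return model["model_name"]  # the feature does not trigger a model swap
    if enabled and feature in variants:
        return variants[feature]  # switch to the dedicated variant
    if not enabled and "base" in variants:
        return variants["base"]  # assumption: fall back to the base model
    return model["model_name"]


sonar = {
    "model_name": "sonar",
    "variants": {"reasoning": "sonar-reasoning",
                 "deep_research": "sonar-deep-research"},
    "capabilities": {"reasoning": "switch", "deep_research": "switch"},
}
sonar_reasoning = {
    "model_name": "sonar-reasoning",
    "variants": {"base": "sonar"},
    "capabilities": {"reasoning": "switch"},
}

with_reasoning = resolve_model(sonar, "reasoning", enabled=True)
back_to_base = resolve_model(sonar_reasoning, "reasoning", enabled=False)
```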

MCP Server Configuration

DevoChat is a web-based MCP (Model Context Protocol) client.

You can define external servers to connect to in the mcp_servers.json file.

mcp_servers.json

{

"server-id": {

"url": "https://example.com/mcp/endpoint",

"authorization_token": "your_authorization_token",

"name": "Server_Display_Name",

"admin": false

}

}
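To illustrate how such a file might be read, the sketch below lists the servers a given user could select. It assumes that the admin flag on a server works like the admin flag on a model (only admin users see entries marked true); the source does not spell this out, and the `selectable_servers` helper is hypothetical.

```python
import json
from typing import Any, Dict

SERVERS_TEXT = """
{
  "public-server": {
    "url": "https://example.com/mcp/endpoint",
    "authorization_token": "your_authorization_token",
    "name": "Public_Server",
    "admin": false
  },
  "internal-server": {
    "url": "https://example.com/mcp/internal",
    "authorization_token": "your_authorization_token",
    "name": "Internal_Server",
    "admin": true
  }
}
"""


def selectable_servers(servers: Dict[str, Dict[str, Any]],
                       is_admin: bool) -> Dict[str, str]:
    """Map server id to display name for the servers this user may select."""
    return {
        server_id: cfg["name"]
        for server_id, cfg in servers.items()
        if is_admin or not cfg.get("admin", False)
    }


servers = json.loads(SERVERS_TEXT)
for_regular_user = selectable_servers(servers, is_admin=False)
for_admin = selectable_servers(servers, is_admin=True)
```

Only the id and display name are returned here, which keeps authorization tokens out of anything sent to the browser.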

Recommended MCP Servers

Local MCP Server Integration

To connect local MCP servers, use secure-mcp-proxy:

git clone https://github.com/gws8820/secure-mcp-proxy

cd secure-mcp-proxy

uv run python -m secure_mcp_proxy --named-server-config servers.json --port 3000

Contributing

- Fork this repository

- Create a new branch (git checkout -b feature/amazing-feature)

- Commit your changes (git commit -m 'Add amazing feature')

- Push to the branch (git push origin feature/amazing-feature)

- Create a Pull Request

License

This project is distributed under the MIT License.
