awesome-llm-knowledge-systems

Health: Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 69 GitHub stars

Code: Passed

- Code scan — Scanned 1 file during light audit, no dangerous patterns found

Permissions: Passed

- Permissions — No dangerous permissions requested

This project is an informational knowledge base and guide that maps out the landscape of LLM knowledge engineering. It serves as an educational resource covering topics like RAG, context engineering, and agent memory systems.

Security Assessment

The overall risk is Low. As a documentation repository, the tool does not execute shell commands, access sensitive data, or request dangerous permissions. A code scan found no hardcoded secrets or dangerous patterns. It poses no direct security threat to your system, though it does contain links to external sites like arXiv and other reference materials.

Quality Assessment

The project is highly active and up to date, with its last push occurring today. It is legally safe to use and share thanks to its standard MIT license. Additionally, it has garnered positive community attention, reflected in its 69 GitHub stars, and is well-maintained with detailed updates and changelogs.

Verdict

Safe to use.

The Map Everyone's Missing: LLM Knowledge Engineering in 2026 — First unified guide connecting RAG, Context Engineering, Harness Engineering, Skill Systems, Agent Memory, MCP, and Progressive Disclosure


English | 繁體中文 | 简体中文 | 日本語 | 한국어 | Español

I analyzed 50+ awesome lists, surveys, and guides -- none of them connected the dots. RAG papers don't mention harness engineering. Memory frameworks ignore skill systems. MCP docs skip progressive disclosure. This guide draws the complete map.

What's new in May 2026

Late-April / early-May 2026 added seven structurally significant timeline entries plus a full attribution-audit pass. If you visited before May 6, here is what shifted (full chronological log: CHANGELOG.md):

- April 7 Mythos breach addendum — the Glasswing distribution model was breached within ~14 hours of public announcement, foreshadowing the offensive-side cyber thesis (Ch11)

- April 8 Anthropic Managed Agents — first frontier-vendor primitive that meters the orchestrator seat itself, separately from inference (Ch04 §4.9, Ch11, glossary)

- April 23 Memory for Managed Agents — closes the loop with the March 31 Claude Code source-leak finding (three-layer self-healing memory now ships as a vendor-managed primitive) (Ch04 §4.9, Ch11)

- April 27 Microsoft–OpenAI restructure — cloud exclusivity ends, AGI provision no longer load-bearing; reframes the substrate-portability narrative (Ch08, Ch11)

- April 28 Bedrock Managed Agents (AWS × OpenAI) — first time the OpenAI agent harness is named and sold as a separate product surface; cross-vendor convergence on the Managed Agents pattern (Ch04 §4.9, Ch11)

- April 28 AHE paper (arXiv 2604.25850) — observability-driven harness evolution; 69.7% → 77.0% on Terminal-Bench 2 with cross-family transfer (Ch04 §4.5, Ch11)

- Late April AgentFlow (arXiv 2604.20801) — harness synthesis as a viable engineering surface; 84.3% TerminalBench-2 plus ten externally-validated zero-day CVEs on Chrome with Kimi K2.5 (Ch04, Ch07, Ch11)

- "The Pattern" updated: adds a fifth thread (harness synthesis) and revises the cloud-native-primitives thread to reflect substrate / triggering / memory unbundling

- Attribution audit, all chapters: twelve fixes across Ch01 / Ch02 / Ch03 / Ch04 / Ch05 / Ch06 / Ch07 / Ch09 / Ch11 / Ch12. Notable factual corrections: §4.2 renamed "The Böckeler Taxonomy" (was misattributed to Fowler 2025; the actual source is Birgitta Böckeler, April 2026, on martinfowler.com); Ch03 §3.5 cited two academic surveys that were conflated or fabricated (the real survey is Mei et al., arXiv 2507.13334); Ch06 described MIRIX as four-layer (it actually has six memory types per arXiv 2507.07957)

TL;DR

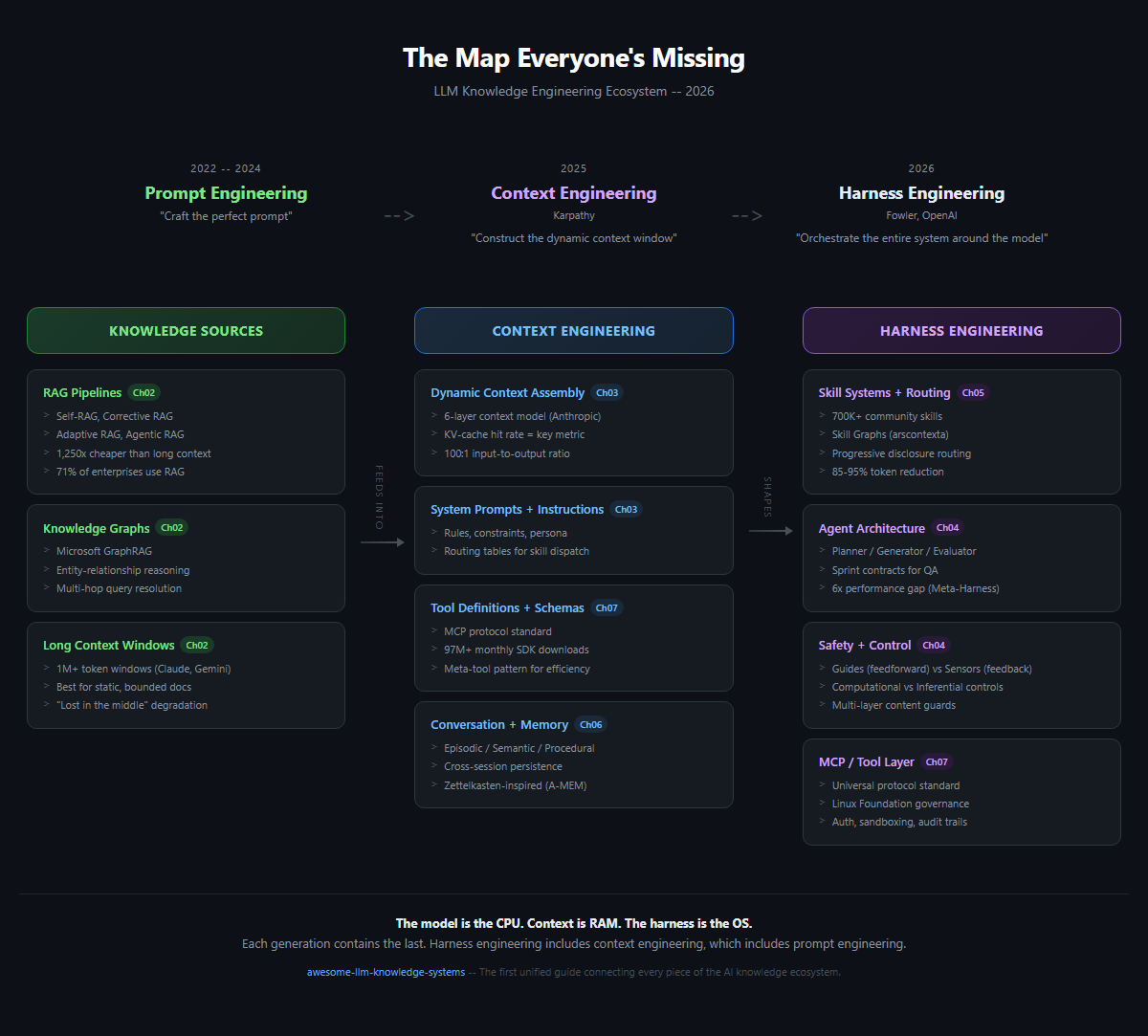

- Prompt engineering was just the beginning. The field has evolved through three generations: Prompt Engineering (2022-2024), Context Engineering (2025), and Harness Engineering (2026). Each layer subsumes the last.

- RAG is not dead. 71% of enterprises that tried context-stuffing came back to RAG within 12 months (Gartner Q4 2025). Hybrid architectures are winning.

- Context engineering is about what surrounds the call, not the call itself. Andrej Karpathy's mid-2025 reframe shifted focus from crafting prompts to constructing the entire context window dynamically.

- Harness engineering is the operating system layer. Birgitta Böckeler (writing in Martin Fowler's Exploring Generative AI memo series, April 2026) and the OpenAI Codex team's harness-design framing formalized this -- the model is the CPU, context is RAM, and the harness is the OS that orchestrates everything.

- No single guide connected all of this until now. RAG, knowledge graphs, long context, MCP, skill routing, memory systems, and progressive disclosure are all part of one ecosystem. This is the map.
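The context-engineering point above, constructing the context window dynamically rather than hand-writing one prompt, can be sketched in a few lines. This is only an illustration under stated assumptions: `ContextItem`, `assemble_context`, the priority convention, and the word-count token estimate are hypothetical names and simplifications, not anything defined by this guide or by a particular library.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    label: str     # hypothetical category, e.g. "system", "retrieved", "history"
    text: str
    priority: int  # assumed convention: lower number = more important


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: count whitespace-separated words.
    return len(text.split())


def assemble_context(items: list[ContextItem], budget: int) -> str:
    """Greedily pack the highest-priority items that still fit in the budget."""
    chosen = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            chosen.append(item)
            used += cost
    return "\n\n".join(f"[{i.label}]\n{i.text}" for i in chosen)


items = [
    ContextItem("system", "You are a careful assistant.", 0),
    ContextItem("retrieved", "Policy doc: refunds are allowed within 30 days.", 1),
    ContextItem("history", "User asked about shipping times last week " * 5, 2),
]
window = assemble_context(items, budget=20)
print(window)
```

With a budget of 20 estimated tokens, the long conversation history is dropped while the system instruction and the retrieved passage are kept. A production system would likely use a real tokenizer and summarize or truncate items instead of discarding them.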

Start Here

AI tools are getting smarter every year, but they only work well when they receive the right information at the right time. This guide explains how that works -- from the basics of telling an AI what to do, all the way up to designing entire systems around AI models.

Think of AI like a brilliant new employee on their first day. Prompt engineering is giving them a single task. Context engineering is giving them all the background information they need to do the task well. Harness engineering is designing their entire work environment -- their desk, their tools, their filing system, their team structure -- so they can do their best work consistently. This guide covers all three, and shows how they connect.

If you are new to this topic, start with the Glossary for definitions of key terms. If you build AI applications, jump straight into the chapters below. If you just want the big picture, look at the Ecosystem Map diagram further down this page.

Which Path Should You Take?

Not sure where to start? Pick the description that fits you best:

- "I just want to understand what all these AI buzzwords mean." -- Start with the Glossary, then read Chapter 1: The Three Generations.

- "I'm building an AI application." -- Read Ch02: RAG, Long Context & Knowledge Graphs, then Ch03: Context Engineering, then Ch04: Harness Engineering.

- "I want to make my AI tools work better." -- Read Ch05: Skill Systems, then Ch06: Agent Memory, then Ch10: Case Study.

- "I want to see real examples." -- Jump straight to Ch10: Case Study.

- "I work with Chinese AI tools." -- Start with Ch09: The Chinese AI Ecosystem.

- "I want the complete picture." -- Read front to back, starting with Chapter 1.

Use Cases

This guide helps you design systems for these real-world scenarios. Each row links to the chapters that matter most for that build:

| Scenario | What You're Building | Core Chapters |
|---|---|---|
| Personal Second Brain | Personal notes + papers + web clippings searchable via natural-language queries | Ch02 · Ch05 · Ch08 |
| Internal Company Knowledge Base | Employees query policy / handbooks / runbooks; low tolerance for hallucination, citations required | Ch02 · Ch04 · Ch06 |
| Developer Documentation Assistant | Engineers query codebases / API docs / past incident postmortems across multi-repo environments | Ch02 · Ch05 · Ch07 |
| Support / QA Agent | Customer or internal tickets → context-aware replies with cited sources and follow-up memory | Ch03 · Ch06 · Ch04 |
| Domain-Specific Knowledge Automation (legal, healthcare, finance, engineering) | Reuse decades of domain documents: regulated, IP-sensitive, often requires local models and audit trails | Ch02 · Ch09 · Ch12 |

If your scenario doesn't fit cleanly, it's probably a composition of these — start from the closest row and adapt.

The Evolution

| | 2022-2024 | 2025 | 2026 |
|---|---|---|---|
| Generation | PROMPT ENG | CONTEXT ENG | HARNESS ENG |
| Associated with | | Karpathy | Böckeler, OpenAI |
| Core idea | "Craft the perfect prompt" | "Construct the dynamic context window" | "Orchestrate the entire system around the model" |

Each generation does not replace the last -- it contains it. Harness engineering includes context engineering, which includes prompt engineering.
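The containment relationship can be shown as three nested functions. This is a toy sketch, not a reference design: `build_prompt`, `build_context`, `run_harness`, and the stand-in `fake_model` are hypothetical names chosen for illustration, and the "harness" here is reduced to a single retry loop.

```python
from typing import Callable


def build_prompt(task: str) -> str:
    # Generation 1, prompt engineering: word a single instruction well.
    return f"Task: {task}\nAnswer concisely."


def build_context(task: str, documents: list[str]) -> str:
    # Generation 2, context engineering: wrap the prompt with background.
    background = "\n".join(f"- {d}" for d in documents)
    return f"Background:\n{background}\n\n{build_prompt(task)}"


def run_harness(task: str, documents: list[str],
                model: Callable[[str], str], max_attempts: int = 2) -> str:
    # Generation 3, harness engineering: wrap context assembly with control
    # flow around the model call (here, only a retry on empty replies).
    context = build_context(task, documents)
    for _ in range(max_attempts):
        reply = model(context)
        if reply.strip():
            return reply
    raise RuntimeError("model returned no usable reply")


calls: list[str] = []


def fake_model(context: str) -> str:
    # Stand-in for a real model call: fails once, then answers.
    calls.append(context)
    return "" if len(calls) == 1 else "30 days"


answer = run_harness("How long is the refund window?",
                     ["Refunds are allowed within 30 days."], fake_model)
print(answer)
```

The harness calls the context builder, which calls the prompt builder, so the innermost layer is still present in every request. A real harness would add tools, memory, and evaluation around the same core.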

The Lifecycle

The Ecosystem Map shows what the pieces are. The Lifecycle shows how data moves through them:

INGEST → PROCESS → STORE → QUERY → IMPROVE, with a feedback loop from IMPROVE back to INGEST.

| Stage | Examples | Chapters |
|---|---|---|
| INGEST | Docs, APIs, web clips, crawlers | Ch02 |
| PROCESS | Chunking, embeddings, cleaning, multi-modal | Ch02 · Ch03 |
| STORE | Vector DB, graph DB, cache, long doc | Ch02-08 |
| QUERY | RAG, GraphRAG, agents, tool use | Ch02-07 |
| IMPROVE | Evals, feedback, fine-tune, skill updates | Ch06 |

flowchart LR

I[INGEST<br/>Docs · APIs · Webscrape] --> P[PROCESS<br/>Chunking · Embeddings · Cleaning]

P --> S[STORE<br/>Vector DB · Graph DB · Cache]

S --> Q[QUERY<br/>RAG · GraphRAG · Agents]

Q --> M[IMPROVE<br/>Evals · Feedback · Fine-tune]

M -. feedback .-> I

Every production system moves data through all five stages — even if some are implicit. A good harness design makes each stage inspectable and replaceable. Ch02 covers Ingest/Process/Store; Ch03–Ch07 cover Query; Ch06 and Ch10 cover Improve.
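One way to read "inspectable and replaceable" is that each stage is a plain function with a name, and the runner records what every stage produced. The sketch below assumes exactly that. All function names are hypothetical, and the keyword-overlap index is a toy stand-in for a vector or graph database.

```python
from typing import Any, Callable

Stage = Callable[[Any], Any]


def ingest(raw: list[str]) -> list[str]:
    # INGEST: normalize whitespace and drop empty documents.
    return [doc.strip() for doc in raw if doc.strip()]


def process(docs: list[str]) -> list[str]:
    # PROCESS: naive chunking, one chunk per sentence.
    return [s.strip() for doc in docs for s in doc.split(".") if s.strip()]


def store(chunks: list[str]) -> list[tuple[set[str], str]]:
    # STORE: toy keyword index (set of lowercase words, original chunk).
    return [(set(c.lower().split()), c) for c in chunks]


def make_query(question: str) -> Stage:
    # QUERY: return the chunk sharing the most words with the question.
    words = set(question.lower().split())

    def query(index: list[tuple[set[str], str]]) -> str:
        return max(index, key=lambda entry: len(entry[0] & words))[1]

    return query


def improve(answer: str) -> dict:
    # IMPROVE: placeholder eval; a real system would feed this back to ingest.
    return {"answer": answer, "non_empty": bool(answer)}


def run_pipeline(stages: list[tuple[str, Stage]], data: Any):
    trace = []  # inspectable: the output of every stage is recorded
    for name, stage in stages:
        data = stage(data)
        trace.append((name, data))
    return data, trace


stages = [
    ("ingest", ingest),
    ("process", process),
    ("store", store),
    ("query", make_query("how long do refunds take")),
    ("improve", improve),
]
result, trace = run_pipeline(stages, ["Refunds take 30 days. Shipping takes 5 days. ", "  "])
print(result)
```

Because the stages are entries in a list, swapping the toy `store` for a real index, or inserting an extra cleaning step, only changes that list and leaves the runner untouched.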

Ecosystem Map

+---------------------------+ +---------------------------+ +---------------------------+

| KNOWLEDGE SOURCES | | CONTEXT ENGINEERING | | HARNESS ENGINEERING |

| | | | | |

| +---------------------+ | --> | +---------------------+ | --> | +---------------------+ |

| | RAG Pipelines | | | | Dynamic Context | | | | Skill Systems | |

| | - Self-RAG | | | | Assembly | | | | - Routing Logic | |

| | - Corrective RAG | | | | | | | | - Progressive | |

| | - Adaptive RAG | | | | KV-Cache | | | | Disclosure | |

| +---------------------+ | | | Optimization | | | +---------------------+ |

| | | | | | | |

| +---------------------+ | | | System Prompts | | | +---------------------+ |

| | Knowledge Graphs | | | | + Instructions | | | | Memory Frameworks | |

| | - GraphRAG | | | | | | | | - Short-term | |

| | - Entity Relations | | | | Tool Definitions | | | | - Long-term | |

| | - Multi-hop Queries | | | | + Schemas | | | | - Episodic | |

| +---------------------+ | | | | | | +---------------------+ |

| | | | Few-shot Examples | | | |

| +---------------------+ | | | | | | +---------------------+ |

| | Long Context | | | | Conversation | | | | MCP / Tool Layer | |

| | - 1M+ token windows | | | | History | | | | - Protocol Std | |

| | - Static doc ingest | | | +---------------------+ | | | - Tool Routing | |

| +---------------------+ | +---------------------------+ | | - Auth + Sandboxing | |

+---------------------------+ | +---------------------+ |

| |

| +---------------------+ |

| | Agent Runtime | |

| | - Planning Loops | |

| | - Error Recovery | |

| | - Multi-agent | |

| | Coordination | |

| +---------------------+ |

+---------------------------+

graph LR

subgraph Sources["Knowledge Sources"]

RAG[RAG Pipelines]

KG[Knowledge Graphs]

LC[Long Context Windows]

end

subgraph Context["Context Engineering"]

DCA[Dynamic Context Assembly]

KVC[KV-Cache Optimization]

SP[System Prompts + Instructions]

TD[Tool Definitions + Schemas]

FSE[Few-shot Examples]

CH[Conversation History]

end

subgraph Harness["Harness Engineering"]

SK[Skill Systems + Routing]

MEM[Memory Frameworks]

MCP[MCP / Tool Layer]

AR[Agent Runtime + Planning]

PD[Progressive Disclosure]

end

Sources --> Context --> Harness

Table of Contents

Chapters

| # | Chapter | Description |
|---|---|---|
| 01 | The Three Generations | From prompt engineering to context engineering to harness engineering |
| 02 | RAG, Long Context & Knowledge Graphs | The knowledge retrieval layer -- what works, what doesn't, and why hybrid wins |
| 03 | Context Engineering | The art of filling the context window -- KV-cache, the 100:1 ratio, dynamic assembly |
| 04 | Harness Engineering | Building the OS around the model -- guides, sensors, and the 6x performance gap |
| 05 | Skill Systems & Skill Graphs | From flat files to traversable graphs -- progressive disclosure in practice |
| 06 | Agent Memory | The missing layer -- episodic, semantic, and procedural memory architectures |
| 07 | MCP: The Standard That Won | Model Context Protocol -- from launch to 97M+ monthly downloads |
| 08 | AI-Native Knowledge Management | Tools landscape -- Notion AI, Obsidian, Mem, and the AI-native gap |
| 09 | The Chinese AI Ecosystem | Dify, RAGFlow, DeepSeek, Kimi -- a parallel universe of innovation |
| 10 | Case Study: A Real-World Knowledge Harness | How one developer built a complete harness with 65% token reduction |
| 11 | Timeline | Key moments in LLM knowledge engineering, 2022-2026 |
| 12 | Local Models for Knowledge Engineering | Run your knowledge harness locally -- embedding, RAG, compilation, and the fine-tuning endgame |

Why This Guide Exists

The LLM ecosystem in 2026 has a fragmentation problem. Not a lack of information -- an excess of disconnected information.

There are massive surveys on RAG. Comprehensive prompt engineering guides. MCP specification documents. Agent framework comparisons. Memory system papers. Each one is excellent in isolation. None of them shows you how the pieces fit together.

This guide is that missing layer. It connects RAG to context engineering, context engineering to harness engineering, harness engineering to agent runtimes -- and shows you the decisions that matter at each boundary.

Contributing

Contributions are welcome! This list is community-maintained.

- Add a resource: Submit a Pull Request -- see CONTRIBUTING.md for guidelines

- Suggest a resource: Open an Issue

- Report a broken link: Open an Issue

- Discuss: Join the Discussion

- Translations: Translation PRs go in /translations/. Maintain the same file structure. Available translations: 繁體中文 | 简体中文 | 日本語 | 한국어 | Español

Please keep the tone professional but accessible. Cite sources. No hype.

License

MIT License. See LICENSE for details.

Use this however you want. Attribution appreciated but not required.

Last updated: May 2026
