agent-zero-to-hero

Health: Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 12 GitHub stars

Code: Failed

- eval() — Dynamic code execution via eval() in chapters/ch00_welcome.py

- eval() — Dynamic code execution via eval() in chapters/ch04_one_tool.py

Permissions: Passed

- Permissions — No dangerous permissions requested

This project is a 7-week educational course and code repository designed to teach developers how to build an AI coding agent harness from scratch using Python, without relying on external frameworks.

Security Assessment

The overall risk is Low, though developers should be aware of a few nuances. The rule-based scan flagged the use of `eval()` for dynamic code execution in two chapter files (`ch00_welcome.py` and `ch04_one_tool.py`). While `eval()` is generally discouraged in production due to security risks, its presence here is almost certainly for demonstration purposes to teach how agents dynamically process and execute code. The tool does not request dangerous system permissions, and no hardcoded secrets or API keys were found. However, running the actual agent requires you to manually provide your own Anthropic API key via an environment variable, which the tool will use to make external network requests to the LLM provider.
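For readers who want a calculator-style demo without `eval()`, one option is to parse the expression and allow only arithmetic nodes. The sketch below is not taken from the repository; `safe_eval` and its operator whitelist are hypothetical names chosen for illustration.

```python
import ast
import operator

# Whitelisted operators; every other syntax node is rejected.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY_OPS = {ast.USub: operator.neg, ast.UAdd: operator.pos}


def safe_eval(expression: str) -> float:
    """Evaluate a plain arithmetic expression without calling eval()."""

    def walk(node: ast.AST) -> float:
        if isinstance(node, ast.Expression):
            return walk(node.body)
        # type(...) check excludes bool, which is a subclass of int.
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
            return _BIN_OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
            return _UNARY_OPS[type(node.op)](walk(node.operand))
        raise ValueError(f"unsupported expression: {ast.dump(node)}")

    return walk(ast.parse(expression, mode="eval"))


print(safe_eval("17 * 23"))  # 391
```

Names, attribute access, and function calls all hit the `ValueError` branch, so input such as `__import__('os')` is refused rather than executed.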

Quality Assessment

The project exhibits strong overall health and maintenance. It is protected by the highly permissive MIT license, making it completely free for personal and commercial use. The repository is highly active, with its latest code push occurring today. It features 42 unit tests that run rapidly without requiring an API key, demonstrating a solid commitment to code reliability and a developer-friendly experience. While the repository currently has 12 GitHub stars—indicating it is a relatively new or niche project rather than a heavily battle-tested community standard—the documentation is exceptionally clear, and the educational structure is highly organized.

Verdict

Use with caution: The educational content is safe, well-documented, and MIT-licensed, but treat the example code as a learning tool rather than a drop-in solution for production environments.

Build a Claude-Code-shaped agent harness from scratch. 7-week course, 19 chapters, ~4500 lines of Python, 42 tests, 3 LLM providers, no frameworks.

Build Claude Code in 4,500 lines of Python.

19 chapters · the entire agent loop in 6 lines · 3 providers · 42 tests pass without an API key · $0.50 speedrun · zero frameworks.

📺 75-second narrated walkthrough: assets/explainer.mp4 (click the GIF or open directly).

⚡ Run it in 30 seconds (no API key)

    git clone https://github.com/KeWang0622/agent-zero-to-hero.git
    cd agent-zero-to-hero && pip install -e .
    pytest tests/    # 42 passed in 0.6s

To run the actual agent:

    export ANTHROPIC_API_KEY=sk-ant-...
    python -m chapters.ch00_welcome "what is 17 * 23?"      # your first agent
    python agent.py "build me Tetris in one HTML file"      # the climax
    python microsite/build_site.py "Brooklyn ramen shop"    # the capstone

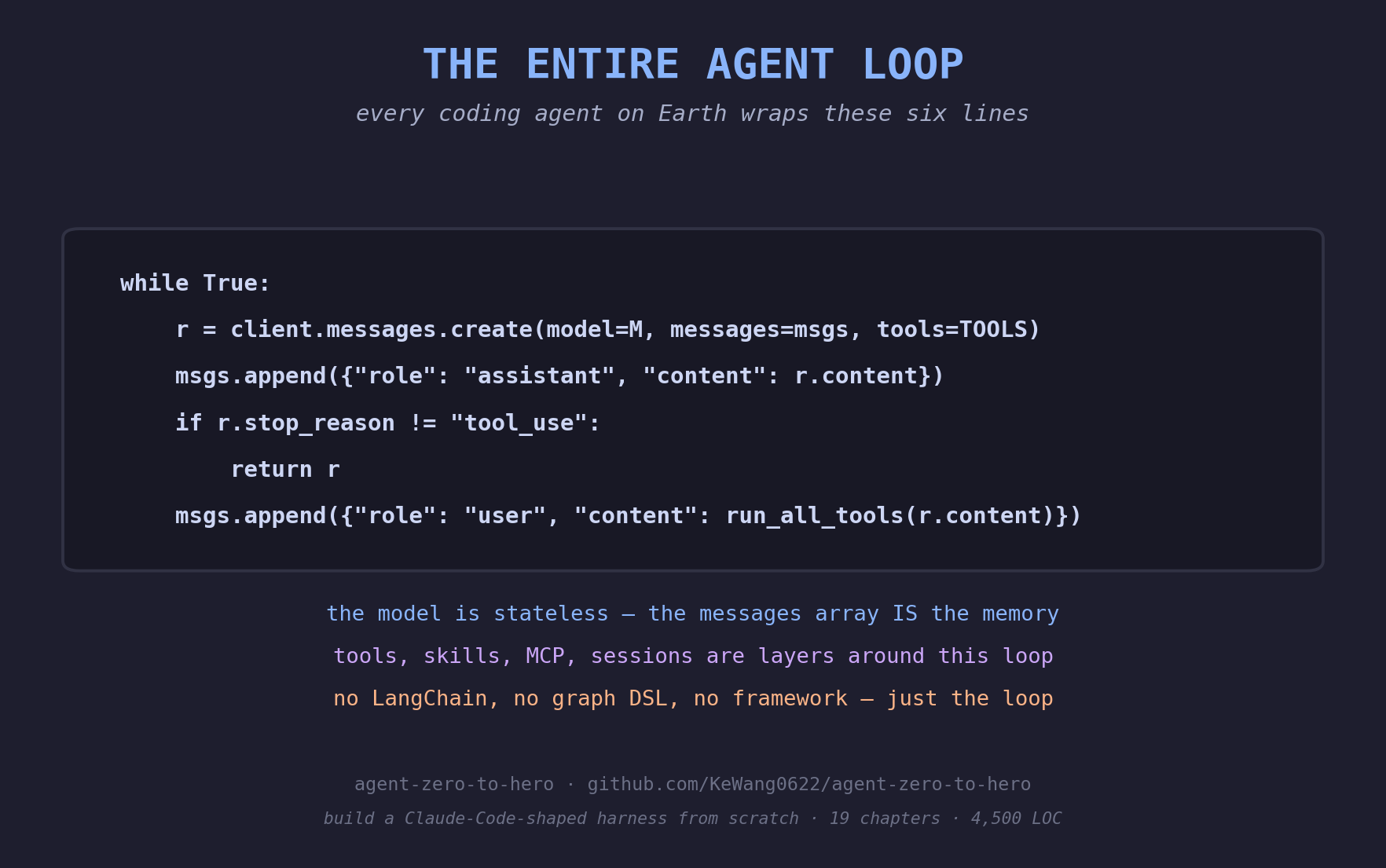

🎯 The entire agent loop is 6 lines

    while True:
        r = client.messages.create(model=M, messages=msgs, tools=TOOLS)
        msgs.append({"role": "assistant", "content": r.content})
        if r.stop_reason != "tool_use":
            return r
        msgs.append({"role": "user", "content": run_all_tools(r.content)})

That's Claude Code. That's Cursor. That's Devin. Every coding agent on Earth wraps these six lines. By the end of chapter 5 you'll write this from memory.
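To watch the loop run without an API key, it can be wrapped in a function and driven by canned responses. This is a sketch under stated assumptions, not the course's code: `ScriptedClient`, `block`, the `multiply` tool, and the name-to-callable `TOOLS` registry are all hypothetical stand-ins. A real SDK call would also need parameters such as `max_tokens` and full tool schemas.

```python
from types import SimpleNamespace


def block(**fields):
    """Build a content block with attribute access, like an SDK object."""
    return SimpleNamespace(**fields)


class ScriptedClient:
    """Hypothetical stand-in for an LLM client: replays canned responses."""

    def __init__(self, responses):
        self._responses = iter(responses)
        self.messages = SimpleNamespace(create=self._create)

    def _create(self, model, messages, tools):
        return next(self._responses)


def multiply(a, b):
    return a * b


TOOLS = {"multiply": multiply}  # illustrative registry: tool name -> callable


def run_all_tools(content):
    """Run every tool_use block and return the matching tool_result blocks."""
    results = []
    for b in content:
        if b.type == "tool_use":
            output = TOOLS[b.name](**b.input)
            results.append(
                {"type": "tool_result", "tool_use_id": b.id, "content": str(output)}
            )
    return results


def agent(client, prompt, model="placeholder-model"):
    msgs = [{"role": "user", "content": prompt}]
    while True:
        # A real call would pass tool schemas; names are enough for the fake client.
        r = client.messages.create(model=model, messages=msgs, tools=list(TOOLS))
        msgs.append({"role": "assistant", "content": r.content})
        if r.stop_reason != "tool_use":
            return r, msgs
        msgs.append({"role": "user", "content": run_all_tools(r.content)})


script = [
    SimpleNamespace(
        stop_reason="tool_use",
        content=[block(type="tool_use", id="t1", name="multiply", input={"a": 17, "b": 23})],
    ),
    SimpleNamespace(stop_reason="end_turn", content=[block(type="text", text="17 * 23 = 391")]),
]
final, history = agent(ScriptedClient(script), "what is 17 * 23?")
print(final.content[0].text)  # 17 * 23 = 391
print(len(history))           # 4
```

The four history entries are the user prompt, the assistant's tool request, the tool result sent back as a user message, and the final assistant answer.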

    flowchart LR
        User([👤 user prompt]) --> Msgs[/messages array<br/><i>ch02 · the only memory</i>/]
        Msgs --> Model[the model<br/><i>claude · openai · gemini<br/>ch01 · ch17</i>]
        Tools[🔧 tools<br/><i>ch04-06</i>] -.-> Model
        Skills[📜 skills<br/><i>ch12</i>] -.-> Model
        MCP[🔌 MCP servers<br/><i>ch13-14</i>] -.-> Model
        Model --> Stop{stop_reason?<br/><i>ch03</i>}
        Stop -- end_turn --> Answer([💬 final answer<br/><i>saved to session.jsonl · ch09</i>])
        Stop -- tool_use --> Run[run all tools<br/>append tool_results]
        Run --> Msgs
        style Msgs fill:#1e1e2e,stroke:#89b4fa,color:#89b4fa
        style Model fill:#1e1e2e,stroke:#cba6f7,color:#cba6f7
        style Stop fill:#1e1e2e,stroke:#f9e2af,color:#f9e2af
        style Answer fill:#1e1e2e,stroke:#94e2d5,color:#94e2d5
        style Run fill:#1e1e2e,stroke:#f9e2af,color:#f9e2af
        style Tools fill:#1e1e2e,stroke:#a6e3a1,color:#a6e3a1
        style Skills fill:#1e1e2e,stroke:#fab387,color:#fab387
        style MCP fill:#1e1e2e,stroke:#f5c2e7,color:#f5c2e7
        style User fill:#313244,stroke:#cdd6f4,color:#cdd6f4

The model is stateless. The messages array is the only memory. Tools, skills, sessions, MCP — they're how the harness extends the model. They're not the agent. The loop is.
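One way to see why the messages array is the only memory is to stand in for the API with a function that can answer only from the list it is handed. `fake_model` and `send` below are hypothetical illustrations, not a real client.

```python
def fake_model(messages):
    """Stateless stand-in for the API: it sees only the list it is given."""
    prefix = "My name is "
    for m in messages:
        text = m["content"]
        if m["role"] == "user" and text.startswith(prefix):
            return "Your name is " + text[len(prefix):].rstrip(".") + "."
    return "I don't know your name."


def send(history, text):
    """Append the user turn, call the model with the FULL history, record the reply."""
    history.append({"role": "user", "content": text})
    reply = fake_model(history)
    history.append({"role": "assistant", "content": reply})
    return reply


history = []
send(history, "My name is Ada.")
print(send(history, "What is my name?"))                              # Your name is Ada.
print(fake_model([{"role": "user", "content": "What is my name?"}]))  # I don't know your name.
```

The second call succeeds only because the earlier turn was resent; the same question with a fresh list gets no memory at all.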

👀 Who this is for

You'll get the most out of it if you:

- Can write basic Python (loops, dicts, functions). No advanced async, types, or web frameworks needed.

- Have used a coding agent (Claude Code, Cursor, Devin) and wonder what's actually happening inside.

- Want to read the source of a real agent harness and recognize every primitive by name.

Not for you if you want a plug-and-play framework. Use LangGraph or smolagents.

📑 The 19 chapters

Each chapter is one Python file + a matching learning page (chapters/chNN_topic.py + .md). Read the .md, run the .py, do the homework.

| # | Chapter | What you'll learn |
|---|---|---|
| 00 | welcome | A complete working agent in 30 lines. The whole shape, in 5 minutes. |
| 01 | raw_call | One HTTP POST. The Messages API. No SDK. |
| 02 | messages_array | The API is stateless. The messages array IS the memory. |
| 03 | stop_reasons | The seven ways out of the loop. Handle each correctly. |
| 04 | one_tool | The tool_use → tool_result protocol. One round-trip. |
| 05 | the_loop | THE LOOP. Six lines. Decomposition, ReAct, planning. The pivot chapter. |
| 06 | parallel_tools | Multiple tool_use blocks in one turn. The single-user-message rule. |
| 07 | errors | Tool errors as content. is_error: true. Refusals. |
| 08 | system_prompts | What goes in system vs messages. Persona that survives compaction. |
| 08b | observability | The dollar ticker. response.usage × prices = no bill shock. |
| 08c | prompt_caching | The 5× cost lever. Per-model thresholds, breakpoints, TTL, foot-guns. |
| 09 | sessions | JSONL on disk. Resume after Ctrl-C. |
| 10 | compaction | The chapter that pays for itself. Surgery, not GC. |
| 11 | subagents | Context isolation as a feature. 10× cheaper. |
| 12 | skills | Markdown loaded on demand. Progressive disclosure. |
| 13 | mcp_wire | MCP demystified: JSON-RPC over stdio with three method calls. |
| 14 | mcp_agent | Wire your own MCP server into the agent loop. |
| 15 | streaming_text | SSE basics. Render text deltas as they arrive. |
| 16 | streaming_tools | input_json_delta accumulation. The hard chapter. |
| 17 | multi_provider | Same loop, three wires (Anthropic / OpenAI / Gemini). |
| ★ | agent.py | The climax. ~840-line Claude-Code-shaped CLI built from chapter primitives. |
| ★ | microsite/ | The capstone. Build a working website from one prompt. |

Every chapter ends with Summary, Homework, and References (papers + docs + reference repos).
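Two rows of the chapter table lend themselves to a short illustration: answering several `tool_use` blocks with a single user message (chapter 06) and reporting failures as content with `is_error: true` (chapter 07). The sketch below is an assumption about how that might look, using plain dicts for the blocks; `TOOL_REGISTRY`, `divide`, and `tool_results_message` are hypothetical names, not the course's code.

```python
def divide(a, b):
    return a / b


TOOL_REGISTRY = {"divide": divide}  # hypothetical registry: tool name -> callable


def tool_results_message(tool_use_blocks):
    """Answer every tool_use block from one assistant turn in ONE user message."""
    results = []
    for block in tool_use_blocks:
        result = {"type": "tool_result", "tool_use_id": block["id"]}
        try:
            result["content"] = str(TOOL_REGISTRY[block["name"]](**block["input"]))
        except Exception as exc:
            # Report the failure to the model as content instead of crashing the loop.
            result["content"] = f"{type(exc).__name__}: {exc}"
            result["is_error"] = True
        results.append(result)
    return {"role": "user", "content": results}


turn = [
    {"type": "tool_use", "id": "t1", "name": "divide", "input": {"a": 6, "b": 3}},
    {"type": "tool_use", "id": "t2", "name": "divide", "input": {"a": 1, "b": 0}},
]
message = tool_results_message(turn)
for item in message["content"]:
    print(item)
```

Catching the exception keeps the loop alive and gives the model a chance to retry with different arguments; an unknown tool name is reported the same way, as a `KeyError`.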

🗺️ The 7-week journey

| Week | Theme | Chapters |
|---|---|---|
| Week 1 | Foundations. From one HTTP call to the agent loop. | 00 · 01 · 02 · 03 · 04 · 05 |
| Week 2 | Tool engineering. Parallel calls, errors, system prompts. | 06 · 07 · 08 |
| Week 3 | Cost & observability. The dollar ticker. The 5× cache lever. Compaction. | 08b · 08c · 10 |
| Week 4 | Persistence & scale. Sessions on disk. Subagents. | 09 · 11 |
| Week 5 | Skills & MCP. Markdown loaded on demand. Three JSON-RPC calls. | 12 · 13 · 14 |
| Week 6 | Engineering polish. Streaming. Three providers, one loop. | 15 · 16 · 17 |
| Week 7 | Capstone. Read agent.py. Run microsite/. Build something. | agent.py · microsite |

Bold chapters are load-bearing concepts — read them twice.

Full schedule with problem sets, labs, and the final exam: SYLLABUS.md.

📅 How to take it

| | Pace | Time / week | Total |
|---|---|---|---|
| 🎓 Full course | One week per module + capstone | ~3-4 hrs | ~25 hrs |
| ⚡ Speedrun | Skip homework, run speedrun.sh | n/a | ~5 hrs |
| 🛠️ Reference | Read agent.py cover-to-cover, dip into chapters as needed | n/a | ~2 hrs |

API spend: about $0.50 for the speedrun, $5–$10 for the full course (the capstone is the most expensive turn). You can verify the install without an API key — pytest tests/ runs against mocked LLMs and a real MCP subprocess.
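The "dollar ticker" idea from chapter 08b (usage × prices) can be sketched in a few lines. The per-million-token prices below are illustrative placeholders, not quoted rates; substitute your provider's current pricing. The field names follow the `usage` object of the Anthropic Messages API, represented here as plain dicts, and `turn_cost` is a hypothetical helper.

```python
# Placeholder prices in USD per million tokens. NOT real rates.
PRICES = {
    "input_tokens": 3.00,
    "output_tokens": 15.00,
    "cache_creation_input_tokens": 3.75,
    "cache_read_input_tokens": 0.30,
}


def turn_cost(usage: dict) -> float:
    """Dollar cost of one response: each token count times its per-token price."""
    return sum(usage.get(field, 0) * price / 1_000_000 for field, price in PRICES.items())


# Usage numbers are made up for the example.
session = [
    {"input_tokens": 1200, "output_tokens": 300},
    {"input_tokens": 200, "output_tokens": 450, "cache_read_input_tokens": 1200},
]
total = 0.0
for usage in session:
    total += turn_cost(usage)
    print(f"running total: ${total:.4f}")
```

Printing the running total after every turn is what makes a runaway loop visible before the bill arrives.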

🐢 Quotable mottos

| Chapter | Motto |
|---|---|
| 02 messages | "The messages array IS the memory. There is no other memory." |
| 05 the_loop | "An agent loop is just while True of one talking to the other." |
| 08c caching | "It's not a feature. It's a placement problem." |
| 10 compaction | "Surgery, not GC. Replace the older half with one synthetic message." |
| 11 subagents | "Context isolation as a feature. 10× cheaper." |
| 13 mcp_wire | "Three method calls. JSON-RPC over stdio. That's all." |
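The compaction motto ("replace the older half with one synthetic message") might look roughly like the sketch below. It is an assumption, not the chapter's implementation: `summarize` is a stub for what would normally be a model call, and the sketch ignores the need to keep `tool_use` and `tool_result` pairs together.

```python
def summarize(messages):
    """Stub summarizer; a real harness would ask the model for this text."""
    return f"[summary of {len(messages)} earlier messages]"


def compact(messages):
    """Replace the older half of the history with one synthetic user message."""
    if len(messages) < 4:
        return list(messages)  # too short to be worth compacting
    cut = len(messages) // 2
    # Start the kept tail on an assistant turn so roles still alternate
    # after the synthetic user message.
    while cut < len(messages) and messages[cut]["role"] != "assistant":
        cut += 1
    if cut >= len(messages):
        return list(messages)
    older, recent = messages[:cut], messages[cut:]
    synthetic = {"role": "user", "content": summarize(older)}
    return [synthetic] + recent


history = [
    {"role": "user" if i % 2 == 0 else "assistant", "content": f"turn {i}"}
    for i in range(10)
]
compacted = compact(history)
print(len(history), "->", len(compacted))  # 10 -> 6
print(compacted[0]["content"])             # [summary of 5 earlier messages]
```

The recent half is kept verbatim, which is the "surgery" part: only the old context is collapsed, and nothing is dropped silently.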

Hi! I'm GuiGui 🐢 — the mascot for this course

The chapters are written in plain prose; I show up in the illustrations to keep the energy up. If you spot me with a graduation cap, you've reached week 7.

📂 Repo layout

    chapters/      19 numbered Python files + matching .md walkthroughs
    agent.py       the climax: Claude-Code-shaped CLI built from chapter primitives
    microsite/     capstone: build a website from one prompt
    skills/        example SKILL.md files (haiku-master, landing-page)
    mcp_servers/   example MCP servers (calculator)
    tests/         verify your install, no API key required
    docs/          ADAPTING.md (port to OpenAI/Gemini), FAQ.md
    SYLLABUS.md    7-week schedule with problem sets and exam
    AGENT.md       project context auto-loaded by agent.py
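Chapter 13 describes MCP as JSON-RPC over stdio with three method calls. As a rough picture of what a client might write to a server's stdin, the sketch below builds three newline-delimited requests. It assumes the three calls are the standard MCP methods `initialize`, `tools/list`, and `tools/call` (the text above does not name them); the `add` tool, its arguments, and the client name are hypothetical, and no server is launched.

```python
import itertools
import json

_ids = itertools.count(1)  # JSON-RPC request ids: 1, 2, 3, ...


def request(method, params=None):
    """Build one JSON-RPC 2.0 request as a single newline-terminated line."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message) + "\n"


handshake = request(
    "initialize",
    {
        "protocolVersion": "2024-11-05",
        "capabilities": {},
        "clientInfo": {"name": "demo-client", "version": "0.1"},
    },
)
list_tools = request("tools/list")
# Hypothetical tool name and arguments, for illustration only.
call_tool = request("tools/call", {"name": "add", "arguments": {"a": 17, "b": 23}})

for line in (handshake, list_tools, call_tool):
    print(line, end="")
```

In a real session each line would be written to the server subprocess and a response with the same `id` read back from its stdout. The MCP lifecycle also expects a `notifications/initialized` notification after the `initialize` response, which this sketch omits.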

🎓 For instructors

This course is MIT-licensed and built to be adopted. All chapters are runnable in 30 seconds. 25 students × 7 weeks ≈ $50 in total API spend. See SYLLABUS.md for problem sets, labs, and final exam. Open an issue if you adopt this for a class — we'll add your school here.

📤 Share-ready images

If you want to tweet about this course, assets/share/ has 8 PNGs designed for screenshot-friendly sharing — the 6-line loop poster, a side-by-side comparison vs LangChain / LangGraph / CrewAI / smolagents, and 6 motto cards in tweet-card aspect ratio. MIT-licensed; attribution appreciated, not required.


🙏 Acknowledgements

- @karpathy for the literary genre of educational repos (nanoGPT, nanochat, micrograd).

- Anthropic for shipping the cleanest tool-use protocol of any major LLM provider.

- Simon Willison — "Claude Skills are maybe a bigger deal than MCP" inspired chapter 12.

Written by Ke Wang — agent identity & memory at Pika. Previously: Samsung, Adobe (Marc Levoy's team). PhD in computational imaging.

License

MIT. See LICENSE.
