PyOmniTS

Health: Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 59 GitHub stars

Code: Passed

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions: Passed

- Permissions — No dangerous permissions requested

This is a researcher and agent-friendly Python framework designed for highly extensible time series analysis. It allows users to seamlessly train almost any model on any dataset without worrying about breaking existing code.

Security Assessment

Overall Risk: Low

The automated code scan of 12 files found no dangerous patterns, and the tool does not request any risky system permissions. Based on the repository details, there is no evidence of hardcoded secrets, unwanted shell command execution, or unauthorized access to sensitive local data. Standard network requests are limited to expected machine learning operations, such as downloading public datasets or interacting with Hugging Face spaces.

Quality Assessment

The project demonstrates strong quality indicators and excellent maintenance health, with its last code push occurring just today. It is officially backed by a legitimate academic pedigree, serving as the repository for papers accepted at major conferences like ICLR 2026 and ICML 2025. It is properly licensed under the permissive and standard MIT license, allowing for broad usage and modification. With nearly 60 GitHub stars, it shows early but solid community trust and engagement from the data science field.

Verdict

Safe to use.

🔬 A Researcher&Agent-Friendly Framework for Time Series Analysis. Train Any Model on Any Dataset!


📊 Time series analysis leaderboard is now available on our 🤗 Hugging Face space. Discover the performance of different models!

This is also the official repository for the following papers:

Learning Recursive Multi-Scale Representations for Irregular Multivariate Time Series Forecasting (ICLR 2026) [OpenReview] [arXiv]

@inproceedings{li_LearningRecursiveMultiScale_2026, author = {Li, Boyuan and Liu, Zhen and Luo, Yicheng and Ma, Qianli}, booktitle = {International Conference on Learning Representations}, title = {Learning Recursive Multi-Scale Representations for Irregular Multivariate Time Series Forecasting}, year = {2026} }

HyperIMTS: Hypergraph Neural Network for Irregular Multivariate Time Series Forecasting (ICML 2025) [poster] [OpenReview] [arXiv]

@inproceedings{li_HyperIMTSHypergraphNeural_2025, author = {Li, Boyuan and Luo, Yicheng and Liu, Zhen and Zheng, Junhao and Lv, Jianming and Ma, Qianli}, booktitle = {Forty-Second International Conference on Machine Learning}, title = {HyperIMTS: Hypergraph Neural Network for Irregular Multivariate Time Series Forecasting}, year = {2025} }

1. ✨ Highlighted Features

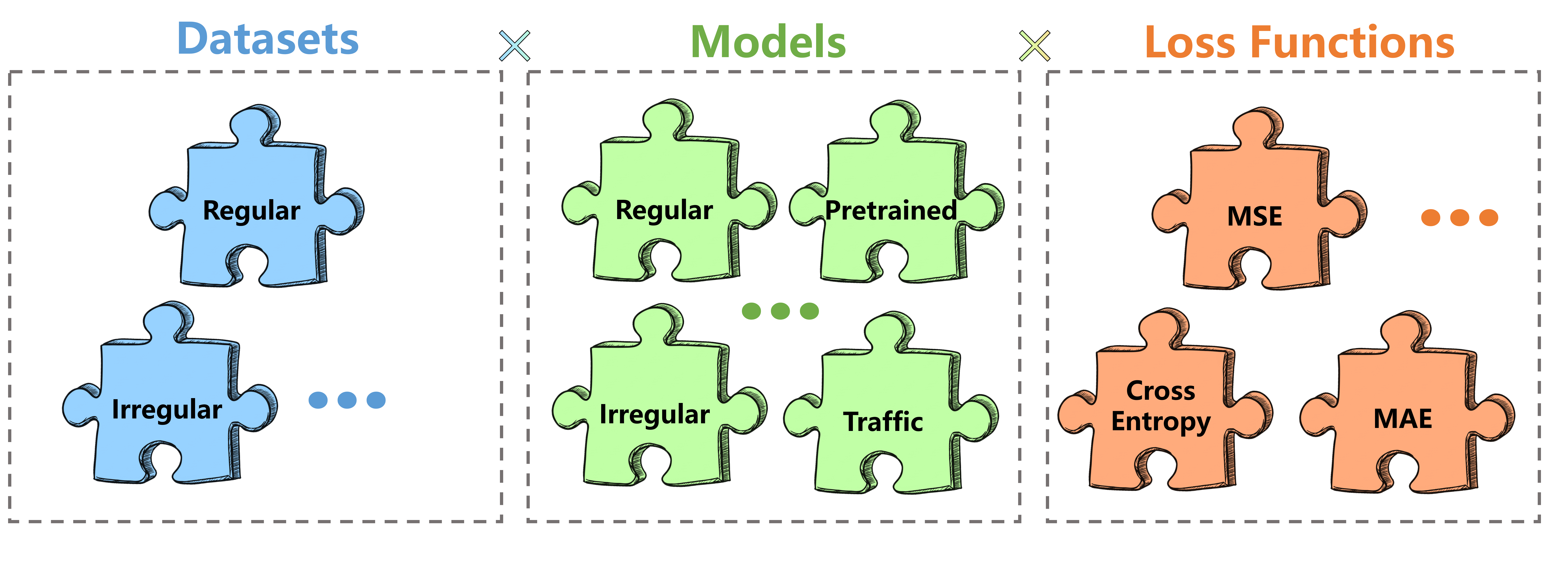

- Extensibility: Adapt your model/dataset once, train almost any combination of "model" $\times$ "dataset" $\times$ "loss function".

- Compatibility: Accept models with any number/type of arguments in `forward`; accept datasets with any number/type of return values in `__getitem__`; accept tailored loss calculation for specific models.

- Maintainability: No need to worry about breaking the training code of existing models/datasets/loss functions when adding new ones.

- Reproducibility: Minimal library dependencies for core components. We try our best to avoid fancy third-party libraries (e.g., PyTorch Lightning, EasyTorch).

- Efficiency: Multi-GPU parallel training; Python built-in logger; structured experimental result saving (json)...

- Transferability: Even if you don't like our framework, you can still easily find and copy the models/datasets you want. No overwhelming encapsulation.
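
One way to picture the compatibility idea above is a training step that passes each model only the batch fields its `forward` declares. The sketch below is an illustration under assumptions, not PyOmniTS's actual mechanism: `call_with_matching_args`, `RegularModel`, `IrregularModel`, and the dictionary keys are all hypothetical names, and plain Python lists stand in for tensors.

```python
import inspect


def call_with_matching_args(fn, available):
    """Call fn with only the entries of `available` that fn declares as parameters."""
    params = inspect.signature(fn).parameters
    # If fn accepts **kwargs, pass every field through unchanged.
    if any(p.kind is inspect.Parameter.VAR_KEYWORD for p in params.values()):
        return fn(**available)
    return fn(**{k: v for k, v in available.items() if k in params})


class RegularModel:
    # Hypothetical model that only needs the observed values.
    def forward(self, x):
        return {"pred": [v * 2 for v in x]}


class IrregularModel:
    # Hypothetical model that also needs timestamps and an observation mask.
    def forward(self, x, t, mask):
        return {"pred": [v + ti if m else 0.0 for v, ti, m in zip(x, t, mask)]}


# A dataset could return any set of named fields (keys here are assumptions).
batch = {
    "x": [1.0, 2.0, 3.0],
    "t": [0.5, 1.0, 1.5],
    "mask": [1, 0, 1],
    "y": [2.0, 4.0, 6.0],
}

# The same loop body serves both models, even though their signatures differ.
for model in (RegularModel(), IrregularModel()):
    out = call_with_matching_args(model.forward, batch)
    print(type(model).__name__, out["pred"])
```

With a convention like this, adding a model that needs an extra field would not require touching the loop, which is the property the Maintainability item describes.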

2. 🧭 Documentation

Check out the new documentation website.

Using 🦞agent? Check out our official PyOmniTS skill on clawhub. Your agent will understand the essentials of our framework, and even automate the code replication process by adapting other papers' codes into PyOmniTS!

3. 🤖 Models

51 models, covering regular, irregular, pretrained, and traffic models, have been included in PyOmniTS, and more are coming.

Model classes can be found in models/, and their dependencies can be found in layers/

- ✅: supported

- ❌: not supported

- '-': not implemented

- MTS: regularly sampled multivariate time series

- IMTS: able to handle irregularly sampled multivariate time series

| Model | Venue | Type | Forecasting | Classification | Imputation |

|---|---|---|---|---|---|

| Ada-MSHyper | NeurIPS 2024 | MTS | ✅ | ✅ | ✅ |

| APN | AAAI 2026 | IMTS | ✅ | - | - |

| Autoformer | NeurIPS 2021 | MTS | ✅ | ✅ | ✅ |

| Scaleformer | ICLR 2023 | MTS | ✅ | - | ✅ |

| BigST | VLDB 2024 | MTS | ✅ | ✅ | ✅ |

| Crossformer | ICLR 2023 | MTS | ✅ | ✅ | ✅ |

| CRU | ICML 2022 | IMTS | ✅ | ❌ | ✅ |

| DLinear | AAAI 2023 | MTS | ✅ | ✅ | ✅ |

| ETSformer | arXiv 2022 | MTS | ✅ | ✅ | ✅ |

| FEDformer | ICML 2022 | MTS | ✅ | ✅ | ✅ |

| FiLM | NeurIPS 2022 | MTS | ✅ | ✅ | ✅ |

| FourierGNN | NeurIPS 2023 | MTS | ✅ | ✅ | ✅ |

| FreTS | NeurIPS 2023 | MTS | ✅ | ✅ | ✅ |

| GNeuralFlow | NeurIPS 2024 | IMTS | ✅ | ❌ | ✅ |

| GraFITi | AAAI 2024 | IMTS | ✅ | ✅ | ✅ |

| GRU-D | Scientific Reports 2018 | IMTS | ✅ | ✅ | ✅ |

| HD-TTS | ICML 2024 | IMTS | ✅ | - | ✅ |

| Hi-Patch | ICML 2025 | IMTS | ✅ | ✅ | ✅ |

| higp | ICML 2024 | MTS | ✅ | ✅ | ✅ |

| HyperIMTS | ICML 2025 | IMTS | ✅ | - | ✅ |

| Informer | AAAI 2021 | MTS | ✅ | ✅ | ✅ |

| iTransformer | ICLR 2024 | MTS | ✅ | ✅ | ✅ |

| Koopa | NeurIPS 2023 | MTS | ✅ | ❌ | ✅ |

| Latent_ODE | NeurIPS 2019 | IMTS | ✅ | ❌ | ✅ |

| Leddam | ICML 2024 | MTS | ✅ | ✅ | ✅ |

| LightTS | arXiv 2022 | MTS | ✅ | ✅ | ✅ |

| Mamba | Language Modeling 2024 | MTS | ✅ | ✅ | ✅ |

| MICN | ICLR 2023 | MTS | ✅ | ✅ | ✅ |

| MOIRAI | ICML 2024 | Any | ✅ | - | ✅ |

| mTAN | ICLR 2021 | IMTS | ✅ | ✅ | ✅ |

| NeuralFlows | NeurIPS 2021 | IMTS | ✅ | ❌ | ✅ |

| NHITS | AAAI 2023 | MTS | ✅ | - | ✅ |

| Nonstationary Transformer | NeurIPS 2022 | MTS | ✅ | ✅ | ✅ |

| PatchTST | ICLR 2023 | MTS | ✅ | ✅ | ✅ |

| Pathformer | ICLR 2024 | MTS | ✅ | - | ✅ |

| PrimeNet | AAAI 2023 | IMTS | ✅ | ✅ | ✅ |

| Pyraformer | ICLR 2022 | MTS | ✅ | ✅ | ✅ |

| Raindrop | ICLR 2022 | IMTS | ✅ | ✅ | ✅ |

| Reformer | ICLR 2020 | MTS | ✅ | ✅ | ✅ |

| ReIMTS | ICLR 2026 | IMTS | ✅ | ✅ | - |

| SeFT | ICML 2020 | IMTS | ✅ | ✅ | ✅ |

| SegRNN | arXiv 2023 | MTS | ✅ | ✅ | ✅ |

| Temporal Fusion Transformer | arXiv 2019 | MTS | ✅ | - | - |

| TiDE | TMLR 2023 | MTS | ✅ | ✅ | ✅ |

| TimeCHEAT | AAAI 2025 | MTS | ✅ | ✅ | ✅ |

| TimeMixer | ICLR 2024 | MTS | ✅ | ✅ | ✅ |

| TimesNet | ICLR 2023 | MTS | ✅ | ✅ | ✅ |

| tPatchGNN | ICML 2024 | IMTS | ✅ | ✅ | ✅ |

| Transformer | NeurIPS 2017 | MTS | ✅ | ✅ | ✅ |

| TSMixer | TMLR 2023 | MTS | ✅ | ✅ | ✅ |

| Warpformer | KDD 2023 | IMTS | ✅ | ✅ | ✅ |

4. 💾 Datasets

Dataset classes are located in data/data_provider/datasets, and their dependencies can be found in data/dependencies.

11 datasets, covering regular and irregular ones, have been included in PyOmniTS, and more are coming.

- ✅: supported

- ❌: not supported

- '-': not implemented

- MTS: regularly sampled multivariate time series

- IMTS: irregularly sampled multivariate time series

| Dataset | Type | Field | Forecasting |

|---|---|---|---|

| ECL | MTS | electricity | ✅ |

| ETTh1 | MTS | electricity | ✅ |

| ETTm1 | MTS | electricity | ✅ |

| Human Activity | IMTS | biomechanics | ✅ |

| ILI | MTS | healthcare | ✅ |

| MIMIC III | IMTS | healthcare | ✅ |

| MIMIC IV | IMTS | healthcare | ✅ |

| PhysioNet'12 | IMTS | healthcare | ✅ |

| Traffic | MTS | traffic | ✅ |

| USHCN | IMTS | weather | ✅ |

| Weather | MTS | weather | ✅ |

Datasets for classification and imputation have not been released yet.

5. 📉 Loss Functions

The following loss functions are included under loss_fns/:

| Loss Function | Task | Note |

|---|---|---|

| CrossEntropyLoss | Classification | - |

| MAE | Forecasting/Imputation | - |

| ModelProvidedLoss | - | Some models prefer to calculate loss within forward(), such as GNeuralFlow. |

| MSE_Dual | Forecasting/Imputation | |

| MSE | Forecasting/Imputation | - |
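
To make the table above concrete, here is a minimal sketch of what MAE, MSE, and a model-provided loss could look like when every loss accepts named inputs. It is not the code under loss_fns/: the function names, the optional `mask` argument (an assumption, added so unobserved entries can be skipped), and the use of plain lists instead of tensors are all illustrative choices.

```python
def mae(pred, true, mask=None):
    """Mean absolute error over entries where mask is truthy (all entries if mask is None)."""
    mask = mask if mask is not None else [1] * len(pred)
    errors = [abs(p - t) for p, t, m in zip(pred, true, mask) if m]
    return sum(errors) / len(errors) if errors else 0.0


def mse(pred, true, mask=None):
    """Mean squared error over entries where mask is truthy (all entries if mask is None)."""
    mask = mask if mask is not None else [1] * len(pred)
    errors = [(p - t) ** 2 for p, t, m in zip(pred, true, mask) if m]
    return sum(errors) / len(errors) if errors else 0.0


def model_provided_loss(loss, **_ignored):
    """Pass through a loss value that the model already computed inside forward()."""
    return loss


# Hypothetical model outputs; the third entry is masked out as unobserved.
outputs = {"pred": [1.0, 2.0, 5.0], "true": [1.0, 4.0, 3.0], "mask": [1, 1, 0]}
print(mae(**outputs))  # 1.0
print(mse(**outputs))  # 2.0

# A model that computes its own loss simply returns it under a "loss" key.
print(model_provided_loss(**{"loss": 0.25, "pred": [1.0]}))  # 0.25
```

Because each function takes keyword arguments, a training loop could unpack a model's output dictionary into whichever loss is selected without special cases.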

6. 🚧 Roadmap

PyOmniTS is continuously evolving:

- More tutorials.

- Classification support in core components.

- Imputation support in core components.

- Optional Python package management via uv.

Yet Another Code Framework?

We encountered the following problems when using existing ones:

- Argument & return value chaos for models' `forward()`: Different models usually take varying numbers and shapes of arguments, especially ones from different domains. Changes to training logic are needed to support these differences.

- Return value chaos for datasets' `__getitem__()`: Datasets can return a number of tensors in different shapes, which have to be aligned with the arguments of models' `forward()` one by one. Changes to training logic are also needed to support these differences.

- Argument & return value chaos for loss functions' `forward()`: Loss functions take different types of tensors as input, which have to be aligned with the return values of models' `forward()`.

- Overwhelming dependencies: Some existing pipelines are built on fancy high-level packages, which can lower the flexibility of code modification.
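
The dataset-side problem above can be illustrated with two toy datasets whose samples carry different fields. If each `__getitem__` returns a dictionary, one generic collate function can batch both without dataset-specific branches. This is a sketch under assumptions: the class names, field names, and `collate` helper are hypothetical and are not taken from PyOmniTS, and lists stand in for tensors.

```python
class RegularToyDataset:
    # Hypothetical regularly sampled dataset: fixed-length sliding windows.
    def __init__(self, series, seq_len, pred_len):
        self.series, self.seq_len, self.pred_len = series, seq_len, pred_len

    def __len__(self):
        return len(self.series) - self.seq_len - self.pred_len + 1

    def __getitem__(self, i):
        split = i + self.seq_len
        return {"x": self.series[i:split], "y": self.series[split:split + self.pred_len]}


class IrregularToyDataset:
    # Hypothetical irregular dataset: (timestamps, values) pairs of varying length.
    def __init__(self, samples):
        self.samples = samples

    def __len__(self):
        return len(self.samples)

    def __getitem__(self, i):
        t, v = self.samples[i]
        # The last observation is the target; earlier ones are the input.
        return {"x": v[:-1], "t": t[:-1], "y": v[-1:], "t_pred": t[-1:]}


def collate(samples):
    """Group a list of dict samples into one dict of lists, key by key."""
    return {key: [s[key] for s in samples] for key in samples[0]}


def iterate_batches(dataset, batch_size):
    """Yield collated batches; works for any dataset whose samples are dicts."""
    for start in range(0, len(dataset), batch_size):
        stop = min(start + batch_size, len(dataset))
        yield collate([dataset[i] for i in range(start, stop)])


regular = RegularToyDataset([1, 2, 3, 4, 5], seq_len=2, pred_len=1)
irregular = IrregularToyDataset([([0.0, 0.4, 1.3], [5, 6, 7]), ([0.0, 2.0], [8, 9])])

for dataset in (regular, irregular):
    for batch in iterate_batches(dataset, batch_size=2):
        print(type(dataset).__name__, sorted(batch))
```

The batching code never inspects which dataset it is handling; only the set of keys in each batch differs.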

Contributors

Ladbaby 💻 🐛

Acknowledgement

- Time Series Library: Models and datasets for regularly sampled time series are mostly adapted from it.

- BasicTS: Documentation design reference.

- Google Gemini: Icon creation.
