SPEAR

Health: Passed

- License — License: Apache-2.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 34 GitHub stars

Code: Passed

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions: Passed

- Permissions — No dangerous permissions requested

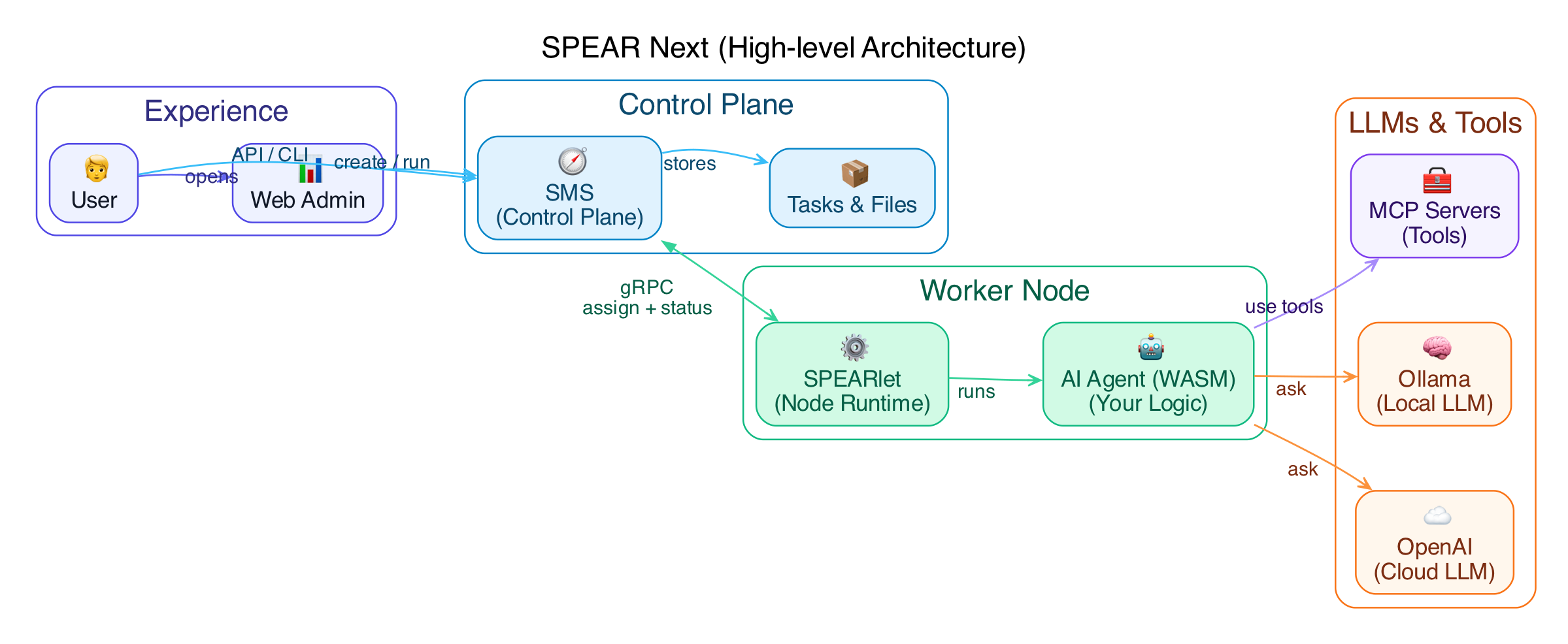

This tool is a distributed cloud-edge collaborative AI agent platform. It provides a metadata control-plane server (SMS) and a node-side runtime agent (SPEARlet) for managing and routing AI workloads, including integration with local LLMs like Ollama.

Security Assessment

Overall Risk: Low. The project is written in Rust, a memory-safe language that inherently prevents many common vulnerabilities. It acts as a networked service, exposing HTTP, gRPC, and Web Admin APIs, meaning it handles network requests by design. It does not appear to execute arbitrary local shell commands based on the scanned files. The documentation explicitly warns against putting secrets in configuration files, instead recommending the use of environment variables for API keys, which is a secure practice. No hardcoded secrets or dangerous permissions were found during the light code scan.

Quality Assessment

The project is healthy and actively maintained, with its most recent code push occurring today. It uses the permissive and standard Apache-2.0 license. Community trust is modest but positive, currently backed by 34 GitHub stars. It features solid developer documentation, a clear build process, and a well-structured configuration hierarchy.

Verdict

Safe to use.

Distributed Cloud-Edge Collaborative AI Agent Platform

SPEAR Next

SPEAR Next is the Rust/async implementation of SPEAR’s core services:

- SMS: the metadata/control-plane server.

- SPEARlet: the node-side agent/runtime.

Chinese README: README.zh.md

Repository layout

- src/apps/sms: SMS binary entrypoint

- src/apps/spearlet: SPEARlet binary entrypoint

- web-admin/: Web Admin frontend source

- assets/admin/: built Web Admin static assets embedded/served by SMS

- samples/wasm-c/: C-based WASM samples (WASI)

- docs/: design notes and usage guides

Architecture

Quick start

Prerequisites

- Rust toolchain (latest stable recommended)

This repo uses protoc-bin-vendored, so you typically don’t need to install protoc manually.

Build

make build

# release

make build-release

# build with Rust features (e.g. sled / rocksdb)

make FEATURES=sled build

# enable local microphone capture implementation (optional)

make FEATURES=mic-device build

# macOS shortcut (equivalent to FEATURES+=mic-device)

make mac-build

Run SMS

./target/debug/sms

# enable Web Admin

./target/debug/sms --enable-web-admin --web-admin-addr 127.0.0.1:8081

Useful endpoints:

- HTTP gateway: http://127.0.0.1:8080

- Swagger UI: http://127.0.0.1:8080/swagger-ui/

- OpenAPI spec: http://127.0.0.1:8080/api/openapi.json

- gRPC: 127.0.0.1:50051

- Web Admin (when enabled): http://127.0.0.1:8081/admin

Run SPEARlet

SPEARlet connects to SMS once you provide --sms-grpc-addr (then it auto-registers by default).

./target/debug/spearlet --sms-grpc-addr 127.0.0.1:50051

Configuration

Config file locations

- SMS: ~/.sms/config.toml (or --config <path>)

- SPEARlet: ~/.spear/config.toml (or --config <path>)

Repo-shipped examples:

- SMS: config/sms/config.toml

- SPEARlet: config/spearlet/config.toml

Priority

- CLI --config file

- Home config (~/.sms/config.toml or ~/.spear/config.toml)

- Environment variables (SMS_*, SPEARLET_*)

- Built-in defaults
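The lookup order above can be sketched as a first-match walk over the layers. This is a minimal illustration, assuming the list is ordered from highest to lowest priority; `Source`, `Layers`, and `resolve` are hypothetical names for this sketch and are not taken from the SPEAR codebase.

```rust
/// Which layer supplied the resolved value (hypothetical type).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Source {
    CliConfigFile,
    HomeConfig,
    Environment,
    BuiltInDefault,
}

/// Candidate values for a single setting, one slot per layer.
/// `None` means the layer does not set it.
struct Layers<'a> {
    cli_config_file: Option<&'a str>,
    home_config: Option<&'a str>,
    environment: Option<&'a str>,
    built_in_default: &'a str,
}

/// Walk the layers in the listed order and return the first value
/// that is set, together with the layer it came from.
fn resolve<'a>(layers: &Layers<'a>) -> (&'a str, Source) {
    let ordered = [
        (layers.cli_config_file, Source::CliConfigFile),
        (layers.home_config, Source::HomeConfig),
        (layers.environment, Source::Environment),
    ];
    for (value, source) in ordered {
        if let Some(v) = value {
            return (v, source);
        }
    }
    // Nothing set anywhere: fall back to the built-in default.
    (layers.built_in_default, Source::BuiltInDefault)
}
```

Returning the source alongside the value makes it easy to log where a setting came from when debugging an unexpected configuration.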

Secrets

Do not put secrets into config files. Use spearlet.llm.credentials[].api_key_env to reference environment variables and bind them from backends via credential_ref.

LLM backend notes:

- [[spearlet.llm.backends]] hosting is required and must be local or remote.

- credential_ref is optional. If set, the referenced env var must exist (otherwise the backend is filtered). If not set, the backend is treated as “no-auth” (useful for self-hosted proxies).
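The credential rules above can be sketched as a filter over the configured backends. This is an illustration only, not SPEARlet's implementation: the struct and function names are hypothetical, the `name` field on a credential is an assumed way for `credential_ref` to find its target, and dropping a backend whose `credential_ref` matches no credential is also an assumption.

```rust
use std::collections::HashMap;

/// How a usable backend authenticates (hypothetical type).
#[derive(Debug, Clone, PartialEq, Eq)]
enum Auth {
    NoAuth,
    ApiKey(String),
}

/// One credentials entry; `name` is an assumed lookup key.
struct Credential {
    name: String,
    api_key_env: String,
}

/// The subset of a backend entry that matters for this sketch.
struct BackendConfig {
    name: String,
    credential_ref: Option<String>,
}

/// Keep backends that need no credential, or whose referenced
/// environment variable is present according to `env`.
fn usable_backends<F>(
    backends: &[BackendConfig],
    credentials: &[Credential],
    env: F,
) -> Vec<(String, Auth)>
where
    F: Fn(&str) -> Option<String>,
{
    let by_name: HashMap<&str, &Credential> =
        credentials.iter().map(|c| (c.name.as_str(), c)).collect();
    backends
        .iter()
        .filter_map(|b| match &b.credential_ref {
            // No credential_ref: treated as "no-auth".
            None => Some((b.name.clone(), Auth::NoAuth)),
            Some(r) => {
                // Unknown reference or missing env var: filter the backend out.
                let cred = by_name.get(r.as_str())?;
                let key = env(cred.api_key_env.as_str())?;
                Some((b.name.clone(), Auth::ApiKey(key)))
            }
        })
        .collect()
}
```

Passing the environment lookup as a closure keeps the sketch testable; in a real process the caller could supply `|k| std::env::var(k).ok()`.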

Ollama discovery

SPEARlet can import models from a local Ollama on startup and materialize them as LLM backends.

- Docs: docs/ollama-discovery-en.md

Routing and debugging

- Route by model: if some backends are configured with model = "...", requests can be routed by setting only model (no explicit backend required).

- Observe the selected backend:
  - cchat_recv JSON includes a top-level _spear.backend / _spear.model.
  - Router emits a router selected backend debug log after selection.
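Model-based routing as described above might look roughly like the following. This is a hedged sketch with hypothetical types, not the actual router: an explicit backend name is assumed to take precedence, and the first backend whose model matches is assumed to win, which the source does not specify.

```rust
/// A configured backend; `model` is set only for model-pinned backends.
struct Backend {
    name: String,
    model: Option<String>,
}

/// The routing-relevant fields of a request (hypothetical shape).
struct Request<'a> {
    backend: Option<&'a str>,
    model: Option<&'a str>,
}

/// Pick a backend by explicit name if given, otherwise by model.
/// Returns `None` when nothing matches.
fn select_backend<'a>(backends: &'a [Backend], req: &Request<'_>) -> Option<&'a Backend> {
    if let Some(name) = req.backend {
        return backends.iter().find(|b| b.name == name);
    }
    let model = req.model?;
    backends
        .iter()
        .find(|b| b.model.as_deref() == Some(model))
}
```

With this shape, a request that sets only `model` resolves to a backend without the caller knowing backend names, which matches the behavior the bullet describes.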

Web Admin

Web Admin provides Nodes/Tasks/Files/AI Models pages.

- AI Models provides an aggregated view across nodes, split into Local/Remote.

- Local AI Models supports creating/deleting model deployments on a node.

Local model provisioning (llamacpp):

- model is just a display key; actual download uses params.model_url when the model file is missing.

- Supported params:
  - model_url: http/https URL to a .gguf file (large files are supported).
  - download_timeout_s: total download budget in seconds (default: 3600).
  - model_path: absolute path, or relative to spearlet.local_models_dir.
  - skip_download=1: fail if the model file is missing (no download).
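How these params interact can be sketched as a small decision function. This is an illustration under assumptions, not the provisioning code itself: `Plan`, `Params`, and `plan` are hypothetical names, `model_path` is treated as always present, and the error cases for a missing or non-http `model_url` are guesses at reasonable behavior.

```rust
use std::path::{Path, PathBuf};

/// What the provisioner would do next (hypothetical type).
#[derive(Debug, PartialEq, Eq)]
enum Plan {
    UseExisting(PathBuf),
    Download { url: String, dest: PathBuf, timeout_s: u64 },
    Fail(String),
}

/// The params listed above, already parsed.
struct Params<'a> {
    model_url: Option<&'a str>,
    download_timeout_s: Option<u64>,
    model_path: &'a str,
    skip_download: bool,
}

/// Absolute paths are used as-is; relative ones are joined onto
/// the local models directory.
fn resolve_model_path(local_models_dir: &Path, model_path: &str) -> PathBuf {
    let p = Path::new(model_path);
    if p.is_absolute() {
        p.to_path_buf()
    } else {
        local_models_dir.join(p)
    }
}

/// Decide between using the file, downloading it, or failing.
/// `file_exists` is passed in so the sketch stays free of I/O.
fn plan(local_models_dir: &Path, params: &Params<'_>, file_exists: bool) -> Plan {
    let dest = resolve_model_path(local_models_dir, params.model_path);
    if file_exists {
        return Plan::UseExisting(dest);
    }
    if params.skip_download {
        return Plan::Fail(format!("model file missing: {}", dest.display()));
    }
    match params.model_url {
        Some(url) if url.starts_with("http://") || url.starts_with("https://") => {
            Plan::Download {
                url: url.to_string(),
                dest,
                // Default budget of 3600 seconds, as documented above.
                timeout_s: params.download_timeout_s.unwrap_or(3600),
            }
        }
        Some(url) => Plan::Fail(format!("unsupported model_url: {url}")),
        None => Plan::Fail("model file missing and no model_url given".to_string()),
    }
}
```

A caller would compute `file_exists` from the resolved path before calling `plan`, then act on the returned variant.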

Docs:

- docs/web-admin-overview-en.md

- docs/web-admin-ui-guide-en.md

WASM samples

make samples

Artifacts are written to samples/build/ (C) and samples/build/js/ (WASM-JS, compat: samples/build/rust/).

Docs:

docs/samples-build-guide-en.md

Development

make help

make dev

make ci

UI tests (Playwright):

make test-ui

Documentation

docs/INDEX.md

License

Apache-2.0. See LICENSE.
