opensmith

Health: Warning

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Low visibility — Only 5 GitHub stars

Code: Passed

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions: Passed

- Permissions — No dangerous permissions requested

This tool provides a local-first, open-source alternative to LangSmith. It allows developers to trace, inspect, and debug Python LLM pipelines entirely on their local machine without relying on cloud services.

Security Assessment

Overall Risk: Low. The automated code scan reviewed 12 files and found no dangerous patterns, hardcoded secrets, or requests for elevated permissions. By design, the tool prioritizes data privacy, keeping all tracing data localized using SQLite rather than sending it over the internet. It functions as an observability wrapper and does not appear to execute unauthorized shell commands or exfiltrate data.

Quality Assessment

The project has a solid foundation for personal or early-stage use. It benefits from a clear MIT license and active maintenance, with repository updates pushed as recently as today. However, community trust and visibility are currently very low. With only 5 GitHub stars, the tool has not yet been widely tested or adopted by the broader developer community. This means potential edge cases might be undocumented, and community support will be limited.

Verdict

Safe to use, keeping in mind that it is a very young and low-visibility project.

The open-source, local-first alternative to LangSmith. No cloud. No setup.


opensmith is to LangSmith what Ollama is to OpenAI — the local-first, privacy-first alternative.

Why opensmith?

| | LangSmith | opensmith |
|---|---|---|
| Setup | Cloud account required | pip install opensmith |
| Data privacy | Sends traces to cloud | 100% local, SQLite only |
| Framework | Best with LangChain | Works with any Python code |
| Cost | Free tier, then paid | Free forever, open source |
| Offline | No | Yes |
| Docker required | No | No |
| Dashboard | Hosted | localhost:7823 |

Why opensmith

LangSmith is powerful, but it is built around cloud-hosted tracing and is most natural inside the LangChain ecosystem. opensmith is a local-first alternative: install it with pip, use it with any Python LLM pipeline, and inspect traces on your machine without accounts, hosted services, Docker, or configuration. No trace data leaves your machine.

Install

    pip install opensmith

Quickstart

Example 1: @trace decorator

    import openai
    from opensmith import trace

    @trace
    def call_llm(prompt: str):
        return openai.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "user", "content": prompt}],
        )

    @trace
    def my_pipeline(question: str):
        # search_docs is your own retrieval function
        docs = search_docs(question)
        return call_llm(docs + question)

Async functions are supported:

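    from openai import AsyncOpenAI
    from opensmith import trace

    # The async client is needed so that the call can be awaited
    client = AsyncOpenAI()

    @trace(tags=["production", "rag"])
    async def call_llm(prompt: str):
        return await client.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "user", "content": prompt}],
        )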
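For readers curious how one decorator can be applied both bare (`@trace`) and with arguments (`@trace(tags=[...])`), to sync and async functions alike, here is a minimal sketch of the general pattern. It is not opensmith's implementation: `simple_trace` and the in-memory `RECORDS` list are hypothetical names introduced only for illustration.

```python
import asyncio
import functools
import inspect
import time

RECORDS = []  # hypothetical in-memory store, used here instead of a database


def simple_trace(func=None, *, tags=None):
    """Illustrative decorator usable as @simple_trace or @simple_trace(tags=[...])."""

    def decorate(fn):
        def record(start, error):
            RECORDS.append({
                "name": fn.__name__,
                "tags": list(tags or []),
                "seconds": time.perf_counter() - start,
                "error": error,
            })

        if inspect.iscoroutinefunction(fn):
            @functools.wraps(fn)
            async def async_wrapper(*args, **kwargs):
                start = time.perf_counter()
                error = None
                try:
                    return await fn(*args, **kwargs)
                except Exception as exc:
                    error = repr(exc)
                    raise
                finally:
                    record(start, error)
            return async_wrapper

        @functools.wraps(fn)
        def sync_wrapper(*args, **kwargs):
            start = time.perf_counter()
            error = None
            try:
                return fn(*args, **kwargs)
            except Exception as exc:
                error = repr(exc)
                raise
            finally:
                record(start, error)
        return sync_wrapper

    # Bare usage passes the function directly; parameterized usage passes None.
    return decorate(func) if func is not None else decorate


@simple_trace
def add(a, b):
    return a + b


@simple_trace(tags=["demo"])
async def greet(name):
    await asyncio.sleep(0)
    return f"hello {name}"
```

Calling `add(1, 2)` and `asyncio.run(greet("x"))` appends one record each to `RECORDS`; the second record carries the `demo` tag.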

Example 2: context manager

    import openai
    from opensmith import trace

    query = "What is retrieval-augmented generation?"  # example input

    with trace("my_pipeline", tags=["debug"]) as t:
        t.log("query", query)
        response = openai.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "user", "content": query}],
        )
        t.log("response", response)

Example 3: autopatch() with zero code changes

    from opensmith import autopatch

    autopatch()

Patch only selected backends:

    from opensmith import autopatch

    autopatch(only=["openai"])

Patch everything except selected backends:

    from opensmith import autopatch

    autopatch(exclude=["chromadb"])
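Tools that trace without code changes typically work by monkey-patching: they replace a library method with a wrapper that records the call and then delegates to the original. The sketch below illustrates that general technique along with `only`/`exclude` filtering. It is an assumption about how such a feature could work, not opensmith's actual code; `FakeClient`, `patch_method`, `select_backends`, and `CALLS` are all hypothetical names.

```python
import functools
import time

CALLS = []  # hypothetical in-memory log of intercepted calls


class FakeClient:
    """Hypothetical stand-in for a third-party SDK client."""

    def complete(self, prompt):
        return prompt.upper()


def patch_method(cls, name):
    """Replace cls.<name> with a timing wrapper and return the original."""
    original = getattr(cls, name)

    @functools.wraps(original)
    def wrapper(self, *args, **kwargs):
        start = time.perf_counter()
        try:
            return original(self, *args, **kwargs)
        finally:
            CALLS.append({
                "call": f"{cls.__name__}.{name}",
                "seconds": time.perf_counter() - start,
            })

    setattr(cls, name, wrapper)
    return original


def select_backends(available, only=None, exclude=None):
    """Apply only/exclude filters to a list of backend names."""
    chosen = [b for b in available if only is None or b in only]
    return [b for b in chosen if not exclude or b not in exclude]
```

After `patch_method(FakeClient, "complete")`, every `FakeClient().complete(...)` call is logged to `CALLS` while still returning its normal result. Keeping the returned original makes it possible to undo the patch with `setattr`.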

Console mode

Print trace results to the terminal as they complete:

    from opensmith import set_console_mode, trace

    set_console_mode(True)

    @trace
    def my_func():
        return "ok"

Configuration

opensmith reads opensmith.json from the current working directory on import:

    {
      "db_path": "./my_traces.db",
      "console_mode": false,
      "autopatch": ["openai", "qdrant"]
    }
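If you prefer to generate the config file from a script, the standard `json` module is enough. The sketch below writes the three keys shown above and reads them back; `write_config` is a hypothetical helper, and the demo uses a temporary directory. In real use you would write to the directory you run your program from, since that is where opensmith looks for the file.

```python
import json
import tempfile
from pathlib import Path


def write_config(directory, db_path="./my_traces.db", console_mode=False,
                 backends=("openai", "qdrant")):
    """Write an opensmith.json file using only the keys documented above."""
    config = {
        "db_path": db_path,
        "console_mode": console_mode,  # serialized as JSON false/true
        "autopatch": list(backends),
    }
    path = Path(directory) / "opensmith.json"
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return path


# Demo in a throwaway directory: write the file, then parse it again.
with tempfile.TemporaryDirectory() as tmp:
    config_path = write_config(tmp)
    loaded = json.loads(config_path.read_text(encoding="utf-8"))
```

Going through `json.dumps` avoids the common mistake of writing Python's `False` into the file by hand, where JSON expects lowercase `false`.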

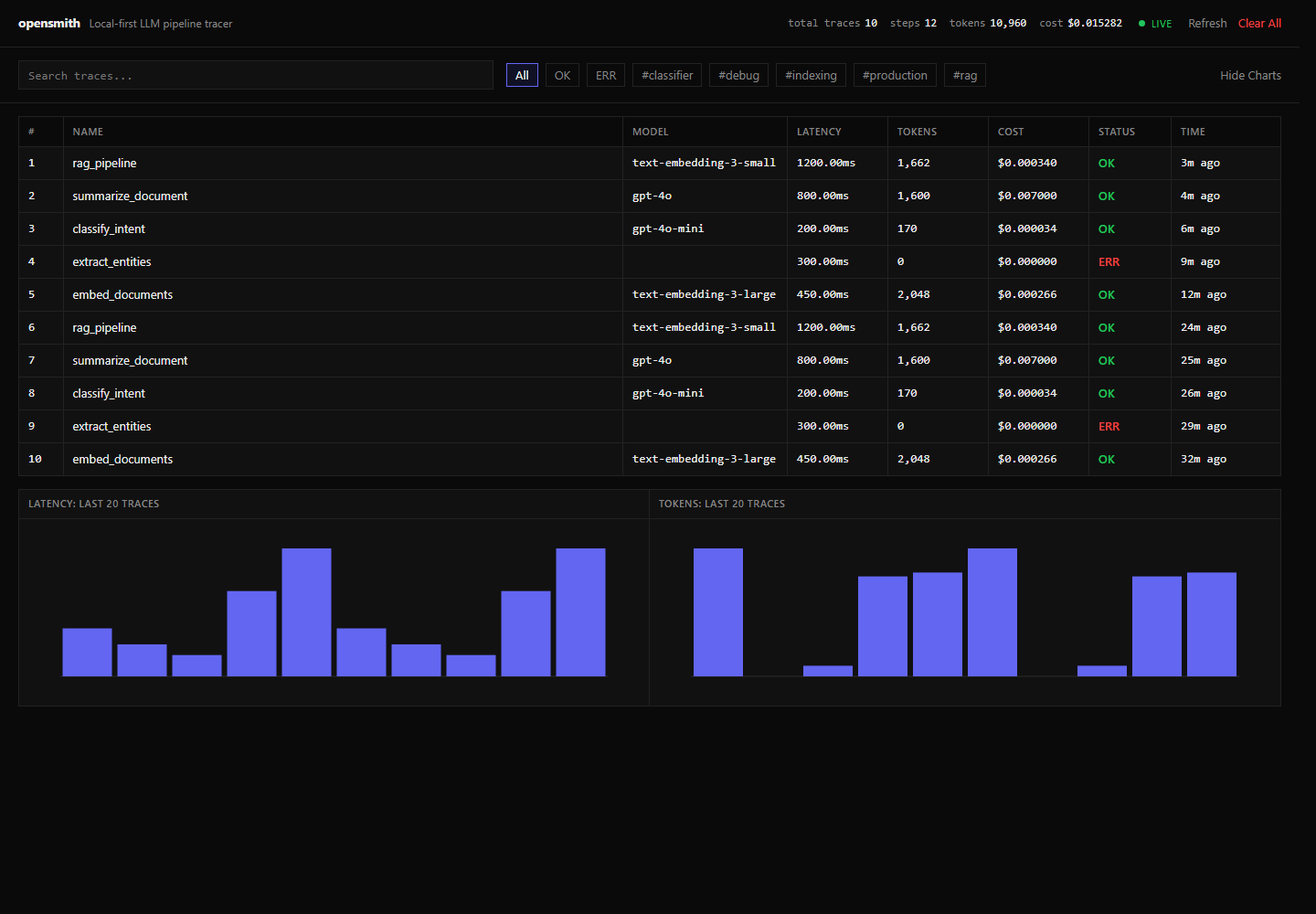

Dashboard

    opensmith ui

Open http://localhost:7823.

CLI reference

| Command | Description |
|---|---|
| opensmith ui | Start the local dashboard at localhost:7823. |
| opensmith traces | List recent traces in the terminal. |
| opensmith stats | Show aggregate trace, step, token, and cost statistics. |
| opensmith clear | Delete all locally stored traces after confirmation. |

Supported backends

| Backend | Package | Status |
|---|---|---|
| openai | openai | ✅ |
| anthropic | anthropic | ✅ |
| litellm | litellm | ✅ |
| qdrant | qdrant-client | ✅ |
| chromadb | chromadb | ✅ |
| pinecone | pinecone-client | ✅ |

Storage

Traces are stored locally at ~/.opensmith/traces.db unless overridden with opensmith.json or set_default_db_path().

License

MIT
