smart-mcp

Health Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 12 GitHub stars

Code Passed

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions Passed

- Permissions — No dangerous permissions requested

This tool acts as a semantic routing proxy for AI agents. It reduces context window bloat by indexing all available tool schemas using FAISS embeddings and exposing only the relevant ones to the AI model based on the user's natural language request.

Security Assessment

Overall Risk: Low. The tool acts as a local proxy and handles tool schemas rather than sensitive user data. It relies on FAISS and sentence-transformers for local, offline semantic indexing. The automated code scan checked 12 files and found no dangerous patterns, hardcoded secrets, or requests for elevated system permissions. As a routing proxy, it facilitates communication between your AI client and other MCP servers over standard I/O (stdio). While this means it technically passes data between services, it does not independently execute arbitrary shell commands or make unauthorized external network requests.

Quality Assessment

The project is highly approachable and actively maintained, with its most recent push occurring today. It uses the standard, permissive MIT license and is distributed as a valid Python package on PyPI. Community trust is currently low but genuine, represented by 12 GitHub stars. The codebase is small and light, making it easy for developers to manually review and audit the logic before integrating it into their workflow.

Verdict

Safe to use.

A semantic tool routing proxy for AI agents. Indexes all MCP tools with FAISS embeddings, surfaces only what's relevant. 97% context reduction.

smartmcp

PyPI package: smartmcp-router (CLI: smartmcp)

Intelligent MCP tool routing that reduces context bloat by serving only the tools your AI actually needs.

In my own setup with 8 MCP servers and 224 tools, every AI request was loading ~66,000 tokens of tool schemas before the model even started thinking. With smartmcp, that dropped to ~1,600 tokens. A 97% reduction on every request.

| | Without smartmcp | With smartmcp |
|---|---|---|
| Tools in context | All 224 | 2 (search_tools + call_discovered_tool) |
| Tokens per request | ~66,000 | ~1,600 |
| Scales with | Every tool you add (O(n)) | Always 2 fixed tools (O(1)) |

Token counts estimated at ~4 characters per token. Actual counts vary by model tokenizer.
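
The estimate above can be reproduced with a few lines of Python. This is a rough sketch of the ~4 characters per token heuristic, not smartmcp's own measurement code; `estimate_tokens` and `reduction` are illustrative names.

```python
import json

CHARS_PER_TOKEN = 4  # heuristic stated above; real tokenizers differ


def estimate_tokens(schemas: list[dict]) -> int:
    """Estimate the token cost of tool schemas from their serialized length."""
    return len(json.dumps(schemas)) // CHARS_PER_TOKEN


def reduction(before: int, after: int) -> float:
    """Fractional token reduction, e.g. 0.97 for a 97% saving."""
    return 1 - after / before


# Figures quoted in the table above.
print(f"{reduction(66_000, 1_600):.1%}")  # prints 97.6%
```

Running `estimate_tokens` over your own `tools/list` output should give a comparable before-and-after figure for your setup.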

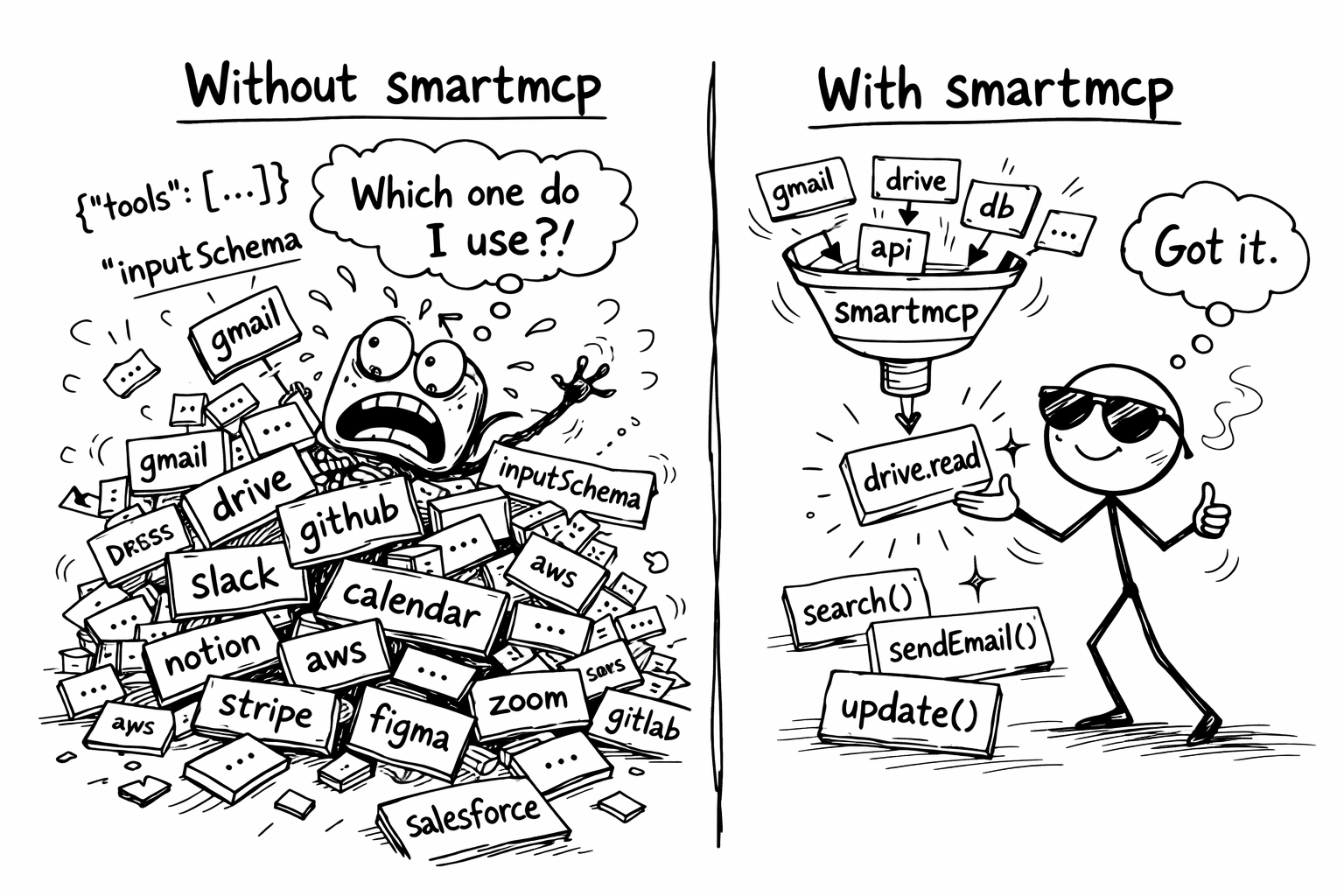

Most MCP setups expose every tool from every server to the AI at once. With 5+ servers, that's 50–200+ tool schemas crammed into the context window before the AI even starts thinking. smartmcp fixes this.

smartmcp is a proxy MCP server that sits between your AI client and your upstream MCP servers. It indexes all available tools using semantic embeddings and exposes a fixed two-tool surface: search_tools to discover the right upstream tool and call_discovered_tool to invoke it. The tool list never changes mid-session, so smartmcp works even with clients that ignore notifications/tools/list_changed.

How it works

    AI Client (Claude Desktop / Cursor / your agent)
        ↕ stdio
    smartmcp (proxy server)
        ↕ stdio (one connection per server)
    [github] [filesystem] [google-workspace] [git] [memory] [puppeteer] ...

smartmcp uses a two-phase flow: discover, then call.

Phase 1: Discovery

- On startup, smartmcp connects to all your configured MCP servers, collects every tool schema, and builds a FAISS vector index using sentence-transformer embeddings.

- The AI always sees exactly two tools: search_tools and call_discovered_tool. It calls search_tools with a natural language query, for example search_tools({ "query": "create a GitHub issue" }).

- smartmcp runs semantic search across all indexed tools and finds the top-k matches.

- search_tools returns structured JSON. Each match includes the exact target identifier, the upstream server and name, the description, the relevance score, and the full upstream input_schema.
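
The discovery flow can be sketched in plain Python. Note the substitution: smartmcp uses sentence-transformer embeddings in a FAISS index, while this dependency-free stand-in uses word-count vectors and cosine similarity so that it runs anywhere. The tool catalogue is hypothetical, the `<server>__<name>` target format is inferred from the `github__create_issue` example, and `input_schema` is omitted for brevity.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a sentence-transformer)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical catalogue, as if collected from upstream servers at startup.
TOOLS = [
    {"server": "github", "name": "create_issue", "description": "Create a new issue in a GitHub repository"},
    {"server": "filesystem", "name": "read_file", "description": "Read the contents of a file from disk"},
    {"server": "slack", "name": "post_message", "description": "Post a message to a Slack channel"},
]
# Index built once: one vector per tool, from its name and description.
INDEX = [(tool, embed(f"{tool['name']} {tool['description']}")) for tool in TOOLS]


def search_tools(query: str, top_k: int = 5) -> list[dict]:
    """Return up to top_k matches, best first."""
    q = embed(query)
    scored = sorted(((cosine(q, vec), tool) for tool, vec in INDEX), key=lambda p: p[0], reverse=True)
    return [
        {"target": f"{t['server']}__{t['name']}", "server": t["server"], "name": t["name"],
         "description": t["description"], "score": round(s, 3)}
        for s, t in scored[:top_k]
    ]


print(search_tools("create a GitHub issue", top_k=1)[0]["target"])  # prints github__create_issue
```

A real embedding model also matches paraphrases that share no words with the tool description, which this word-overlap stand-in cannot do.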

Phase 2: Calling

- The AI reads the matching tool's input_schema from the search response and constructs valid arguments.

- The AI calls call_discovered_tool({ "target": "<exact target from search>", "arguments": { ... } }). smartmcp resolves the target (for example github__create_issue), routes the call to the correct upstream server, and returns the result. Arguments are forwarded to the upstream tool unchanged.

The intelligence is in the discovery step. The AI still does its own parameter construction based on the schema returned by search_tools. smartmcp just narrows down which tools it sees, and the static two-tool surface means the flow works even with clients that snapshot tools/list once and never refresh.
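
The calling phase amounts to resolving a target and forwarding the arguments. A minimal sketch, assuming targets have the form `<server>__<tool>` (inferred from the `github__create_issue` example) and using in-process callables as hypothetical stand-ins for live upstream connections:

```python
from typing import Any, Callable

# Hypothetical stand-ins for upstream sessions: server name -> tool name -> handler.
UPSTREAMS: dict[str, dict[str, Callable[[dict[str, Any]], Any]]] = {
    "github": {"create_issue": lambda args: {"created": args["title"]}},
}


def call_discovered_tool(target: str, arguments: dict[str, Any]) -> Any:
    """Resolve '<server>__<tool>' and forward the arguments unchanged."""
    server, sep, tool = target.partition("__")  # split on the first double underscore
    if not sep or server not in UPSTREAMS or tool not in UPSTREAMS[server]:
        raise ValueError(f"unknown target: {target!r}")
    return UPSTREAMS[server][tool](arguments)


print(call_discovered_tool("github__create_issue", {"title": "Bug"}))  # prints {'created': 'Bug'}
```

The proxy never inspects or rewrites `arguments`. Validation against the schema is left to the upstream server.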

Installation

    pip install smartmcp-router

Requires Python 3.10+.

Quick start

1. Create a config file

Create a smartmcp.json with your upstream MCP servers (same format as Claude Desktop / Cursor config):

    {
      "mcpServers": {
        "filesystem": {
          "command": "npx",
          "args": ["-y", "@modelcontextprotocol/server-filesystem", "/home/user/documents"]
        },
        "github": {
          "command": "npx",
          "args": ["-y", "@modelcontextprotocol/server-github"],
          "env": {
            "GITHUB_TOKEN": "ghp_your_token_here"
          }
        },
        "slack": {
          "command": "npx",
          "args": ["-y", "@modelcontextprotocol/server-slack"],
          "env": {
            "SLACK_BOT_TOKEN": "xoxb-your-token-here"
          }
        }
      },
      "top_k": 5,
      "embedding_model": "all-MiniLM-L6-v2"
    }
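
Because the format matches the client configs, an existing `mcpServers` block can be carried over mechanically. The helper below is a hypothetical convenience sketch, not something shipped with smartmcp; `build_smartmcp_config` is an illustrative name.

```python
import json


def build_smartmcp_config(client_config: dict, top_k: int = 5,
                          embedding_model: str = "all-MiniLM-L6-v2") -> dict:
    """Copy mcpServers from an existing client config and add smartmcp's own fields."""
    servers = {
        name: entry
        for name, entry in client_config.get("mcpServers", {}).items()
        if name != "smartmcp"  # avoid pointing the proxy at itself
    }
    return {"mcpServers": servers, "top_k": top_k, "embedding_model": embedding_model}


# Example input shaped like a Claude Desktop / Cursor config.
existing = {
    "mcpServers": {
        "filesystem": {
            "command": "npx",
            "args": ["-y", "@modelcontextprotocol/server-filesystem", "/home/user/documents"],
        }
    }
}
print(json.dumps(build_smartmcp_config(existing), indent=2))
```

Write the result to smartmcp.json, then replace the client's server list with the single smartmcp entry described in the next step.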

2. Add smartmcp to your AI client

Add smartmcp as your single MCP server entry. It now manages all your upstream servers defined in smartmcp.json.

Claude Desktop

Add to your claude_desktop_config.json:

    {
      "mcpServers": {
        "smartmcp": {
          "command": "smartmcp",
          "args": ["--config", "/path/to/smartmcp.json"]
        }
      }
    }

Cursor

Add to your .cursor/mcp.json:

    {
      "mcpServers": {
        "smartmcp": {
          "command": "smartmcp",
          "args": ["--config", "/path/to/smartmcp.json"]
        }
      }
    }

Custom agents

Point your MCP client at smartmcp the same way you would any stdio MCP server:

    smartmcp --config /path/to/smartmcp.json

3. Use it

Your AI now sees two tools: search_tools and call_discovered_tool. When it needs to do something, it searches and then invokes:

- AI calls: search_tools({ "query": "read files from disk" })

- smartmcp returns: JSON with up to top-k matches. Each match includes a target (such as filesystem__read_file), the upstream tool's description, and the full input_schema.

- AI sees: The complete parameter definitions inside each match. It picks the right one, builds arguments against that match's input_schema, and calls call_discovered_tool({ "target": "filesystem__read_file", "arguments": { ... } }).

- smartmcp proxies the call to the filesystem server unchanged and returns the result.
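
On the wire, both steps are ordinary MCP `tools/call` requests, which are JSON-RPC 2.0 messages. The sketch below only builds the two payloads. The `path` argument is a hypothetical example, since the real argument names come from the `input_schema` returned by search_tools.

```python
import json
from itertools import count

_ids = count(1)  # monotonically increasing JSON-RPC request ids


def tools_call(name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


# Step 1: discover candidate tools.
search = tools_call("search_tools", {"query": "read files from disk"})

# Step 2: invoke the chosen match, nesting the upstream arguments.
invoke = tools_call("call_discovered_tool", {
    "target": "filesystem__read_file",
    "arguments": {"path": "/home/user/documents/notes.txt"},  # hypothetical; follow the returned input_schema
})

print(search)
print(invoke)
```

Note the nesting in the second message: the outer `arguments` belong to call_discovered_tool, and the inner `arguments` are what the upstream tool receives.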

Configuration reference

| Field | Type | Default | Description |
|---|---|---|---|
| mcpServers | object | (required) | Map of server names to MCP server configs |
| mcpServers.<name>.command | string | (required) | Command to spawn the server |
| mcpServers.<name>.args | string[] | [] | Arguments for the command |
| mcpServers.<name>.env | object | {} | Environment variables for the server |
| top_k | integer | 5 | Default number of tools returned per search |
| embedding_model | string | "all-MiniLM-L6-v2" | Sentence-transformers model for embeddings |
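
The required fields and defaults in the table can be expressed as a small validation step. This is a sketch of how a loader might apply them, not smartmcp's actual parsing code; `normalize_config` is an illustrative name.

```python
from typing import Any

# Defaults taken from the table above.
DEFAULTS = {"top_k": 5, "embedding_model": "all-MiniLM-L6-v2"}


def normalize_config(raw: dict[str, Any]) -> dict[str, Any]:
    """Check required fields and fill in defaults for optional ones."""
    servers = raw.get("mcpServers")
    if not isinstance(servers, dict):
        raise ValueError("mcpServers is required")
    normalized = {}
    for name, entry in servers.items():
        if not isinstance(entry.get("command"), str):
            raise ValueError(f"mcpServers.{name}.command is required")
        normalized[name] = {
            "command": entry["command"],
            "args": list(entry.get("args", [])),  # default: []
            "env": dict(entry.get("env", {})),    # default: {}
        }
    overrides = {key: raw[key] for key in DEFAULTS if key in raw}
    return {**DEFAULTS, **overrides, "mcpServers": normalized}


print(normalize_config({"mcpServers": {"git": {"command": "npx"}}}))
```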

Why smartmcp?

- Less context waste: Instead of 100 tool schemas in every request, the AI sees a fixed two-tool surface and only inspects the few schemas returned by search_tools.

- Better tool selection: Semantic search finds the right tools even when the AI doesn't know the exact name.

- Full schema in search results: Each match returned by search_tools includes the upstream input_schema, so the AI can construct calls correctly without any further tools/list refresh.

- Client-agnostic: The tool list never changes mid-session, so smartmcp works with clients that ignore notifications/tools/list_changed (such as Qwen Code and similar agents).

- Works with any MCP server: If it speaks MCP over stdio, smartmcp can proxy it.

- Drop-in replacement: Replace your list of MCP servers with one smartmcp entry. No code changes needed.

- Graceful degradation: If some upstream servers fail to connect, smartmcp continues with whatever is available.
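
Graceful degradation of this kind is commonly built by connecting to all servers concurrently and keeping only the sessions that succeed. The sketch below shows that pattern with `asyncio.gather`. It is an assumption about the general approach, not a description of smartmcp's internals, and `connect` is a hypothetical stand-in for spawning a server and opening a stdio session.

```python
import asyncio


async def connect(name: str) -> str:
    """Hypothetical connector; a real one would spawn the server process."""
    if name == "broken":
        raise ConnectionError(f"{name} failed to start")
    await asyncio.sleep(0)  # yield control, as real I/O would
    return f"session:{name}"


async def connect_all(names: list[str]) -> dict[str, str]:
    """Connect to every server concurrently and keep only the successes."""
    results = await asyncio.gather(*(connect(n) for n in names), return_exceptions=True)
    return {n: r for n, r in zip(names, results) if not isinstance(r, BaseException)}


sessions = asyncio.run(connect_all(["github", "broken", "filesystem"]))
print(sorted(sessions))  # prints ['filesystem', 'github']
```

With `return_exceptions=True`, one failing server does not cancel the others, so the index can still be built from whatever tools were collected.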

Contributing

smartmcp is early-stage and actively improving. Contributions are welcome, especially around search accuracy, embedding strategies, and support for new transports.

If you have ideas, find bugs, or want to add features, open an issue or submit a PR on GitHub.

License

MIT
