antigravity-workspace-template

Health Gecti

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 1 days ago

- Community trust — 1073 GitHub stars

Code Gecti

- Code scan — Scanned 12 files during light audit, no dangerous patterns found

Permissions Gecti

- Permissions — No dangerous permissions requested

Bu listing icin henuz AI raporu yok.

🪐 The ultimate starter kit for AI IDEs, Claude code,codex, and other agentic coding environments.

AI Workspace Template

The missing cognitive layer for AI-powered IDEs.

Dynamic multi-agent cluster. Every AI IDE becomes an expert on your codebase.

Language: English | 中文 | Español

Why Antigravity?

An AI Agent's capability ceiling = the quality of context it can read.

Don't give your AI IDE an encyclopedia. Give it a ChatGPT for your codebase.

Most teams stuff CLAUDE.md with documentation nobody reads. Antigravity takes the opposite approach: instead of a static knowledge dump, it deploys a dynamic multi-agent cluster — each code module gets its own Agent that autonomously reads code and generates knowledge docs, with a Router that intelligently routes questions to the right Agent.

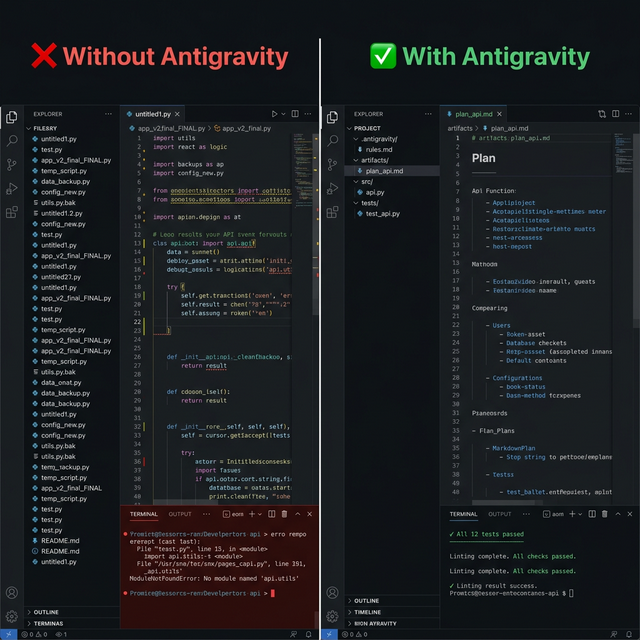

Traditional approach: Antigravity approach:

CLAUDE.md = 5000 lines of docs Claude Code calls ask_project("how does auth work?")

Agent reads it all, forgets most Router → ModuleAgent reads actual source, returns exact answer

Hallucination rate stays high Grounded in real code, file paths, and git history

| Problem | Without Antigravity | With Antigravity |

|---|---|---|

| Agent forgets coding style | Repeats the same corrections | Reads .antigravity/conventions.md — gets it right the first time |

| Onboarding a new codebase | Agent guesses at architecture | ag-refresh → ModuleAgents self-learn each module |

| Switching between IDEs | Different rules everywhere | One .antigravity/ folder — every IDE reads it |

| Asking "how does X work?" | Agent reads random files | ask_project MCP → Router routes to the responsible ModuleAgent |

Architecture is files + a live Q&A engine, not plugins. Portable across any IDE, any LLM, zero vendor lock-in.

Quick Start

Option A — Context files only (any IDE, no LLM needed)

pip install git+https://github.com/study8677/antigravity-workspace-template.git#subdirectory=cli

ag init my-project && cd my-project

# Your IDE now reads .antigravity/rules.md, .cursorrules, CLAUDE.md, AGENTS.md automatically

Option B — Full setup with multi-agent Q&A engine (recommended for Claude Code)

# 1. Inject context files

pip install git+https://github.com/study8677/antigravity-workspace-template.git#subdirectory=cli

ag init my-project && cd my-project

# 2. Install the engine

pip install "git+https://github.com/study8677/antigravity-workspace-template.git#subdirectory=engine"

# 3. Configure .env with your LLM API key, then refresh knowledge base (ModuleAgents self-learn)

ag-refresh

# 4. Register as MCP server — Claude Code can now call ask_project as a tool

claude mcp add antigravity ag-mcp -- --workspace $(pwd)

Now when Claude Code needs to understand your codebase, it calls ask_project("...") — Router auto-routes to the right ModuleAgent.

Features at a Glance

ag init Inject context files into any project (--force to overwrite)

│

▼

.antigravity/ Shared knowledge base — every IDE reads from here

│

├──► ag-refresh Dynamic multi-agent self-learning → module knowledge docs + structure map

├──► ag-ask Router → ModuleAgent Q&A with live code evidence

└──► ag-mcp MCP server → Claude Code calls directly

Dynamic Multi-Agent Cluster — During ag-refresh, each code module gets a RefreshModuleAgent that autonomously reads the code and generates a deep knowledge doc. During ag-ask, Router reads the structure map and routes questions to the right ModuleAgent. Agents can handoff across modules. Powered by OpenAI Agent SDK + LiteLLM.

GitAgent — A dedicated agent for analyzing git history — understands who changed what and why.

GitNexus Enhancement (optional) — Install GitNexus to auto-unlock semantic search, call graphs, and impact analysis for every ModuleAgent.

CLI Commands

| Command | What it does | LLM needed? |

|---|---|---|

ag init <dir> |

Inject cognitive architecture templates | No |

ag init <dir> --force |

Re-inject, overwriting existing files | No |

ag-refresh |

Multi-agent self-learning of codebase, generates module knowledge docs + conventions.md + structure.md |

Yes |

ag-ask "question" |

Router → ModuleAgent/GitAgent routed Q&A | Yes |

ag-mcp --workspace <dir> |

Start MCP server — exposes ask_project + refresh_project to Claude Code |

Yes |

ag report "message" |

Log a finding to .antigravity/memory/ |

No |

ag log-decision "what" "why" |

Log an architectural decision | No |

All commands accept --workspace <dir> to target any directory.

Two Packages, One Workflow

antigravity-workspace-template/

├── cli/ # ag CLI — lightweight, pip-installable

│ └── templates/ # .cursorrules, CLAUDE.md, .antigravity/, ...

└── engine/ # Multi-agent engine + Knowledge Hub

└── antigravity_engine/

├── _cli_entry.py # ag-ask / ag-refresh entry points

├── config.py # Pydantic configuration

├── hub/ # ★ Core: multi-agent cluster

│ ├── agents.py # Router + ModuleAgent + GitAgent

│ ├── pipeline.py # refresh / ask orchestration

│ ├── ask_tools.py # Code exploration + GitNexus tools

│ ├── scanner.py # Project scanning + module detection

│ └── mcp_server.py# MCP server (ag-mcp)

├── mcp_client.py # MCP consumer (connects external tools)

├── memory.py # Persistent interaction memory

├── tools/ # MCP query tools + extensions

├── skills/ # Skill loader

└── sandbox/ # Code execution (local / microsandbox)

CLI (pip install .../cli) — Zero LLM deps. Injects templates, logs reports & decisions offline.

Engine (pip install .../engine) — Multi-agent runtime. Powers ag-ask, ag-refresh, ag-mcp. Supports Gemini, OpenAI, Ollama, or any OpenAI-compatible API.

New skill packaging updates:

engine/antigravity_engine/skills/graph-retrieval/— graph-oriented retrieval tools for structure and call-path reasoning.engine/antigravity_engine/skills/knowledge-layer/— project knowledge-layer tools for semantic context consolidation.

# Install both for full experience

pip install "git+https://...#subdirectory=cli"

pip install "git+https://...#subdirectory=engine"

How It Works

1. ag init — Inject context files

ag init my-project

# Already initialized? Use --force to overwrite:

ag init my-project --force

Creates .antigravity/rules.md, .cursorrules, CLAUDE.md, AGENTS.md, .windsurfrules — each IDE reads its native config file, all pointing to the same .antigravity/ knowledge base.

2. ag-refresh — Multi-agent self-learning

ag-refresh --workspace my-project

5-step pipeline:

- Scan codebase (languages, frameworks, structure)

- Multi-agent pipeline generates

conventions.md - Generate

structure.mdstructure map - Dynamically create RefreshModuleAgents — one per code module, each autonomously reads code and writes a deep knowledge doc to

.antigravity/modules/*.md - RefreshGitAgent analyzes git history, generates

_git_insights.md

3. ag-ask — Router-based Q&A

ag-ask "How does auth work in this project?"

Router reads the structure.md map and routes questions to the right ModuleAgent (pre-loaded with that module's knowledge doc) or GitAgent (understands git history). For cross-module questions, agents can handoff to each other.

IDE Compatibility

Architecture is encoded in files — any agent that reads project files benefits:

| IDE | Config File |

|---|---|

| Cursor | .cursorrules |

| Claude Code | CLAUDE.md |

| Windsurf | .windsurfrules |

| VS Code + Copilot | .github/copilot-instructions.md |

| Gemini CLI / Codex | AGENTS.md |

| Cline | .clinerules |

| Google Antigravity | .antigravity/rules.md |

All generated by ag init. All reference .antigravity/ for shared project context.

Advanced Features

MCP Server — Give Claude Code a ChatGPT for your codebaseInstead of reading hundreds of documentation files, Claude Code can call ask_project as a live tool — backed by a dynamic multi-agent cluster: Router routes questions to the right ModuleAgent, returning grounded answers with file paths and line numbers.

Setup:

# Install engine

pip install "git+https://github.com/study8677/antigravity-workspace-template.git#subdirectory=engine"

# Refresh knowledge base first (ModuleAgents self-learn each module)

ag-refresh --workspace /path/to/project

# Register as MCP server in Claude Code

claude mcp add antigravity ag-mcp -- --workspace /path/to/project

Tools exposed to Claude Code:

| Tool | What it does |

|---|---|

ask_project(question) |

Router → ModuleAgent/GitAgent answers codebase questions. Returns file paths + line numbers. |

refresh_project(quick?) |

Rebuild knowledge base after significant changes. ModuleAgents re-learn the code. |

The engine's core is a dynamically created Agent cluster per code module:

ag-refresh: ag-ask:

┌─ RefreshModule_engine ──→ engine.md Router (reads structure.md map)

├─ RefreshModule_cli ────→ cli.md ├──→ Module_engine (pre-loaded engine.md)

└─ RefreshGitAgent ──────→ _git.md ├──→ Module_cli (pre-loaded cli.md)

├──→ GitAgent (pre-loaded _git.md)

└──→ Agents can handoff to each other

# ModuleAgents self-learn your codebase

ag-refresh

# Only scan files changed since last refresh

ag-refresh --quick

# Router intelligently routes to the right ModuleAgent

ag-ask "What testing patterns does this project use?"

# Log findings and decisions (no LLM needed)

ag report "Auth module needs refactoring"

ag log-decision "Use PostgreSQL" "Team has deep expertise"

Works with Gemini, OpenAI, Ollama, or any OpenAI-compatible endpoint. Powered by OpenAI Agent SDK + LiteLLM.

MCP Integration (Consumer) — Let agents call external toolsMCPClientManager lets your agents connect to external MCP servers (GitHub, databases, etc.), auto-discovering and registering tools.

// mcp_servers.json

{

"servers": [

{

"name": "github",

"transport": "stdio",

"command": "npx",

"args": ["-y", "@modelcontextprotocol/server-github"],

"enabled": true

}

]

}

Set MCP_ENABLED=true in .env.

GitNexus is a third-party tool that builds a code knowledge graph using Tree-sitter AST parsing. Antigravity provides built-in integration hooks — when you install GitNexus separately, ag-ask automatically detects it and unlocks three additional tools:

| Tool | What it does |

|---|---|

gitnexus_query |

Hybrid search (BM25 + semantic) — better than grep for "how does auth work?" |

gitnexus_context |

360-degree symbol view: callers, callees, references, definition |

gitnexus_impact |

Blast radius analysis — what breaks if you change a symbol? |

Note: GitNexus is NOT bundled with Antigravity. It is an independent project that requires separate installation via npm. Antigravity works fully without it — GitNexus is an optional enhancement for deeper code understanding.

How to enable (3 steps):

# 1. Install GitNexus (requires Node.js)

npm install -g gitnexus

# 2. Index your project (one-time, creates a local knowledge graph)

cd my-project

gitnexus analyze .

# 3. Use ag-ask as usual — GitNexus tools are auto-detected

ag-ask "How does the authentication flow work?"

How the integration works: ask_tools.py checks if the gitnexus CLI is available on your system. If found, it registers gitnexus_query, gitnexus_context, and gitnexus_impact as additional tools for every ModuleAgent. If not found, these tools are simply absent — zero overhead, no errors.

| Variable | Default | Options |

|---|---|---|

SANDBOX_TYPE |

local |

local · microsandbox |

SANDBOX_TIMEOUT_SEC |

30 |

seconds |

See Sandbox docs.

Real-World Demo: NVIDIA API + Kimi K2.5

Tested end-to-end with Moonshot Kimi K2.5 via NVIDIA's free API tier. Any OpenAI-compatible endpoint works the same way.

1. Configure .env

OPENAI_BASE_URL=https://integrate.api.nvidia.com/v1

OPENAI_API_KEY=nvapi-your-key-here

OPENAI_MODEL=moonshotai/kimi-k2.5

2. Scan your project

$ ag-refresh --workspace .

Updated .antigravity/conventions.md

Updated .antigravity/structure.md

Generated output (by Kimi K2.5):

# Project Conventions

## Primary Language & Frameworks

- **Language**: Python (5,135 files, 99%+ of codebase)

- **Infrastructure**: Docker, Docker Compose

- **CI/CD**: GitHub Actions

...

3. Ask questions

$ ag-ask "What LLM backends does this project support?"

Based on the context, the project supports NVIDIA API with Kimi K2.5.

The architecture uses OpenAI-compatible format, supporting any endpoint

including local LLMs via LiteLLM, NVIDIA NIM models, etc.

4. Log decisions (no LLM needed)

$ ag report "Auth module needs refactoring"

Logged report to .antigravity/memory/reports.md

$ ag log-decision "Use PostgreSQL" "Team has deep expertise"

Logged decision to .antigravity/decisions/log.md

Works with any OpenAI-compatible provider: NVIDIA, OpenAI, Ollama, vLLM, LM Studio, Groq, etc.

Documentation

| 🇬🇧 English | docs/en/ |

| 🇨🇳 中文 | docs/zh/ |

| 🇪🇸 Español | docs/es/ |

Contributing

Ideas are contributions too! Open an issue to report bugs, suggest features, or propose architecture.

Contributors

⭐ Lling0000 Major Contributor · Creative suggestions · Project administrator · Project ideation & feedback |

Alexander Daza Sandbox MVP · OpenSpec workflows · Technical analysis docs · PHILOSOPHY |

Chen Yi First CLI prototype · 753-line refactor · DummyClient extraction · Quick-start docs |

Subham Sangwan Dynamic tool & context loading (#4) · Multi-agent swarm protocol (#3) |

shuofengzhang Memory context window fix · MCP shutdown graceful handling (#28) |

goodmorning10 Enhanced ag ask context loading — added CONTEXT.md, AGENTS.md, and memory/*.md as context sources (#29)

|

Abhigyan Patwari GitNexus — code knowledge graph natively integrated into ag ask for symbol search, call graphs, and impact analysis

|

BBear0115 Skill packaging & KG retrieval enhancements · Multi-language README sync (#30) |

Star History

License

MIT License. See LICENSE for details.

Built for the AI-native development era

Yorumlar (0)

Yorum birakmak icin giris yap.

Yorum birakSonuc bulunamadi