aiden

Health Warning

- License — License: AGPL-3.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Low visibility — Only 5 GitHub stars

Code Warning

- process.env — Environment variable access in bin/npx-init.ts

- network request — Outbound network request in cloudflare-worker/license-server.js

- network request — Outbound network request in cloudflare-worker/license-server.ts

- process.env — Environment variable access in coordination/commandGate.ts

- process.env — Environment variable access in core/agentShield.ts

Permissions Passed

- Permissions — No dangerous permissions requested

This tool is a local-first AI operating system that runs directly on your machine to provide a wide variety of autonomous skills and tools without requiring a cloud account.

Security Assessment

Overall risk: Medium. The application acts as an autonomous agent with broad capabilities, including executing code, managing files, and controlling the screen, which inherently requires strict oversight. It makes outbound network requests, specifically through a license server component. It also accesses environment variables across multiple modules (such as an agent shield and command gate) to handle configurations. The automated scan did not find any hardcoded secrets or explicitly dangerous requested permissions. However, because the tool is designed to take autonomous actions on your system, you should thoroughly inspect what the agent is permitted to execute and ensure any outbound connections are acceptable for your environment.

Quality Assessment

The project is actively maintained, with repository activity as recent as today. It uses a standard open-source license (AGPL-3.0) and provides a highly detailed README with clear multi-platform support instructions. However, community trust and visibility are currently very low. With only 5 stars on GitHub, the tool has not yet been widely adopted or battle-tested by a large audience, meaning unforeseen bugs or security edge-cases are more likely.

Verdict

Use with caution — the tool is highly capable and active, but its autonomous system-level actions and low community visibility require you to thoroughly review the source code before deploying.

local-first AI OS for Linux & Windows — 1500+ skills · 89+ tools · 14+ providers · AGPL-3.0

█████╗ ██╗██████╗ ███████╗███╗ ██╗

██╔══██╗██║██╔══██╗██╔════╝████╗ ██║

███████║██║██║ ██║█████╗ ██╔██╗ ██║

██╔══██║██║██║ ██║██╔══╝ ██║╚██╗██║

██║ ██║██║██████╔╝███████╗██║ ╚████║

╚═╝ ╚═╝╚═╝╚═════╝ ╚══════╝╚═╝ ╚═══╝

local-first AI operating system

1500+ skills · 89+ tools · 14+ providers · AGPL-3.0

Windows · Linux · WSL · macOS (API mode)

Website · Contact · Discord · Download

v3.11 — Custom provider routing + Claude Haiku 4.5

Full custom OpenAI-compatible provider support: plug in any endpoint via config with no code changes. BayOfAssets Claude Haiku 4.5 ships as the new default tier-1 provider. Fixes the silent Groq fallback in `callLLM`, the greeting memory double-label, and the health endpoint omitting custom providers. See changelog below.

Aiden is a local-first AI operating system. It runs entirely on your machine: no cloud account required, no telemetry, and no data leaving your hardware unless you configure a cloud provider. It ships with a signed Windows installer and runs in headless API mode on Linux, WSL, and macOS. Features include 1500+ composable skills, 89+ autonomous tools, a 6-layer memory architecture, self-healing provider routing, and the ability to control your screen, browse the web, run code, send emails, manage files, and hold a full conversation, even offline via Ollama.

Platform support

| Platform | GUI app | API + CLI | Skills available |

|---|---|---|---|

| Windows 10/11 | ✅ signed installer | ✅ | All 1500+ (including Windows-only skills) |

| Linux | — | ✅ headless | ~1491 (Windows-only skills auto-skipped) |

| WSL 2 | — | ✅ headless | ~1491 (Windows-only skills auto-skipped) |

| macOS | — | ✅ headless | ~1491 (Windows-only skills auto-skipped) |

Windows-only skills (clipboard history, Defender, OneNote, Outlook COM, registry, etc.) are tagged `platform: windows` and are silently skipped on other platforms at load time.
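The load-time skip described above could look roughly like the following. This is a minimal sketch, assuming each skill carries an optional platform tag; the `Skill` type, the `loadableSkills` function, and the sample skill names are hypothetical and not taken from Aiden's source.

```typescript
// Hypothetical shape of a skill's metadata; Aiden's real manifest may differ.
type Platform = "windows" | "linux" | "darwin";

interface Skill {
  name: string;
  platform?: Platform; // absent means the skill is assumed to run everywhere
}

// Keep skills that are untagged or tagged for the current platform.
function loadableSkills(skills: Skill[], current: Platform): Skill[] {
  return skills.filter((s) => s.platform === undefined || s.platform === current);
}

// Illustrative sample data only.
const sampleSkills: Skill[] = [
  { name: "web-search" },
  { name: "registry-edit", platform: "windows" },
];

const onLinux = loadableSkills(sampleSkills, "linux").map((s) => s.name);
const onWindows = loadableSkills(sampleSkills, "windows").map((s) => s.name);
```

Filtering once at load time keeps the rest of the agent free of per-call platform checks, which matches the "silently skipped" behavior the README describes.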

Install

Windows

irm aiden.taracod.com/install.ps1 | iex

Or download the installer manually. Windows 10/11, 64-bit, ~500 MB disk space.

Linux / WSL / macOS

curl -fsSL aiden.taracod.com/install.sh | bash

Or install manually:

# Prerequisites: Node.js 20+, git, Ollama (recommended)

git clone https://github.com/taracodlabs/aiden.git

cd aiden

cp .env.example .env # configure OLLAMA_HOST, API keys, etc.

npm install

npm run build

npm start # starts the API server (headless)

# In a second terminal:

npm run cli # interactive TUI

Set AIDEN_HEADLESS=true to suppress the Electron GUI when running the packaged app.

Screenshots

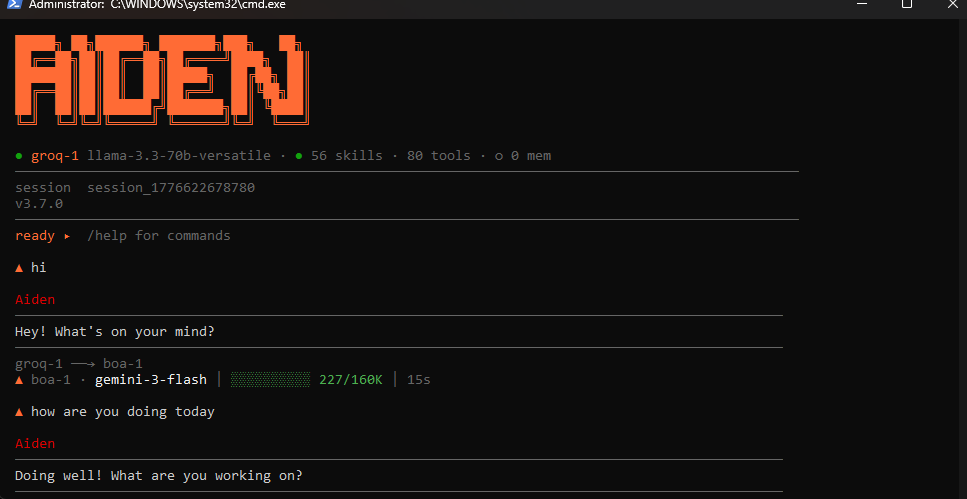

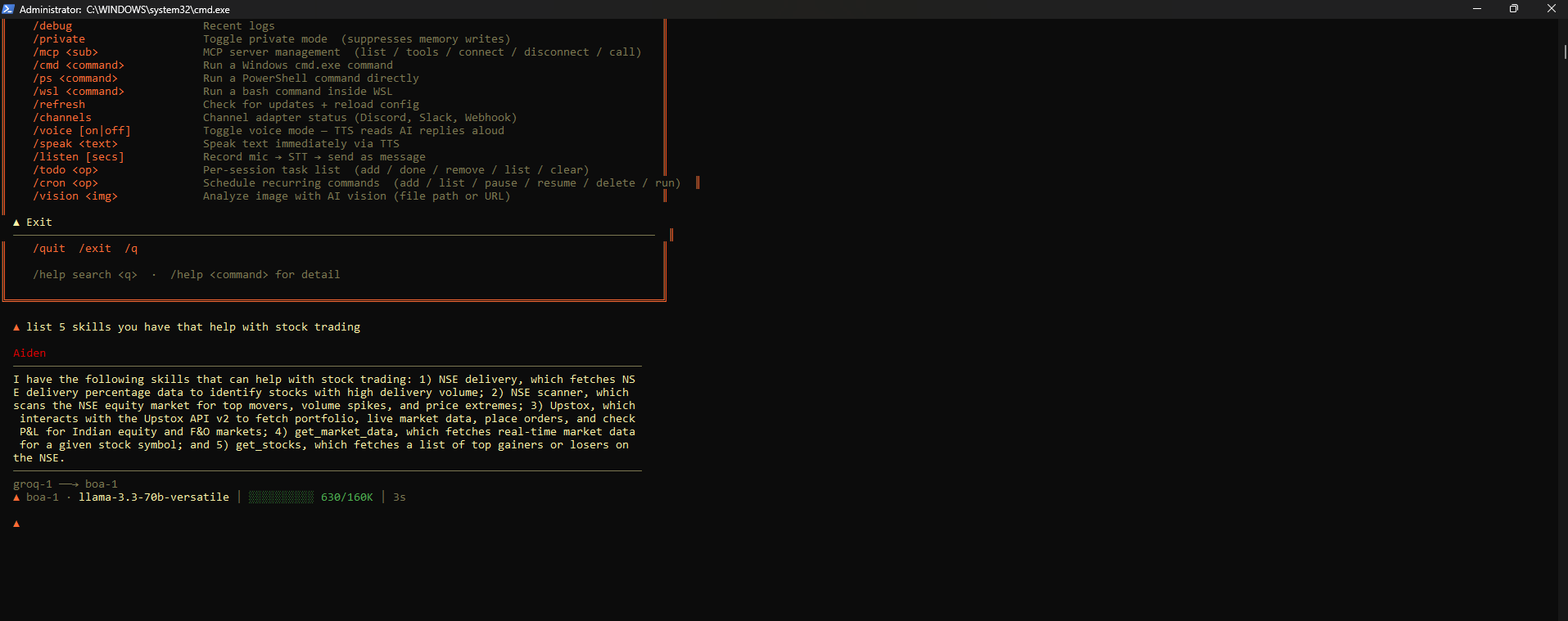

Terminal (TUI)

Full command palette, 1500+ skills, 89+ tools, automatic provider routing (Groq → BOA → Ollama). Runs in any terminal.

Desktop app

Full chat interface with live activity panel. Local-first, connects to Ollama or any of 14+ cloud providers via your own API key.

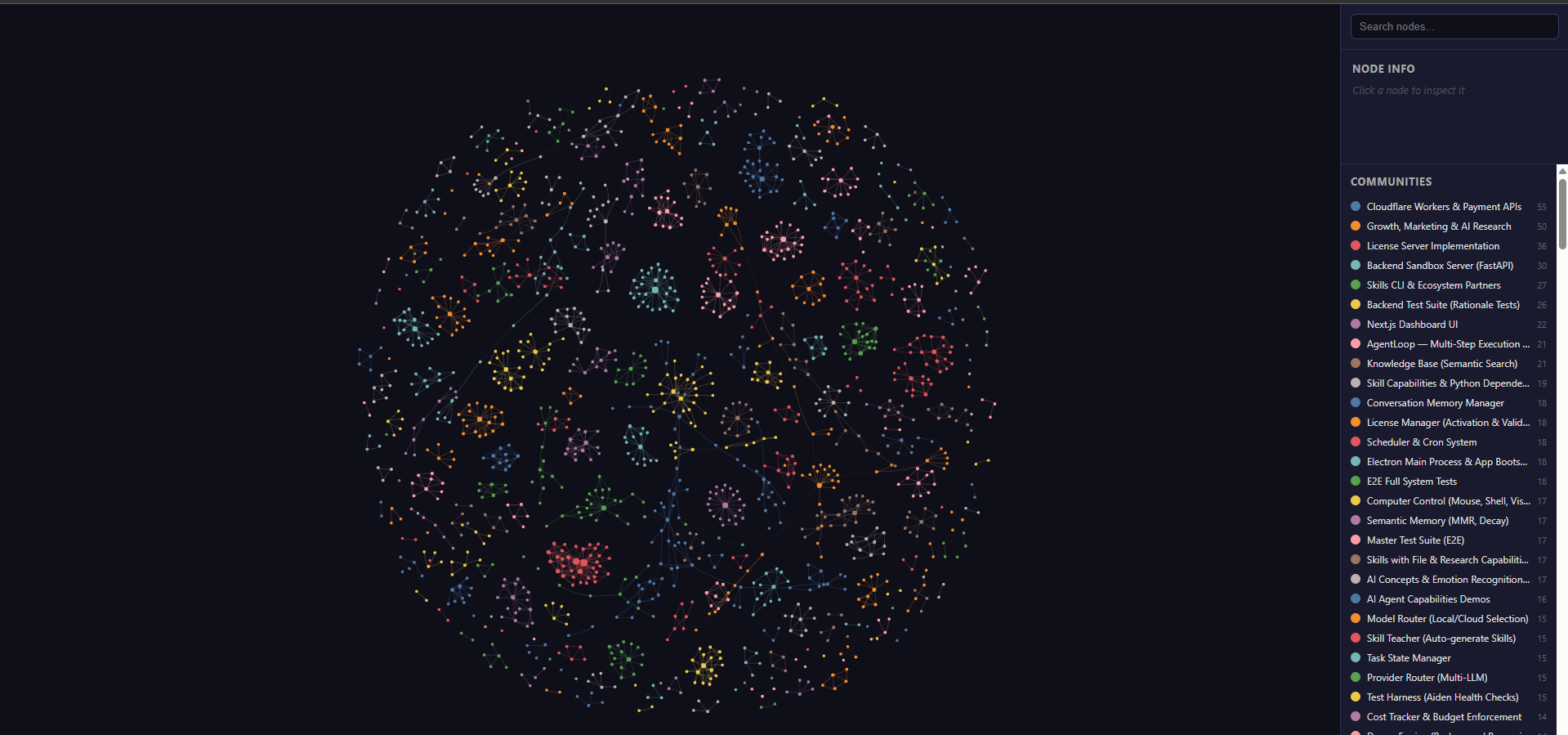

Memory graph

6-layer memory visualized — every conversation, task, and learned pattern becomes a node in the knowledge graph. Fully local, persisted to disk, searchable.

Features

| Category | What Aiden does |

|---|---|

| Inference & providers | Local Ollama (Llama 3, Mistral, Qwen, Gemma, Phi…) with optional cloud fallback to OpenAI, Anthropic, Groq, Cerebras, NVIDIA NIM, OpenRouter, and more — 14+ providers including custom OpenAI-compatible endpoints |

| 89+ tools | Web search, file read/write, shell execution, Playwright browser automation, screen capture & OCR, calendar, email (IMAP/SMTP), code execution sandbox, clipboard, system info |

| 1500+ skills | Composable plugins each with a SKILL.md prompt, tool implementations, and optional sandbox runner — install per-session or globally |

| Subagent swarm | Spawn N parallel agents on any task; vote, merge, or pick the best result automatically |

| 6-layer memory | Episodic (in-context), BM25 keyword, vector semantic, procedural (skill), goal tracking, and LESSONS.md permanent-failure moat that grows every session |

| Voice | Speech-to-text (Groq → OpenAI → local Whisper.cpp) + text-to-speech (Edge TTS → ElevenLabs → Windows SAPI); full offline voice loop |

| Channel adapters | Discord, Slack, Telegram, WhatsApp, Email, Webhook, Twilio — any channel triggers the same agent loop |

| Computer use | Screenshots, screen state reader, GUI automation via keyboard/mouse when asked — full OS control mode |
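The "vote" step of the subagent swarm can be illustrated with a simple majority vote over the answers returned by parallel agents. This is only a sketch of one possible voting rule; the `majorityVote` function is hypothetical, and the README does not say how Aiden actually compares or merges results.

```typescript
// Pick the most frequent answer. On a tie, the answer that first reached
// the top count wins. Returns undefined for an empty list.
function majorityVote(answers: string[]): string | undefined {
  const counts = new Map<string, number>();
  let best: string | undefined;
  let bestCount = 0;
  for (const a of answers) {
    const n = (counts.get(a) ?? 0) + 1;
    counts.set(a, n);
    if (n > bestCount) {
      best = a;
      bestCount = n;
    }
  }
  return best;
}

// Illustrative inputs: three agents answered, two of them agree.
const winner = majorityVote(["4", "5", "4"]);
const noAnswers = majorityVote([]);
```

Exact string matching only works when agents return short, normalized answers; free-form replies would need a merge or scoring step instead.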

Architecture

User input (any channel)

│

▼

┌─────────────┐

│ Planner │ ← breaks task into steps

└──────┬──────┘

│

▼

┌─────────────┐ ┌──────────────────┐

│ Agent loop │────▶│ Tool dispatcher │──▶ 89+ tools

│ agentLoop │ └──────────────────┘

└──────┬──────┘

│

▼

┌─────────────────────────────────┐

│ Memory (6 layers) │

│ episodic · BM25 · vector · │

│ procedural · goal · LESSONS.md │

└─────────────────────────────────┘

│

▼

┌─────────────┐

│ Provider │ ← self-healing chain, 14+ providers

│ router │

└─────────────┘

│

▼

Response (streamed to originating channel)

See ARCHITECTURE.md for a full layer-by-layer breakdown, data flow diagrams, and the skill system design.
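The provider router at the bottom of the diagram can be pictured as an ordered fallback chain. The sketch below shows that idea only, as one plausible reading of "self-healing chain"; the `Provider` interface, the `routeWithFallback` function, and the stub providers are hypothetical and do not reflect Aiden's actual router.

```typescript
// Hypothetical provider interface; Aiden's real router is not shown in this README.
interface Provider {
  name: string;
  complete(prompt: string): Promise<string>;
}

// Try each provider in order and return the first successful reply.
// If every provider throws, report all collected errors.
async function routeWithFallback(
  chain: Provider[],
  prompt: string,
): Promise<{ provider: string; reply: string }> {
  const errors: string[] = [];
  for (const p of chain) {
    try {
      return { provider: p.name, reply: await p.complete(prompt) };
    } catch (err) {
      errors.push(`${p.name}: ${err instanceof Error ? err.message : String(err)}`);
    }
  }
  throw new Error(`all providers failed: ${errors.join("; ")}`);
}

// Stub providers for illustration: the first always fails, the second answers.
const flakyStub: Provider = {
  name: "cloud-stub",
  complete: async () => {
    throw new Error("rate limited");
  },
};
const localStub: Provider = {
  name: "local-stub",
  complete: async (prompt) => `echo: ${prompt}`,
};
```

Calling `routeWithFallback([flakyStub, localStub], "hi")` resolves with the reply from `local-stub`, because the first provider's error is caught and the loop moves on.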

Configuration

Copy .env.example to .env in the Aiden data directory.

cp .env.example .env

Key environment variables:

| Variable | Default | Notes |
|---|---|---|
| `OLLAMA_HOST` | `http://127.0.0.1:11434` | Override if Ollama runs on a different host/port |
| `OLLAMA_MODEL` | `mistral-nemo:12b` | Default chat model |
| `ANTHROPIC_API_KEY` | — | Optional cloud fallback |
| `OPENAI_API_KEY` | — | Optional cloud fallback |
| `GROQ_API_KEY` | — | Free tier: fast Llama 3 inference |
| `DAILY_BUDGET_USD` | `5.00` | Hard cap on daily cloud API spend |

See .env.example for the full list of ~90 variables covering voice, messaging integrations, search, computer use, and more.
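As an illustration of how the variables in the table might be consumed, here is a minimal sketch of a config reader that applies the documented defaults. The `AidenEnvConfig` type and the `readConfig` function are hypothetical; only the variable names and default values come from the table.

```typescript
// Hypothetical config shape; only the env variable names and defaults
// are taken from the README table.
interface AidenEnvConfig {
  ollamaHost: string;
  ollamaModel: string;
  dailyBudgetUsd: number;
}

// Read settings from an env-like object, falling back to documented defaults.
function readConfig(env: Record<string, string | undefined>): AidenEnvConfig {
  const budget = Number(env.DAILY_BUDGET_USD ?? "5.00");
  if (!Number.isFinite(budget) || budget < 0) {
    throw new Error("DAILY_BUDGET_USD must be a non-negative number");
  }
  return {
    ollamaHost: env.OLLAMA_HOST ?? "http://127.0.0.1:11434",
    ollamaModel: env.OLLAMA_MODEL ?? "mistral-nemo:12b",
    dailyBudgetUsd: budget,
  };
}

// With no variables set, every field takes its default.
const defaultConfig = readConfig({});
// Overriding the host and budget, as you might for a remote Ollama instance.
const overriddenConfig = readConfig({
  OLLAMA_HOST: "http://10.0.0.5:11434",
  DAILY_BUDGET_USD: "1.50",
});
```

In a Node.js process you would pass `process.env` as the argument. Validating the budget at startup means a malformed value fails early rather than silently disabling the spend cap.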

Contributing

Contributions are welcome — see CONTRIBUTING.md for the full guide.

- Bug fixes and new skills are the easiest entry points

- All contributors sign the CLA once via PR comment

- Follow Conventional Commits

- Run `npx tsc --noEmit` before opening a PR

Resources

| Download installer | Latest release |

| Releases & changelog | github.com/taracodlabs/aiden-releases |

| License | AGPL-3.0 core · Apache-2.0 skills |

Changelog

v3.11.0 — 2026-04-25

Custom provider routing

- Full support for custom OpenAI-compatible endpoints via `customProviders` in `devos.config.json`: add any endpoint with a `baseUrl`, `apiKey`, and `model`; no code changes required
- Fixed silent Groq fallback bug in `callLLM`: custom providers now correctly route to their configured `baseUrl` instead of falling back to the Groq URL
- Fixed `raceProviders` pin-first logic: `primaryProvider` is now resolved from the `customProviders` list when not found in `providers.apis`
- Fixed health/status endpoint (`/api/providers`) to include custom providers in the returned list, tier-sorted
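The lookup order described in the `raceProviders` fix can be sketched as follows. This is an illustration under stated assumptions: the field names `baseUrl`, `apiKey`, `model`, `primaryProvider`, `customProviders`, and `providers.apis` come from the changelog, but the `name` field, the overall config shape, the `resolvePrimaryBaseUrl` function, and the example URLs are hypothetical.

```typescript
// Hypothetical config shape; the `name` field is an assumption.
interface CustomProvider {
  name: string;
  baseUrl: string;
  apiKey: string;
  model: string;
}

interface ProviderConfig {
  primaryProvider: string;
  providers: { apis: { name: string; baseUrl: string }[] };
  customProviders: CustomProvider[];
}

// Look up the primary provider in the built-in list first, then in
// customProviders. Returns undefined rather than guessing a default URL.
function resolvePrimaryBaseUrl(cfg: ProviderConfig): string | undefined {
  const builtIn = cfg.providers.apis.find((p) => p.name === cfg.primaryProvider);
  if (builtIn) return builtIn.baseUrl;
  return cfg.customProviders.find((p) => p.name === cfg.primaryProvider)?.baseUrl;
}

// Placeholder values for illustration only.
const sampleConfig: ProviderConfig = {
  primaryProvider: "my-endpoint",
  providers: { apis: [{ name: "built-in", baseUrl: "https://builtin.example.com/v1" }] },
  customProviders: [
    {
      name: "my-endpoint",
      baseUrl: "https://llm.example.com/v1",
      apiKey: "placeholder-key",
      model: "placeholder-model",
    },
  ],
};

const resolvedUrl = resolvePrimaryBaseUrl(sampleConfig);
```

Returning `undefined` for an unknown provider, instead of a hard-coded URL, avoids the kind of silent fallback the changelog entry describes.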

BayOfAssets Claude Haiku 4.5 as default primary

- Swapped default primary provider to BayOfAssets Claude Haiku 4.5 (`claude-haiku-4-5`) at tier 1
- Groq and Gemini remain as tier-2 fallback chain

Memory & greeting

- Fixed `buildGreetingPreamble` double-label bug: `"Active goals: Active goals:\n..."` → compact single-line goal titles
- Added empty-string guard on greeting reply: blank preamble no longer produces `"Currently tracking: . What do you need?"`

v3.10.0 — 2026-04-09

See releases page for older changelogs.

License

| Component | License |
|---|---|
| Core (`src/`, `cli/`, `api/`, `core/`, `providers/`, `dashboard-next/`) | AGPL-3.0-only |
| Skills (`skills/`) | Apache-2.0 |

Commercial use

Aiden's core is AGPL-3.0. You can self-host, modify, and study it freely. Embedding it in a commercial product or offering it as a hosted service requires either releasing your modifications under AGPL-3.0 or purchasing a commercial license.

Skills in skills/ are Apache-2.0 and can be used in commercial products without copyleft obligations.

For commercial licensing and enterprise deployments: aiden.taracod.com/contact?type=enterprise

Built by Taracod · Built by Shiva Deore · AGPL-3.0
