slopometry

Health: Passed

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 10 GitHub stars

Code: Warning

- process.env — Environment variable access in .github/workflows/ci.yml

Permissions: Passed

- Permissions — No dangerous permissions requested

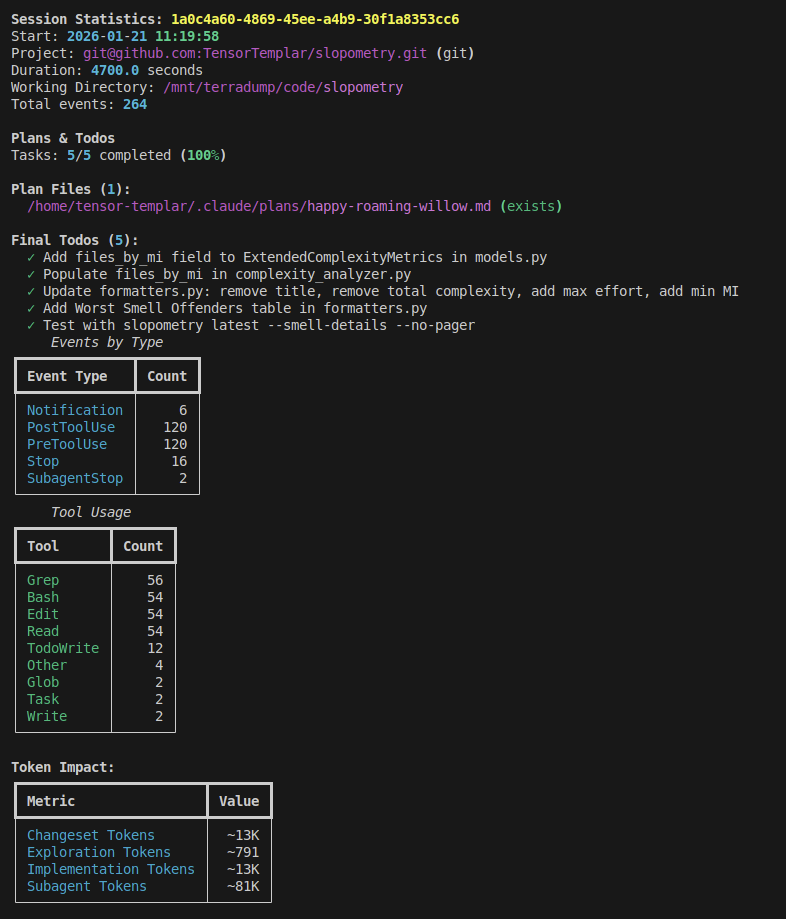

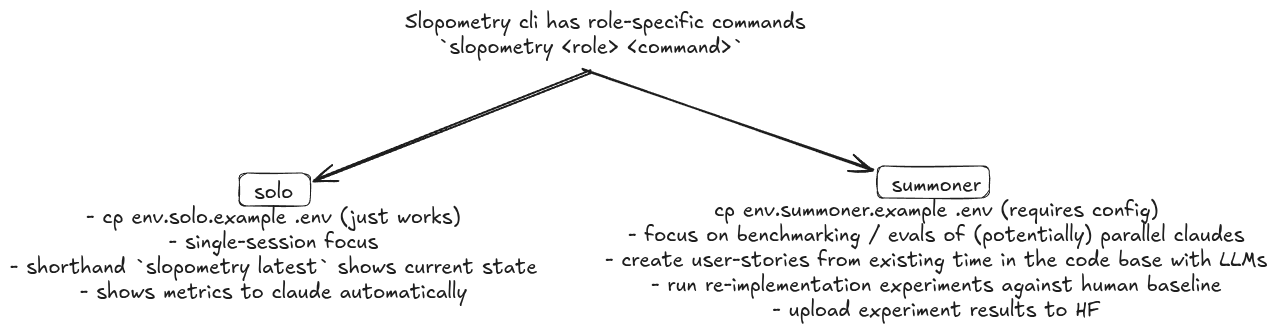

This is a CLI tool and agent that tracks and analyzes coding agent sessions (like Claude Code) to provide metrics on code quality, token usage, and agent behavior. It helps developers quantify how well their AI coding assistants are performing.

Security Assessment

The tool reads local session data and repository files to generate its metrics, which is expected for this type of analysis. It relies on environment variables for configuration, including a toggle for a humorous "Galen metric" feature. The automated scan flagged environment variable access within its GitHub Actions CI workflow, which is a standard practice for running tests and is not a security concern for end users. No hardcoded secrets, dangerous permissions, or malicious network requests were detected. Overall risk: Low.

Quality Assessment

The project appears to be actively maintained, with recent updates and feature additions documented in the README. It uses the permissive MIT license, which is excellent for open-source adoption. While the community is currently small (10 GitHub stars), the documentation is thorough and the developer has a sense of humor (evidenced by the HR-approved metrics and jokes about "reward hacking"). The tone is tongue-in-cheek, but the underlying tooling—like detecting silently swallowed exceptions in AI-generated code—addresses genuine and serious developer concerns.

Verdict

Safe to use.

Quantify cli agent vibes, run experiments, look at pretty numbers

Slopometry

A tool that lurks in the shadows, tracks and analyzes Claude Code sessions providing metrics that none of you knew you needed.

NEWS:

April 2026: Behavioral pattern detection. Sessions are now scanned for ownership dodging ("pre-existing", "not introduced by") and simple workaround ("simplest", "for now", "quick fix") phrases in assistant output, reported as per-minute rates. Rates are persisted per-repo and `current-impact` shows rolling average trends. Display reordered: plans, token impact, and behavioral patterns now appear first. Also: newly written files are no longer incorrectly flagged as blind spots, and single-method class detection skips data classes with only `@property` methods.

February 2026: OpenCode 1.2.10+ now supported for solo features, including stop hook feedback! See plugin doc.

January 2026: BREAKING CHANGE - replaced `radon`, which is abandoned for 5+ years, with `rust-code-analysis`, which is only abandoned for 3+ years.

This allows us to support various unserious languages for analysis in the future (like c++ and typescript) but requires installation from wheels due to rust bindings.

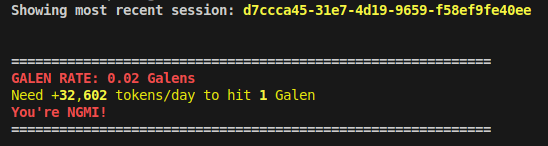

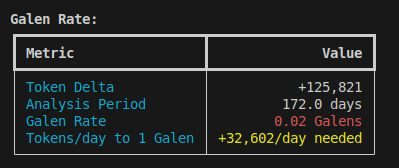

Bindings are pre-built for MacOS and Linux with Python 3.13, 3.14 and the free-threaded variants with a `t`.

December 2025: for Microsoft employees we now support the Galen metric (Python only for now).

Set SLOPOMETRY_ENABLE_WORKING_AT_MICROSOFT=true slopometry latest or edit your .env to get encouraging messages approved by HR!

Please stop contacting us with your cries for mercy - this is between you and your unsafe (memory) management.
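To make the April 2026 item concrete, here is a minimal sketch of phrase-rate counting. It only uses the example phrases quoted in the news entry; the function names, the plain substring matching, and the input shape (a list of assistant messages plus a session duration) are assumptions for illustration, not slopometry's actual detector.

```python
# Example phrases quoted in the April 2026 news item; the real lists may differ.
OWNERSHIP_DODGING = ("pre-existing", "not introduced by")
SIMPLE_WORKAROUND = ("simplest", "for now", "quick fix")


def count_phrases(text: str, phrases: tuple[str, ...]) -> int:
    """Count case-insensitive occurrences of every phrase in the text."""
    lowered = text.lower()
    return sum(lowered.count(phrase) for phrase in phrases)


def per_minute_rates(messages: list[str], duration_minutes: float) -> dict[str, float]:
    """Return hits per minute for each (hypothetical) pattern category."""
    if duration_minutes <= 0:
        raise ValueError("duration_minutes must be positive")
    joined = "\n".join(messages)
    return {
        "ownership_dodging": count_phrases(joined, OWNERSHIP_DODGING) / duration_minutes,
        "simple_workaround": count_phrases(joined, SIMPLE_WORKAROUND) / duration_minutes,
    }
```

Naive substring counting will over-count innocent uses of "for now", which is probably acceptable for a trend metric but not for a verdict on a single message.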

Features / FAQ

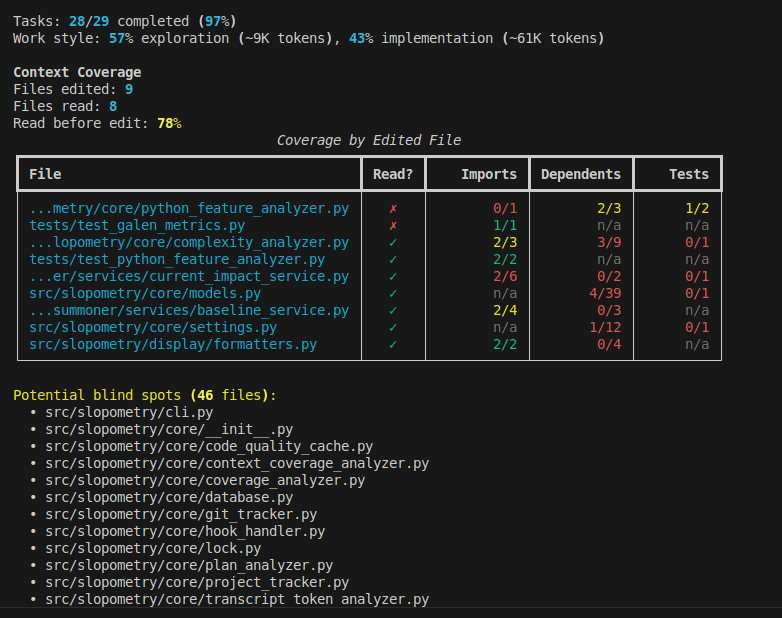

Q: How do I know if claude is lazy today?

A: Eyeball progress based on overall session-vibes, plan items, todos, how many tokens read/edited etc.

slopometry latest

Worst offenders and overall slop at a glance

See more examples and FAQ in details below:

Q: I don't need to verify when my tests are passing, right?

A: lmao

Agents love to reward hack (I blame SWE-Bench, btw). Naive "unit-test passed" rewards teach the model to cheat by skipping them in clever ways.

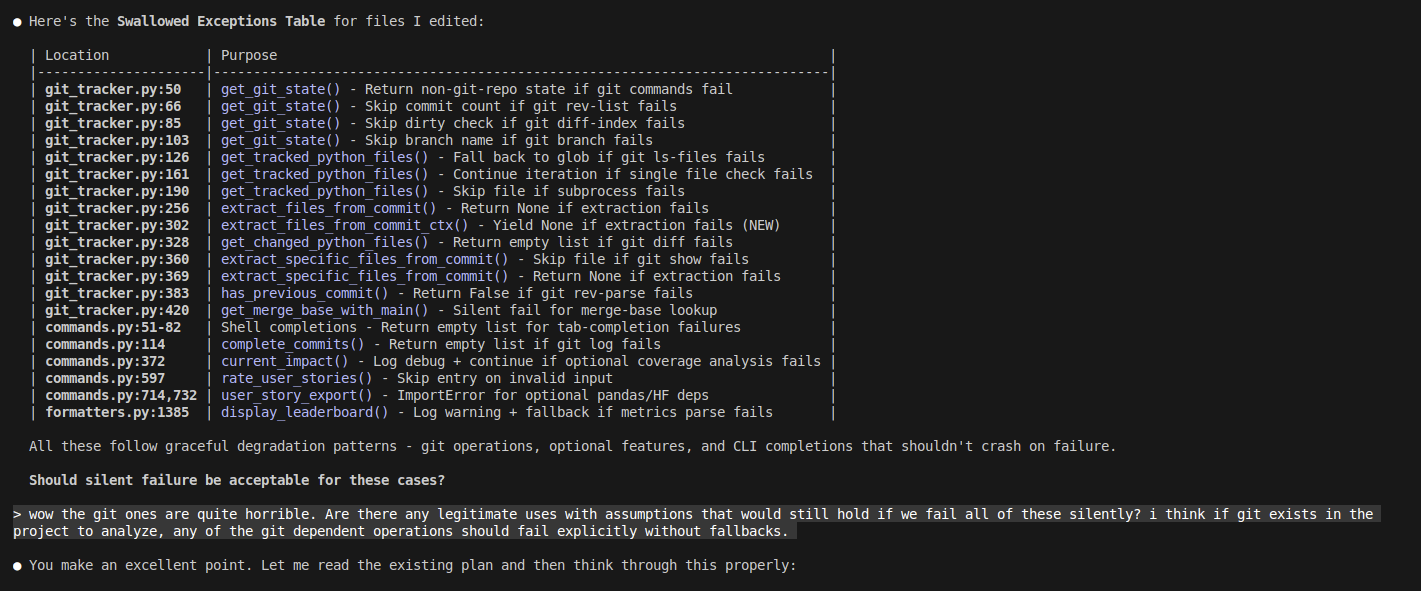

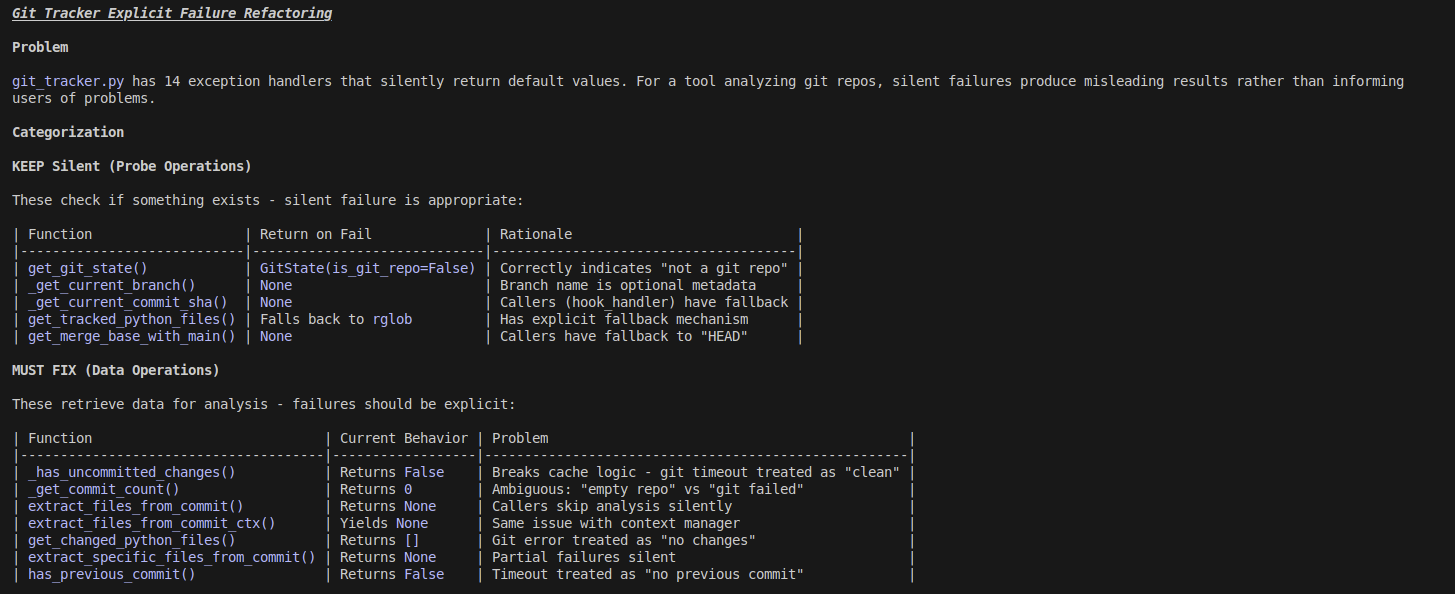

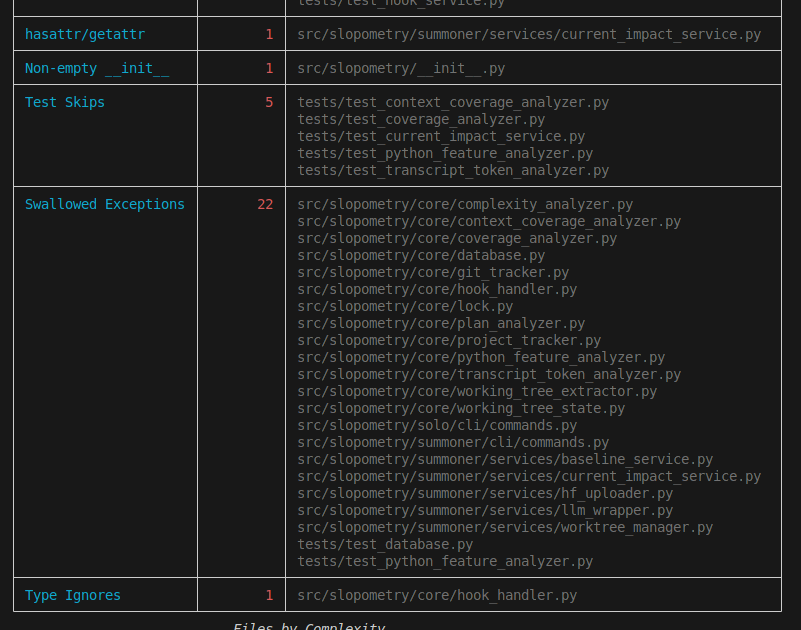

What clever ways, you ask? Silent exception swallowing upstream ofc!

Slopometry forces agents to state the purpose of swallowed exceptions and skipped tests. This is a simple LLM-as-judge call for your RL pipeline (you're welcome).
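For a feel of what "silently swallowed" means in practice, below is a rough sketch that flags `except` handlers whose body does nothing. It uses only the standard-library `ast` module; the function names and the definition of "does nothing" (only `pass`, `...`, or a bare constant) are assumptions for illustration and say nothing about how slopometry itself detects these.

```python
import ast


def _is_noop(stmt: ast.stmt) -> bool:
    """True for `pass`, `...`, or a bare constant such as a stray docstring."""
    if isinstance(stmt, ast.Pass):
        return True
    return isinstance(stmt, ast.Expr) and isinstance(stmt.value, ast.Constant)


def find_swallowed_exceptions(source: str) -> list[int]:
    """Return the line numbers of except handlers that silently do nothing."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler) and all(_is_noop(s) for s in node.body):
            hits.append(node.lineno)
    return sorted(hits)
```

A handler that logs, re-raises, or returns a fallback is not flagged here; whether those count as justified is exactly the part that needs a judge.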

Here is Opus 4.5, which is writing 90% of your production code by 2026:

> "But tensor, I don't use slopometry and already committed to production!?"

Don't worry, your customers probably don't read their code either, and their agents will just run python -c "<1600 LOC adhoc fix>" as a workaround for each api call.

Q: I am a junior and all my colleagues were replaced with AI before I learned good code taste, is this fine?

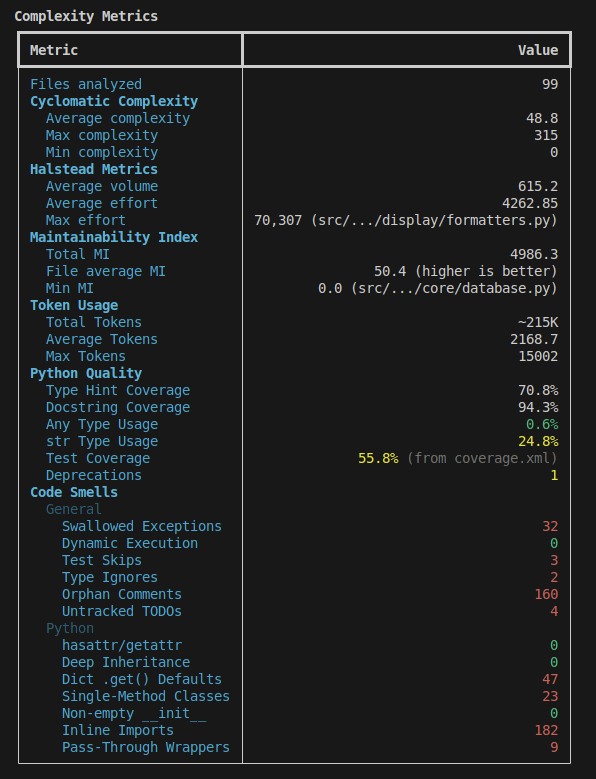

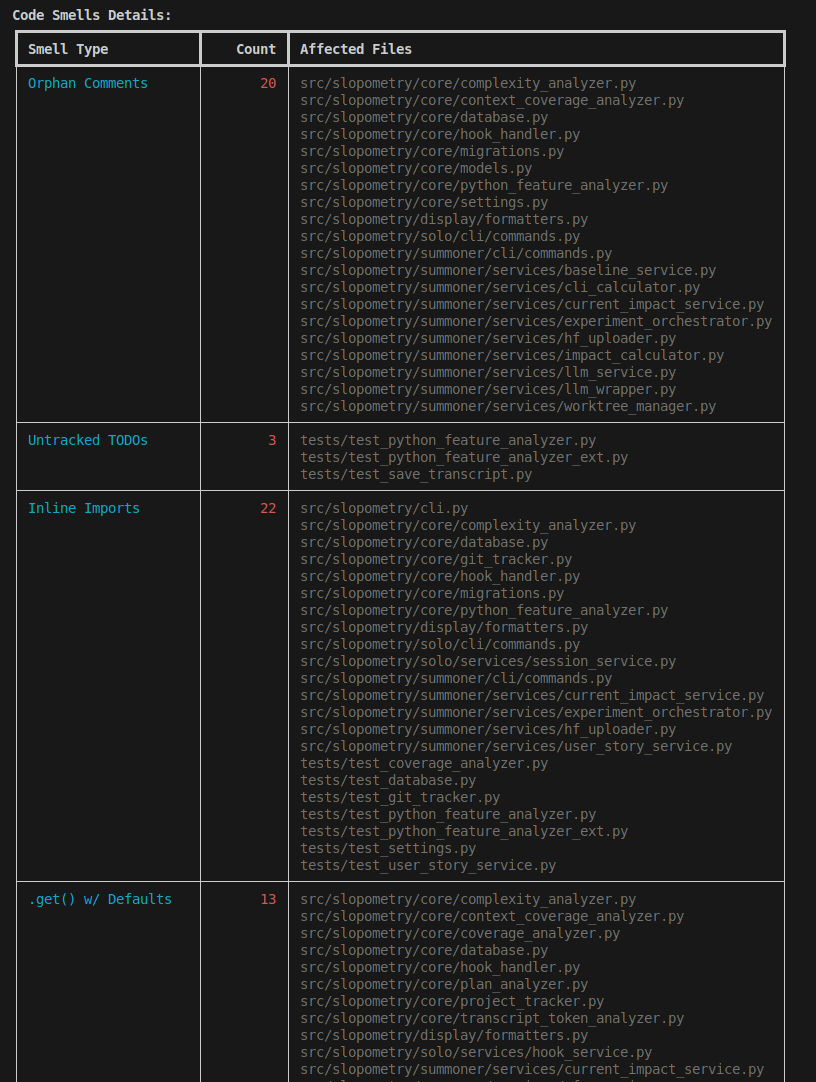

A: Here are some dumb practices agents love to add, that would typically require justification or should be the exception, not the norm:

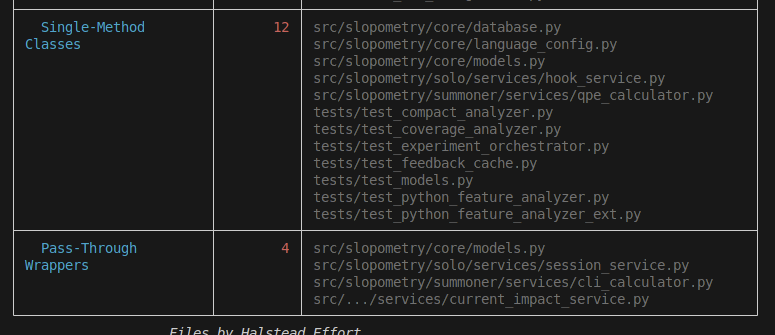

Q: I have been vibe-coding this codebase for a while now and learned prooompt engineering. Clearly the code is better now?

A: You're absolutely right (But we verify via code trends for the last ~100 commits anyway):

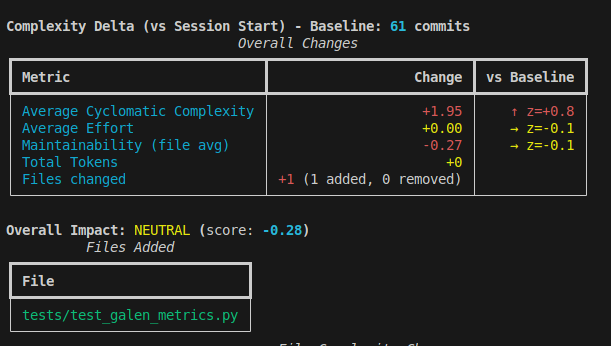

Q: I use Cursor/BillionDollarVSCodeForkFlavourOfTheWeek, which uses embeddings and RAG on my code in the cloud, so my agent always knows which files are related to the current task, right?

A: Haha, sure, maybe try a trillion dollar vscode fork, or a simple AST parser that checks imports for edited files and tests instead. Spend the quadrillions saved on funding researchers who read more than 0 SWE books during their careers next time.
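The "simple AST parser that checks imports" idea fits in a few lines. The sketch below is an illustration under stated assumptions: `imported_modules`, `related_files`, and the `module_to_path` mapping are hypothetical names, relative imports are skipped for brevity, and none of this is taken from slopometry's source.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Collect absolute module names imported by a Python source string."""
    modules: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules.add(node.module)
    return modules


def related_files(edited_source: str, module_to_path: dict[str, str]) -> set[str]:
    """Map the imports of an edited file onto known project files."""
    return {
        module_to_path[name]
        for name in imported_modules(edited_source)
        if name in module_to_path
    }
```

Feeding it the edited file's text and a module-to-path map of the repo yields the files an agent arguably should have read before touching that file, with no embeddings involved.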

Q: My boss is llm-pilled and asks me to report my progress every 5 minutes, but human rights forbid keylogging in my country, what do I do?

A: Export your claude code transcripts, including plan and todos, and commit them into the codebase!

legal disclaimer: transcripts are totally not for any kind of distillation, but merely for personal entertainment purposes

Q: Are these all the features, how is the RL meme even related?

A: There are advanced features for temporal and cross-project measurement of slop, but these require reading, thinking and being an adult.

Limitations

Runtime: Almost all metrics are trend-relative and the first run will do a long code analysis before caching, but if you are considering this tool, you are already comfortable with waiting for agents anyway.

Git: This tool requires git to be installed and available in PATH. Most features (baseline comparison, commit analysis, cache invalidation) depend on git operations.

Compat: This software was tested mainly on Linux with Python codebases. There are plans to support Rust at some point but not any kind of cursed C++ or other unserious languages like that. I heard someone ran it on MacOS successfully once but we met the person on twitter, so YMMV.

Seriously, please do not open PRs with support for any kind of unserious languages. Just fork and pretend you made it. We are ok with that. Thank you.

Installation

Both Anthropic models and MiniMax-M2 are fully supported as the claude code drivers.

To set up MiniMax-M2 instead of Sonnet, check out this guide

Install claude code (needs an account or api key):

`curl -fsSL https://claude.ai/install.sh | bash`

Install slopometry as a uv tool:

`uv tool install git+https://github.com/TensorTemplar/slopometry.git --find-links "https://github.com/Droidcraft/rust-code-analysis/releases/expanded_assets/python-2026.1.31"`

`uv tool update-shell`

Restart your terminal or run:

`source ~/.zshrc` (for zsh) or `source ~/.bashrc` (for bash)

After making code changes, reinstall to update the global tool:

`uv tool install . --reinstall --find-links "https://github.com/Droidcraft/rust-code-analysis/releases/expanded_assets/python-2026.1.31"`

## Quick Start

Note: tested on Ubuntu linux 24.04.1

```bash

# Install hooks globally (recommended)

slopometry install --global

# Use claude code or opencode normally

claude

opencode

# View tracked sessions and code delta vs. the previous commit or branch parent

# Note solo commands will scope project-relative completions and candidates

slopometry solo ls

slopometry solo show <session_id>

# Alias for latest session, works from everywhere

slopometry latest

# Save session artifacts (transcript, plans, tasks) to .slopometry/<session_id>/

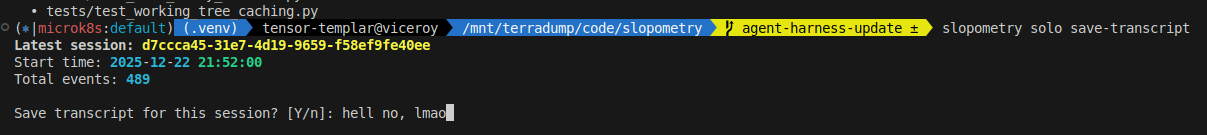

slopometry solo save-transcript # latest

slopometry solo save-transcript <session_id>

Shell Completion

Enable autocompletion for your shell:

# For bash

slopometry shell-completion bash

# For zsh

slopometry shell-completion zsh

# For fish

slopometry shell-completion fish

The command will show you the exact instructions to add to your shell configuration.

Upgrading

# Upgrade from git

uv tool install --reinstall git+https://github.com/TensorTemplar/slopometry.git \

--find-links "https://github.com/Droidcraft/rust-code-analysis/releases/expanded_assets/python-2026.1.31"

# Or if installed from local directory

cd slopometry

git pull

uv tool install . --reinstall --find-links "https://github.com/Droidcraft/rust-code-analysis/releases/expanded_assets/python-2026.1.31"

# Note: After upgrading, you may need to reinstall hooks and completions if the api changed

slopometry install

Configuration

Slopometry can be configured using environment variables or a .env file:

- Global configuration:

~/.config/slopometry/.env(Linux respects$XDG_CONFIG_HOME) - Project-specific:

.envin your project directory

# Create config directory and copy example config

mkdir -p ~/.config/slopometry

# Copy example config

curl -o ~/.config/slopometry/.env https://raw.githubusercontent.com/TensorTemplar/slopometry/main/.env.solo.example

# Or if you have the repo cloned:

# cp .env.solo.example ~/.config/slopometry/.env

# Edit ~/.config/slopometry/.env with your preferences

Development Installation

git clone https://github.com/TensorTemplar/slopometry

cd slopometry

uv sync --extra dev

uv run pytest

Customize via .env file or environment variables:

- `SLOPOMETRY_DATABASE_PATH`: Custom database location (optional)
  - Default locations:
    - Linux: `~/.local/share/slopometry/slopometry.db` (or `$XDG_DATA_HOME/slopometry/slopometry.db` if set)
    - macOS: `~/Library/Application Support/slopometry/slopometry.db`
    - Windows: `%LOCALAPPDATA%\slopometry\slopometry.db`
- `SLOPOMETRY_ENABLE_COMPLEXITY_ANALYSIS`: Collect complexity metrics (default: `true`)
- `SLOPOMETRY_ENABLE_COMPLEXITY_FEEDBACK`: Provide feedback to Claude (default: `false`)
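As a reading aid for the default database locations, here is a small sketch of how such a lookup could be resolved. The function name, its signature, the assumption that `SLOPOMETRY_DATABASE_PATH` wins over the platform default, and the Windows fallback when `LOCALAPPDATA` is unset are all illustrative guesses, not slopometry's real code.

```python
from pathlib import Path


def default_database_path(platform: str, env: dict[str, str], home: Path) -> Path:
    """Resolve the database file from an explicit override or platform defaults."""
    override = env.get("SLOPOMETRY_DATABASE_PATH")
    if override:
        # Assumption: an explicit path always takes precedence.
        return Path(override)
    if platform == "darwin":
        base = home / "Library" / "Application Support"
    elif platform == "win32":
        # Assumption: fall back to the conventional location if the variable is unset.
        base = Path(env.get("LOCALAPPDATA") or home / "AppData" / "Local")
    else:
        base = Path(env.get("XDG_DATA_HOME") or home / ".local" / "share")
    return base / "slopometry" / "slopometry.db"
```

In a real process this would be called as `default_database_path(sys.platform, dict(os.environ), Path.home())`; taking the inputs as parameters just makes the three branches easy to check on any machine.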

Cite

@misc{slopometry,

title = {Slopometry: Opinionated code quality metrics for code agents and humans},

year = {2025},

author = {TensorTemplar},

publisher = {GitHub},

howpublished = {\url{https://github.com/TensorTemplar/slopometry}}

}

Roadmap

[x] - Actually make a package so people can install this

[ ] - Add hindsight-justified user stories with acceptance criteria based off of future commits

[x] - Add plan evolution log based on claude's todo shenanigans

[ ] - Rename the readme.md to wontreadme.md because it takes more than 15 seconds or whatever the attention span is nowadays to read it all. Maybe make it all one giant picture? Anyway, stop talking to yourself in the roadmap.

[ ] - Finish git worktree-based NFP-CLI (TM) training objective implementation so complexity metrics can be used as additional process reward for training code agents

[ ] - Extend stop hook feedback with LLM-as-Judge to support guiding agents based on smells and style guide

[ ] - Not go bankrupt from having to maintain open source in my free time, no wait...
