claude-linkedin-automation

Health Warning

- License — License: MIT

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Low visibility — Only 6 GitHub stars

Code Passed

- Code scan — Scanned 1 file during light audit, no dangerous patterns found

Permissions Passed

- Permissions — No dangerous permissions requested

This tool is a custom skill that automates the management of a professional LinkedIn profile. It enables an AI agent to autonomously create daily posts, engage with other users' content, and triage direct messages based on a predefined user identity and strategy.

Security Assessment

The static code scan (covering 1 file) did not identify any dangerous code patterns, hardcoded secrets, or requests for dangerous local permissions. However, the functional risk remains High. The tool inherently requires network access to interact with LinkedIn, meaning it will handle sensitive browser session tokens or authentication cookies. Additionally, the tool's own documentation explicitly warns that automated interactions likely violate LinkedIn's User Agreement. Using this software carries a severe functional risk of permanent account suspension or banning.

Quality Assessment

The project is actively maintained, with its most recent code push occurring today. It benefits from clear documentation and uses the standard, permissive MIT license. However, community trust and overall visibility are very low. With only 6 GitHub stars, the tool has not received widespread public review or testing, making it difficult to independently verify the developer's claims of zero detection incidents.

Verdict

Use with caution — while the scanned code lacks malicious technical patterns, the functional reality of handling sensitive social media tokens and violating platform terms of service poses a high risk to your LinkedIn account.

A battle-tested skill for managing a professional LinkedIn profile autonomously using Claude AI. 21 automations, 12+ weeks, 0 detection incidents.

Claude LinkedIn Automation

Autonomous LinkedIn management, validated in production.

27+ days. 10 tasks. Zero detection. 3.9% engagement rate.

Install • How It Works • Results • Anti-Detection • Compatibility • Contributing

Legal Disclaimer — This skill documents an autonomous LinkedIn management system. Automated interactions may violate LinkedIn's User Agreement. Use at your own risk. The authors assume no liability for account restrictions or bans. Published for educational and research purposes.

What Is This?

A custom skill for Claude that turns your AI assistant into a full-stack LinkedIn manager. It posts daily, engages with your network, triages DMs, audits itself for detection risk, and reports weekly — all autonomously.

Every rule is extracted from 27+ days of real production data on a live Italian profile. Not theory. Not best guesses. Empirical evidence from daily audits, scored engagement sessions, and documented incidents that shaped the system.

Works in any language. The wizard was battle-tested in Italian, but the system is language-agnostic. Phase 1 captures your identity, voice, and vocabulary in whatever language you operate in — Claude generates all content in your language. The architecture (pillar calendar, anti-detection rules, NDI scoring, task scheduling) is universal.

The 5-Phase Wizard

Type /linkedin and Claude walks you through setup:

Phase 1 IDENTITY 15 questions to define your voice, vocabulary, red flags

Phase 2 STRATEGY 7-day pillar calendar, post format, humanization rules

Phase 3 ENGAGEMENT Commenting rules, anti-detection, epistemic verification

Phase 4 TASK PLAN Review every task before anything gets automated

Phase 5 CREATE & RUN Deploy tasks, monitor, iterate weekly

Nothing is automated until you explicitly approve. Phase 4 is a hard gate — Claude will not proceed without your "approved."

Install

git clone https://github.com/videomakingio-gif/claude-linkedin-automation.git

cd claude-linkedin-automation

chmod +x install.sh && ./install.sh

The interactive installer walks you through 3 choices:

| Step | Options |

|---|---|

| Scope | Global (all projects) / Project (current only) / Both |

| IDE | Claude Code / Cursor / Windsurf / Any combination |

| Confirm | Review and approve before anything is created |

Quick install (skip the wizard):

./install.sh --global # All projects, Claude Code

./install.sh --project # Current project only

./install.sh --uninstall # Remove everything, all IDEs

Update: git pull — Claude Code uses a symlink, so the skill stays in sync. Cursor/Windsurf use file copies — re-run the installer after pulling.

After installing, type /linkedin in any Claude session to start.

How It Works

Architecture

claude-linkedin-automation/

├── SKILL.md # The skill itself (5-phase wizard)

├── HUMAN-VOICE-LAYER.md # Anti-detection Level 2: structural naturalness

├── install.sh # Interactive installer

├── modules/

│ └── linkedin.md # Full module config (560 lines)

├── references/

│ ├── tov-framework.md # Voice: 10 rhetorical patterns, vocabulary, registers

│ ├── anti-detection-playbook.md # 7 rules (L1) + Level 2 structural tells, NDI formula

│ ├── content-templates.md # Day-by-day templates with worked examples

│ ├── epistemic-verification.md # 7-checkpoint fact verification gate

│ └── task-catalog.md # Full prompt templates for all 10 tasks

├── examples/

│ ├── weekly-plan.md # Real Week 3 content plan

│ └── engagement-session.md # Scored session with 5 comments

├── assets/ # Growth charts and dashboard

├── CHANGELOG.md

├── CONTRIBUTING.md

└── LICENSE

The 10 Tasks

| # | Task | Schedule | What It Does |
|---|---|---|---|
| 1 | linkedin-daily-post | Daily 8:00 | Publishes today's post + auto-comment after 20 min |
| 2 | linkedin-daily-engagement | Daily 9:00 | 25-min session: 8-10 likes + 5 comments |
| 3 | linkedin-reply-to-replies | Daily 16:00 | Responds to comment threads |
| 4 | linkedin-dm-prep | Daily 10:00 | Generates DM draft replies for human review |
| 5 | linkedin-news-scout | Daily 7:00 | Fetches niche news, flags content ideas |
| 6 | linkedin-experiment-audit | Daily 15:00 | Naturalness score, anti-pattern compliance |
| 7 | linkedin-weekly-planner | Sat 17:00 | Generates next week's 7 posts |
| 8 | linkedin-weekly-diary | Sat 19:00 | Compiles behind-the-scenes blog draft |
| 9 | linkedin-weekly-report | Sun 20:00 | Analytics: KPIs, per-post ranking, recommendations |
| 10 | linkedin-outreach-daily | Disabled | Cold outreach (opt-in) |

Minimum viable setup: Tasks 1 + 2 + 9. Three tasks, fully autonomous.
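For readers deploying through crontab, the schedule above can be sketched as a mapping from task number to a standard five-field cron expression. This is an illustrative sketch, not the repository's own configuration: `TASKS`, `MINIMUM_VIABLE`, `crontab_lines`, and the `run-task` command are hypothetical names, and the expressions assume the machine's clock is set to the profile's local timezone.

```python
# Hypothetical mapping of the ten tasks to five-field cron expressions
# (minute hour day-of-month month day-of-week; 0 = Sunday, 6 = Saturday).
# A schedule of None marks the task that is disabled by default.
TASKS = {
    1: ("linkedin-daily-post", "0 8 * * *"),
    2: ("linkedin-daily-engagement", "0 9 * * *"),
    3: ("linkedin-reply-to-replies", "0 16 * * *"),
    4: ("linkedin-dm-prep", "0 10 * * *"),
    5: ("linkedin-news-scout", "0 7 * * *"),
    6: ("linkedin-experiment-audit", "0 15 * * *"),
    7: ("linkedin-weekly-planner", "0 17 * * 6"),
    8: ("linkedin-weekly-diary", "0 19 * * 6"),
    9: ("linkedin-weekly-report", "0 20 * * 0"),
    10: ("linkedin-outreach-daily", None),
}

# Tasks 1 + 2 + 9, as named in the minimum viable setup.
MINIMUM_VIABLE = (1, 2, 9)


def crontab_lines(task_ids, command="run-task"):
    """Return crontab lines for the given task numbers, skipping disabled tasks.

    `command` is a placeholder for whatever launches a task in your setup.
    """
    lines = []
    for task_id in task_ids:
        name, schedule = TASKS[task_id]
        if schedule is None:  # disabled task, nothing to schedule
            continue
        lines.append(f"{schedule} {command} {name}")
    return lines
```

Calling `crontab_lines(MINIMUM_VIABLE)` yields three lines, one per task in the minimum viable setup; the outreach task produces no line until it is given a schedule.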

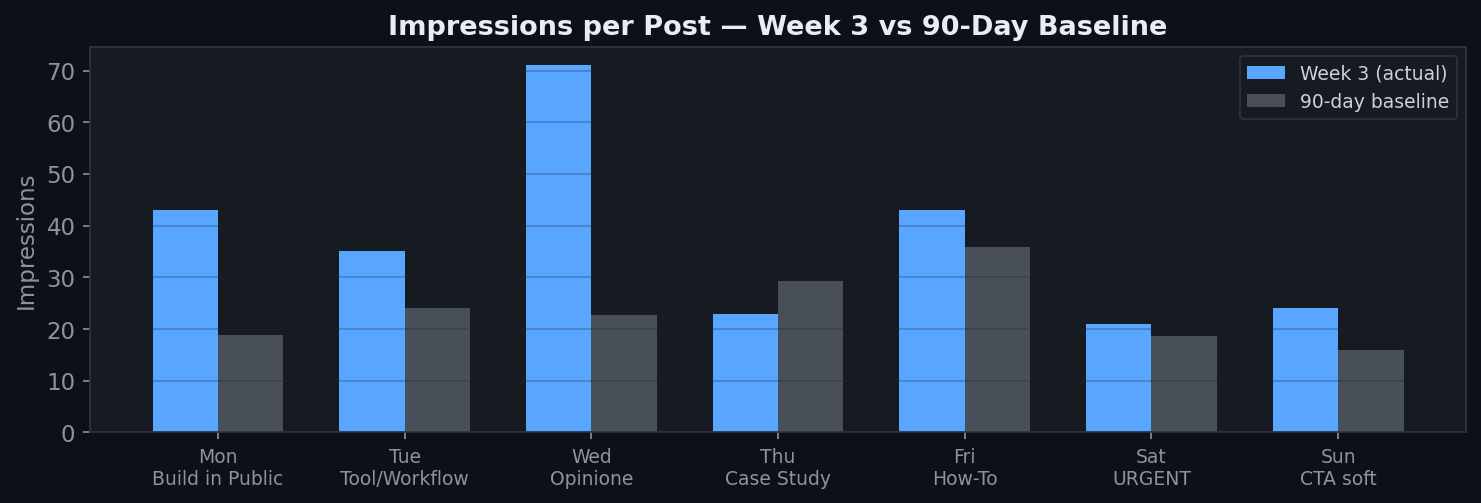

Results

27+ Days of Production (April 6, 2026)

| Metric | Value |

|---|---|

| Duration | 27+ days of daily operation |

| Scheduled tasks | 10 (9 active + 1 disabled) |

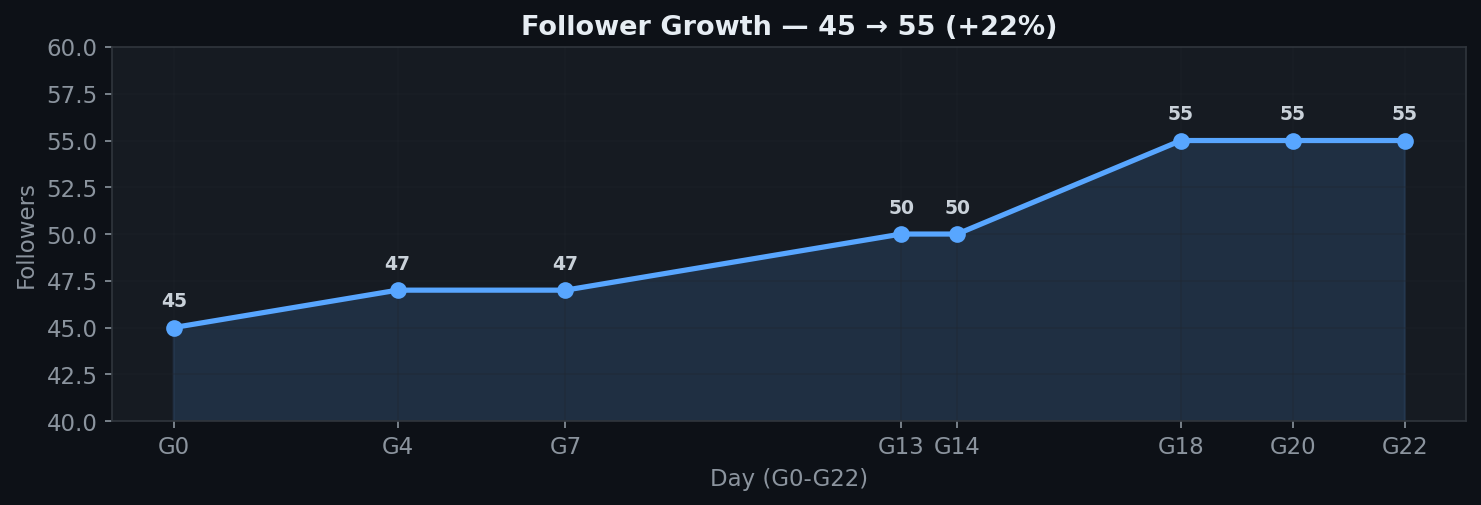

| Follower growth | 45 → 55+ |

| Posts published | 7/week, zero missed |

| Engagement sessions | Daily, 25 min each |

| AI detection incidents | 0 |

| Avg engagement rate | 3.9% |

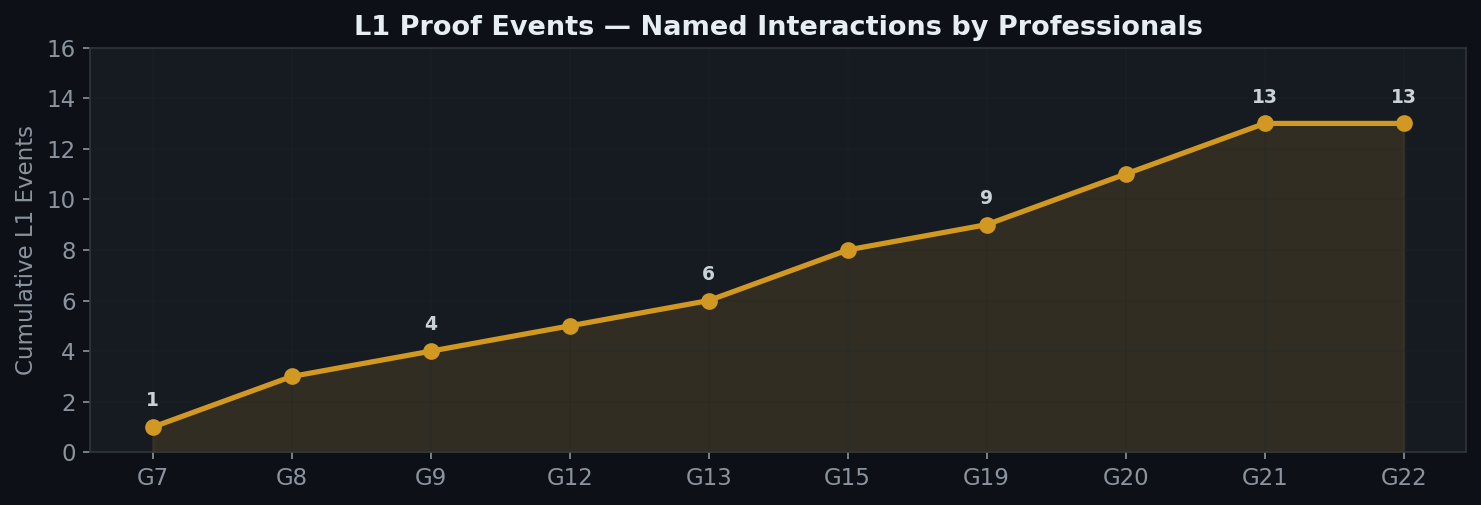

| L1 proof events | 13+ named interactions |

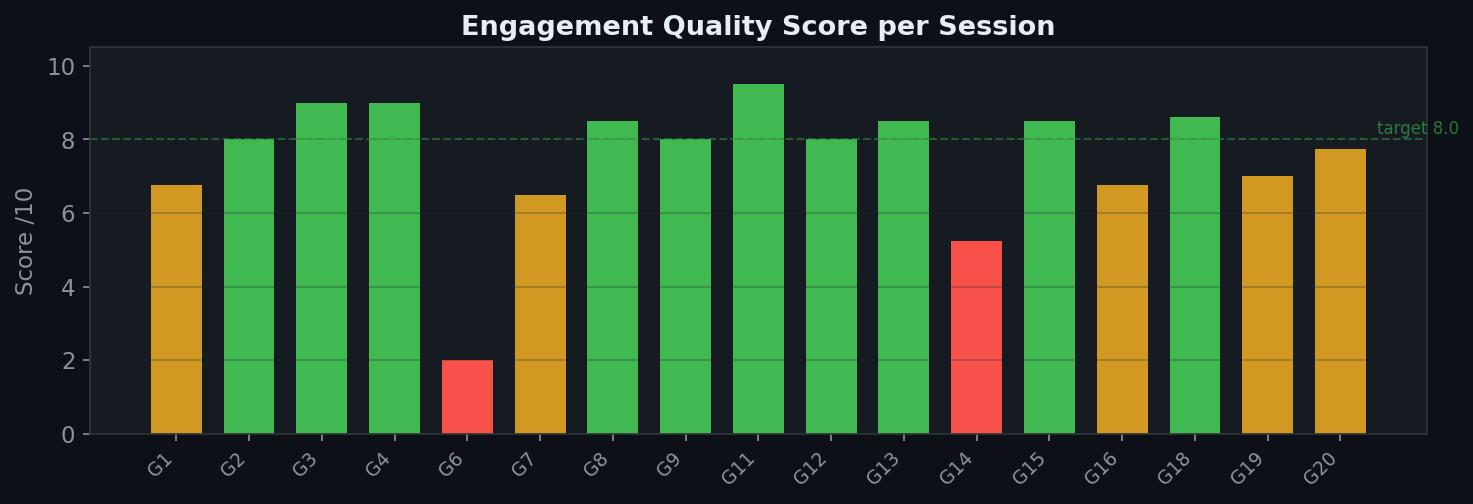

| Avg engagement score | 8.0/10 |

| Non-Detection Index | 5.0+ avg |

| First product sale | Via LinkedIn funnel (April 4, 2026) |

Professionals replied by name, sent multi-message DMs, mentioned the profile in their own posts, and sent connection requests — all without suspecting automation.

Key milestone: On April 4, 2026, the system completed a full attribution cycle: LinkedIn post → site visit → purchase of a digital product. The funnel worked without any manual intervention.

Growth Charts


Interactive Dashboard (HTML) — hover tooltips with daily data

Anti-Detection

The system uses a two-level anti-detection architecture.

Level 1: Behavioral Rules (7 rules, empirically validated)

| # | Rule | Why |

|---|---|---|

| 1 | Tool mention limit: max 2/5 comments mention your tool | 3/5 was flagged as promotion on Day 1 |

| 2 | Structure variation: never repeat same pattern consecutively | Repetition is the #2 detection vector |

| 3 | Off-topic comment: at least 1/5 outside your niche | 0/5 scored 6.0/10, 1-2/5 scored 8.5-9.0 |

| 4 | Evangelization limit: max 1 promotional phrase per session | "I use it every day" = instant flag |

| 5 | Like-only on agreements: don't extend agreement threads | Extending sounds artificial |

| 6 | Fact-check before asserting: verify or rephrase as question | Profile-B incident, Day 22 |

| 7 | High-traffic targeting: 1+ comment on posts with 200+ reactions | 7-12x reach multiplier |
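The countable rules in the table above (1, 2, 3, 4, and 7) lend themselves to an automated check of a planned session. The sketch below is one possible shape for such a check, assuming a five-comment session; the `Comment` fields and the `check_session` function are hypothetical names, not the repository's implementation. Rules 5 and 6 depend on judgment and are left out.

```python
from dataclasses import dataclass


@dataclass
class Comment:
    """One planned comment; all field names are illustrative assumptions."""
    mentions_tool: bool       # rule 1: does the comment mention your tool?
    off_topic: bool           # rule 3: is the post outside your niche?
    promotional_phrases: int  # rule 4: count of promotional phrases
    post_reactions: int       # rule 7: reactions on the target post
    structure: str            # rule 2: label such as "question" or "anecdote"


def check_session(comments):
    """Return the numbers of the Level 1 rules that the session violates."""
    violations = []
    # Rule 1: at most 2 comments per session mention the tool.
    if sum(c.mentions_tool for c in comments) > 2:
        violations.append(1)
    # Rule 2: never repeat the same structure in consecutive comments.
    if any(a.structure == b.structure for a, b in zip(comments, comments[1:])):
        violations.append(2)
    # Rule 3: at least one comment outside the niche.
    if not any(c.off_topic for c in comments):
        violations.append(3)
    # Rule 4: at most one promotional phrase in the whole session.
    if sum(c.promotional_phrases for c in comments) > 1:
        violations.append(4)
    # Rule 7: at least one comment on a post with 200+ reactions.
    if not any(c.post_reactions >= 200 for c in comments):
        violations.append(7)
    return violations
```

An empty list means the session satisfies every rule checked here; any returned number points back to the matching row in the table.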

Level 2: Structural Naturalness (Human Voice Layer)

Level 1 prevents algorithmic flags. Level 2 addresses a subtler problem: pattern recognition by expert human readers. Even with perfect vocabulary and timing, certain structural tells betray AI authorship to the professionals who matter most.

The 7 structural tells that Level 1 doesn't cover:

| Tell | Pattern | Fix |
|---|---|---|
| Structural symmetry (Simmetria strutturale) | Every post: Hook → Body (3 blocks) → Closing | Rotate among 6+ structures, max 2/week same structure |
| Syntactic parallelism (Parallelismo sintattico) | Lists with identical grammatical structure | Break symmetry deliberately: 1 element must differ |
| Engineered informality (Informalità ingegnerizzata) | Informal markers placed at strategic positions | Informality must emerge from structure, not be inserted |
| Zero imperfections (Zero imperfezioni) | No interrupted thoughts, no digressions | Inject 1 genuine flow-break per post |
| Cinematic case studies (Case study cinematografici) | Perfect setup-payoff arcs with clean quotes | Add dirty details: vague memory + hyper-specific detail |
| Predictable emotional arc (Arco emotivo prevedibile) | Every post: tension → resolution | 1 post/week with no resolution, ending in open question |
| Mapped emotional register (Registro emotivo mappato) | Wednesday = indignation (constructed, not reactive) | Emotional posts need a real, nameable trigger |

6 alternative post structures are defined in HUMAN-VOICE-LAYER.md: Stream of Consciousness, Question Without Answer, Start From the Middle, Broken List, Micro-post, Response to Something.
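Two constraints in this section bound which structure a given day's post may use: the same structure at most twice per week, and a structure different from the previous two days. A minimal sketch of that filter follows, using the six structure names listed above; the `allowed_structures` function is a hypothetical helper, not taken from HUMAN-VOICE-LAYER.md.

```python
from collections import Counter

# The six alternative structures named in this section.
STRUCTURES = [
    "Stream of Consciousness",
    "Question Without Answer",
    "Start From the Middle",
    "Broken List",
    "Micro-post",
    "Response to Something",
]


def allowed_structures(week_so_far):
    """Return the structures still usable for the next post.

    `week_so_far` lists the structures already used this week, oldest first.
    A structure is excluded if it was used in either of the last two posts
    or has already appeared twice this week.
    """
    counts = Counter(week_so_far)
    recent = set(week_so_far[-2:])
    return [s for s in STRUCTURES if counts[s] < 2 and s not in recent]
```

With an empty history all six structures are available; as the week fills up, the list narrows, which makes accidental repetition visible before a post is drafted.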

Pre-publication checklist (5/7 required to publish):

- Different structure from yesterday and the day before?

- No perfect parallelism in lists? (at least 1 asymmetric element)

- At least 1 genuine flow break? (not an inserted marker, a real interruption)

- Numbers are not all round? (not 85→9, but 85→11 or "something like 80-90 mins")

- Case study has dirty details? (vague memory + specific detail)

- Emotional arc is not always positive? (at least 1 unresolved post/week)

- Could this post have been written by a human in 5 minutes?

Full methodology: HUMAN-VOICE-LAYER.md

Non-Detection Index (NDI)

NDI = (L1 × 2 + L2 × 1) / (L1 + L2 + L3) × 10

- L1 (weight 2): Named replies, multi-message DMs, public mentions

- L2 (weight 1): Genuine questions, connection requests

- L3 (weight 0): Generic likes, one-word replies

An NDI above 5.0 is healthy. Below 3.0, investigate. If it stays below 4.0 for two weeks, pause for 48 hours and audit.
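As a worked illustration of the formula and thresholds, the sketch below treats L1, L2, and L3 as counts of interactions in each tier over a reporting period. The function names, the "watch" label for the unnamed band between 3.0 and 5.0, and the reading of "two weeks" as the two most recent weekly values are assumptions rather than details from the repository.

```python
def ndi(l1, l2, l3):
    """Non-Detection Index from interaction counts per tier.

    Algebraically the same as (L1 * 2 + L2 * 1) / (L1 + L2 + L3) * 10.
    """
    total = l1 + l2 + l3
    if total == 0:
        raise ValueError("NDI is undefined when there are no interactions")
    return 10 * (2 * l1 + l2) / total


def ndi_status(value):
    """Map an NDI value onto the thresholds stated in this section."""
    if value > 5.0:
        return "healthy"
    if value < 3.0:
        return "investigate"
    return "watch"  # assumed label: the source does not name this band


def should_pause(weekly_values):
    """True when the two most recent weekly NDI values are both below 4.0."""
    return len(weekly_values) >= 2 and all(v < 4.0 for v in weekly_values[-2:])
```

For example, a period with 3 L1 events, 4 L2 events, and 13 L3 events gives (3 × 2 + 4) / 20 × 10 = 5.0. Because L1 carries weight 2, the index ranges from 0 (only L3 interactions) to 20 (only L1 interactions).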

Epistemic Verification Gate

Before publishing any factual claim, run 7 checkpoints:

- Fact vs. Inference — label it correctly

- Uncertainty Markers — verified / observed / inferred / speculative

- Source Attribution — name it or don't claim it

- Temporal Coherence — when did this happen?

- Case-Specific Claims — verify in 30s or rephrase as question

- Self-Assessment Bias — measured vs estimated vs projected

- Absence-as-Proof — "I haven't found" ≠ "it doesn't exist"

7/7 pass = publish. 5-6/7 = fix and publish. <5/7 = rewrite.
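The scoring rule above maps directly onto a three-way decision. The following sketch assumes each checkpoint has already been judged pass or fail by a reviewer or by Claude; the checkpoint keys and the `gate_decision` function are hypothetical names chosen for illustration.

```python
# Keys mirror the seven checkpoints listed above; the identifiers are assumed.
CHECKPOINTS = (
    "fact_vs_inference",
    "uncertainty_markers",
    "source_attribution",
    "temporal_coherence",
    "case_specific_claims",
    "self_assessment_bias",
    "absence_as_proof",
)


def gate_decision(results):
    """Return 'publish', 'fix and publish', or 'rewrite' for one draft.

    `results` maps every checkpoint key to True (pass) or False (fail).
    """
    missing = [name for name in CHECKPOINTS if name not in results]
    if missing:
        raise ValueError(f"unscored checkpoints: {missing}")
    passed = sum(bool(results[name]) for name in CHECKPOINTS)
    if passed == len(CHECKPOINTS):  # 7/7
        return "publish"
    if passed >= 5:                 # 5-6/7
        return "fix and publish"
    return "rewrite"                # fewer than 5/7
```

Raising on a missing checkpoint, rather than counting it as a failure, keeps an incomplete review from being mistaken for a scored one.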

Full methodology: references/anti-detection-playbook.md

Compatibility

| Feature | Claude Code | Cowork | Cursor | Windsurf |
|---|---|---|---|---|
| Wizard (Phase 1-4) | Full | Full | Full | Full |
| Identity document generation | Full | Full | Full | Full |
| Weekly plan creation | Full | Full | Full | Full |
| Session tasks (Phase 5) | CronCreate (3-day max) | create_scheduled_task | n/a | n/a |
| Permanent tasks (Phase 5) | crontab / Cloud Scheduler | create_scheduled_task | Manual | Manual |
| Browser automation | Chrome MCP (manual config) | Chrome MCP (built-in) | n/a | n/a |
| Update flow | Full | Full | Full | Full |
| Install method | Symlink (auto-update) | Symlink | File copy | File copy |

Recommended Setup

| Use case | Best environment |

|---|---|

| Solo operator, zero config | Cowork — scheduled tasks handle everything |

| Developer, full control | Claude Code — cron + Python + GCP |

| Hybrid | Wizard in Cowork, deploy in Code |

Reference Files

| File | When to read |
|---|---|
| HUMAN-VOICE-LAYER.md | Anti-detection Level 2: structural naturalness, 6 post structures, noise injection rules |
| references/tov-framework.md | Setting up voice, vocabulary, emotional registers |
| references/anti-detection-playbook.md | Configuring engagement rules, NDI scoring |
| references/content-templates.md | Creating weekly post plans with day-by-day templates |
| references/epistemic-verification.md | Before publishing any factual claim |
| references/task-catalog.md | Customizing task prompts for Phase 5 |
| modules/linkedin.md | Full LinkedIn module implementation reference |

Niche Adaptation

The skill was built in the AI/B2B automation niche, but the architecture is domain-agnostic. The 7-day pillar calendar adapts to any niche — you keep the emotional structure, change the content domain:

| Niche | Tuesday (Tool/Workflow) | Thursday (Case Study) | Friday (How-To) |

|---|---|---|---|

| AI / Automation | Integration deep-dive | Client time saved | Claude skill tutorial |

| B2B SaaS | Feature walkthrough | Customer ROI story | Integration guide |

| Coaching / Personal brand | Framework breakdown | Client transformation | Routine walkthrough |

| Developer / OSS | Architecture decision | Community contribution | Setup tutorial |

| Marketing / Agency | Campaign teardown | Client results | Platform tutorial |

| Legal / Consulting | Regulatory update | Case resolution | Process guide |

The anti-detection rules, NDI scoring, epistemic verification gate, and task scheduling work identically across all niches. Only the content domain and vocabulary change — and the wizard captures those in Phase 1.

FAQ

How long does setup take?

4-6 hours total. Identity definition takes 2-3 hours (the hardest part), the first week of content takes another 2-3 hours, and task creation takes about 15 minutes.

What's the minimum viable setup?

Tasks 1 (daily-post) + 2 (daily-engagement) + 9 (weekly-report). Three tasks, fully autonomous.

Can I post more than once per day?

Don't. LinkedIn penalizes same-day multiple posts.

How do I know if comments are natural?

Target 8.0+/10 on the scoring rubric. Below 7.0 = adjust rules. See anti-detection-playbook.md.

What if a task fails silently?

Every task writes a log. Check report/ daily. The experiment-audit task (daily 15:00) catches most silent failures.

Does this only work in Italian?

No. The wizard and examples are in Italian (the production language), but the system works in any language. Phase 1 captures your voice, vocabulary, and audience in your language — Claude generates everything accordingly.

Does this only work for the AI/automation niche?

No. The architecture (pillar calendar, anti-detection, NDI, verification gate) is niche-agnostic. See Niche Adaptation for examples.

What is the Human Voice Layer?

It's a Level 2 anti-detection framework added after 22 days of operation. Level 1 prevents algorithmic detection. Level 2 addresses structural patterns that reveal AI authorship to expert human readers — even when vocabulary and timing are correct. See HUMAN-VOICE-LAYER.md.

Who Built This

Giovanni Liguori — AI Automation Architect

I transform manual processes into automated ecosystems for Italian SMBs and freelancers using Claude + Python + Google Cloud.

giovanniliguori.it • LinkedIn • Case Study

The Experiment

Can a well-instructed LLM manage a professional LinkedIn profile without being identified as non-human?

After 27+ days of daily operation:

- Zero detection incidents

- 13+ L1 proof events (named conversations with professionals)

- 8.0/10 average engagement quality

- 3.9% average engagement rate

- 5.0+ NDI (Non-Detection Index) consistently

- 1 product sale attributed directly to the LinkedIn funnel (April 4, 2026)

The system works because it treats identity and anti-detection as the same thing. A profile with a clear, consistent, humanized voice is inherently less likely to be flagged. It's also more likely to convert.

Level 2 (the Human Voice Layer) extends this principle: structural naturalness (varied post formats, asymmetric lists, dirty case study details, unresolved emotional arcs) builds the kind of trust that drives DMs, connection requests, and ultimately sales.

License

MIT License — Copyright (c) 2026 Giovanni Liguori

Contributing

See CONTRIBUTING.md. The methodology improves with more data points.

Built with Claude. Validated in production. Open source.
