clash-for-ai

Health: Passed

- License — License: AGPL-3.0

- Description — Repository has a description

- Active repo — Last push 0 days ago

- Community trust — 20 GitHub stars

Code: Failed

- spawnSync — Synchronous process spawning in apps/desktop/scripts/build-core.mjs

- process.env — Environment variable access in apps/desktop/scripts/build-core.mjs

- fs module — File system access in apps/desktop/scripts/cleanup-mac-artifacts.cjs

- spawnSync — Synchronous process spawning in apps/desktop/scripts/electron-builder-with-root-version.mjs

- process.env — Environment variable access in apps/desktop/scripts/electron-builder-with-root-version.mjs

Permissions: Passed

- Permissions — No dangerous permissions requested


One-click switching and management of major relay API services, with native integration for official large language models. It provides a unified interface for local AI tools, eliminating the need to manually switch configuration files.

Clash for AI

Public Docs | Deep Link Import Guide

Clash for AI is a local desktop gateway for people who switch between multiple AI gateways or API relay providers.

Its role is:

- Provide one local API entry point

- Switch different upstream gateways behind that local entry point

- Manage providers, health checks, and request logs from a desktop UI

It is not primarily a manager for one specific AI tool. It is better understood as:

- a local relay gateway for tool traffic

- a multi-provider control plane

- a local native-model source manager for upstream models that need their own registry and routing layer

It currently gives you:

- One stable local endpoint for your tools

- A desktop control plane for switching providers

- A local model-source gateway for managing native upstream models

- Local request logs and health checks for debugging provider issues

Core Idea

The model is simple:

- Your tools all point to one local gateway

- Upstream gateway switching happens in the desktop app

- Real provider credentials, health status, and request logs stay managed locally

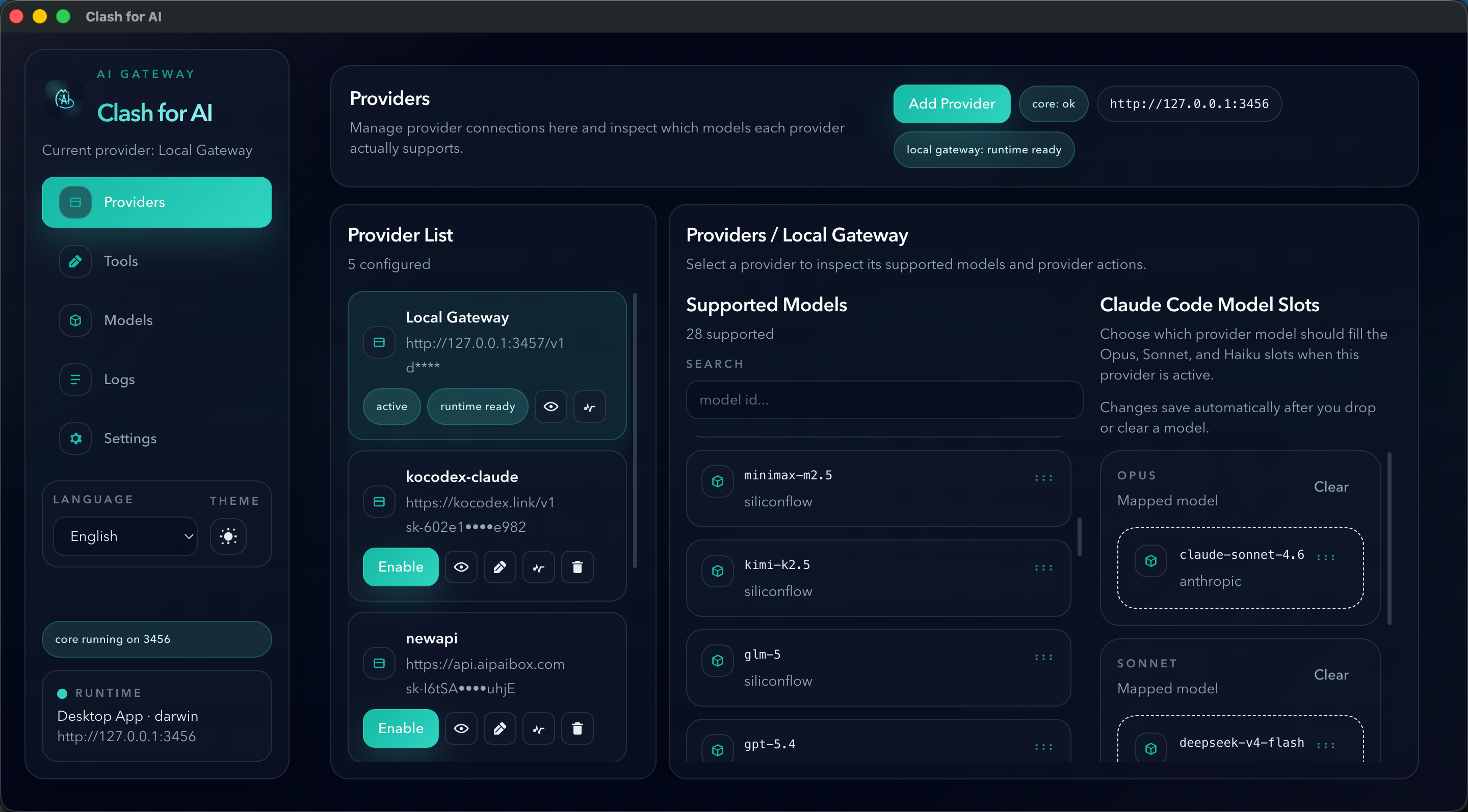

Screenshot

What Problem It Solves

Clash for AI is designed for people who depend on multiple AI gateways in daily use.

It mainly addresses two problems:

- API relay providers can be unstable, so you may need to switch between different gateways frequently

- If you use multiple coding tools, chat clients, or SDK scripts, changing providers often means repeatedly updating configuration in each tool

The current version also addresses a third problem:

- Native model upstreams are harder to manage consistently because they do not always fit the old “one active provider” switching model, so Clash for AI now includes a dedicated local Models Gateway to register and expose those sources in one place

Clash for AI puts one local gateway in front of those tools.

You configure a single local endpoint once, then switch the upstream relay provider from the desktop app.

What It Does

Clash for AI runs a local API gateway on your machine.

Most editors, chat clients, CLI tools, or custom scripts connect to the local endpoint:

http://127.0.0.1:3456/v1

Then Clash for AI forwards requests to the currently active provider you configured in the desktop app.

In the current version, the local access path is most mature around an OpenAI-compatible local entry point. Anthropic-compatible upstream handling and some Claude-style tool integrations are present, but that part of the stack is still being refined.

This means:

- You do not need to reconfigure every tool when switching providers

- Provider credentials stay managed locally in one place

- You can inspect health status and request logs from the desktop UI
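The forwarding model described above can be pictured with a small sketch. It is not the project's implementation (the core service is written in Go). The provider records, their field names (`id`, `baseUrl`, `apiKey`), the example URLs, and the `buildUpstreamRequest` helper are all hypothetical. The sketch also assumes the gateway swaps the client's placeholder key for the real upstream credential.

```javascript
// Hypothetical provider records; IDs, URLs, and keys are placeholders.
const providers = [
  { id: "relay-a", baseUrl: "https://relay-a.example.com/v1", apiKey: "key-a" },
  { id: "relay-b", baseUrl: "https://relay-b.example.com/v1", apiKey: "key-b" },
];

// Map a request that arrived on the local /v1 entry point onto the active provider.
// Assumption: the client's dummy key is replaced by the stored upstream credential.
function buildUpstreamRequest(providerList, activeId, localPath) {
  const active = providerList.find((p) => p.id === activeId);
  if (!active) {
    throw new Error(`No provider with id "${activeId}"`);
  }
  // Strip the local "/v1" prefix, then append the remainder to the upstream base URL.
  const suffix = localPath.replace(/^\/v1/, "");
  return {
    url: active.baseUrl.replace(/\/+$/, "") + suffix,
    headers: { Authorization: `Bearer ${active.apiKey}` },
  };
}

// The tool always calls the same local path; only the active provider changes.
const routedA = buildUpstreamRequest(providers, "relay-a", "/v1/chat/completions");
const routedB = buildUpstreamRequest(providers, "relay-b", "/v1/chat/completions");
console.log(routedA.url);
console.log(routedB.url);
```

The point of the sketch is that the client-side path never changes: switching providers only changes which record the lookup returns.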

Desktop Modules

The desktop app is organized into five main modules.

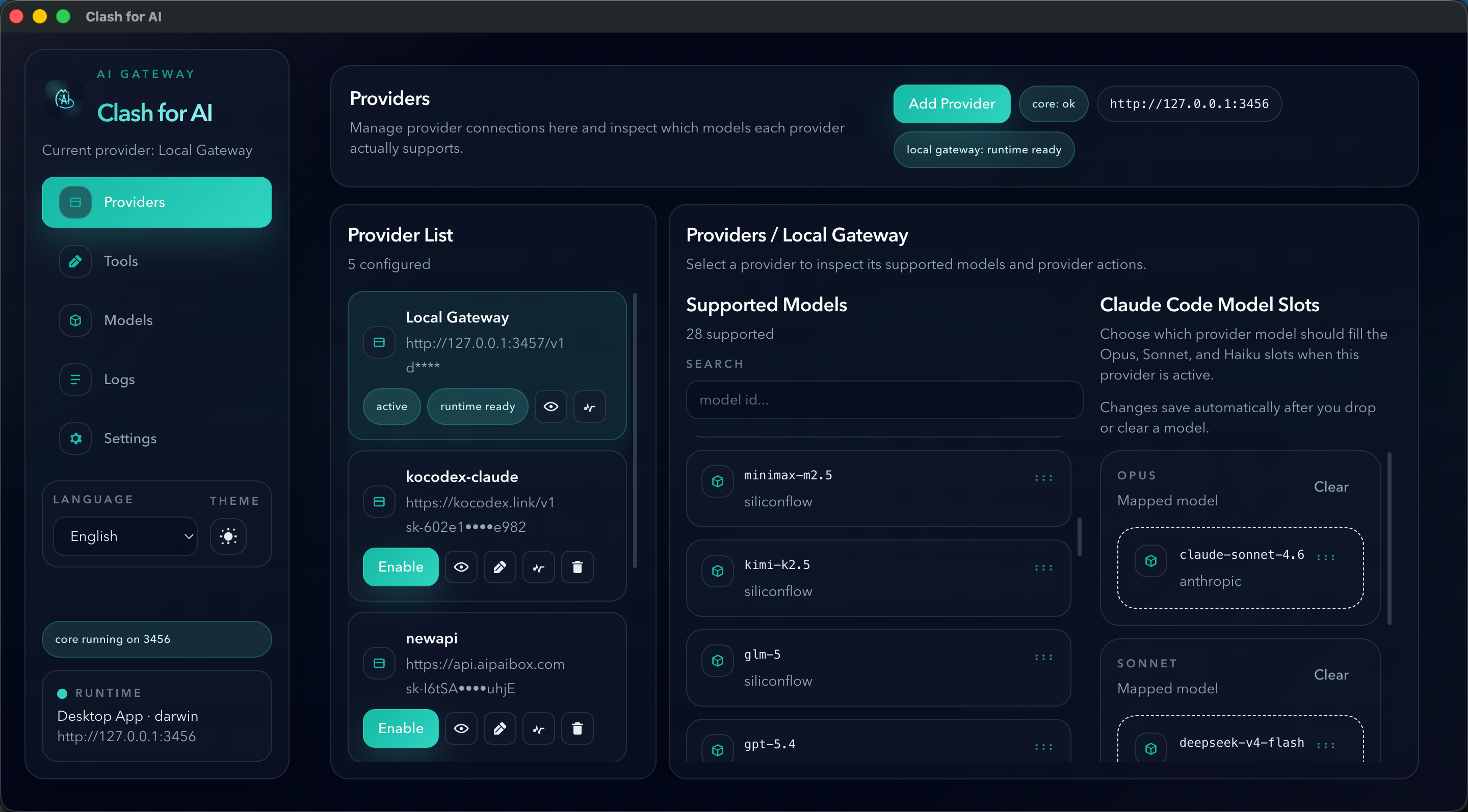

1. Providers

The Providers page is where you manage the upstream services that the main local gateway can route to.

In the simplest mental model:

- Providers is for managing relay services

- these are usually remote aggregation or proxy platforms

- examples include services similar to new-api, one-api, or sub2api

Use it to:

- Add or edit provider connections

- Switch the currently active upstream provider

- Run provider health checks

- Inspect the models a provider exposes

- Configure Claude Code model slot mapping for the active provider

So if a user mainly wants to switch between different remote relay providers, Providers is the primary page.

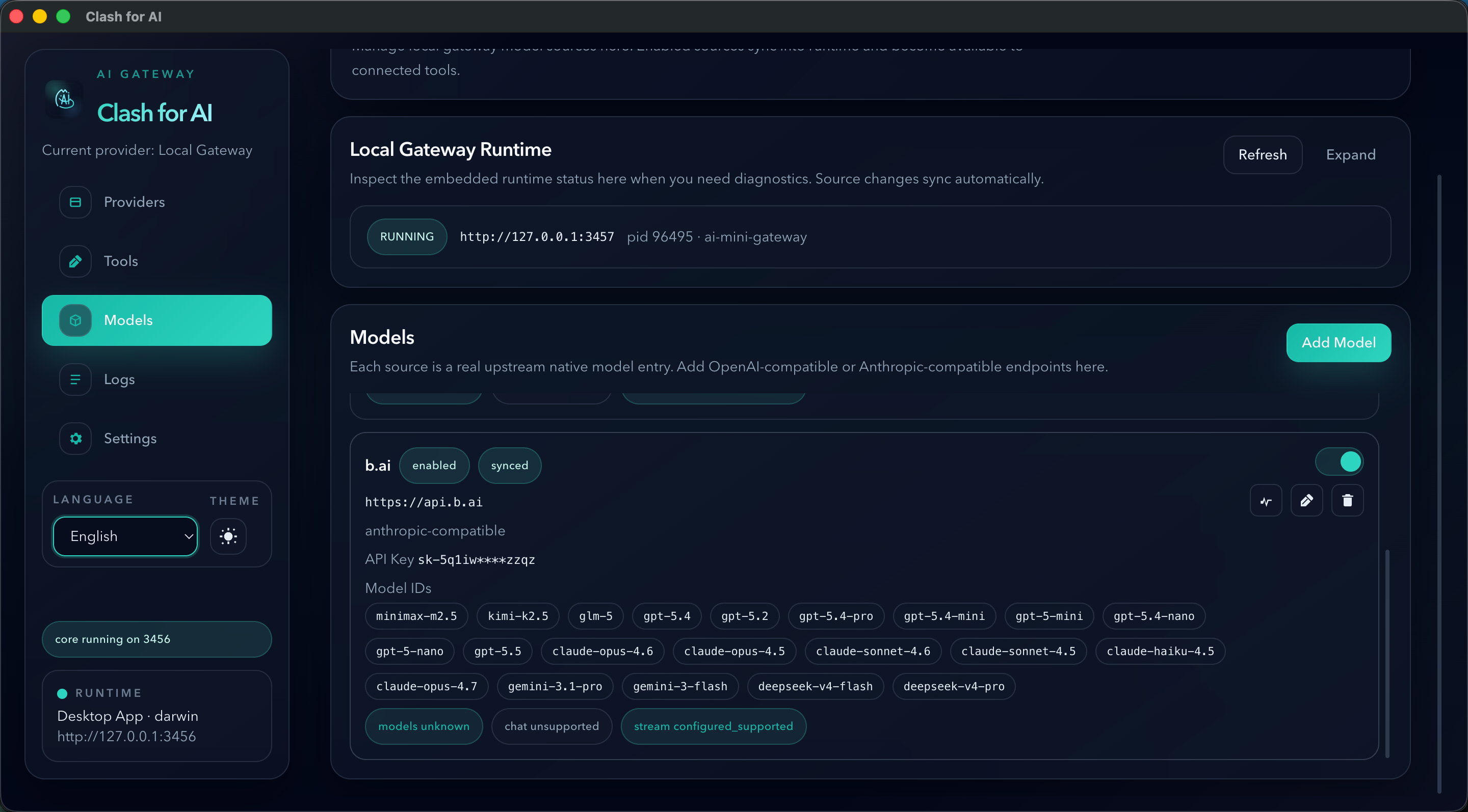

2. Models

The Models page exists for a different problem.

It manages a local gateway that runs on your own machine and exposes native model sources in a unified way. Each entry is a model source that can point to:

- an OpenAI-compatible upstream

- an Anthropic-compatible upstream

Use it to:

- Add local gateway model sources

- Auto-detect or manually define model IDs

- Enable or disable model sources

- Sync those sources into the embedded local gateway runtime

Why this module exists:

- Many native model upstreams are not best managed as one switched relay provider

- Different native upstreams expose different model IDs and model lists

- Users may want a local service that behaves more like running a small new-api or sub2api style gateway on their own machine

- That local gateway can then expose many native upstream models through one controlled local layer

So the Models page introduces a separate local Models Gateway layer. Instead of only switching one active Provider, Clash for AI can now maintain a set of native model sources locally and expose them through a local compatibility gateway.

In practical terms:

- Providers manages remote relay services and also shows the local Models Gateway as one selectable provider

- Models manages the internal source list that powers that local Models Gateway

- the local Models Gateway is added into the Provider management list by default, so tools can still treat it as one provider option on the main gateway side

This means the relationship is:

- Models configures the local gateway's native model sources

- that local gateway becomes one provider option inside Providers

- Providers remains the place where the user selects between remote relay services and the local Models Gateway

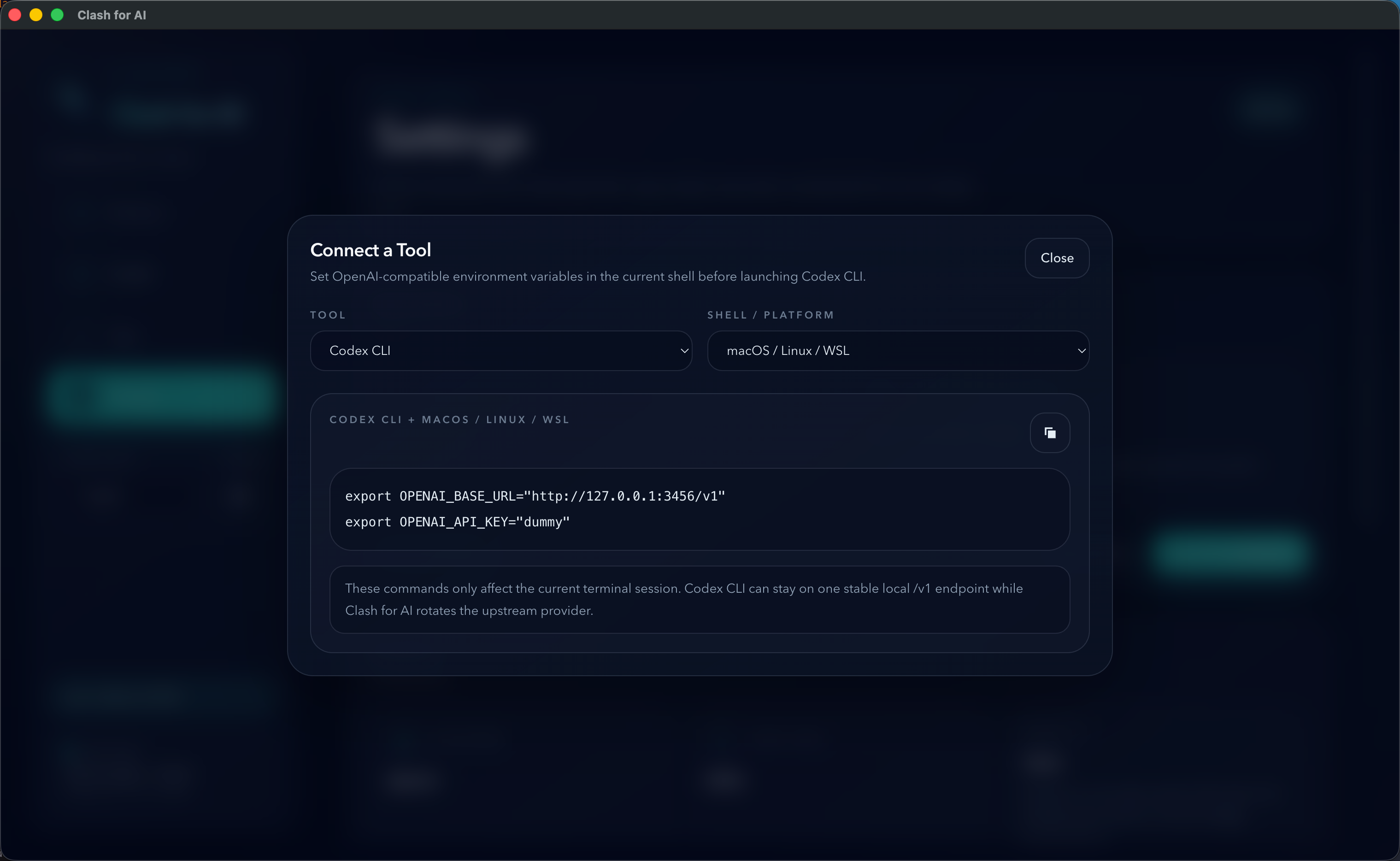

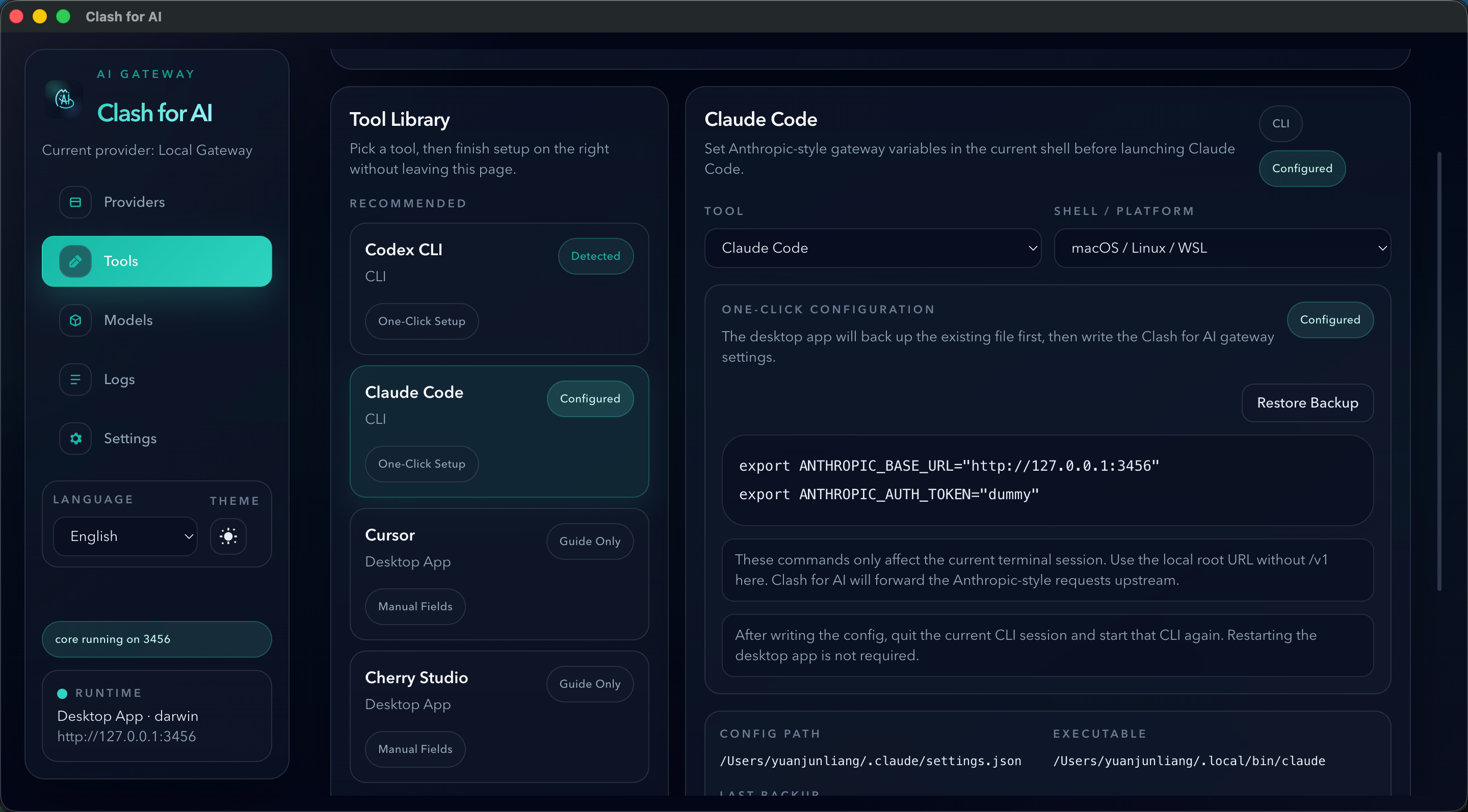

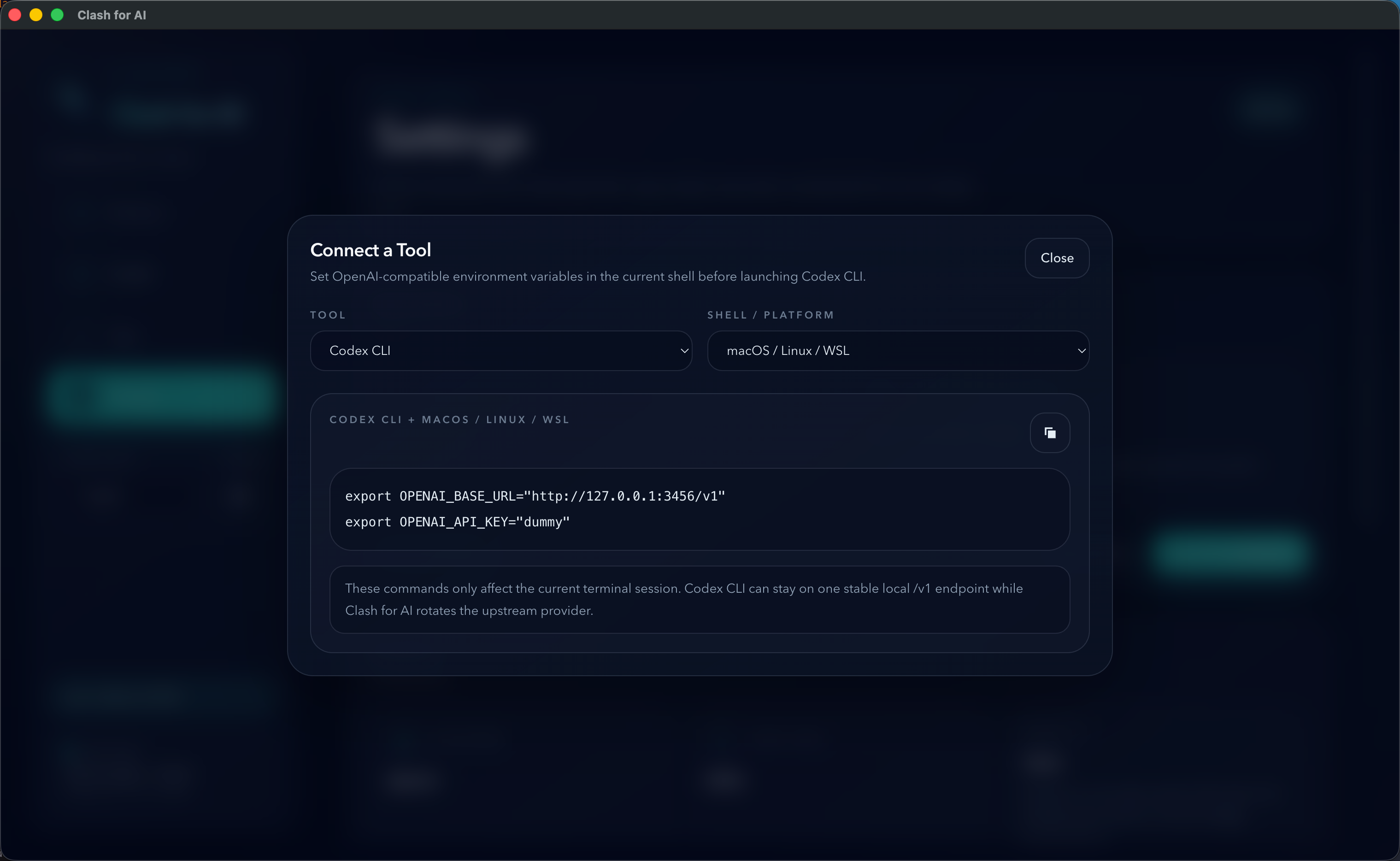
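The relationship between Models and Providers can be illustrated with a minimal sketch. The source records, their field names, the model IDs other than `gpt-4.1`, and the `local-models-gateway` identifier are hypothetical placeholders, and the "first enabled source wins" rule is an assumption, not documented behavior.

```javascript
// Hypothetical model sources, as they might be configured on the Models page.
const modelSources = [
  { name: "openai-native", protocol: "openai", enabled: true, modelIds: ["gpt-4.1"] },
  { name: "anthropic-native", protocol: "anthropic", enabled: true, modelIds: ["claude-placeholder"] },
  { name: "disabled-source", protocol: "openai", enabled: false, modelIds: ["unused-model"] },
];

// Build a lookup from model ID to the enabled source that serves it.
function buildModelIndex(sources) {
  const index = new Map();
  for (const source of sources) {
    if (!source.enabled) continue; // disabled sources are not exposed
    for (const modelId of source.modelIds) {
      // Assumption: the first enabled source wins when two sources declare the same ID.
      if (!index.has(modelId)) index.set(modelId, source.name);
    }
  }
  return index;
}

const modelIndex = buildModelIndex(modelSources);

// On the Providers side, the whole local Models Gateway appears as one provider entry.
const localGatewayProvider = { id: "local-models-gateway", models: [...modelIndex.keys()] };
console.log(localGatewayProvider.models);
```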

3. Tools

The Tools page helps client tools connect to Clash for AI correctly.

Use it to:

- Copy ready-to-use local endpoint values

- Run one-click setup for supported tools such as Codex CLI and Claude Code

- Follow guided setup for tools like Cursor, Cherry Studio, and SDK scripts

- Drag supported models into Claude Code model slots, then switch between them in Claude Code with the /model command

4. Logs

The Logs page shows request history flowing through the local gateway.

Use it to:

- Inspect recent requests

- See provider, model, path, and latency information

- Read failures when an upstream provider behaves incorrectly

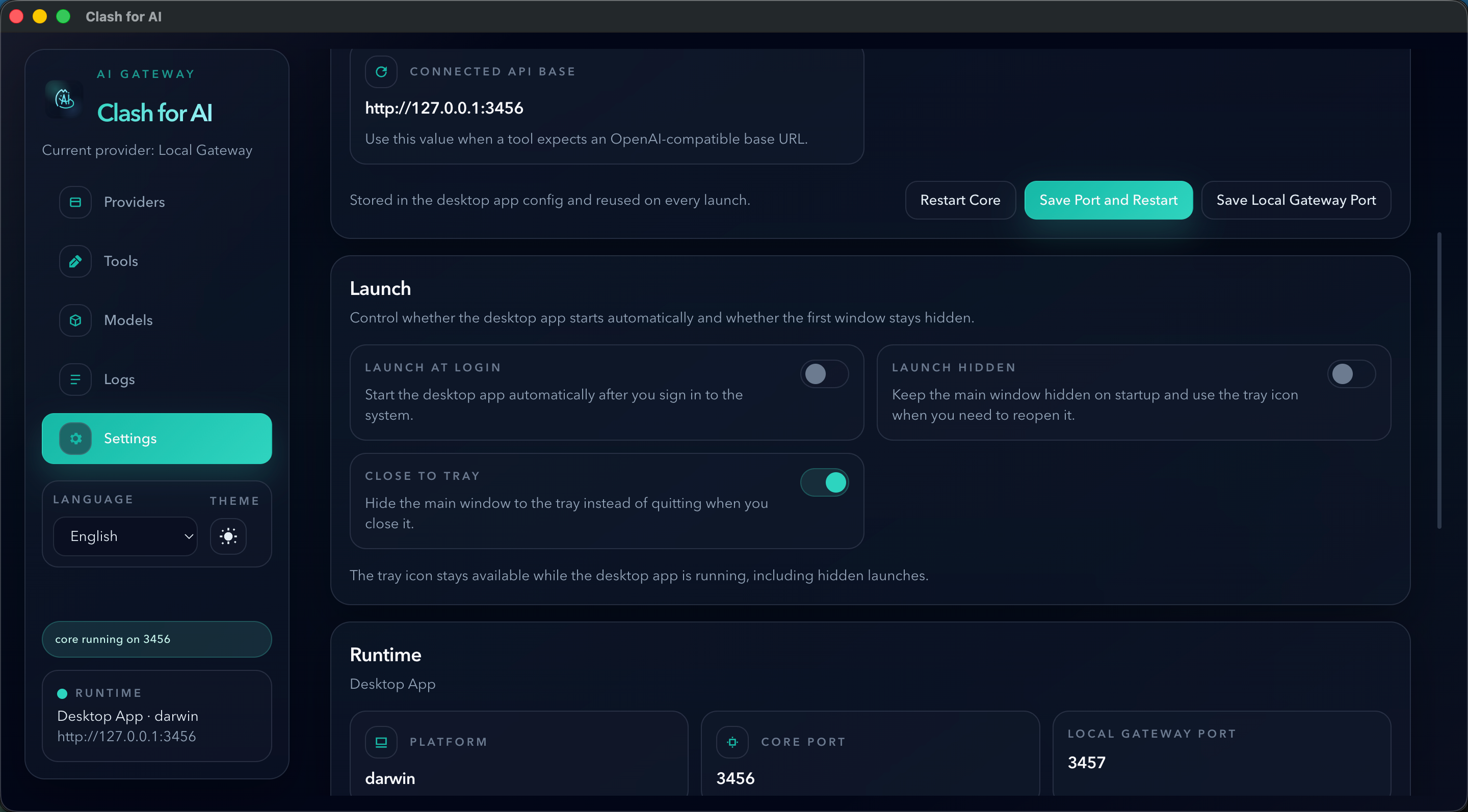
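When comparing providers, the kind of information the Logs page shows (provider, model, path, latency, failures) lends itself to a simple per-provider summary. The sketch below assumes a hypothetical record shape; the field names and the `status` field are illustrative and do not describe the app's actual log format.

```javascript
// Hypothetical log records; field names are assumptions for illustration only.
const logEntries = [
  { provider: "relay-a", model: "gpt-4.1", path: "/v1/chat/completions", status: 200, latencyMs: 800 },
  { provider: "relay-a", model: "gpt-4.1", path: "/v1/chat/completions", status: 502, latencyMs: 1200 },
  { provider: "relay-b", model: "gpt-4.1", path: "/v1/chat/completions", status: 200, latencyMs: 400 },
];

// Count requests and failures per provider and compute the mean latency.
function summarizeByProvider(entries) {
  const summary = {};
  for (const e of entries) {
    const s = (summary[e.provider] ??= { requests: 0, failures: 0, totalLatencyMs: 0 });
    s.requests += 1;
    if (e.status >= 400) s.failures += 1; // treat HTTP 4xx/5xx as failures
    s.totalLatencyMs += e.latencyMs;
  }
  for (const s of Object.values(summary)) {
    s.avgLatencyMs = s.totalLatencyMs / s.requests;
  }
  return summary;
}

const logSummary = summarizeByProvider(logEntries);
console.log(logSummary);
```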

5. Settings

The Settings page is the system control area for the desktop app itself.

Use it to:

- View runtime status

- Adjust local ports

- Check for desktop updates

- Control launch and tray behavior:

  - Launch at login

  - Launch hidden

  - Close to tray

Quick Start

If you do not want to read the full guide yet, use one of these quick setup patterns.

WSL / Linux Server

If you want to deploy Clash for AI on WSL or a plain Linux server instead of using the desktop app:

curl -fsSL https://raw.githubusercontent.com/xiaoyuandev/clash-for-ai/main/scripts/install.sh | bash

After installation, the default endpoints are:

- Web management UI: http://127.0.0.1:3456

- OpenAI-compatible local endpoint: http://127.0.0.1:3456/v1

Production installer:

- scripts/install.sh downloads the latest stable GitHub Release assets.

- scripts/install-from-source.sh is only for development, local validation, or unreleased branches.

Full guide:

1. Add a provider in Clash for AI

Open the Providers page in the desktop app and fill in:

- Name

- Base URL

- API Key

For OpenAI-compatible relay providers, the Base URL usually ends with /v1.

For other compatible APIs, whether /v1 should be included depends on the upstream implementation. At the moment, OpenAI-compatible upstreams are the clearest and most mature path in Clash for AI.

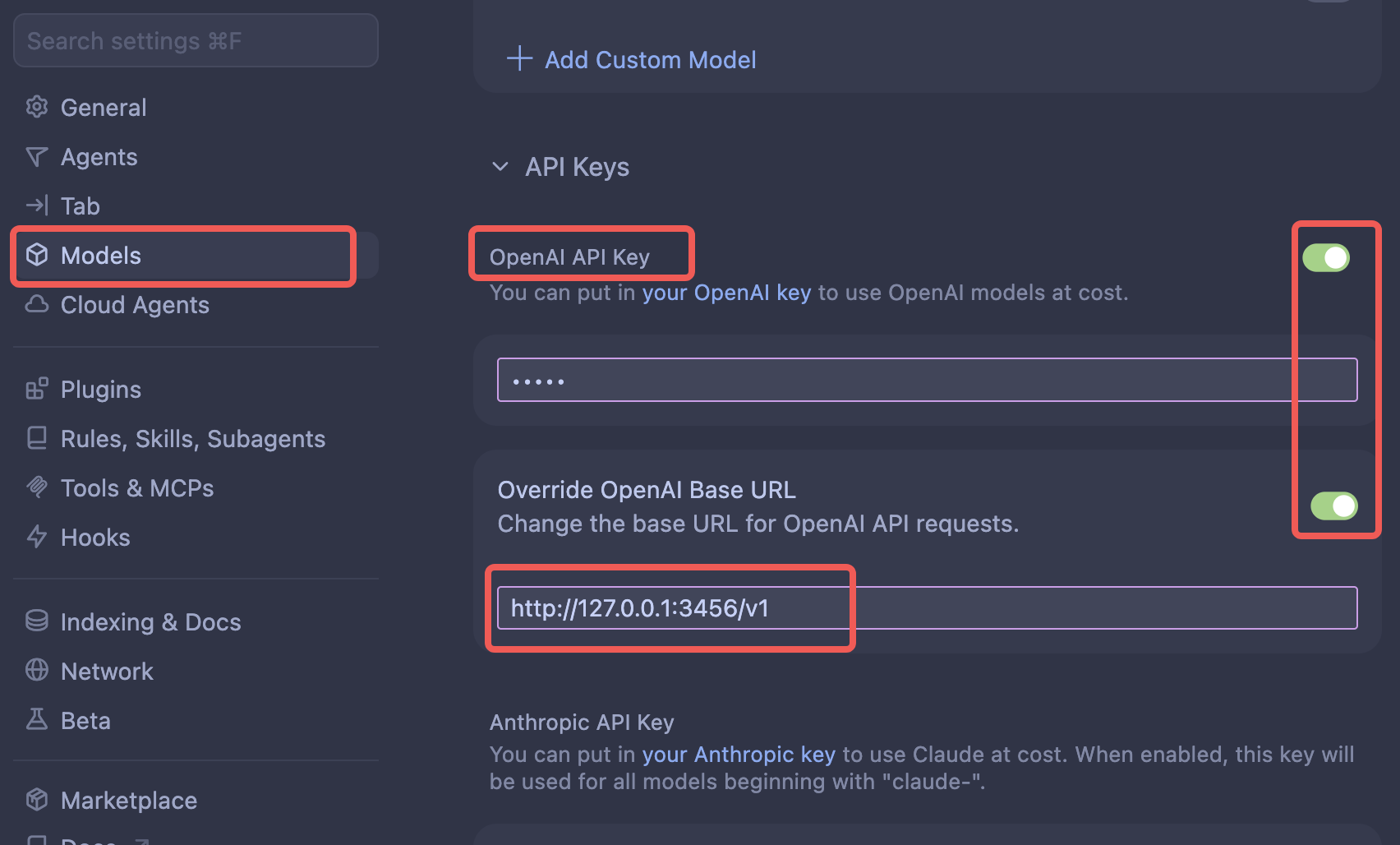
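Because a missing or doubled /v1 suffix is an easy mistake when pasting a Base URL, a small normalizer can help when preparing values by hand. This is a hypothetical helper, not something the app is known to do; `normalizeBaseUrl` and its `appendV1` option are illustrative names.

```javascript
// Trim whitespace and trailing slashes; append "/v1" only when requested and missing.
function normalizeBaseUrl(input, { appendV1 = true } = {}) {
  const trimmed = input.trim().replace(/\/+$/, "");
  if (!appendV1 || /\/v1$/.test(trimmed)) return trimmed;
  return `${trimmed}/v1`;
}

// OpenAI-compatible relay: the suffix is added when it is missing.
console.log(normalizeBaseUrl("https://relay.example.com/"));
// Already correct: only the trailing slash and whitespace are removed.
console.log(normalizeBaseUrl(" https://relay.example.com/v1/ "));
// Other compatible APIs: leave the path alone when the upstream does not use /v1.
console.log(normalizeBaseUrl("https://api.example.com", { appendV1: false }));
```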

2. Point your tool to the local endpoint

In most supported tools, configure:

Base URL: http://127.0.0.1:3456/v1

API Key: dummy

If the local app selects another port at runtime, use the actual connected API base shown in the desktop UI.

3. Use the Tools page when you need tool-specific setup

The Tools page provides:

- Copy-ready connection values

- One-click setup for Codex CLI and Claude Code

- Setup guidance for tools such as Cursor, Cherry Studio, and SDK scripts

- Drag-and-drop model mapping for Claude Code model slots, so you can switch mapped models in Claude Code with the /model command

CLI Tools

For OpenAI-compatible CLI tools such as Codex CLI, set environment variables in the current shell before launching the tool:

export OPENAI_BASE_URL="http://127.0.0.1:3456/v1"

export OPENAI_API_KEY="dummy"

Then start the CLI from the same terminal session.

For Claude Code style tools, Clash for AI currently provides an Anthropic-style environment variable setup flow:

export ANTHROPIC_BASE_URL="http://127.0.0.1:3456"

export ANTHROPIC_AUTH_TOKEN="dummy"

Inside Clash for AI, you can also open the Tools page and use the built-in one-click setup flow for supported CLIs.

One clarification: the most stable local access path in the current release is still the OpenAI-compatible one. Anthropic-style local access and upstream compatibility are still being improved. If your tool also supports a custom OpenAI-compatible endpoint, prefer http://127.0.0.1:3456/v1.
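If you launch CLI tools from a Node.js script, not an interactive shell, the same variables can be assembled programmatically. The sketch below is a hypothetical helper; `buildToolEnv` is an illustrative name, and the `codex` command in the comment is an assumption about how the CLI is invoked on your machine.

```javascript
// Return the environment variables documented above for each protocol family.
function buildToolEnv(family, port = 3456) {
  const origin = `http://127.0.0.1:${port}`;
  if (family === "openai") {
    return { OPENAI_BASE_URL: `${origin}/v1`, OPENAI_API_KEY: "dummy" };
  }
  if (family === "anthropic") {
    // The Anthropic-style base URL is documented without the /v1 suffix.
    return { ANTHROPIC_BASE_URL: origin, ANTHROPIC_AUTH_TOKEN: "dummy" };
  }
  throw new Error(`Unknown protocol family: ${family}`);
}

// Merge with the current environment so PATH and other variables are preserved.
const codexEnv = { ...process.env, ...buildToolEnv("openai") };
console.log(codexEnv.OPENAI_BASE_URL);

// To start a CLI with this environment (command name is an assumption):
// require("node:child_process").spawn("codex", [], { env: codexEnv, stdio: "inherit" });
```

Pass a different `port` if the desktop UI reports that the gateway is listening somewhere other than 3456.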

IDEs And Plugins

For IDEs, editor plugins, and desktop chat clients, open the provider settings and fill in:

Base URL: http://127.0.0.1:3456/v1

API Key: dummy

Inside Clash for AI, open the Tools page to find the recommended connection values for supported tools.

For tools like Cursor or Cherry Studio, if there is a provider type or protocol field, choose an OpenAI-compatible custom provider mode first, then paste the values above.

In Cursor specifically, open its custom provider settings, choose an OpenAI-compatible provider mode, then fill in the local Base URL and dummy API key.

SDK Scripts And Local Apps

If you want to interact with the currently active model provider from your own scripts, point your SDK or HTTP client to the local Clash for AI gateway instead of the upstream relay directly.

Example with the OpenAI SDK:

import OpenAI from "openai";

const client = new OpenAI({
  apiKey: "dummy",
  baseURL: "http://127.0.0.1:3456/v1"
});

const response = await client.responses.create({
  model: "gpt-4.1",
  input: "Say hello from Clash for AI."
});

console.log(response.output_text);

You can do the same thing with plain HTTP requests:

curl http://127.0.0.1:3456/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer dummy" \
  -d '{
    "model": "gpt-4.1",
    "messages": [
      { "role": "user", "content": "Say hello from Clash for AI." }
    ]
  }'

The actual model that responds still depends on the model name your script sends and on which provider is currently active in the desktop app.
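For scripts that avoid the SDK, the same chat completions call can be made with the built-in `fetch` in Node.js 18 or later. The sketch below is a minimal example under two assumptions: the gateway is on the default port, and the active upstream returns the standard OpenAI chat completions response shape. `buildChatRequest` and `chat` are illustrative names.

```javascript
// Build the URL and fetch options for a chat completions call through the local gateway.
function buildChatRequest(model, prompt, baseUrl = "http://127.0.0.1:3456/v1") {
  return {
    url: `${baseUrl}/chat/completions`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json", Authorization: "Bearer dummy" },
      body: JSON.stringify({ model, messages: [{ role: "user", content: prompt }] }),
    },
  };
}

// Send the request and return the first reply, raising a readable error on failure.
async function chat(model, prompt) {
  const { url, init } = buildChatRequest(model, prompt);
  const res = await fetch(url, init);
  if (!res.ok) {
    // A failed upstream call should also be visible on the Logs page.
    throw new Error(`Gateway returned ${res.status}: ${await res.text()}`);
  }
  const data = await res.json();
  // Assumes the standard chat completions shape: choices[0].message.content.
  return data.choices[0].message.content;
}

// Usage (requires the gateway to be running):
// chat("gpt-4.1", "Say hello from Clash for AI.").then(console.log, console.error);
```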

Documentation

If you want fuller step-by-step guidance, tool-specific examples, and troubleshooting notes, continue with:

- User Guide

- WSL / Linux Server Deployment Guide

- WSL / Linux Server Deployment Guide (English)

- 中文 README

If you are deploying on WSL or a Linux server, read the server guide first. It also covers pinned release installation using CLASH_FOR_AI_VERSION.

How To Read Protocol Support Today

In practice, many upstream gateways expose both OpenAI-compatible and Anthropic-compatible APIs.

Clash for AI is designed around those two compatibility families, but the current implementation is not equally mature in both directions:

- OpenAI-compatible local access is the clearest and most stable primary path

- Anthropic-compatible upstream auth handling and some tool integrations are already covered

- Full Anthropic-style local protocol coverage is still being improved

Because of that, for tools that let you choose a custom OpenAI-compatible endpoint, that path is currently the safest default.

About Model Lists

Provider model list fetching exists, but it should be understood as a compatibility feature rather than a guaranteed capability of every upstream.

Common reasons include:

- Different gateways expose model list endpoints differently

- Some upstreams do not expose a standard model list endpoint at all

- Returned JSON payloads may vary

So a provider can still be usable for request forwarding even if its model list is incomplete or unavailable.
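To make the "payloads may vary" point concrete, here is a tolerant parser sketch. The payload shapes it accepts (an OpenAI-style `{ data: [{ id }] }` list, a `{ models: [...] }` wrapper, and a bare array) are assumptions about what upstreams might return, not a catalogue of what Clash for AI handles.

```javascript
// Extract model IDs from several plausible model-list payload shapes.
function extractModelIds(payload) {
  const list = Array.isArray(payload)
    ? payload
    : Array.isArray(payload?.data)
      ? payload.data
      : Array.isArray(payload?.models)
        ? payload.models
        : [];
  const ids = list
    // Items may be plain strings or objects keyed by "id" or "name".
    .map((item) => (typeof item === "string" ? item : item?.id ?? item?.name))
    .filter((id) => typeof id === "string" && id.length > 0);
  // Remove duplicates while preserving the original order.
  return [...new Set(ids)];
}

console.log(extractModelIds({ object: "list", data: [{ id: "gpt-4.1" }, { id: "gpt-4.1" }] }));
console.log(extractModelIds({ models: [{ name: "model-x" }] }));
console.log(extractModelIds(null));
```

An empty result here means only that the list could not be read. As noted above, the provider may still forward requests correctly.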

FAQ

Why does macOS show “the developer cannot be verified” on first install?

Current public macOS builds may still show a Gatekeeper warning on first install or first launch. The project is currently distributed through a free, ad-hoc style signing path, so not every released artifact goes through a fully trusted, paid Apple distribution chain.

That is why users may see messages like:

“Clash for AI” cannot be opened because the developer cannot be verified.

or:

“Clash for AI” cannot be opened because Apple cannot verify it for malicious software.

If this happens, do the following:

- Move the app into /Applications if it is still inside a temporary download folder

- In Finder, right click Clash for AI.app

- Choose Open

- In the system confirmation dialog, choose Open again

If the Open action still does not appear, use:

- System Settings

- Privacy & Security

- Scroll to the security warning area for Clash for AI

- Click Open Anyway

If you are comfortable with the command line and have confirmed the app came from the official release page, you can also remove the macOS quarantine attribute with xattr:

sudo xattr -rd com.apple.quarantine "/Applications/Clash for AI.app"

After that, launch Clash for AI.app from Finder or Launchpad.

After the first successful open, later launches normally stop showing the same warning.

If a .pkg installer is attached to the release, prefer the .pkg build over dragging a raw .app bundle manually.

Are request logs uploaded to any remote service?

No. Request logs are stored locally on your machine only. Clash for AI does not upload request log records to any remote service.

Local Development

Requirements:

- Node.js

- pnpm

- Go toolchain, if you want the core service to build locally

Install dependencies:

pnpm install

Run the desktop app in development mode:

pnpm dev

Run the Web UI development mode:

pnpm dev:web

pnpm dev:web starts both the core service and the Web dev server. It is intended for local Web UI debugging. The default ports are:

- core API: 3456

- local gateway runtime: 3457

If those ports conflict with other local programs, or if you want to use a locally built ai-mini-gateway, override them in the repository root .env.local:

HTTP_PORT=3456

LOCAL_GATEWAY_RUNTIME_PORT=3457

LOCAL_GATEWAY_RUNTIME_EXECUTABLE=/path/to/ai-mini-gateway/bin/ai-mini-gateway

These values are only local development helpers. If they are omitted, the default ports are used. Before starting, pnpm dev:web releases old listeners on those ports so core and local gateway restart with the latest local code.
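The fallback behavior described above (use the override if present, otherwise the default port) can be sketched as follows. This is a simplified illustration and not the project's actual loader: `parseEnvText` and `resolveDevPorts` are hypothetical names, and the parser ignores quoting and other dotenv features.

```javascript
// Parse simple KEY=VALUE lines, skipping blank lines and # comments.
function parseEnvText(text) {
  const values = {};
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (!line || line.startsWith("#")) continue;
    const eq = line.indexOf("=");
    if (eq === -1) continue;
    values[line.slice(0, eq).trim()] = line.slice(eq + 1).trim();
  }
  return values;
}

// Resolve the two development ports, falling back to the documented defaults.
function resolveDevPorts(envText = "") {
  const env = parseEnvText(envText);
  return {
    httpPort: Number(env.HTTP_PORT ?? 3456),
    runtimePort: Number(env.LOCAL_GATEWAY_RUNTIME_PORT ?? 3457),
  };
}

console.log(resolveDevPorts(""));
console.log(resolveDevPorts("# local overrides\nHTTP_PORT=4000\n"));
```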

Build the desktop app:

pnpm build

Build packaged desktop releases:

pnpm --filter desktop build:mac

pnpm --filter desktop build:win

pnpm --filter desktop build:linux

Sync the bundled ai-mini-gateway runtime version before packaging when a new upstream release is available:

pnpm --filter desktop update:ai-mini-gateway-runtime

pnpm --filter desktop update:ai-mini-gateway-runtime v0.1.1

pnpm --filter desktop update:ai-mini-gateway-runtime v0.1.1 --prepare

Use the default command to track the latest release, or pass an explicit version to pin a specific tag. Add --prepare to also refresh the local bundled runtime binary and version metadata.

Project Structure

apps/desktop Electron desktop application

core/ Go local gateway and provider management backend

docs/ Public user-facing documentation

License

This project is licensed under the GNU Affero General Public License v3.0 only.

See:

Brand Notice

The source code in this repository is licensed under AGPL-3.0-only.

However:

- The project name Clash for AI

- Logos

- Icons

- Other brand assets

are not granted for unrestricted use by this source license unless explicitly stated otherwise.

Status

This project is under active development. Interfaces, packaging flow, and update behavior may still change.
